In the high-stakes landscape of 2026 enterprise AI, prompt engineering has evolved from a novelty into a critical syntax for controlling inference costs and output determinism. This analysis dissects twelve essential prompt architectures that leverage advanced reasoning models to optimize workflow efficiency, reduce hallucination rates, and enforce strict data governance protocols across professional sectors.

We have moved past the era of “chatting” with bots. If you are still treating your Large Language Model (LLM) like a glorified search engine in 2026, you are burning compute credits and sacrificing precision. The twelve templates circulating in professional circles right now aren’t just text snippets; they are functional wrappers for complex reasoning chains. They represent the difference between a stochastic parrot regurgitating training data and a deterministic agent executing a logical workflow.

The Shift from Query to Constraint Architecture

The fundamental error most professionals make is viewing prompts as questions. In the current generation of reasoning models—whether you are running local Llama 4 variants or accessing the latest closed-weight APIs via Azure or AWS—prompts are constraint architectures. You are not asking the model to “write a blog post.” You are defining the latent space boundaries within which the model must operate.

Consider the “Data Analyst” template often cited in basic guides. In 2024, this was a simple request for a summary. Today, a robust template must explicitly invoke Chain-of-Thought (CoT) reasoning before generating code. It forces the model to output its logic trace in a hidden scratchpad before executing Python or SQL commands. This reduces the “hallucination drift” where models confidently assert incorrect data relationships.
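To make the reasoning-before-code idea concrete, here is a minimal sketch in Python. The section markers (`### REASONING`, `### SQL`), the function names, and the exact wording of the steps are assumptions made for illustration, not part of any published template. The point is only that the prompt demands a reasoning section first, and the caller rejects any reply that skips it.

```python
# Hypothetical plain-text section markers; any delimiter the model reliably echoes would do.
REASONING_MARK = "### REASONING"
SQL_MARK = "### SQL"


def build_analyst_prompt(question: str, table_schema: str) -> str:
    """Assemble a prompt that asks for a reasoning section before any SQL."""
    return "\n".join([
        "ROLE: Data Analyst",
        f"SCHEMA: {table_schema}",
        f"QUESTION: {question}",
        "STEPS:",
        f"1. Under '{REASONING_MARK}', list the columns and joins you need and why.",
        f"2. Only then, under '{SQL_MARK}', write the query.",
        "3. If the schema cannot answer the question, say so instead of guessing.",
    ])


def split_response(response: str) -> tuple[str, str]:
    """Return (reasoning, sql); raise ValueError if the reasoning step is missing."""
    r = response.find(REASONING_MARK)
    s = response.find(SQL_MARK)
    if r == -1 or s == -1 or s < r:
        raise ValueError("response must contain a reasoning section followed by SQL")
    reasoning = response[r + len(REASONING_MARK):s].strip()
    sql = response[s + len(SQL_MARK):].strip()
    if not reasoning:
        raise ValueError("reasoning section is empty")
    return reasoning, sql
```

Rejecting replies without a reasoning section does not guarantee the reasoning is correct, but it gives a reviewer something to inspect before the query runs.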

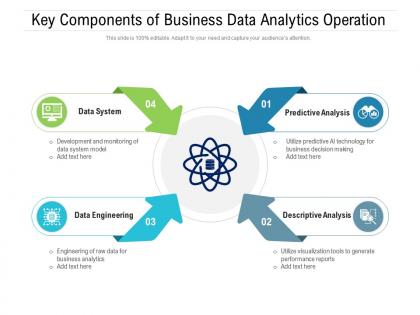

The templates we are analyzing today focus on three core pillars: Context Injection, Role Priming, and Output Serialization. Without these, you are merely guessing at the model’s attention mechanism.
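As a rough illustration of how the three pillars might fit into one prompt, the sketch below assembles them in a fixed order. The function name `build_prompt`, its parameters, and the field labels are hypothetical; treat this as one possible layout rather than a canonical one.

```python
import json


def build_prompt(role: str, context: str, output_keys: list[str], task: str) -> str:
    """Combine role priming, context injection, and output serialization."""
    # Placeholder object that shows the model which keys to return.
    schema = json.dumps({key: "..." for key in output_keys})
    return "\n".join([
        f"ROLE: {role}",                            # role priming
        "CONTEXT:",
        '"""',
        context.strip(),                            # context injection
        '"""',
        f"TASK: {task}",
        f"OUTPUT: JSON only, matching {schema}",    # output serialization
    ])


if __name__ == "__main__":
    print(build_prompt(
        role="Data Analyst",
        context="Q3 revenue fell 4% quarter over quarter.",
        output_keys=["finding", "evidence"],
        task="Explain the drop.",
    ))
```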

Why Token Economy Matters More Than Ever

With context windows expanding beyond the 10-million-token mark in flagship models, verbosity is no longer just annoying; it is expensive. Efficient prompting in 2026 is about token density. The best templates strip away conversational filler (“Please,” “Could you,” “I would like”) and replace them with imperative, code-like structures.
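A small Python sketch of the filler-stripping idea follows. The phrase list is illustrative and deliberately short, and the word count is only a crude stand-in for a real tokenizer, whose counts will differ by model.

```python
import re

# Illustrative filler phrases; extend the list for your own prompts.
FILLER = re.compile(
    r"\b(?:please|kindly|could you|i would like(?: you)? to)\b[,\s]*",
    re.IGNORECASE,
)


def strip_filler(prompt: str) -> str:
    """Remove conversational filler and collapse leftover whitespace."""
    cleaned = FILLER.sub("", prompt)
    return re.sub(r"\s{2,}", " ", cleaned).strip()


def rough_token_count(text: str) -> int:
    """Crude proxy: whitespace-separated words, not real model tokens."""
    return len(text.split())


if __name__ == "__main__":
    before = "Could you please summarize the transcript?"
    after = strip_filler(before)
    print(after, rough_token_count(before), rough_token_count(after))
```

The savings on a single prompt are small; they matter when the same template runs thousands of times.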

Take the “Meeting Summarizer” template. A novice prompt asks for a summary. An expert prompt defines the output schema:

ROLE: Executive Assistant
INPUT: [Transcript_Text]
CONSTRAINTS:
- Max 200 tokens.
- Output format: JSON.
- Keys: "Decisions_Made", "Action_Items", "Owners".
- Exclude pleasantries.

This structure allows for direct API integration, piping the LLM output straight into project management tools like Jira or Asana without human parsing. That is the real value of these templates: automation readiness.
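Before piping anything into a downstream tool, it is worth checking that the model actually honored the schema. The sketch below validates the three keys named in the template; the function name `parse_summary` and the error messages are assumptions for illustration, and no real Jira or Asana call is shown.

```python
import json

# Keys required by the Meeting Summarizer template above.
REQUIRED_KEYS = {"Decisions_Made", "Action_Items", "Owners"}


def parse_summary(raw: str) -> dict:
    """Parse model output and fail loudly if it does not match the schema."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError as exc:
        raise ValueError(f"model output is not valid JSON: {exc}") from exc
    if not isinstance(data, dict):
        raise ValueError("expected a JSON object at the top level")
    missing = REQUIRED_KEYS - data.keys()
    if missing:
        raise ValueError(f"missing keys: {sorted(missing)}")
    return data


if __name__ == "__main__":
    sample = '{"Decisions_Made": ["Ship v2"], "Action_Items": ["Write docs"], "Owners": ["Dana"]}'
    print(parse_summary(sample)["Owners"])
```

A failed validation is a natural trigger for a retry or a human review, rather than a silent write to the project board.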

“We are seeing a convergence where prompt engineering looks less like creative writing and more like defining API schemas. The professionals who win in 2026 are those who treat natural language as a programming interface, not a conversation.” — Andrej Karpathy, Founder of Eureka Labs (on the state of LLM interaction).

The Ecosystem War: Open Weights vs. Closed Gardens

It also matters where these templates live. The “12 Templates” phenomenon isn’t platform-agnostic. A prompt optimized for a reasoning-heavy model like o3 or its successors behaves differently on a speed-optimized model designed for real-time translation.

The rise of open-weight models on platforms like Hugging Face has democratized access to high-level reasoning. Professionals are no longer locked into the ecosystem of a single vendor. However, this fragmentation means your prompt templates must be portable. A template relying on specific proprietary tags (like XML markers unique to one provider) creates vendor lock-in. The most resilient templates employ universal delimiters and standard JSON formatting.

We are also seeing a bifurcation in privacy. For sensitive data—legal briefs, medical records, proprietary code—the “Cloud” templates are becoming liabilities. The push is toward local inference using quantized models on NPUs (Neural Processing Units) embedded in 2026-era laptops. Your prompt strategy must account for the reduced context window of local models compared to their cloud counterparts.
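One way to account for a smaller local context window is to budget the prompt explicitly. The sketch below keeps the instructions intact and then admits the most recent context chunks until the budget runs out. The function name, the newest-first policy, and the word-count estimate are all assumptions; a real deployment would use the target model's own tokenizer.

```python
def fit_to_budget(instructions: str, chunks: list[str], max_tokens: int) -> str:
    """Keep the instructions plus as many of the newest chunks as fit the budget."""

    def estimate(text: str) -> int:
        # Crude proxy for token count; swap in the model's tokenizer if available.
        return len(text.split())

    remaining = max_tokens - estimate(instructions)
    if remaining < 0:
        raise ValueError("instructions alone exceed the token budget")

    kept: list[str] = []
    # Chunks are assumed to be ordered oldest to newest, so walk them backwards.
    for chunk in reversed(chunks):
        cost = estimate(chunk)
        if cost > remaining:
            break
        kept.append(chunk)
        remaining -= cost

    kept.reverse()  # restore chronological order
    return "\n\n".join([instructions, *kept])
```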

The “Debugging” Template: A Case Study in Precision

One of the most critical templates in the professional arsenal is the “Code Debugger.” In the past, developers pasted error logs and hoped for a fix. Now, the template demands a root cause analysis before a solution.

The template structure forces the AI to:

- Identify the specific stack trace error.

- Hypothesize three potential causes based on recent dependency updates.

- Provide the fix with a confidence score.

- Cite the documentation version.
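The four steps above can be sketched as a template plus a validator for the structured reply. The field names, the JSON output format, and the 0 to 1 confidence range are assumptions added for illustration; the original list only asks for "a confidence score".

```python
from dataclasses import dataclass

DEBUG_TEMPLATE = """ROLE: Senior Engineer
INPUT:
{stack_trace}
RECENT DEPENDENCY CHANGES:
{dependency_changes}
STEPS:
1. Identify the specific error in the stack trace.
2. Hypothesize three potential causes based on the dependency changes.
3. Provide the fix with a confidence score between 0 and 1.
4. Cite the documentation version you relied on.
OUTPUT: JSON with keys "error", "hypotheses", "fix", "confidence", "docs_version"."""


@dataclass
class DebugReport:
    error: str
    hypotheses: list[str]
    fix: str
    confidence: float
    docs_version: str


def validate_report(data: dict) -> DebugReport:
    """Check a parsed reply against the template's requirements."""
    report = DebugReport(**data)  # raises TypeError on missing or unexpected keys
    if len(report.hypotheses) != 3:
        raise ValueError("expected exactly three hypotheses")
    if not 0.0 <= report.confidence <= 1.0:
        raise ValueError("confidence must be between 0 and 1")
    if not report.docs_version.strip():
        raise ValueError("documentation version is required")
    return report
```

Note that a self-reported confidence score is not a calibrated probability; it is most useful as a signal for when to escalate to a human.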

This moves the AI from a “code generator” to a “senior engineer pair programmer.” It mitigates the risk of the model suggesting deprecated libraries—a common failure mode in rapidly evolving ecosystems like the JavaScript npm registry or Python’s PyPI.

Operationalizing the Templates

Bookmarking these templates is step one. Integrating them into your daily workflow is step two. The most effective method in 2026 is not copying and pasting into a chat window, but saving them as “System Instructions” or “Macros” within your AI interface.

Many enterprise suites now allow for “Persona Persistence.” Instead of pasting the “Project Manager” template every time, you set it as the default system behavior for that specific workspace. This ensures consistency across thousands of interactions.
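If your interface exposes an API rather than a settings page, the same idea can be approximated in code. The sketch below assumes the common chat-message convention of `role` and `content` fields with a `system` entry first; check your provider's documentation, since the exact shape varies. The name `make_workspace` is hypothetical.

```python
from typing import Callable


def make_workspace(system_instructions: str) -> Callable[[str], list[dict]]:
    """Bind a template once and reuse it for every request in a workspace."""

    def build_messages(user_input: str) -> list[dict]:
        return [
            {"role": "system", "content": system_instructions},  # persistent persona
            {"role": "user", "content": user_input},             # per-request input
        ]

    return build_messages


if __name__ == "__main__":
    project_manager = make_workspace("ROLE: Project Manager\nOUTPUT: JSON only.")
    print(project_manager("Draft a status update for sprint 14."))
```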

However, a word of caution on security. Never paste PII (Personally Identifiable Information) or API keys into a prompt, even with a “Privacy Mode” enabled. The “Data Analyst” template should always use sanitized, dummy data structures when testing logic flows before applying it to real datasets.
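A redaction pass before the prompt leaves your machine is a cheap extra safeguard. The patterns below are illustrative assumptions: they catch simple email addresses and strings that look like prefixed API keys, and they will miss plenty of real PII (names, addresses, IDs). Treat this as a sketch, not a compliance tool.

```python
import re

# Illustrative patterns only; real PII detection needs far broader coverage.
EMAIL = re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+")
KEY_LIKE = re.compile(r"\b(?:sk|pk|key|token)[-_][A-Za-z0-9_-]{16,}\b")


def redact(text: str) -> str:
    """Replace email addresses and key-like strings with placeholders."""
    text = EMAIL.sub("[EMAIL]", text)
    return KEY_LIKE.sub("[SECRET]", text)


if __name__ == "__main__":
    print(redact("Contact dana@example.com, key sk-abcdefghijklmnop1234"))
```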

| Prompt Type | Primary Use Case | Key Mechanism | Risk Factor |
|---|---|---|---|
| The Socratic Tutor | Upskilling / Learning | Iterative Questioning | Low (Hallucination of facts) |
| The Code Refactorer | Legacy Migration | Pattern Matching | High (Logic breaks in edge cases) |
| The Devil’s Advocate | Strategy / Planning | Adversarial Simulation | Medium (Bias reinforcement) |
| The Data Sanitizer | Compliance / GDPR | Regex / Entity Recognition | Critical (Data leakage) |

The Verdict: Prompts as Intellectual Property

In the grand scheme of the 2026 tech landscape, these twelve templates represent a baseline competency. But the real value lies in iteration. The professionals who dominate their fields are not just using these templates; they are versioning them. They are A/B testing their prompts against different model architectures to see which yields the highest ROI on token spend.
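Versioning and A/B testing can start very small. In the sketch below, a prompt's version ID is a hash of its text, and the "winner" is the version that spends the fewest tokens per passing test case. Every name here is hypothetical, and tokens per pass is just one possible stand-in for "ROI on token spend"; the pass counts would come from whatever evaluation set you maintain.

```python
import hashlib
from dataclasses import dataclass


@dataclass(frozen=True)
class PromptVersion:
    name: str
    text: str

    @property
    def version_id(self) -> str:
        # Any edit to the text yields a new ID, so results never mix versions.
        return hashlib.sha256(self.text.encode("utf-8")).hexdigest()[:12]


@dataclass
class TrialResult:
    passed: int
    total: int
    tokens_spent: int

    @property
    def tokens_per_pass(self) -> float:
        return self.tokens_spent / self.passed if self.passed else float("inf")


def pick_winner(results: dict[str, TrialResult]) -> str:
    """Return the version ID with the lowest token cost per passing case."""
    return min(results, key=lambda vid: results[vid].tokens_per_pass)
```

Storing the version ID alongside each model response makes it possible to trace a bad output back to the exact prompt text that produced it.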

Do not treat these prompts as static text. Treat them as living code. As model weights update and architectures shift from Transformer-based to potentially hybrid state-space models later this decade, your prompts will need to evolve. The syntax of human-AI collaboration is being written right now. Make sure you are holding the pen.

For those looking to dive deeper into the mechanics of prompt optimization, the arXiv repository remains the primary source for research on attention mechanisms and prompt sensitivity, though its preprints are not necessarily peer-reviewed. Don’t rely on blog posts; read the papers.