Vietnam Drawn into Group E for 2027 Asian Cup

Vietnam has been drawn into Group E for the 2027 AFC Asian Cup, hosted by Saudi Arabia. The draw, finalized on May 9, 2026, positions the “Golden Star Warriors” in ...

Saturday Edition

Stay updated with Archyde – your source for breaking news, global headlines, economy, entertainment, health, technology, and sports. Fresh stories daily.

Continuous Coverage

U.S.-Iran negotiations face a critical juncture as renewed attacks threaten a fragile cease-fire in the region. This volatility…

Defense analysts have identified a technical feedback loop between Moscow and Tehran that is accelerating the evolution of…

Bobby Cox, the Hall of Fame manager who steered the Atlanta Braves through 25 seasons and a 1995…

Europe is forfeiting energy security to China by failing to scale low-cost electricity systems required for heavy industry.…

The air in Bangkok’s tech corridors is usually thick with the scent of street food and the hum…

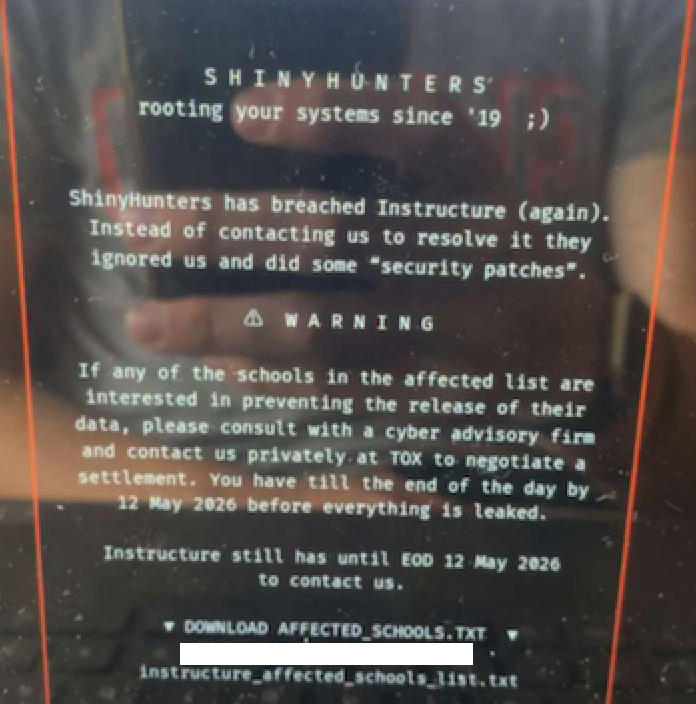

ShinyHunters has crippled Instructure’s Canvas LMS, impacting 275 million users across 9,000 institutions. The breach, stemming from a…

Global Affairs

The 3 ft 6 in gauge railways in the United Kingdom, known globally as “Cape Gauge,” were specialized…

Markets And Money

A Croatian service center’s disclosure of exorbitant Ferrari LaFerrari battery replacement costs highlights a critical valuation risk for…

Digital Culture

The “Analog-First” movement of 2026 is a systemic rebellion against algorithmic aesthetic homogenization. By leveraging invisible IoT frameworks…

Science And Wellbeing

Pregnancy is a complex biological process involving the orchestration of hormonal signaling, placental development, and fetal organogenesis. Understanding…

Screen And Sound

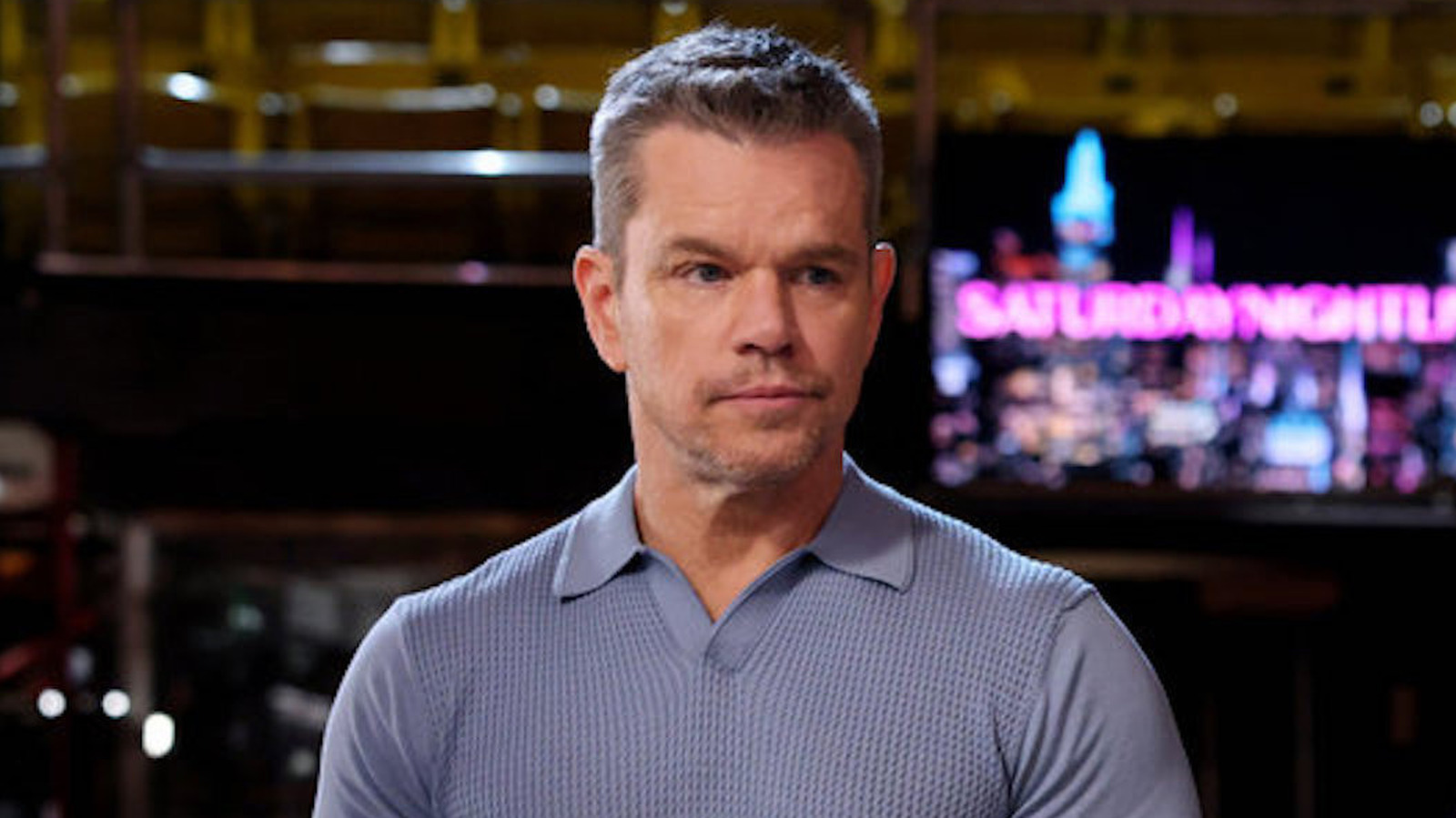

This Saturday, May 9, 2026, Matt Damon returns to host Saturday Night Live at Studio 8H, while Valerie…

Fixtures And Form

The Cleveland Cavaliers face a critical crossroads in Game 2 of the 2026 NBA Playoffs second-round series against…