Orioles vs. Guardians Game Thread: Live Updates

Baltimore and Cleveland are set to face off in a pivotal American League matchup on Thursday night, with the Orioles traveling to Progressive Field for a 6:10 p.m. ET ...

Saturday Edition

Continuous Coverage

Russian Defense Minister Sergei Shoigu claimed on April 15 that either Russia’s air defense systems are failing to…

On 15 April 2026, Mohammad Bagher Ghalibaf, Speaker of the Iranian Parliament, stated that the completion and consolidation…

This weekend, Avenue Q’s revival at London’s Shaftesbury Theatre proves that two decades after its Off-Broadway debut, the…

When markets open on Monday, analysts will assess whether recent U.S. policy shifts toward Venezuela have materially improved…

When Indiana’s Pacers stormed into Gainbridge Fieldhouse on April 9, 2026, and walked out with a 128–95 victory…

Elgato’s Stream Deck Neo has dropped to $59.99, its lowest price ever, transforming what began as a niche…

Global Affairs

On a tense Tuesday night in April 2026, Real Madrid’s Champions League quarter-final hopes collapsed after a moment…

Markets And Money

World Liberty Financial, a crypto project linked to FTX’s collapsed ecosystem, faces scrutiny after allegations emerged that it…

Digital Culture

In April 2026, German economists at Makronom reignited a long-simmering debate: the persistent conflation of gross domestic product…

Science And Wellbeing

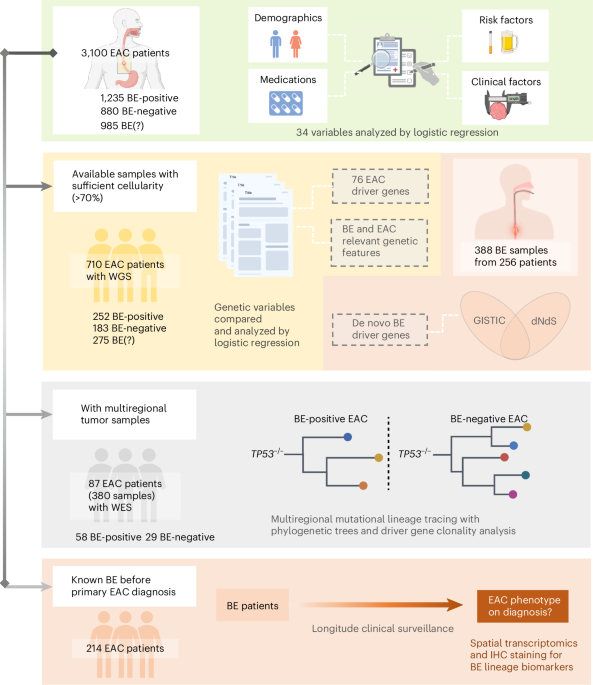

A recent study published this week in Nature Medicine reveals that intestinal metaplasia is the sole precancerous pathway…

Screen And Sound

The Rocky Horror Picture Show has been abruptly canceled from its scheduled April 2026 revival tour following credible…

Fixtures And Form

Following Crystal Palace’s dramatic 3-2 aggregate victory over Fiorentina to reach the UEFA Europa Conference League semi-finals, the…