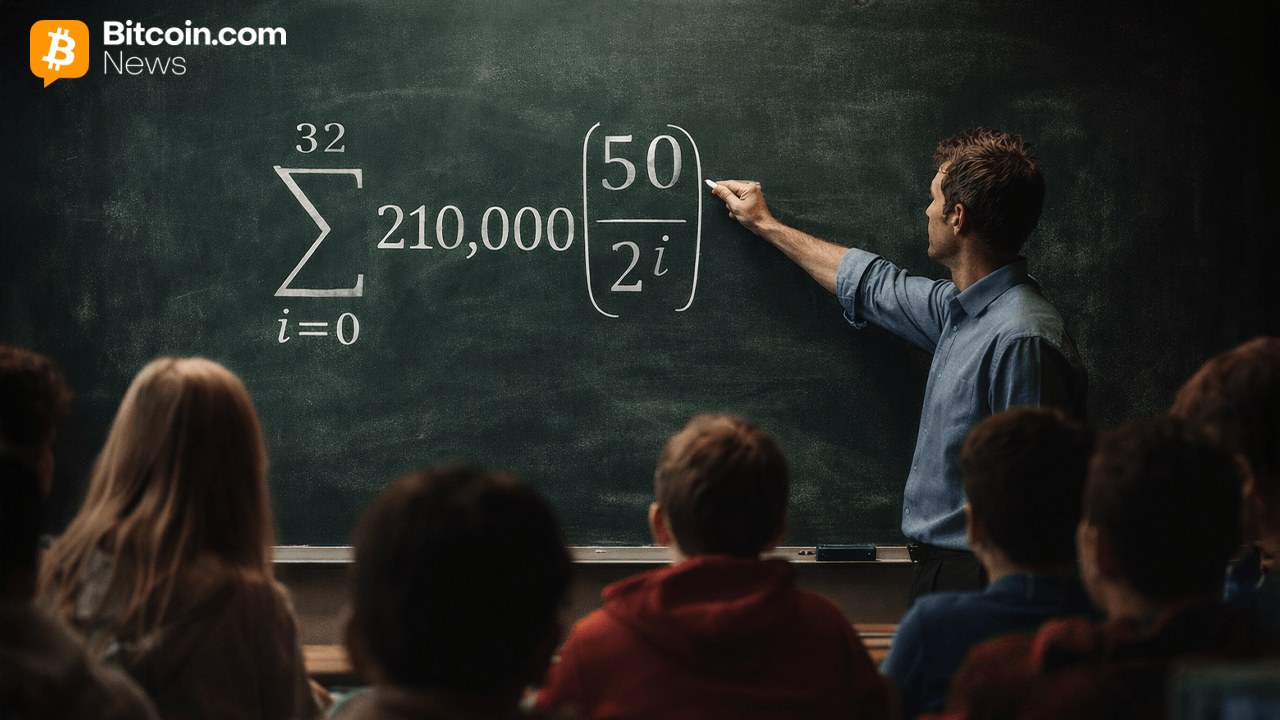

Bitcoin Scholars Fund to Redirect $21M in Tax Credits to K-12 Education

The Bitcoin Scholars Fund announced on April 16, 2026, that it aims to redirect $21 million in federal tax credits toward K–12 Bitcoin education across the United States, offering donors ...