Birmingham Match Preview: Stats, H2H, and Standings

There is something inherently poetic about a clash between SC Freiburg and Aston Villa. On the surface, we see a standard fixture on the calendar: a meeting of a disciplined Black ...

Continuous Coverage

The NHTSA has certified the Tesla Model Y as the first vehicle to pass its updated advanced driver…

Freddie Freeman reached a historic milestone on May 9, 2026, smashing his 100th home run as a member…

Home fitness products popularized on TikTok—including resistance bands, walking pads and adjustable weights—provide accessible alternatives to gym memberships…

Arc Raiders players are currently grappling with systemic failures in gadget physics, where tactical projectiles exhibit erratic collision…

Mexico’s Secretary of Economy, Marcelo Ebrard, is spearheading a strategic initiative to reduce the nation’s pharmaceutical dependence. By…

Banner Bank provides competitive home loan products and mortgage rates, emphasizing personalized prequalification through lending experts. As regional…

Global Affairs

London Fire Brigade crews responded to a medical incident at a bank on Golders Green Road, NW11, after…

Markets And Money

The Lotería de Medellín announced the winning numbers for the May 8, 2026, draw, featuring a primary jackpot…

Digital Culture

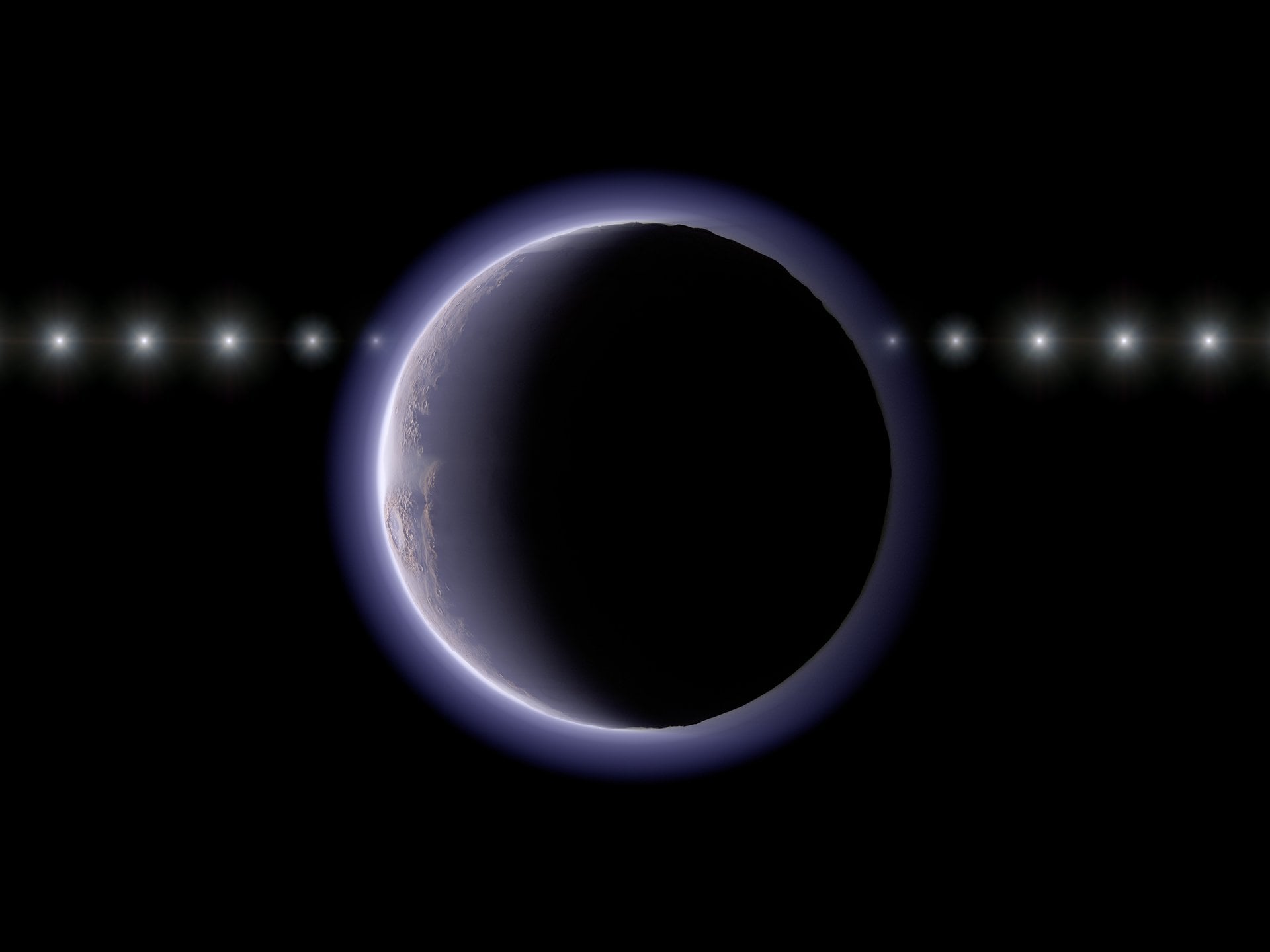

Astronomers have detected a thin, unexpected atmosphere on a distant Trans-Neptunian Object (TNO) beyond Pluto, challenging established planetary…

Science And Wellbeing

A cruise ship carrying passengers infected with Hantavirus is currently approaching Tenerife, triggering a high-alert public health response.…

Screen And Sound

Strong Minds hosts its 9th annual Run for Change on Sunday, May 10, 2026, raising critical funds and…

Fixtures And Form

The Vegas Golden Knights and Blackstone Valley hockey communities have forged a coast-to-coast bond following a devastating tragedy.…