NVIDIA and Energy Providers Forge AI Factories as Dynamic Grid Assets

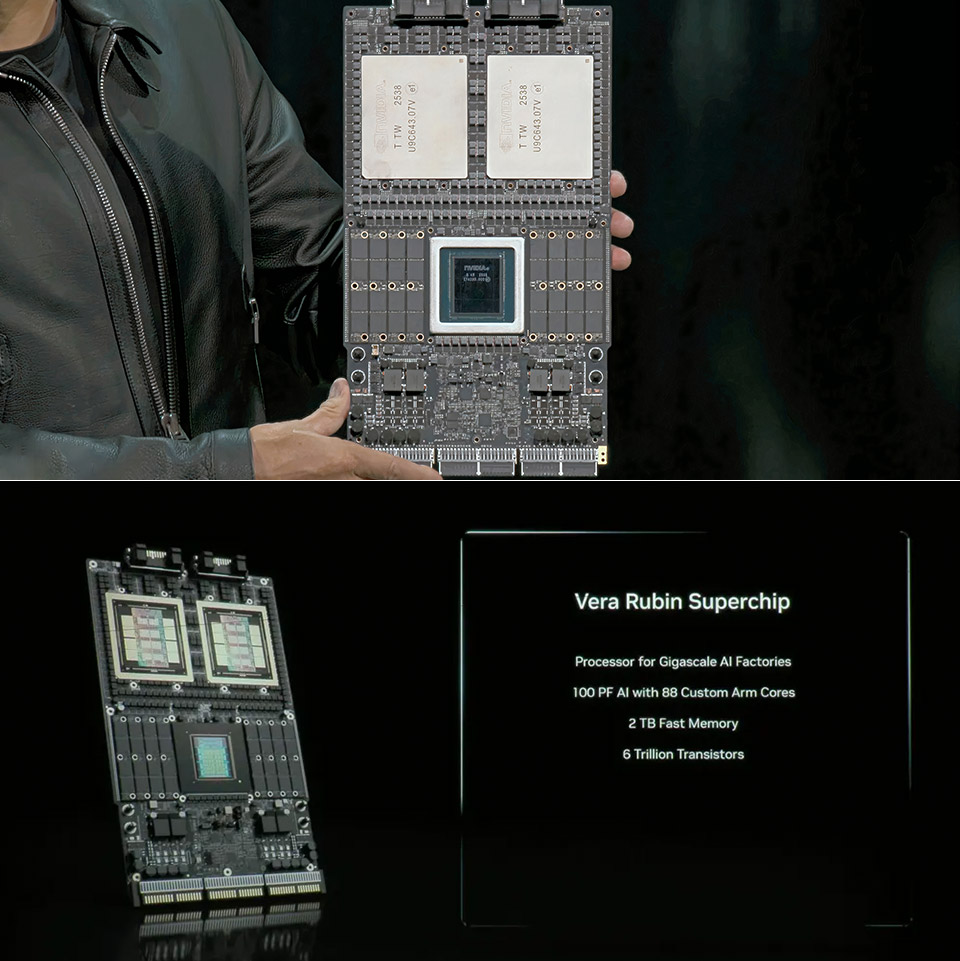

NVIDIA, alongside energy leaders like AES and Constellation, is fundamentally reshaping how AI infrastructure interacts with the power grid. They’re moving beyond treating AI factories as static power drains, instead positioning them as flexible, intelligent assets capable of dynamically adjusting energy consumption to bolster grid stability and efficiency. This initiative, unveiled at CERAWeek, leverages NVIDIA’s Vera Rubin DSX AI Factory reference design and Emerald AI’s Conductor platform to optimize compute, power networking, and control, a critical shift as AI demand strains existing energy resources.

The Token-Per-Watt Imperative: Beyond Moore’s Law

The relentless pursuit of computational power is hitting a wall – not of theoretical limits, but of practical energy constraints. We’ve long tracked performance gains through metrics like FLOPS, but the new battleground is “tokens per second per watt.” This isn’t merely about efficiency; it’s about economic viability. Large Language Models (LLMs), particularly, are ravenous consumers of energy. Scaling LLM parameter counts without corresponding gains in energy efficiency quickly becomes unsustainable. NVIDIA’s historical improvements – a 1 million-fold increase in tokens generated per watt since the Kepler GPU in 2012 – demonstrate the power of extreme codesign, optimizing both hardware and software. But this isn’t a solo effort. Jensen Huang correctly frames this as a “five-layer AI cake,” where energy is the foundational layer. Without a robust and adaptable energy infrastructure, the entire stack collapses.
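To make the “tokens per second per watt” metric concrete, here is a minimal sketch of the arithmetic. The function names and every number below are hypothetical illustrations, not measurements of any NVIDIA system. Because a watt is a joule per second, the metric is numerically the same as tokens per joule, which makes it easy to convert into an energy cost per million tokens.

```python
def tokens_per_second_per_watt(tokens_per_second: float, power_watts: float) -> float:
    """Throughput normalized by power draw; numerically equal to tokens per joule."""
    if power_watts <= 0:
        raise ValueError("power_watts must be positive")
    return tokens_per_second / power_watts


def energy_per_million_tokens_kwh(tokens_per_second: float, power_watts: float) -> float:
    """Energy in kWh needed to generate one million tokens at steady state."""
    joules = 1_000_000 / tokens_per_second_per_watt(tokens_per_second, power_watts)
    return joules / 3_600_000  # 1 kWh = 3.6e6 joules


# Hypothetical figures for illustration only.
baseline = tokens_per_second_per_watt(tokens_per_second=2_000, power_watts=1_000)
improved = tokens_per_second_per_watt(tokens_per_second=12_000, power_watts=1_200)
baseline_kwh = energy_per_million_tokens_kwh(2_000, 1_000)

print(f"baseline: {baseline} tokens/s/W, improved: {improved} tokens/s/W")
print(f"baseline energy per million tokens: {baseline_kwh:.3f} kWh")
```

In this made-up comparison the second system draws 20% more power but delivers six times the throughput, so its efficiency is five times higher. That is the sense in which the metric, rather than raw throughput or raw power, determines economic viability.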

The Vera Rubin DSX architecture is key. It’s not just about faster GPUs; it’s about a holistic system designed for power management. The DSX reference design incorporates advanced power distribution units (PDUs) with granular monitoring and control capabilities, allowing for precise allocation of power to individual GPUs and other components. This level of control is essential for responding to grid signals and dynamically adjusting workloads. The integration with Emerald AI’s Conductor platform adds a layer of real-time energy orchestration, enabling AI factories to participate in demand response programs and provide ancillary services to the grid.
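The idea of translating a grid signal into per-GPU power limits can be sketched as follows. This is an illustrative toy under stated assumptions, not the DSX reference design or the Conductor platform's actual logic: the `Gpu` class, the `allocate_power_caps` function, and the wattage figures are all hypothetical. It assumes the facility receives a total power budget and that each GPU has a minimum and maximum power limit.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class Gpu:
    name: str
    min_watts: float  # hypothetical floor below which the device cannot usefully run
    max_watts: float  # hypothetical normal full-power limit


def allocate_power_caps(gpus: List[Gpu], facility_budget_watts: float) -> Dict[str, float]:
    """Spread a facility-level power budget across GPUs.

    Every GPU is guaranteed its floor; any headroom above the combined floors
    is shared in proportion to each GPU's (max - min) range.
    """
    floor_total = sum(g.min_watts for g in gpus)
    if facility_budget_watts < floor_total:
        raise ValueError("budget is below the combined minimum power of all GPUs")
    range_total = sum(g.max_watts - g.min_watts for g in gpus)
    # Never hand out more than the GPUs can actually use.
    headroom = min(facility_budget_watts - floor_total, range_total)
    fraction = headroom / range_total if range_total > 0 else 0.0
    return {g.name: g.min_watts + fraction * (g.max_watts - g.min_watts) for g in gpus}


# Hypothetical two-GPU rack: a demand response event cuts the budget from 2000 W to 1300 W.
rack = [Gpu("gpu0", 300.0, 1000.0), Gpu("gpu1", 300.0, 1000.0)]
normal_caps = allocate_power_caps(rack, 2000.0)
curtailed_caps = allocate_power_caps(rack, 1300.0)
print(normal_caps)
print(curtailed_caps)
```

A proportional split is only one possible policy. A real orchestrator would presumably also weigh workload priority, deadlines, and thermal limits when deciding which jobs to slow down.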

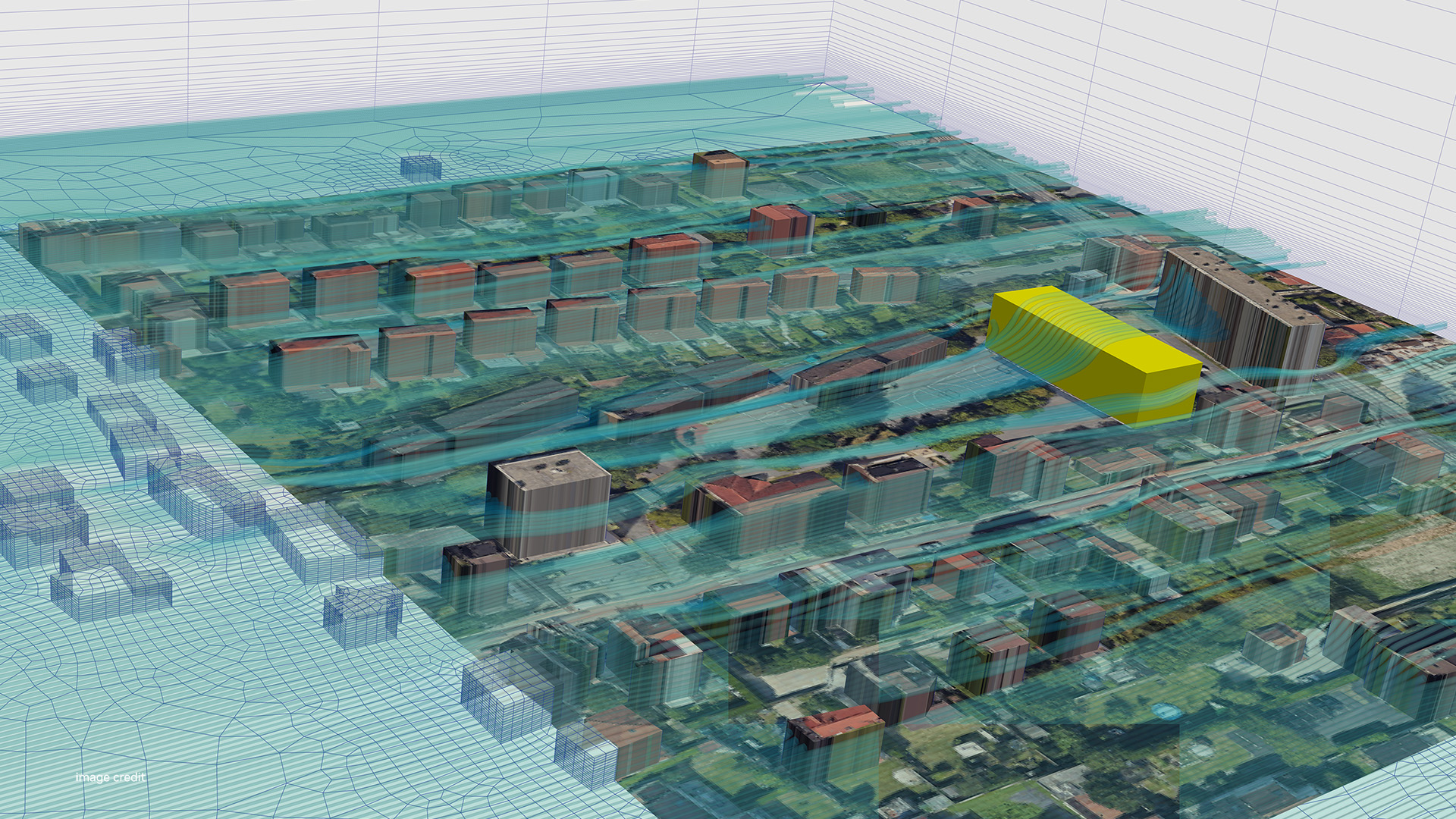

Digital Twins and the Power-to-Rack Challenge

The “power-to-rack” problem – efficiently delivering sufficient power to AI infrastructure – is a significant bottleneck. Traditional data center designs weren’t built to handle the concentrated power demands of modern AI workloads. This is where digital twins, powered by NVIDIA Omniverse, come into play. GE Vernova is leveraging Omniverse to simulate grid behavior, substations, and AI factory loads *before* deployment. This allows utilities to validate interconnection strategies, identify potential bottlenecks, and optimize power delivery infrastructure. The ability to model the entire system – from the power source to the individual GPU – is a game-changer. It’s a shift from reactive problem-solving to proactive design.

Schneider Electric’s validated Vera Rubin reference designs, developed with AVEVA, further streamline this process. By simulating power, cooling, and controls within Omniverse, operators can optimize performance per watt, validate designs, and operate AI factories more efficiently. This isn’t just about reducing costs; it’s about ensuring reliability. A poorly designed AI factory can destabilize the grid, leading to outages and economic disruption.

Robotics and AI-Driven Construction: Accelerating Deployment

The speed at which we can deploy new energy infrastructure is a critical constraint. Traditional construction methods are slow and labor-intensive. Maximo, an AES-incubated solar robotics company, is demonstrating the potential of AI-driven robotics to accelerate solar installations. Using NVIDIA accelerated computing, Omniverse libraries, and the Isaac Sim framework, Maximo has achieved reliable autonomous installations at utility scale. This improves installation speed, safety, and consistency, helping to close the gap between rising electricity demand and construction capacity. The use of Isaac Sim for robotic training is particularly noteworthy. It allows Maximo to simulate various scenarios and optimize robot behavior before deploying the robots in the field.

TerraPower’s use of an NVIDIA Omniverse-powered digital twin platform to shorten advanced nuclear plant siting and design timelines is equally significant. Reducing design cycles from years to months is a massive acceleration, enabling faster deployment of clean energy sources. The platform leverages AI and simulation to optimize plant design and grid integration, addressing critical safety and regulatory concerns.

The Workforce Gap and Adaptive Construction Solutions

Technology alone isn’t enough. We need a skilled workforce to build and maintain these AI-powered energy systems. Adaptive Construction Solutions, in collaboration with NVIDIA, is addressing this challenge with a national registered apprenticeship initiative. This program aims to scale training for critical trades, expanding access to high-demand careers and supporting the rapid buildout of AI-driven power systems. The focus on apprenticeships is crucial. It provides a pathway for individuals to acquire the skills needed to succeed in this rapidly evolving field.

Security Implications: A New Attack Surface

Integrating AI factories directly into the grid introduces a new attack surface. The potential for malicious actors to disrupt power delivery by targeting AI infrastructure is a serious concern. The dynamic nature of these systems – constantly adjusting workloads and responding to grid signals – makes them particularly vulnerable to sophisticated attacks. Consider a scenario where an attacker compromises the Emerald AI Conductor platform, manipulating grid signals to overload the system or create cascading failures. Robust cybersecurity measures, including end-to-end encryption, intrusion detection systems, and regular security audits, are essential. The reliance on digital twins introduces the risk of “simulation poisoning,” where attackers manipulate the simulation data to compromise the real-world system.

“The convergence of AI and critical infrastructure demands a paradigm shift in cybersecurity. We’re moving beyond traditional perimeter defenses to a model of continuous monitoring, threat intelligence, and adaptive security controls. The potential consequences of a successful attack are simply too high to ignore.” – Dr. Anya Sharma, CTO, SecureGrid Solutions.

Ecosystem Lock-In and the Open-Source Alternative

NVIDIA’s dominant position in accelerated computing raises concerns about ecosystem lock-in. The Vera Rubin DSX architecture and Omniverse platform are tightly integrated with NVIDIA hardware and software. While this provides performance advantages, it also limits flexibility and potentially stifles innovation. The open-source community is actively developing alternatives, such as the Open Compute Project (OCP) and various open-source AI frameworks like PyTorch and TensorFlow. However, these alternatives currently lack the same level of integration and optimization as NVIDIA’s proprietary solutions. The challenge for the open-source community is to create a compelling alternative that offers comparable performance and scalability without sacrificing flexibility. The Open Compute Project is attempting to address this, but faces significant hurdles in matching NVIDIA’s vertically integrated approach.

The rise of RISC-V architectures also presents a potential challenge to NVIDIA’s dominance. RISC-V is an open-standard instruction set architecture that allows for greater customization and flexibility. Several companies are developing RISC-V-based GPUs that could compete with NVIDIA’s offerings. RISC-V International (formerly the RISC-V Foundation) is actively promoting the adoption of this architecture, and we can expect to see more RISC-V-based AI hardware in the coming years.

What This Means for Enterprise IT

For enterprise IT departments, this shift has significant implications. AI deployments will no longer be treated as isolated projects; they will be integrated into the broader energy management strategy. IT teams will need to collaborate closely with facilities management and energy providers to ensure that AI workloads are aligned with grid capacity and sustainability goals. The adoption of digital twins will become increasingly common, enabling IT departments to simulate and optimize AI infrastructure before deployment. Cybersecurity will become a top priority, requiring robust security measures to protect against potential attacks.

The move towards power-flexible AI factories isn’t just a technological innovation; it’s a fundamental shift in how we think about computing and energy. It’s a recognition that AI’s future is inextricably linked to the sustainability and resilience of our power grid. And NVIDIA, along with its partners, is positioning itself at the forefront of this transformation.