Samsung and Google have jointly deployed a native television gallery featuring on-device artificial intelligence editing, marking a significant shift in consumer hardware architecture as of April 2026. This integration leverages localized neural processing units to handle image manipulation without cloud dependency, directly addressing latency and privacy concerns inherent in previous generative models. The move solidifies the Android TV ecosystem against competing proprietary standards while introducing new vectors for adversarial testing in consumer electronics.

The implications here extend far beyond a simple software update. We are witnessing the commoditization of edge AI inference at a scale that demands rigorous security postures previously reserved for enterprise infrastructure. When you put generative capabilities directly into the hands of consumers via a television interface, you aren’t just selling features; you are distributing potential exploit surfaces. The collaboration suggests a maturation of the Secure AI Innovation Engineer role, where security is no longer an afterthought but a foundational layer of the product design.

The Architecture of On-Device Inference

Historically, high-fidelity image editing required round-trip communication with centralized cloud servers. This model introduced unacceptable latency for real-time interaction and created significant privacy liabilities. By shifting the workload to the television’s system-on-chip (SoC), Samsung and Google are betting that modern NPUs (Neural Processing Units) can run large generative models locally. This is not merely a convenience upgrade; it is a structural necessity for the next phase of ambient computing.

However, local processing introduces thermal constraints that cloud infrastructure does not face. Continuous AI editing sessions can trigger thermal throttling, degrading performance precisely when the user expects peak responsiveness. The engineering challenge lies in balancing model quantization with visual fidelity. If the model is too aggressive in compression, artifacts appear. If it is too heavy, the device overheats. This is the precise friction point where the AI Red Teamer becomes critical, stress-testing the hardware limits under adversarial conditions to ensure stability.
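The compression-versus-fidelity tradeoff can be made concrete with a toy experiment. The sketch below is an illustration only, not the scheme Samsung or Google use: it applies plain symmetric uniform quantization to a set of randomly generated stand-in "weights" and reports how the reconstruction error grows as the bit width shrinks. The function names and the Gaussian weight distribution are assumptions chosen for the example.

```python
import random


def quantize(values, bits):
    """Round values onto a symmetric signed grid of the given bit width,
    then map them back to floats (quantize + dequantize)."""
    levels = 2 ** (bits - 1) - 1               # e.g. 127 for 8 bits
    max_abs = max(abs(v) for v in values) or 1.0  # avoid a zero scale
    scale = max_abs / levels
    return [round(v / scale) * scale for v in values]


def mean_squared_error(original, approx):
    """Average squared difference between two equal-length sequences."""
    return sum((x - y) ** 2 for x, y in zip(original, approx)) / len(original)


def quantization_report(values, bit_widths=(8, 4, 2)):
    """Map each bit width to the error introduced by quantizing at that width."""
    return {b: mean_squared_error(values, quantize(values, b)) for b in bit_widths}


# Hypothetical stand-in for model weights: 10,000 Gaussian samples.
rng = random.Random(0)
weights = [rng.gauss(0.0, 1.0) for _ in range(10_000)]

report = quantization_report(weights)
for bits, mse in report.items():
    print(f"{bits}-bit: MSE = {mse:.6f}")
```

Fewer bits mean less memory traffic and less heat, but the error climbs steeply at the low end, which is where visible artifacts would be expected to appear. Real deployments typically use more sophisticated schemes (per-channel scales, calibration data), so treat the numbers here as directional only.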

What This Means for Enterprise IT

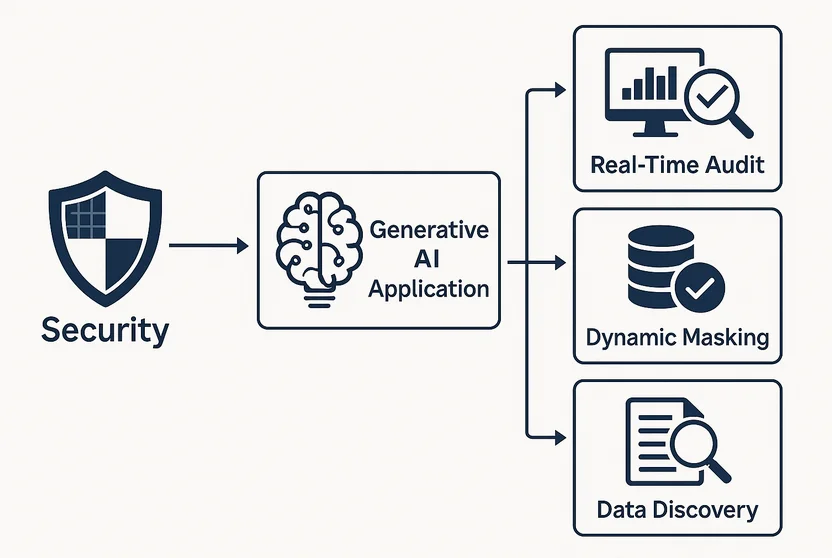

While this launch targets consumers, the underlying technology stacks are identical to those being deployed in corporate environments for secure data handling. The ability to process sensitive visual data without exfiltration to the cloud is a key requirement for regulated industries. We are seeing a convergence where consumer electronics lead the R&D for enterprise-grade privacy tools. The demand for professionals who can architect these secure pathways is skyrocketing, as evidenced by roles focusing on AI-Powered Security Analytics that monitor these very interactions.

The integration also signals a hardening of the walled garden. By making the gallery native and AI-enhanced, Google and Samsung reduce the reliance on third-party applications that might introduce vulnerabilities. This is platform lock-in disguised as a security feature. Developers who previously built gallery extensions now face a higher barrier to entry, needing to match the performance of native NPUs to remain relevant. The ecosystem is closing, but the security posture is theoretically strengthening.

Security Implications of Generative Editing

Generative AI on edge devices opens unique attack vectors. Adversarial inputs could manipulate the editing algorithms into producing harmful content, or exploit buffer overflows in the image processing pipeline. The industry is still debating whether AI will replace principal cybersecurity engineering jobs, but the consensus points to transformation rather than replacement. Senior individual contributors with 12+ years of experience are needed to oversee these complex intersections of hardware and probabilistic software.
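One common defense against malformed-image attacks is to validate the file header before any decoder allocates memory for it. The sketch below shows the idea for PNG files, using the standard PNG signature and IHDR chunk layout. It is not drawn from the Samsung or Google implementation; the pixel budget `MAX_PIXELS` and the function names are hypothetical, chosen only for illustration.

```python
import struct

PNG_SIGNATURE = b"\x89PNG\r\n\x1a\n"
# Hypothetical budget: four times the pixel count of a 4K frame.
MAX_PIXELS = 3840 * 2160 * 4


def check_png_header(data, max_pixels=MAX_PIXELS):
    """Return (width, height) from a PNG header, or raise ValueError
    if the header is malformed or the image exceeds the pixel budget."""
    if len(data) < 24 or data[:8] != PNG_SIGNATURE:
        raise ValueError("not a PNG file")
    # First chunk must be IHDR with a 13-byte payload.
    length, chunk_type = struct.unpack(">I4s", data[8:16])
    if chunk_type != b"IHDR" or length != 13:
        raise ValueError("malformed IHDR chunk")
    # Width and height are big-endian 32-bit integers.
    width, height = struct.unpack(">II", data[16:24])
    if width == 0 or height == 0:
        raise ValueError("zero-sized image")
    if width * height > max_pixels:
        raise ValueError("declared dimensions exceed pixel budget")
    return width, height


def make_png_header(width, height):
    """Build just enough of a PNG file to exercise the check (test helper)."""
    return PNG_SIGNATURE + struct.pack(">I4sII", 13, b"IHDR", width, height)


print(check_png_header(make_png_header(1920, 1080)))
try:
    check_png_header(make_png_header(100_000, 100_000))
except ValueError as err:
    print("rejected:", err)
```

Rejecting oversized or inconsistent headers up front does not eliminate decoder bugs, but it narrows the range of inputs an attacker can push into the native pipeline. A production check would also verify chunk CRCs and the remaining IHDR fields.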

“The role requires a strong interest in cybersecurity, innovation, and modern technologies, with a willingness to learn, grow, and take ownership of security topics.”

This requirement, drawn from current hiring standards for secure AI roles, underscores the human element still required to safeguard automated systems. Automation cannot fully police itself, especially when the AI is generating content dynamically. The need for human oversight in the loop remains paramount, particularly when dealing with the potential for deepfake propagation through consumer television interfaces.

The supply chain for these AI models must also be verified. Where did the training data originate? Was it sanitized for copyright and bias? These questions are no longer academic; they are legal liabilities. The technical elite commanding salaries in the $200k–$500k range are being hired specifically to engineer the intelligence layer that governs these decisions. They are the architects ensuring that the AI does not violate compliance standards while delivering the promised user experience.

The 30-Second Verdict

- Performance: Dependent on NPU efficiency; expect thermal throttling during extended 4K editing sessions.

- Privacy: Improved via on-device processing, reducing cloud data exfiltration risks.

- Ecosystem: Strengthens Google/Samsung lock-in, potentially marginalizing third-party gallery apps.

- Security: Requires continuous adversarial testing to prevent model manipulation.

The Economic Reality of AI Integration

The cost of implementing this level of intelligence is non-trivial. It requires specialized hardware that can handle the computational load of diffusion models or similar architectures without lag. This drives up the bill of materials for the television sets, potentially pricing out entry-level consumers. We are seeing a stratification in the market where AI capabilities become a premium differentiator, much like refresh rates or panel technology were in previous decades.

For developers, the API capabilities surrounding this native gallery will determine the innovation ceiling. If Google exposes these AI editing functions via robust APIs, we could see a renaissance of creative applications. If they remain closed, the feature becomes a stagnant showcase. The history of platform wars suggests a tendency toward closure once dominance is established. Monitoring the developer documentation for these tools will be essential to gauge the openness of the new ecosystem.

Ultimately, this launch is a bellwether for the industry. It shows that AI is moving from the cloud to the edge, carrying both immense promise and significant risk. The technology is shipping, not just promised, which separates it from the vaporware that plagues the sector. However, the success of this initiative hinges on the invisible work of security engineers and red teamers who ensure that the intelligence layer remains robust against the inevitable attempts to break it. The future of television is smart, but it must also be secure.