Apple is diversifying its smart glasses strategy by testing at least four premium frame styles to challenge Meta’s dominance. By prioritizing high-finish materials and aesthetic variety, Apple aims to transition wearable AI from a niche gadget to a mainstream fashion accessory integrated into the broader Apple Intelligence ecosystem.

This isn’t a mere exercise in industrial design. It is a calculated strike at the “glasshole” stigma that has haunted head-mounted displays for a decade. By borrowing the launch playbook of the 2015 Apple Watch, which shipped in multiple materials and styles, Apple is attempting to decouple the hardware’s utility from its appearance. The bet is that consumers will tolerate a camera on their face if the frame looks like a luxury accessory from a boutique in Milan rather than a piece of plastic from a lab in Cupertino.

The real battle, however, isn’t happening on the surface of the frames. It is happening in the millimeters of space between the temple and the wearer’s skull.

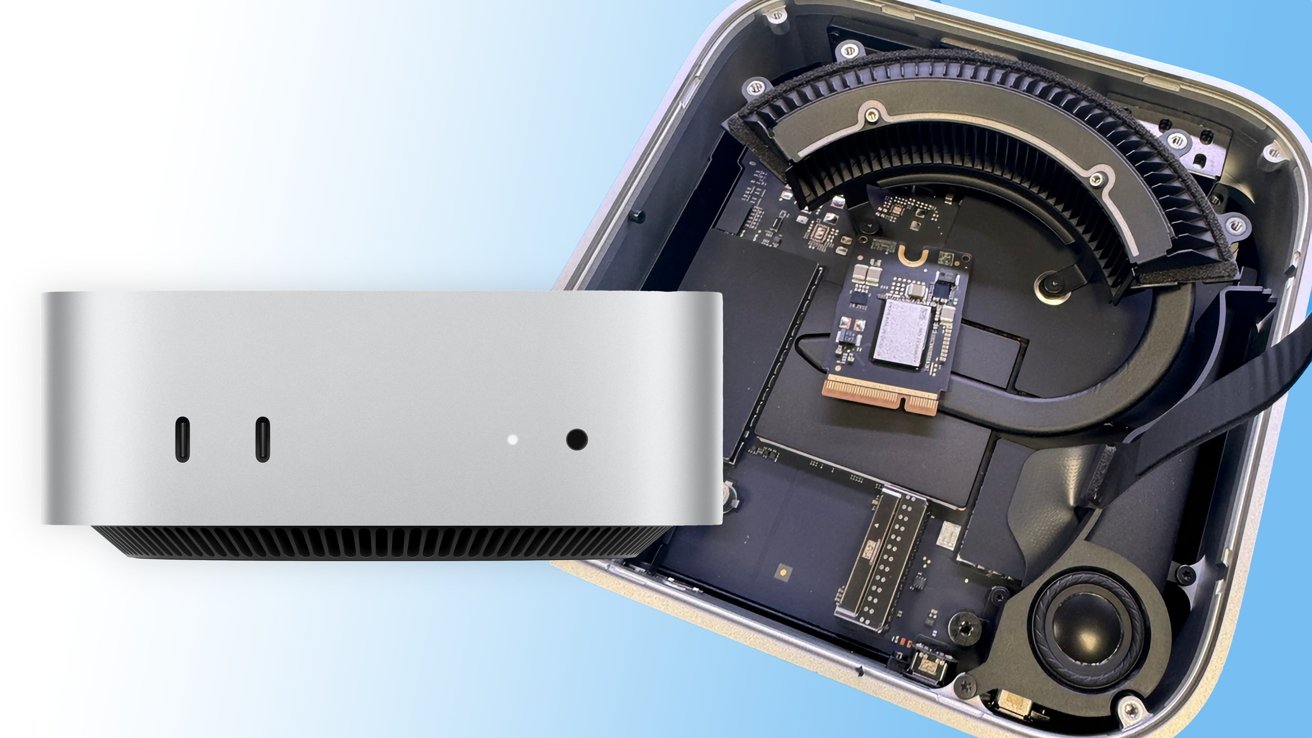

The Thermal Paradox of Premium Materials

Integrating a high-performance SoC (System on Chip) into a lightweight frame creates a brutal engineering trade-off: thermal throttling versus aesthetic slimness. Premium materials like titanium or high-grade acetate are excellent for luxury appeal, but they are notoriously poor at dissipating heat compared to aluminum or specialized ceramics. If Apple pushes an NPU (Neural Processing Unit) to handle real-time multimodal AI—essentially “seeing” and “interpreting” the world in a continuous stream—the frames risk becoming uncomfortably warm against the temple.
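To put rough numbers on that trade-off, the sketch below uses the standard one-dimensional conduction model, R = L / (k · A) and ΔT = P · R. Everything specific in it is an assumption for illustration: the conductivities are approximate textbook values, and the temple-arm dimensions are hypothetical, not Apple specifications.

```python
# Approximate room-temperature thermal conductivities in W/(m*K).
# Textbook ballpark figures; real alloys and plastics vary.
CONDUCTIVITY_W_PER_M_K = {
    "aluminum": 205.0,
    "titanium": 22.0,
    "polycarbonate": 0.2,
}

# Hypothetical temple arm: 50 mm long, 2 mm x 10 mm cross-section.
ARM_LENGTH_M = 0.05
ARM_AREA_M2 = 0.002 * 0.010


def conduction_resistance(length_m, area_m2, k_w_per_m_k):
    """Thermal resistance R = L / (k * A) of a uniform bar, in K/W."""
    return length_m / (k_w_per_m_k * area_m2)


def temperature_rise(power_w, resistance_k_per_w):
    """Steady-state temperature difference across the bar, dT = P * R."""
    return power_w * resistance_k_per_w


if __name__ == "__main__":
    for material, k in CONDUCTIVITY_W_PER_M_K.items():
        r = conduction_resistance(ARM_LENGTH_M, ARM_AREA_M2, k)
        rise = temperature_rise(0.1, r)  # 0.1 W is an illustrative load
        print(f"{material:>13}: R = {r:10.1f} K/W, dT at 0.1 W = {rise:8.1f} K")
```

A higher resistance along the arm means heat stays concentrated near the chip instead of spreading out. The model ignores convection to air and conduction into skin, so it only ranks materials; it does not predict real surface temperatures.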

To solve this, Apple is likely leveraging a split-processing architecture. By offloading the heaviest compute loads to the paired iPhone via a low-latency wireless link, the glasses can maintain a slim profile while the phone handles the heavy lifting of Core ML execution. This minimizes the power draw on the glasses’ internal battery, which is physically limited by the thinness of the frame arms.
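The offloading decision described above can be sketched as a simple placement rule. This is a minimal illustration, not Apple's scheduler: the `Task` fields, the `choose_target` function, and all numeric values are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Task:
    name: str
    local_power_mw: float    # extra power drawn if run on the glasses
    local_latency_ms: float  # compute time on the glasses
    phone_latency_ms: float  # compute time on the paired phone
    payload_kb: float        # data sent to the phone, in kilobytes


def offload_latency_ms(task, link_kbps, link_rtt_ms):
    """Link round trip plus payload transfer time plus phone compute time."""
    transfer_ms = task.payload_kb * 8.0 / link_kbps * 1000.0
    return link_rtt_ms + transfer_ms + task.phone_latency_ms


def choose_target(task, power_budget_mw, deadline_ms, link_kbps, link_rtt_ms):
    """Prefer the glasses when the task fits their power and latency limits,
    fall back to the phone when the link is fast enough, otherwise defer."""
    fits_power = task.local_power_mw <= power_budget_mw
    if fits_power and task.local_latency_ms <= deadline_ms:
        return "glasses"
    if offload_latency_ms(task, link_kbps, link_rtt_ms) <= deadline_ms:
        return "phone"
    return "defer"


# Illustrative tasks; the numbers are made up for the example.
wake_word = Task("wake_word", 5.0, 10.0, 5.0, 1.0)
scene_caption = Task("scene_caption", 800.0, 400.0, 60.0, 20.0)
```

With a 50 mW budget and a 200 ms deadline, the small task stays on the glasses, while the heavy one goes to the phone only if the link can move its payload in time.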

It is a delicate dance of milliwatts.

“The primary constraint for the next generation of wearables isn’t battery capacity—it’s thermal density. You cannot put a high-wattage processor two millimeters from a human temple without sophisticated heat spreading or extreme offloading.” — Marcus Thorne, Lead Hardware Architect at NexaCore Systems

Multimodal AI: Moving Beyond the Audio-Only Loop

While the Ray-Ban Meta glasses have found success with a “hear-and-speak” AI model, Apple is aiming for a higher tier of spatial awareness. The goal is an integration of Apple Intelligence that doesn’t just respond to prompts but anticipates needs based on visual context. This requires a sophisticated pipeline: a low-power camera sensor feeds a specialized ISP (Image Signal Processor), whose output then triggers an on-device LLM (Large Language Model) to identify objects or text in the user’s field of view.
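One common way to keep such a pipeline within a small power budget is to gate the expensive model behind a cheap change detector, so it only runs when the scene actually changes. The sketch below illustrates that idea under stated assumptions: frames are flat lists of pixel intensities, `describe` is a stand-in for the model call, and the threshold is arbitrary. Nothing here reflects Apple's actual pipeline.

```python
def mean_abs_diff(frame_a, frame_b):
    """Average absolute per-pixel difference between two equal-sized frames."""
    return sum(abs(a - b) for a, b in zip(frame_a, frame_b)) / len(frame_a)


def run_gated_pipeline(frames, describe, threshold=10.0):
    """Call describe(frame) only when a frame differs enough from the last
    frame that was sent to the model. Returns (frame_index, result) pairs."""
    results = []
    last_sent = None
    for index, frame in enumerate(frames):
        changed = last_sent is None or mean_abs_diff(frame, last_sent) >= threshold
        if changed:
            results.append((index, describe(frame)))
            last_sent = frame
    return results


# Four tiny "frames": two nearly identical dark ones, then two bright ones.
frames = [
    [0, 0, 0, 0],
    [1, 0, 0, 0],
    [100, 100, 100, 100],
    [101, 100, 100, 100],
]

# Stand-in for the expensive model: just sums the pixels.
invocations = run_gated_pipeline(frames, describe=sum)
```

Here the model runs on frames 0 and 2 only; the near-duplicate frames 1 and 3 are skipped, which is where the power saving would come from.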

This is where the gap between Apple and its rivals widens. Apple isn’t just building a gadget; it is building a sensor array for its ecosystem. By utilizing advanced computer vision frameworks, these glasses could act as a peripheral for the Vision Pro, allowing users to move from a lightweight “glance” interface during the day to a full “immersive” experience at night.

The 30-Second Verdict: Apple vs. Meta

- Meta: Focuses on social integration and “AI as a companion.” Lower price point, plastic-heavy builds.

- Apple: Focuses on “AI as an invisible utility” and luxury positioning. Higher price point, premium materials.

- The Edge: Apple’s vertical integration (Silicon + OS + Hardware) allows for tighter latency control than Meta’s reliance on third-party chipsets.

The Luxury Moat and the Ecosystem Trap

By offering four distinct styles, Apple is employing a classic psychological pricing and positioning strategy. They aren’t selling a tool; they are selling an identity. This creates a “luxury moat” that makes it harder for open-source alternatives or cheaper competitors to gain a foothold in the professional or high-fashion demographics.

But the deeper play is platform lock-in. Once a user integrates their daily visual and auditory stream into the Apple Intelligence cloud, the friction of switching to an Android-based wearable becomes nearly insurmountable. The data generated by these glasses—everything from where you look to how often you interact with specific physical objects—will feed back into Apple’s user profile, refining the AI’s predictive capabilities in a way that no standalone device can match.

This raises significant concerns regarding data sovereignty and “always-on” surveillance.

From a cybersecurity perspective, the attack surface expands considerably. We are no longer talking about a device in a pocket, but a device with a camera and microphone permanently positioned at eye level. End-to-end encryption for the visual stream is non-negotiable, yet the potential for “zero-day” exploits in the proprietary wireless protocol between the glasses and the iPhone remains a critical vulnerability.

Hardware Benchmarks: The Fight for Milliseconds

To understand the technical leap required, we must look at the latency requirements for “invisible” AI. For a user to feel that the AI is reacting in real-time to the world, the round-trip latency (Camera → SoC → Cloud/Local LLM → Audio Feedback) must stay under 200 milliseconds. Anything higher feels like a laggy voice assistant from 2015.
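The 200 ms figure is easiest to reason about as a budget split across the stages of that chain. The sketch below adds up per-stage latencies and reports the headroom and the slowest stage. The stage names and millisecond values are illustrative assumptions, not measurements of any shipping device.

```python
BUDGET_MS = 200.0  # end-to-end target named in the text


def check_budget(stage_latencies_ms, budget_ms=BUDGET_MS):
    """Return (total_ms, headroom_ms, slowest_stage) for a latency breakdown.
    Negative headroom means the pipeline misses the budget."""
    total_ms = sum(stage_latencies_ms.values())
    slowest_stage = max(stage_latencies_ms, key=stage_latencies_ms.get)
    return total_ms, budget_ms - total_ms, slowest_stage


# Hypothetical breakdown of Camera -> SoC -> LLM -> Audio Feedback.
example_stages = {
    "capture_and_isp": 30.0,
    "wireless_link": 20.0,
    "llm_inference": 90.0,
    "audio_feedback": 40.0,
}

total, headroom, slowest = check_budget(example_stages)
```

In this made-up breakdown the pipeline fits with 20 ms to spare and inference dominates, which is why the choice between on-device and cloud inference matters most for the target.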

| Metric | Current Meta Ray-Bans (Est.) | Apple Glasses Target (Projected) | Industry Gold Standard |
|---|---|---|---|
| Processing Architecture | Qualcomm Snapdragon AR1 | Custom Apple Silicon (S-Series/H-Series) | Dedicated Neural Engine |
| Material Thermal Conductivity | Low (Polycarbonate) | Medium-High (Titanium/Ceramic) | Active Cooling (Not viable) |
| AI Latency | ~300-500ms (Cloud dependent) | <200ms (Hybrid Local/Cloud) | <100ms (On-device) |
| Ecosystem Linkage | App-based / Meta AI | Deep OS Integration / Siri | Universal API Access |

Apple’s insistence on premium materials may not be just about vanity. A metal chassis can double as a heat spreader: titanium conducts heat poorly next to aluminum, but far better than polycarbonate, which gives a wearable NPU more thermal headroom. If Apple can solve the thermal equation while maintaining a “chic” aesthetic, it won’t just win the hardware war; it will redefine how we interface with the digital world.

The industry is watching. The code is written. Now, it’s just a matter of whether the frames fit.