April’s Celestial Showcase: From Pink Moon to Potential Comet and the Tech Enabling Our View

April 2026 promises a vibrant astronomical display, beginning with a full “Pink Moon” on April 1st that coincides with the launch of NASA’s Artemis II lunar mission. Observers can also anticipate a potential glimpse of Comet MAPS (C/2026 A1) as it nears the sun, the peak of the Lyrid meteor shower, and a dazzling Venus in the evening sky. This confluence of events highlights not only the beauty of the cosmos but also the increasingly sophisticated technology, from advanced image sensors to AI-powered noise reduction, that allows us to witness and analyze these phenomena.

The Artemis II Launch and the Demand for Real-Time Data Processing

The launch of Artemis II this week is a pivotal moment, not just for lunar exploration but for demonstrating the capabilities of edge computing in extreme environments. The mission will generate terabytes of data (high-resolution imagery, sensor readings, and telemetry), much of which must be processed in near real time. The goal is not simply to send data back to Earth; it is to enable autonomous decision-making onboard the spacecraft. Onboard processing for missions of this class depends on radiation-hardened processors designed to withstand the harsh conditions of space, and the industry is increasingly turning to radiation-hardened System-on-Chips (SoCs) built on the ARM architecture. This shift is significant: historically, space missions favored radiation-hardened PowerPC processors, but ARM’s power efficiency and growing performance are making it an increasingly attractive alternative. Automotive applications offer a parallel, as the same demand for low power and high compute is driving innovation in both sectors.
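A quick calculation shows why onboard processing matters when data volumes reach terabytes. The sketch below computes how long a given volume takes to downlink at a constant link rate; the 10 TB volume and both link rates are illustrative assumptions, not published Artemis II figures.

```python
# Illustrative back-of-envelope calculation: time to downlink a data volume at a
# constant link rate. The volume and rates below are assumptions for
# illustration, not published Artemis II figures.

TB = 1e12  # decimal terabyte, in bytes


def downlink_hours(volume_bytes: float, rate_bits_per_s: float) -> float:
    """Hours needed to send volume_bytes over a link running at rate_bits_per_s."""
    if rate_bits_per_s <= 0:
        raise ValueError("link rate must be positive")
    return volume_bytes * 8 / rate_bits_per_s / 3600


if __name__ == "__main__":
    volume = 10 * TB  # assumed: matches the "10+ TB" order of magnitude
    for label, rate in [("assumed 20 Mbit/s link", 20e6),
                        ("assumed 200 Mbit/s link", 200e6)]:
        print(f"{label}: {downlink_hours(volume, rate):,.0f} hours")
```

At these assumed rates, 10 TB takes roughly 1,100 hours at 20 Mbit/s and about 110 hours at 200 Mbit/s, before accounting for protocol overhead or gaps in ground-station coverage. Filtering and compressing data onboard shrinks that backlog.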

What This Means for Satellite Communications

The data throughput requirements of Artemis II are pushing the boundaries of satellite communication technology. Expect to see increased adoption of Ka-band and even optical communication systems (lasercom) to handle the bandwidth demands. Optical communication, in particular, offers significantly higher data rates and improved security compared to traditional radio frequency (RF) systems. However, it requires precise pointing and tracking, necessitating advanced algorithms and highly accurate attitude control systems.
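The pointing challenge follows from diffraction: a beam's full divergence angle is roughly 2.44 × wavelength / aperture diameter (the first-null width of an Airy pattern). The sketch below compares beam footprints at lunar distance. The 10 cm optical terminal at 1550 nm and the 2 m Ka-band dish at 32 GHz are assumed values, and real antennas deviate from the ideal Airy pattern, so treat the results as rough.

```python
# Diffraction-limited beam footprint at a distance, using the Airy first-null
# full angle of about 2.44 * wavelength / aperture. Aperture sizes and
# frequencies below are illustrative assumptions.

SPEED_OF_LIGHT = 299_792_458.0  # m/s
EARTH_MOON_DISTANCE = 3.844e8   # mean Earth-Moon distance, m


def beam_full_angle_rad(wavelength_m: float, aperture_m: float) -> float:
    """Approximate full divergence angle (radians) of a diffraction-limited beam."""
    return 2.44 * wavelength_m / aperture_m


def spot_diameter_m(wavelength_m: float, aperture_m: float, distance_m: float) -> float:
    """Approximate beam footprint diameter (meters) at the given distance."""
    return beam_full_angle_rad(wavelength_m, aperture_m) * distance_m


if __name__ == "__main__":
    # Assumed: 10 cm optical terminal at 1550 nm; 2 m dish at 32 GHz (Ka-band).
    optical = spot_diameter_m(1550e-9, 0.10, EARTH_MOON_DISTANCE)
    ka_band = spot_diameter_m(SPEED_OF_LIGHT / 32e9, 2.0, EARTH_MOON_DISTANCE)
    print(f"optical spot ~ {optical / 1e3:,.1f} km, Ka-band spot ~ {ka_band / 1e3:,.0f} km")
```

Under these assumptions the optical beam is only about 14.5 km wide at the Moon's distance, versus roughly 4,400 km for the Ka-band beam. Concentrating power into such a narrow beam is what enables the higher data rates, and it is also why the terminal must be pointed so precisely.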

Comet MAPS: A Test Case for Solar Observatory Image Processing

Comet C/2026 A1 (MAPS) presents a unique challenge and opportunity. As a Kreutz sungrazer, it will pass extremely close to the sun, potentially becoming exceptionally bright or disintegrating entirely. Observing it requires specialized equipment and sophisticated image processing. The key challenge is separating the comet’s faint signal from the overwhelming glare of the sun, and this is where advances in computational photography and AI come into play. Techniques such as image stacking, wavelet transforms, and deep learning-based noise reduction are crucial. Solar Orbiter and the Parker Solar Probe already employ similar techniques, but MAPS offers a closer, potentially brighter target. Success in observing MAPS will also depend heavily on the image sensors used, specifically their dynamic range and low-light sensitivity. Modern CMOS sensors, which combine high frame rates with low noise, are well suited to this task.
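Image stacking is the simplest of the techniques named above. The sketch below combines frames pixel by pixel with the median, which rejects transient outliers such as cosmic-ray hits and reduces random noise, and then subtracts a background model. It assumes the frames are already aligned on the comet and that a glare model is supplied from elsewhere; wavelet filtering and learned denoisers are not shown.

```python
from statistics import median

Frame = list[list[float]]


def median_stack(frames: list[Frame]) -> Frame:
    """Combine pre-aligned frames pixel by pixel using the median.

    The median suppresses transient outliers (cosmic-ray hits, satellite
    trails) and reduces random noise relative to any single frame.
    """
    if not frames:
        raise ValueError("need at least one frame")
    rows, cols = len(frames[0]), len(frames[0][0])
    for frame in frames:
        if len(frame) != rows or any(len(row) != cols for row in frame):
            raise ValueError("all frames must have the same shape")
    return [[median(frame[y][x] for frame in frames) for x in range(cols)]
            for y in range(rows)]


def subtract_background(frame: Frame, background: Frame) -> Frame:
    """Remove a background model (e.g. an estimate of solar glare) from a frame."""
    return [[p - b for p, b in zip(row, bg_row)]
            for row, bg_row in zip(frame, background)]


if __name__ == "__main__":
    frames = [
        [[10, 10], [10, 10]],
        [[11, 9], [10, 500]],  # bottom-right pixel hit by a cosmic ray
        [[9, 11], [10, 10]],
    ]
    print(median_stack(frames))  # [[10, 10], [10, 10]]
```

A mean would let the cosmic-ray value of 500 leak into the result; the median discards it, at the cost of somewhat less noise reduction than averaging when the data are clean.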

“The ability to predict the behavior of sungrazing comets is still limited. We rely heavily on real-time data analysis and adaptive algorithms to track their trajectory and brightness. The advancements in AI-powered image processing are proving invaluable in this regard.” – Dr. Emily Carter, Chief Scientist, Space Imaging Technologies.

Lyrid Meteor Shower and the Rise of Automated Detection Systems

The Lyrid meteor shower, peaking around April 21-22, offers a more predictable astronomical event. However, even here, technology is transforming how we observe and study meteors. Traditional visual observation is being supplemented by automated detection systems utilizing all-sky cameras and machine learning algorithms. These systems can detect fainter meteors and provide more accurate measurements of their trajectory and velocity. The data collected is used to study the origin and composition of meteoroids, providing insights into the early solar system. The software powering these systems often leverages open-source libraries like OpenCV for image processing and TensorFlow or PyTorch for machine learning. OpenCV, in particular, provides a comprehensive suite of tools for computer vision tasks, making it a popular choice for astronomical applications.
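The core of an automated detector can be sketched without any machine learning: difference consecutive all-sky frames, keep pixels that brightened sharply, and test whether they form a long, thin streak rather than a round blob. The stdlib-only sketch below uses the eigenvalues of the pixel-position covariance matrix for that test; the function names and thresholds are hypothetical, and a production system would more likely use OpenCV routines such as `cv2.absdiff` and `cv2.HoughLinesP`, followed by a trained classifier.

```python
import math

Frame = list[list[float]]


def brightened_pixels(prev: Frame, curr: Frame, threshold: float) -> list[tuple[int, int]]:
    """(x, y) coordinates of pixels that brightened by more than threshold."""
    return [(x, y)
            for y, (row_prev, row_curr) in enumerate(zip(prev, curr))
            for x, (p, c) in enumerate(zip(row_prev, row_curr))
            if c - p > threshold]


def streak_angle_deg(points, min_pixels=5, min_elongation=5.0):
    """Angle of the principal axis if the points form an elongated streak, else None.

    Uses the eigenvalues of the 2x2 covariance matrix of pixel positions: a
    meteor trail is long and thin (one dominant eigenvalue), while a star or
    noise blob is roughly round (two similar eigenvalues).
    """
    n = len(points)
    if n < min_pixels:
        return None
    mx = sum(x for x, _ in points) / n
    my = sum(y for _, y in points) / n
    sxx = sum((x - mx) ** 2 for x, _ in points) / n
    syy = sum((y - my) ** 2 for _, y in points) / n
    sxy = sum((x - mx) * (y - my) for x, y in points) / n
    half_trace = (sxx + syy) / 2
    disc = math.sqrt(max(half_trace ** 2 - (sxx * syy - sxy ** 2), 0.0))
    major, minor = half_trace + disc, half_trace - disc
    if major == 0 or (minor > 0 and major / minor < min_elongation):
        return None  # too few distinct positions or too round to be a trail
    # Image coordinates: x increases to the right, y increases downward.
    return math.degrees(0.5 * math.atan2(2 * sxy, sxx - syy))
```

A diagonal trail of ten pixels returns an angle of about 45 degrees, while a compact 3 × 3 patch returns `None`. The streak direction is the starting point for estimating a meteor's trajectory and, with multiple stations, its radiant.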

The Role of Citizen Science and Distributed Computing

Citizen science initiatives, such as those coordinated by the Zooniverse platform, play an increasingly important role in analyzing the vast amounts of data these automated systems generate. Volunteers help identify meteors in images and classify their characteristics, contributing to a larger scientific effort. Zooniverse demonstrates the power of distributed, crowdsourced analysis and the potential for engaging the public in scientific research.
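Volunteer classifications only become useful once they are combined into a consensus. The sketch below shows one simple approach, a majority vote with a minimum number of votes and an agreement threshold. It is a hypothetical illustration, not Zooniverse's actual aggregation pipeline, and the labels and thresholds are assumptions.

```python
from collections import Counter
from typing import Optional


def consensus_label(votes: list[str], min_votes: int = 3,
                    min_agreement: float = 0.6) -> Optional[str]:
    """Return the majority label for one image, or None if there is no consensus.

    With min_agreement above 0.5, two labels can never both qualify, so ties
    always come back as None.
    """
    if len(votes) < min_votes:
        return None  # not enough volunteers have seen this image yet
    label, count = Counter(votes).most_common(1)[0]
    return label if count / len(votes) >= min_agreement else None


def aggregate(classifications: dict[str, list[str]], **kwargs) -> dict[str, Optional[str]]:
    """Apply consensus_label to every image (subject) ID."""
    return {subject: consensus_label(votes, **kwargs)
            for subject, votes in classifications.items()}
```

Images that come back as `None` can be shown to more volunteers or routed to an expert, so effort concentrates on the genuinely ambiguous cases.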

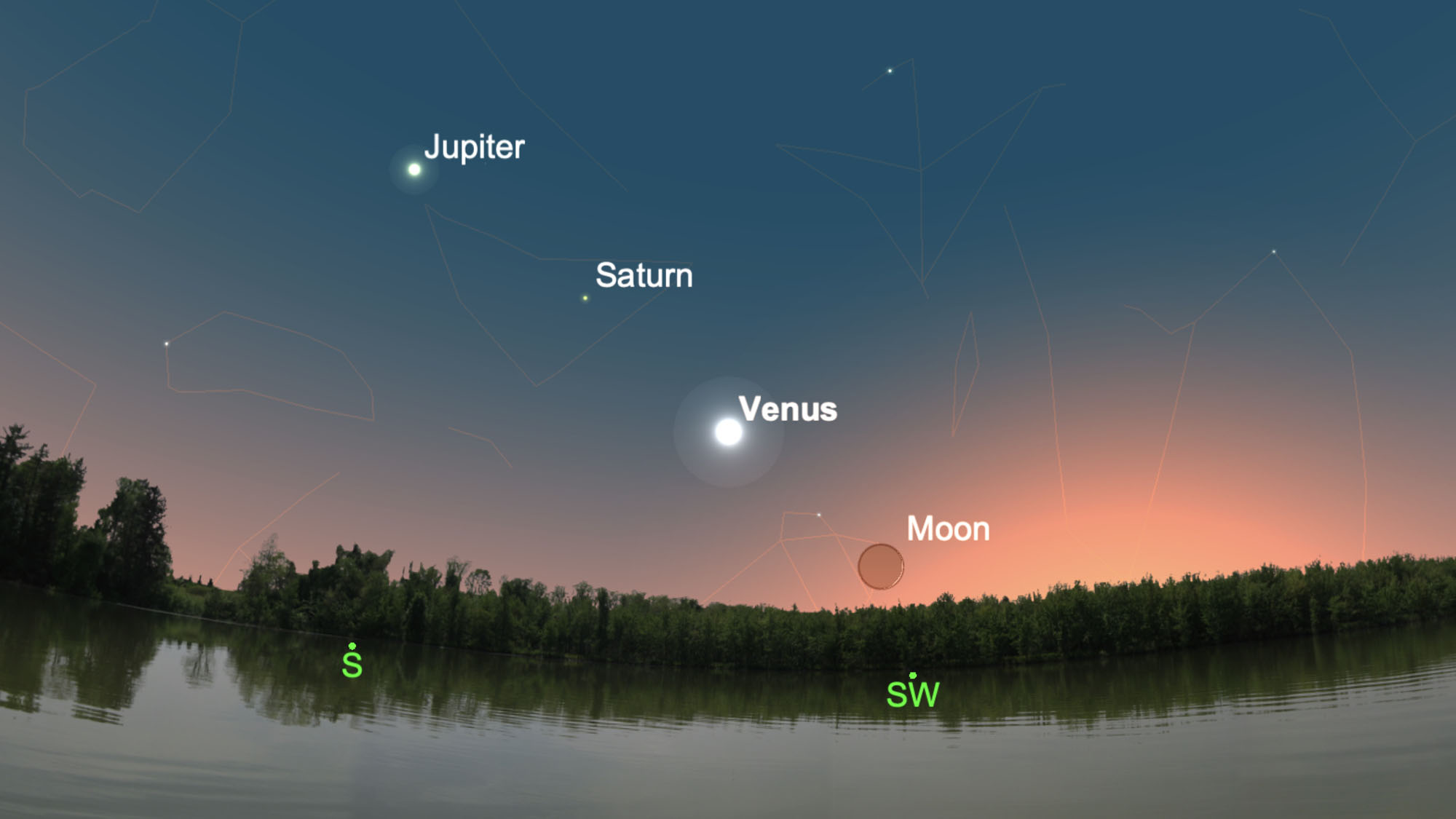

Venus’s Evening Appearance and the Challenges of Atmospheric Mapping

Venus’s bright appearance in the western sky in late April provides an opportunity for both visual observation and scientific study. However, studying Venus’s atmosphere is notoriously difficult because of its dense cloud cover. Radar mapping, most notably by the Magellan spacecraft, has provided detailed images of the surface, but understanding atmospheric dynamics requires different techniques. Millimeter- and submillimeter-wave observations of molecular spectral lines are used to measure temperatures and wind patterns in the mesosphere above the cloud deck. The Atacama Large Millimeter/submillimeter Array (ALMA) in Chile is a key instrument for these observations; its high sensitivity and angular resolution allow astronomers to map the Venusian upper atmosphere in unprecedented detail. Future missions, such as NASA’s VERITAS and DAVINCI+, will build on these observations, providing even more comprehensive data on Venus’s atmosphere and surface.
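Wind measurements of this kind rest on the Doppler effect: gas moving along the line of sight shifts a spectral line away from its rest frequency. The sketch below converts an observed frequency to a line-of-sight velocity using the radio convention. The carbon monoxide J=2-1 line (rest frequency 230.538 GHz) is chosen as an example; a real analysis would also remove the Earth-Venus relative motion and the planet's rotation, and repeat the measurement across the disk to build a wind map.

```python
SPEED_OF_LIGHT = 299_792_458.0  # m/s
CO_J2_1_REST_HZ = 230.538e9      # rest frequency of the CO J=2-1 line (example choice)


def line_of_sight_velocity(observed_hz: float, rest_hz: float = CO_J2_1_REST_HZ) -> float:
    """Line-of-sight velocity in m/s from a Doppler-shifted spectral line.

    Uses the radio convention v = c * (f_rest - f_obs) / f_rest, which is
    accurate when v is much smaller than c. Positive means the gas is moving
    away from the observer (the line is shifted to lower frequency).
    """
    return SPEED_OF_LIGHT * (rest_hz - observed_hz) / rest_hz


if __name__ == "__main__":
    # A line observed about 76.9 kHz below its rest frequency.
    shifted = CO_J2_1_REST_HZ - 76.9e3
    print(f"{line_of_sight_velocity(shifted):.1f} m/s")
```

A shift of about 77 kHz out of 230 GHz corresponds to roughly 100 m/s, which illustrates the spectral resolution needed to measure Venus's mesospheric winds.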

Data Integrity and the Fight Against Deepfakes in Astronomy

As astronomical images become increasingly detailed and readily available, the risk of manipulation and of deepfakes grows, so ensuring data integrity is paramount. This involves robust authentication mechanisms, such as digital signatures and blockchain-based provenance tracking, together with algorithms that detect anomalies and inconsistencies in astronomical images. Image forensics, the detection and prevention of image manipulation, is emerging as a concern within astronomy, and principles from cybersecurity such as cryptographic hashing and digital watermarking are being adapted for astronomical applications.
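The hashing idea behind provenance tracking can be shown with a simple hash chain: each processing step records the SHA-256 hash of the resulting image and the hash of the previous record, so altering any image or any earlier record breaks verification. The record layout and function names below are hypothetical, not a standard provenance format.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _entry_hash(body: dict) -> str:
    """SHA-256 over a canonical JSON encoding of the entry body."""
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()


def append_entry(chain: list[dict], image_bytes: bytes, note: str) -> None:
    """Record an image's hash, a processing note, and a link to the previous entry."""
    body = {
        "image_sha256": hashlib.sha256(image_bytes).hexdigest(),
        "note": note,
        "prev": chain[-1]["hash"] if chain else GENESIS,
    }
    chain.append({**body, "hash": _entry_hash(body)})


def verify_chain(chain: list[dict], images: list[bytes]) -> bool:
    """Check every link, every entry hash, and every image against its record."""
    if len(chain) != len(images):
        return False
    prev = GENESIS
    for entry, image_bytes in zip(chain, images):
        body = {k: entry[k] for k in ("image_sha256", "note", "prev")}
        if entry["prev"] != prev or _entry_hash(body) != entry["hash"]:
            return False
        if hashlib.sha256(image_bytes).hexdigest() != entry["image_sha256"]:
            return False
        prev = entry["hash"]
    return True
```

On its own, a hash chain only detects tampering if the latest hash is kept somewhere an attacker cannot rewrite. Signing each entry with a digital signature, or anchoring the final hash in a public ledger, supplies that guarantee.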

| Observational Event | Key Technology | Data Volume (Estimated) |
|---|---|---|
| Artemis II Launch | Radiation-Hardened ARM SoCs, Ka-band/Optical Communication | 10+ Terabytes |
| Comet MAPS Observation | CMOS Image Sensors, AI-Powered Noise Reduction | 100+ Gigabytes |
| Lyrid Meteor Shower | All-Sky Cameras, Machine Learning Algorithms | 500+ Gigabytes |
| Venus Atmospheric Mapping | Millimeter-Wave Radiotelescopes (ALMA) | 200+ Gigabytes |

April’s night sky offers a compelling reminder of the universe’s grandeur and the technological prowess that allows us to explore it. From the launch of Artemis II to the potential sighting of Comet MAPS, each event presents unique challenges and opportunities for innovation. The convergence of astronomy and technology is not merely about capturing beautiful images; it’s about pushing the boundaries of scientific knowledge and our understanding of the cosmos.