Artemis II Achieves Successful Launch: Beyond the Headlines, a Triumph of Redundancy and Modern Flight Software

On April 2nd, 2026, at 14:57 UTC, NASA’s Artemis II mission successfully launched from Kennedy Space Center, beginning a planned lunar flyby. This crewed test flight, the first to carry astronauts aboard the Orion spacecraft, launched atop a Space Launch System (SLS) rocket and represents a critical step towards establishing a sustained human presence on the Moon. Beyond the celebratory imagery, the mission’s success hinges on advances in fault-tolerant systems, real-time data analytics, and a departure from legacy flight software architectures. This isn’t simply a repeat of Apollo; it’s a fundamentally different approach to deep-space exploration, built on decades of progress in computing and materials science.

The Shift from Apollo-Era Computing to Modern Redundancy

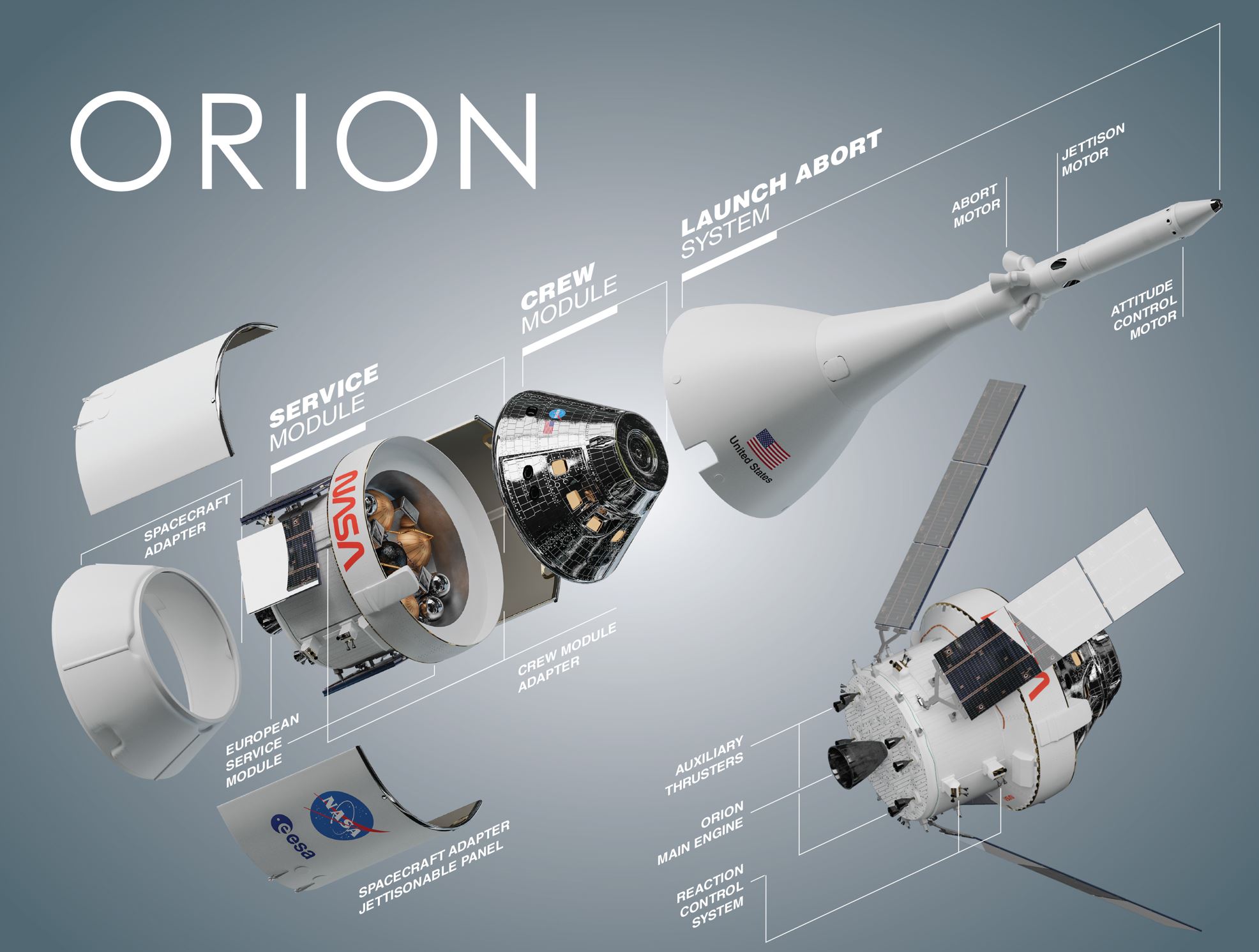

The Apollo missions, while groundbreaking, operated on computing power that pales in comparison to modern smartphones. The Apollo Guidance Computer (AGC) relied on a single, relatively slow processor. Artemis II, in contrast, employs a highly redundant system. The Orion spacecraft uses the Lockheed Martin Orion Flight Software (OFS), built around a radiation-hardened multi-core processor architecture. This isn’t just about raw processing speed; it’s about graceful degradation. Multiple processors operate in parallel, constantly cross-checking each other’s calculations. Should one processor fail, a very real possibility in the harsh radiation environment of space, the others seamlessly take over, preventing catastrophic system failure. The SLS rocket itself incorporates similar redundancy in its avionics, employing triple-redundant inertial reference units (IRUs) and flight computers. This level of redundancy is a direct response to lessons learned from past failures and a recognition of the inherent risks of deep-space travel.
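
One common way to realize the cross-checking described above is majority voting across redundant channels. The Python sketch below illustrates 2-out-of-3 voting, assuming three channels report the same quantity; the function name `vote_2oo3` and the tolerance value are hypothetical and are not drawn from the Orion or SLS flight software. A value is accepted when at least two channels agree within the tolerance, and a disagreeing third channel is flagged as suspect.

```python
from typing import List, Optional, Sequence, Tuple


def vote_2oo3(readings: Sequence[float],
              tol: float = 1e-3) -> Tuple[Optional[float], List[int]]:
    """Hypothetical 2-out-of-3 voter for three redundant channels.

    Returns (voted_value, suspect_channels). voted_value is None when no
    two channels agree within `tol`, i.e. the fault cannot be masked.
    """
    if len(readings) != 3:
        raise ValueError("expected exactly three channel readings")
    # Search for a pair of channels that agree within the tolerance.
    for i in range(3):
        for j in range(i + 1, 3):
            if abs(readings[i] - readings[j]) <= tol:
                voted = (readings[i] + readings[j]) / 2.0
                k = 3 - i - j  # index of the remaining channel
                suspects = [] if abs(readings[k] - voted) <= tol else [k]
                return voted, suspects
    # No two channels agree: report every channel as suspect.
    return None, [0, 1, 2]


if __name__ == "__main__":
    # Channels 0 and 1 agree; channel 2 has drifted and is flagged.
    print(vote_2oo3([10.0, 10.0005, 12.3]))
```

Averaging the agreeing pair is one design choice; mid-value selection (taking the median of the three readings) is a common alternative that masks a single faulty channel without an explicit agreement check.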

The OFS also represents a significant shift in software architecture. Instead of monolithic codebases, it’s built on a modular, object-oriented design. This allows for easier testing, debugging, and future upgrades. The software is written primarily in C and C++, leveraging real-time operating systems (RTOS) to ensure deterministic performance – crucial for critical flight control functions. The system incorporates advanced fault detection and isolation (FDI) algorithms, capable of identifying and mitigating anomalies before they escalate into major problems. This is a far cry from the manual troubleshooting often required during the Apollo era.
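
Fault detection and isolation is often built on residuals: the difference between what a sensor reports and what a model or a redundant source expects. The sketch below is a minimal, hypothetical monitor, not the OFS implementation; the class name, threshold, and persistence count are illustrative assumptions. It declares a fault only after the residual stays out of bounds for several consecutive samples, so a single noisy reading does not trigger isolation.

```python
from dataclasses import dataclass, field


@dataclass
class ResidualMonitor:
    """Hypothetical residual-based fault monitor for a single sensor channel."""

    threshold: float   # largest acceptable |measured - expected|
    persistence: int   # consecutive out-of-bounds samples needed to declare a fault
    count: int = field(default=0, init=False)
    isolated: bool = field(default=False, init=False)

    def update(self, measured: float, expected: float) -> bool:
        """Feed one sample; return True once the channel is isolated."""
        if self.isolated:
            return True  # latched: stays isolated until reset() is called
        if abs(measured - expected) > self.threshold:
            self.count += 1
        else:
            self.count = 0  # residual back in bounds, so restart the count
        if self.count >= self.persistence:
            self.isolated = True
        return self.isolated

    def reset(self) -> None:
        """Return the channel to service, e.g. after ground intervention."""
        self.count = 0
        self.isolated = False


if __name__ == "__main__":
    mon = ResidualMonitor(threshold=0.5, persistence=3)
    samples = [(10.1, 10.0), (11.0, 10.0), (10.0, 10.0),
               (11.0, 10.0), (11.2, 10.0), (11.1, 10.0)]
    # The isolated spike at sample 1 is ignored; three in a row isolate the channel.
    print([mon.update(m, e) for m, e in samples])  # [False, False, False, False, False, True]
```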

Data Analytics and the Real-Time Health Monitoring of Orion

Artemis II isn’t just about getting to the Moon and back; it’s about gathering data. The Orion spacecraft is equipped with a comprehensive suite of sensors, monitoring everything from temperature and pressure to radiation levels and structural integrity. This data is streamed back to mission control in real time, where automated analysis software, including machine learning models, can detect subtle anomalies that might indicate a developing problem. For example, changes in vibration patterns could signal a failing component, allowing engineers to take corrective action before a critical failure occurs. The data pipeline combines onboard processing with ground-based analytics, using cloud computing to handle the large data volumes generated during the mission. NASA’s Artemis program website details the sensor suite and data management systems.
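
As a simple illustration of anomaly detection on a telemetry stream such as vibration amplitude, the sketch below uses a rolling z-score rather than a machine learning model. It is a swapped-in statistical stand-in, and the function name, window size, and limit are assumptions. Each sample is compared against the mean and standard deviation of the preceding window of accepted samples.

```python
import math
from collections import deque
from typing import Deque, Iterable, List


def rolling_zscore_anomalies(samples: Iterable[float],
                             window: int = 20,
                             z_limit: float = 4.0) -> List[int]:
    """Return indices of samples lying more than `z_limit` standard
    deviations from the mean of the preceding `window` accepted samples."""
    history: Deque[float] = deque(maxlen=window)
    anomalies: List[int] = []
    for idx, x in enumerate(samples):
        if len(history) == window:
            mean = sum(history) / window
            std = math.sqrt(sum((h - mean) ** 2 for h in history) / window)
            if std == 0.0:
                is_anomaly = x != mean  # flat baseline: any change stands out
            else:
                is_anomaly = abs(x - mean) / std > z_limit
            if is_anomaly:
                anomalies.append(idx)
                continue  # keep the outlier out of the baseline
        history.append(x)
    return anomalies


if __name__ == "__main__":
    baseline = [1.0, 1.1, 0.9, 1.0] * 5      # 20 quiet vibration samples
    stream = baseline + [5.0, 1.0]           # one spike, then back to normal
    print(rolling_zscore_anomalies(stream))  # [20]
```

Flagged samples are kept out of the baseline so a single spike does not inflate the statistics used to judge the next sample; the trade-off is that this simple version will keep flagging every sample after a genuine, permanent level shift.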

This real-time health monitoring is particularly important for assessing the performance of the spacecraft’s heat shield. The heat shield is critical for protecting the Orion capsule during re-entry into Earth’s atmosphere, where it will experience temperatures exceeding 2,700 degrees Celsius. Sensors embedded within the heat shield monitor its temperature and ablation rate, providing engineers with valuable data to validate their models and ensure its effectiveness. The data collected during Artemis II will be used to refine the design of future heat shields, enabling even more ambitious missions to deeper space.
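
Ablation is often characterized by its surface recession rate: the loss of heat-shield thickness over time. Assuming a hypothetical sensor that reports remaining thickness at known times, the recession rate can be estimated as the negative least-squares slope of thickness against time, as in this sketch. The function name and the millimetre and second units are assumptions, not Orion’s actual instrumentation format.

```python
from typing import Sequence


def recession_rate(times_s: Sequence[float], thickness_mm: Sequence[float]) -> float:
    """Estimate surface recession rate (mm/s) as the negative least-squares
    slope of remaining-thickness readings over time."""
    n = len(times_s)
    if n != len(thickness_mm) or n < 2:
        raise ValueError("need at least two paired samples")
    t_mean = sum(times_s) / n
    y_mean = sum(thickness_mm) / n
    sxy = sum((t - t_mean) * (y - y_mean) for t, y in zip(times_s, thickness_mm))
    sxx = sum((t - t_mean) ** 2 for t in times_s)
    if sxx == 0:
        raise ValueError("sample times must not all be equal")
    slope = sxy / sxx  # mm of thickness per second; negative while ablating
    return -slope


if __name__ == "__main__":
    print(recession_rate([0, 10, 20, 30], [40.0, 39.5, 39.0, 38.5]))  # 0.05
```

A least-squares fit uses every reading rather than just the first and last, which makes the estimate less sensitive to noise in any single measurement.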

The Cybersecurity Implications of Deep-Space Missions

While often overlooked, cybersecurity is a growing concern for space missions. The Artemis II spacecraft, like any complex computer system, is vulnerable to attack. Although the risk of a direct hack from Earth is relatively low, other threats are real, from electromagnetic pulses (EMPs) that can disrupt electronics to malicious code introduced during the manufacturing process. NASA employs a multi-layered cybersecurity approach, including encryption, authentication, and intrusion detection systems. The OFS is designed with security in mind, incorporating features such as memory protection and code signing to prevent unauthorized modifications. However, the increasing complexity of space systems and the growing reliance on commercial off-the-shelf (COTS) components are creating new vulnerabilities.
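
Code signing lets flight software reject an update whose contents do not match what an authorized party produced. Production code signing uses public-key signatures, so the verifying system only needs a public key; Python’s standard library has no public-key signature API, so this sketch substitutes an HMAC-SHA256 tag computed with a shared secret key. The function names and key are hypothetical, and the sketch demonstrates only the integrity check, not NASA’s actual scheme.

```python
import hashlib
import hmac


def sign_image(image: bytes, key: bytes) -> bytes:
    """Produce an HMAC-SHA256 tag for a software image.

    Symmetric stand-in for the asymmetric signatures real code signing uses."""
    return hmac.new(key, image, hashlib.sha256).digest()


def verify_image(image: bytes, tag: bytes, key: bytes) -> bool:
    """Accept the image only if its tag matches the expected one.

    hmac.compare_digest compares in constant time to avoid timing leaks."""
    expected = hmac.new(key, image, hashlib.sha256).digest()
    return hmac.compare_digest(expected, tag)


if __name__ == "__main__":
    key = b"hypothetical-shared-key"
    image = b"flight software build 1.0"
    tag = sign_image(image, key)
    print(verify_image(image, tag, key))            # True
    print(verify_image(image + b"\x00", tag, key))  # False: image was altered
```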

“The attack surface for these missions is expanding exponentially. We’re moving beyond isolated systems to interconnected networks, and that introduces new risks. It’s no longer enough to just protect the software; we need to secure the entire supply chain, from component manufacturers to launch providers.” – Dr. Emily Carter, CTO of Stellar Cybernetics, a space cybersecurity firm.

The Artemis program is also exploring the use of blockchain technology to enhance the security and integrity of mission-critical data. Blockchain can provide a tamper-proof record of all software updates and configuration changes, making it more difficult for attackers to compromise the system. IEEE’s exploration of blockchain in aerospace highlights the potential benefits and challenges of this technology.
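
The tamper-evidence property attributed to blockchain comes from hash chaining: each entry stores a hash of its content together with the previous entry’s hash, so altering any past record breaks the chain. The sketch below shows only that hash chain for a log of software updates and configuration changes; it omits the distributed consensus that distinguishes a full blockchain, and the function names and record fields are hypothetical.

```python
import hashlib
import json
from typing import Any, Dict, List

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def entry_hash(prev_hash: str, record: Dict[str, str]) -> str:
    # Hash the previous link together with a canonical encoding of the record.
    payload = prev_hash + json.dumps(record, sort_keys=True)
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def append_entry(log: List[Dict[str, Any]], record: Dict[str, str]) -> None:
    prev = log[-1]["hash"] if log else GENESIS
    log.append({"record": record, "prev": prev, "hash": entry_hash(prev, record)})


def verify_chain(log: List[Dict[str, Any]]) -> bool:
    # Recompute every link; any edited record or reordered entry fails here.
    prev = GENESIS
    for entry in log:
        if entry["prev"] != prev or entry["hash"] != entry_hash(prev, entry["record"]):
            return False
        prev = entry["hash"]
    return True


if __name__ == "__main__":
    log: List[Dict[str, Any]] = []
    append_entry(log, {"type": "software_update", "version": "1.0.1"})
    append_entry(log, {"type": "config_change", "param": "gain", "value": "0.8"})
    print(verify_chain(log))                # True
    log[0]["record"]["version"] = "9.9.9"   # tamper with history
    print(verify_chain(log))                # False
```

Because anyone able to rewrite the entire log could also recompute every hash, the most recent hash needs to be stored or published somewhere an attacker cannot modify.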

The Broader Tech War: US Leadership and the Rise of Space-Based Computing

The success of Artemis II isn’t just a scientific achievement; it’s a demonstration of US technological leadership. The mission relies on cutting-edge technologies developed by American companies, from Lockheed Martin and Boeing to Intel and NVIDIA. This is particularly important in the context of the ongoing “chip wars” with China, which is also investing heavily in space exploration. China’s own lunar program, Chang’e, is rapidly advancing, and the competition between the two countries is likely to intensify in the years to come. The ability to develop and deploy advanced space-based computing systems is becoming a key strategic advantage.

The data generated by Artemis II will have applications far beyond space exploration. The algorithms developed for real-time health monitoring could be used to improve the reliability of critical infrastructure on Earth, such as power grids and transportation systems. The advancements in radiation-hardened computing could benefit industries such as healthcare and finance, where data security and reliability are paramount. The mission is a catalyst for innovation, driving advancements in a wide range of technologies that will have a positive impact on society.

The Artemis II mission represents a paradigm shift in space exploration. It’s a testament to the power of redundancy, real-time data analytics, and modern software engineering. It’s also a reminder that space exploration is not just about reaching for the stars; it’s about pushing the boundaries of human knowledge and innovation. The data collected during this mission will pave the way for a sustained human presence on the Moon and beyond, ushering in a new era of space exploration. Space.com’s Artemis II explainer provides a comprehensive overview of the mission’s objectives and timeline.

What This Means for Enterprise IT

The fault-tolerance and real-time monitoring techniques employed in Artemis II aren’t limited to space travel. Principles of redundancy, modular design, and predictive maintenance are directly applicable to enterprise IT infrastructure. Organizations can leverage these concepts to improve the reliability and resilience of their own systems, minimizing downtime and reducing the risk of data loss. The investment in advanced data analytics can also provide valuable insights into system performance, enabling proactive identification and resolution of potential problems.
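
A minimal enterprise analogue of the graceful degradation described for Orion is client-side failover across redundant service replicas. In this hypothetical sketch, the class name and failure limit are illustrative assumptions: each call goes to the first healthy replica, and a replica is skipped after a set number of consecutive errors.

```python
from typing import Callable, List


class FailoverPool:
    """Route each call to the first healthy replica (hypothetical policy)."""

    def __init__(self, replicas: List[Callable[[str], str]], max_failures: int = 2) -> None:
        self.replicas = replicas
        self.max_failures = max_failures
        self.failures = [0] * len(replicas)  # consecutive errors per replica

    def call(self, request: str) -> str:
        for i, replica in enumerate(self.replicas):
            if self.failures[i] >= self.max_failures:
                continue  # replica is considered unhealthy; skip it
            try:
                result = replica(request)
            except Exception:
                self.failures[i] += 1
                continue  # degrade gracefully to the next replica
            self.failures[i] = 0  # a success clears the error streak
            return result
        raise RuntimeError("no healthy replica available")


if __name__ == "__main__":
    def primary(req: str) -> str:
        raise ConnectionError("primary is down")

    def standby(req: str) -> str:
        return "standby handled " + req

    pool = FailoverPool([primary, standby], max_failures=2)
    for req in ["a", "b", "c"]:
        print(pool.call(req))
    print(pool.failures)  # [2, 0]: the primary is now skipped
```

A production version would also let demoted replicas recover, for example by retrying them after a cooldown period; this sketch keeps them out permanently for brevity.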