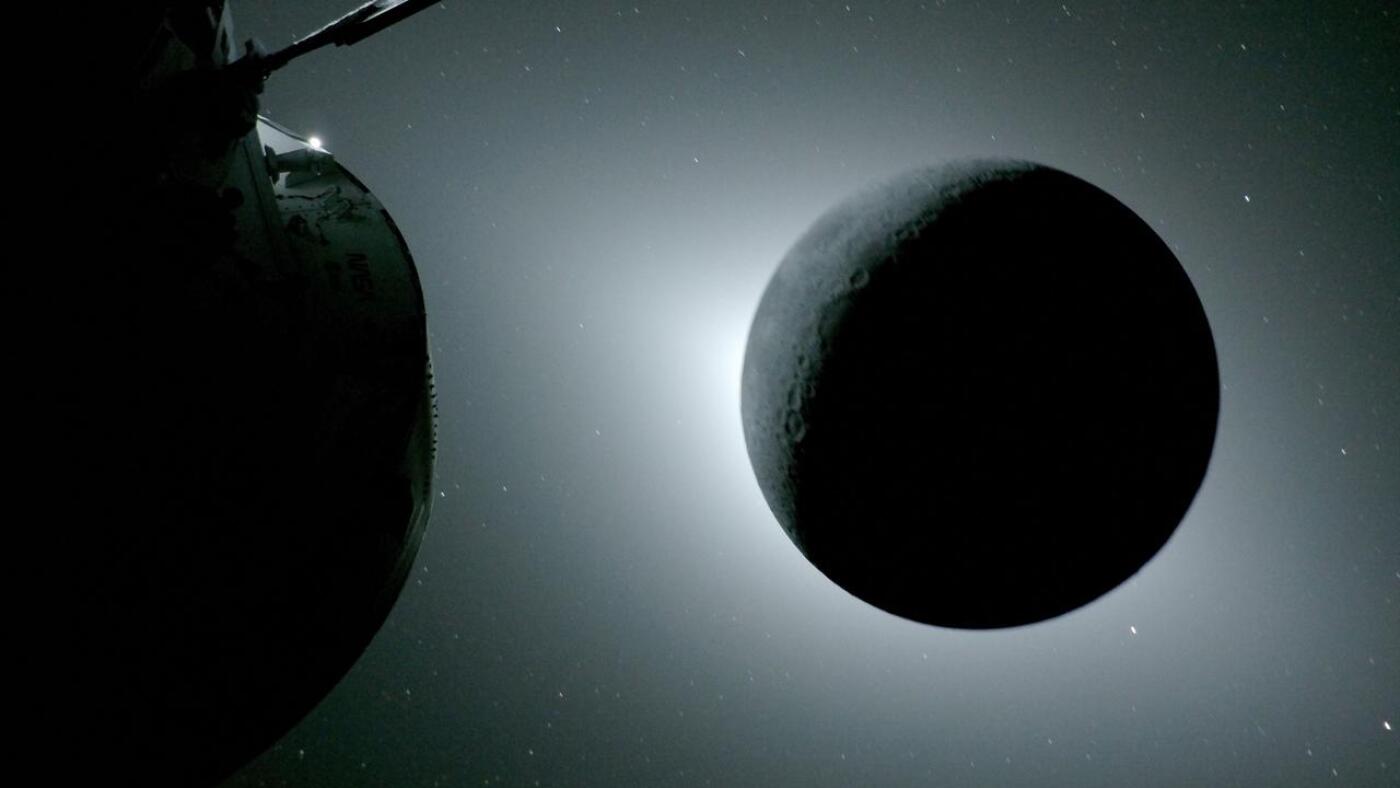

NASA’s Artemis II crew has successfully completed its lunar flyby, capturing unprecedented imagery of the Moon’s far side and a rare lunar solar eclipse. This mission validates the Orion spacecraft’s life-support systems and deep-space navigation, marking the first human return to lunar proximity since 1972.

Let’s be clear: the “jaw-dropping” photos of Earthsets and lunar craters are the PR gold, but for those of us tracking the actual telemetry, the real story is the edge-computing stack that made this possible. We aren’t just talking about cameras; we are talking about the massive data throughput required to transmit high-resolution imagery across 240,000 miles of vacuum without losing packet integrity. The Artemis II mission is essentially a stress test for the Artemis program’s long-term communication architecture.

The sheer scale of this achievement is often buried under the “beauty” of the photos. When the crew describes seeing parts of the Moon “never seen before,” they aren’t just talking about a different angle. They are talking about the biological and psychological impact of observing the lunar far side (a region shielded from Earth’s radio noise) while operating a complex, software-defined vehicle.

The Latency War: Deep Space Network vs. Real-Time Telemetry

One of the most overlooked technical hurdles in the Artemis II flyby is the “speed of light” problem. Even at light speed, a signal takes roughly 1.3 seconds to travel from the Moon to Earth. While that sounds negligible for a photo upload, it is a nightmare for real-time systems monitoring. To manage this, NASA relies on the Deep Space Network (DSN), a global array of massive radio antennas that hand off signals as the Earth rotates.
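The 1.3-second figure falls straight out of distance divided by the speed of light. A minimal sketch, assuming a mean Earth-Moon distance of 384,400 km (the real distance varies over the lunar orbit, and the function names here are purely illustrative):

```python
# Speed of light in vacuum (exact, by SI definition), in km/s.
C_KM_PER_S = 299_792.458

# Assumed mean Earth-Moon distance in km; the true value varies over the orbit.
MEAN_EARTH_MOON_KM = 384_400.0


def one_way_light_time(distance_km: float) -> float:
    """Return the one-way signal travel time in seconds over distance_km."""
    if distance_km < 0:
        raise ValueError("distance must be non-negative")
    return distance_km / C_KM_PER_S


def round_trip_time(distance_km: float) -> float:
    """Return the there-and-back delay in seconds (command plus acknowledgement)."""
    return 2.0 * one_way_light_time(distance_km)


if __name__ == "__main__":
    one_way = one_way_light_time(MEAN_EARTH_MOON_KM)
    both_ways = round_trip_time(MEAN_EARTH_MOON_KM)
    print(f"one-way: {one_way:.2f} s, round trip: {both_ways:.2f} s")
```

The round trip is what hurts for monitoring: any ground-issued command and its confirmation are separated by at least twice the one-way delay, before any processing time is counted.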

The “Information Gap” here is the transition from traditional bent-pipe communications to more autonomous, onboard processing. To handle the massive datasets generated by the new high-resolution lunar imaging, the Orion spacecraft relies on radiation-hardened processors that are, by modern standards, ancient. We are talking about hardware that prioritizes stability and fault tolerance over the raw clock speeds of an ARM-based M3 chip. The bottleneck isn’t the sensor; it’s the compute power available to compress those images before they hit the X-band transmitter.
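To see why compression before transmission matters, here is a rough sketch that compresses a synthetic frame and compares serialization times. Everything in it is an assumption for illustration: `zlib` stands in for whatever image compression the flight software actually uses, the gradient frame is not real imagery, and `ASSUMED_LINK_BPS` is a made-up rate, not a published Orion figure.

```python
import zlib

# Hypothetical downlink rate in bits per second; not a published Orion figure.
ASSUMED_LINK_BPS = 2_000_000


def transmit_seconds(payload: bytes, link_bps: int = ASSUMED_LINK_BPS) -> float:
    """Return the time to serialize payload onto a link of link_bps bits/s."""
    if link_bps <= 0:
        raise ValueError("link rate must be positive")
    return len(payload) * 8 / link_bps


def make_synthetic_frame(width: int = 512, height: int = 512) -> bytes:
    """Build a smooth 8-bit gradient as a stand-in for a real image."""
    return bytes((x + y) % 256 for y in range(height) for x in range(width))


if __name__ == "__main__":
    raw = make_synthetic_frame()
    packed = zlib.compress(raw, 9)  # lossless, general-purpose compression
    print(f"raw:    {len(raw)} bytes, {transmit_seconds(raw):.3f} s")
    print(f"packed: {len(packed)} bytes, {transmit_seconds(packed):.3f} s")
```

A smooth gradient compresses far better than a real cratered surface would, so treat the ratio as an upper bound on optimism. The point is the trade: every CPU cycle spent compressing on slow, hardened silicon buys back time on a narrow link.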

If you’re wondering why we don’t just put a modern GPU on the ship, the answer is “Single Event Upsets” (SEUs). A single high-energy proton hitting a 5nm transistor can flip a bit, which in the worst case can crash the flight computer. This is why space-grade hardware remains stuck in a cycle of “conservative engineering”: it uses larger process nodes (like 150nm or 65nm), whose bigger transistors are harder for a single particle strike to upset, so the hardware doesn’t literally fry in the Van Allen belts.
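Larger process nodes are only one line of defense. A common software and hardware pattern for surviving a flipped bit is triple modular redundancy (TMR): keep three copies and take a bitwise majority vote. The sketch below illustrates that general technique; it is not a description of how Orion’s flight computers are actually built, and the function names are hypothetical.

```python
def majority_vote(a: int, b: int, c: int) -> int:
    """Bitwise majority of three copies: each output bit matches at least two inputs."""
    return (a & b) | (a & c) | (b & c)


def flip_bit(word: int, bit: int) -> int:
    """Simulate a single event upset by inverting one bit of word."""
    return word ^ (1 << bit)


if __name__ == "__main__":
    stored = 0b1011_0010
    copies = [stored, stored, stored]

    # One copy takes a particle hit on bit 5.
    copies[1] = flip_bit(copies[1], 5)

    recovered = majority_vote(*copies)
    print("corrupted copy:", bin(copies[1]))
    print("recovered ok:  ", recovered == stored)
```

The vote masks any single corrupted copy. It fails if two copies are hit in the same bit position before the value is refreshed, which is why redundant systems typically re-vote and rewrite their state periodically.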

“The challenge of lunar missions isn’t just getting there; it’s the data return. We are moving from an era of ‘snapshot’ telemetry to ‘streaming’ science. The infrastructure required to maintain a high-bandwidth link while moving at orbital velocities is an engineering feat that dwarfs the actual launch.”

The Far Side Blindspot and the Role of Relay Satellites

When the Artemis II crew swung around the Moon, they entered a radio shadow. For a few critical moments, they were cut off from Earth. This is the “Far Side” problem. To solve this for future Artemis III landings, NASA is deploying the Lunar Gateway and various relay satellites. This is essentially creating a “Lunar ISP,” moving from a point-to-point connection to a networked mesh architecture.

This shift mirrors the evolution of terrestrial networking. We are seeing the transition from simple hub-and-spoke models to a decentralized lunar network. For developers and data scientists, this means the eventual availability of “Lunar Edge Computing,” where data is processed on the Moon’s surface or in orbit and only the most relevant results and metadata are beamed back to Earth.
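What “process at the edge, send only what matters” could look like in practice is a selection step that runs before the downlink. The following is a toy sketch under loud assumptions: the `Frame` record, the `interest` score, and the greedy policy are all invented for illustration and do not describe any NASA system.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Frame:
    frame_id: int
    size_bytes: int
    interest: float  # hypothetical onboard relevance score in [0, 1]


def select_for_downlink(frames: list[Frame], budget_bytes: int) -> list[Frame]:
    """Greedily keep the highest-scoring frames that fit in budget_bytes."""
    chosen: list[Frame] = []
    remaining = budget_bytes
    for frame in sorted(frames, key=lambda f: f.interest, reverse=True):
        if frame.size_bytes <= remaining:
            chosen.append(frame)
            remaining -= frame.size_bytes
    return chosen


if __name__ == "__main__":
    captured = [
        Frame(frame_id=1, size_bytes=400, interest=0.2),
        Frame(frame_id=2, size_bytes=600, interest=0.9),
        Frame(frame_id=3, size_bytes=500, interest=0.7),
    ]
    for frame in select_for_downlink(captured, budget_bytes=1000):
        print(frame.frame_id, frame.interest)
```

A greedy pick by score is not an optimal knapsack solution, but it is cheap enough to run on modest hardware, which is the constraint that matters on a radiation-hardened processor.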

The 30-Second Verdict: Why This Matters for Earth-Side Tech

- Radiation-Hardened Logic: The mission proves that “slow and steady” hardware wins in extreme environments, pushing the industry to find a middle ground between performance and resilience.

- Network Resilience: The successful hand-offs between DSN stations provide a blueprint for future interplanetary internet protocols (DTN – Delay Tolerant Networking).

- Optical Comms: This mission paves the way for laser-based communication, which could increase data rates by 10x to 100x compared to traditional RF.
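The core idea behind Delay Tolerant Networking is store-and-forward: a node holds data while its link is down and releases it when a contact window opens. The class below is a deliberately tiny sketch of that idea only. The real Bundle Protocol (RFC 9171) adds custody, lifetimes, routing, and much more, and the names `StoreAndForwardNode`, `receive`, and `forward` are hypothetical.

```python
from collections import deque


class StoreAndForwardNode:
    """Toy relay: holds bundles while the link is down, forwards in order when up."""

    def __init__(self) -> None:
        self._queue: deque[bytes] = deque()
        self.link_up = False

    def receive(self, bundle: bytes) -> None:
        """Accept a bundle and keep it until it can be forwarded."""
        self._queue.append(bundle)

    def forward(self) -> list[bytes]:
        """Return every queued bundle if the link is up, else nothing."""
        if not self.link_up:
            return []
        sent = list(self._queue)
        self._queue.clear()
        return sent

    def pending(self) -> int:
        """Number of bundles still waiting for a contact window."""
        return len(self._queue)


if __name__ == "__main__":
    relay = StoreAndForwardNode()
    relay.receive(b"telemetry-1")
    relay.receive(b"telemetry-2")
    print("during blackout:", relay.forward(), "pending:", relay.pending())

    relay.link_up = True  # contact window opens
    print("after contact:  ", relay.forward(), "pending:", relay.pending())
```

Nothing is dropped during the outage; it simply arrives late. That is the behavioral difference from protocols that assume an end-to-end path exists at the moment of sending.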

Decoding the ‘Earthset’: More Than a Pretty Picture

The “Earthset” captured by the crew—where the Earth disappears behind the lunar horizon—is more than a poetic moment. It is a calibration event. By analyzing the atmospheric fringe of the Earth from a lunar perspective, scientists can refine models of planetary atmospheres. This is essentially using the Moon as a vantage point for a giant, natural telescope.

From a technical perspective, capturing these images requires precise synchronization between the spacecraft’s attitude control system (ACS) and the camera’s shutter speed. Any oscillation in the spacecraft’s orientation would result in motion blur that renders the scientific data useless. The precision here is measured in arcseconds, requiring a level of stability that makes a gimbal-stabilized drone look like a toy.
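The relationship between attitude drift and blur is simple arithmetic: the smear angle is the drift rate multiplied by the exposure time, and dividing by the angle each pixel covers gives the smear in pixels. A back-of-the-envelope sketch follows; the drift rate, shutter speed, and pixel scale in the example are hypothetical values, not Orion camera specifications.

```python
ARCSEC_PER_DEGREE = 3600.0


def blur_arcsec(drift_deg_per_s: float, exposure_s: float) -> float:
    """Angle swept by the line of sight during one exposure, in arcseconds."""
    return drift_deg_per_s * ARCSEC_PER_DEGREE * exposure_s


def blur_pixels(drift_deg_per_s: float, exposure_s: float,
                pixel_scale_arcsec: float) -> float:
    """Smear length in pixels, given the angle each pixel covers."""
    if pixel_scale_arcsec <= 0:
        raise ValueError("pixel scale must be positive")
    return blur_arcsec(drift_deg_per_s, exposure_s) / pixel_scale_arcsec


if __name__ == "__main__":
    # Hypothetical values: 0.01 deg/s residual drift, 1/500 s shutter,
    # and a camera where each pixel spans 10 arcseconds.
    smear = blur_pixels(0.01, 1 / 500, 10.0)
    print(f"smear: {smear:.4f} px")
```

A smear well under one pixel leaves the image effectively sharp. Longer exposures or narrower fields of view shrink that margin proportionally, which is why shutter timing and attitude control have to be planned together.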

The integration of these systems is handled by a complex layer of flight software, likely written in a mix of C and Ada for maximum reliability. Unlike the “move fast and break things” ethos of Silicon Valley, the Artemis codebase is built on “verify and double-verify.” There is no “beta” when you are 240,000 miles from the nearest repair shop.

To understand the scale of the data being managed, consider the following comparison of communication modes:

| Metric | Traditional RF (S-Band/X-Band) | Next-Gen Optical (Laser) | Impact on Science |
|---|---|---|---|
| Bandwidth | Kilobits to Megabits per second | Gigabits per second | Real-time 4K video streaming |
| Latency | ~1.3 seconds (one way) | ~1.3 seconds (one way) | No change (limited by physics) |
| Power Efficiency | High power for long distance | Lower power per bit | Longer mission durations |
| Reliability | High (pierces clouds/dust) | Low (blocked by clouds) | Requires relay constellations |
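The table’s latency and bandwidth rows combine into one number that matters operationally: how long until the last bit of a file arrives. That is the fixed propagation delay plus the size divided by the link rate. A small sketch, using assumed round-number rates (2 Mbps for RF and 1 Gbps for optical) chosen only to fall inside the ranges in the table, not to match any actual link budget:

```python
ONE_WAY_DELAY_S = 1.3  # one-way light time from the table above


def delivery_seconds(size_bytes: int, rate_bps: float,
                     delay_s: float = ONE_WAY_DELAY_S) -> float:
    """Time until the last bit arrives: propagation delay plus serialization time."""
    if rate_bps <= 0:
        raise ValueError("rate must be positive")
    return delay_s + size_bytes * 8 / rate_bps


if __name__ == "__main__":
    one_gigabyte = 1_000_000_000

    # Assumed illustrative rates, not measured link performance.
    rf_rate_bps = 2_000_000
    optical_rate_bps = 1_000_000_000

    print(f"RF:      {delivery_seconds(one_gigabyte, rf_rate_bps):.1f} s")
    print(f"optical: {delivery_seconds(one_gigabyte, optical_rate_bps):.1f} s")
```

For large files the 1.3 seconds vanishes into the noise and bandwidth dominates. For a tiny command packet the opposite holds, and no amount of laser bandwidth makes it arrive sooner.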

The Macro-Market Play: The New Space Race is a Data Race

We need to stop viewing Artemis II as just a NASA project and start seeing it as the foundation for a lunar economy. The ability to navigate the far side and maintain communication is the “API” that commercial entities like SpaceX and Blue Origin will eventually build upon. Whoever controls the lunar communication infrastructure controls the “toll road” to the Moon.

This is the “chip war” expanded to a planetary scale. Just as the US and China fight over EUV lithography and IEEE standards for 6G, the battle for the Moon is about who establishes the primary network protocols. If the US establishes the standard for lunar data transmission, every other nation or company wanting to land there must play by those rules. It is the ultimate form of platform lock-in.

The Artemis II mission isn’t just about the crew’s awe at the lunar landscape. It’s about the silent, invisible architecture of bits and photons that allowed them to tell us about it. The real victory isn’t that they saw the far side; it’s that the data survived the trip home.

The Takeaway: While the public focuses on the images, the industry should focus on the pipeline. The transition to optical communications and edge-computing in deep space is the next great frontier for software engineers and hardware architects. The Moon is no longer just a destination; it’s the first node in a multi-planetary network.