AWS Accelerates Agentic AI & Serverless Development: A Deep Dive into March 2026 Updates

Amazon Web Services unveiled a series of updates this week, including the 2026 AI & ML Scholars program aiming to train 100,000 individuals, the Agent Plugin for AWS Serverless to streamline AI-assisted development, and enhancements to Aurora PostgreSQL and Lambda. These moves signal AWS’s continued push towards democratizing AI access and simplifying serverless application construction, directly challenging competitors like Microsoft Azure and Google Cloud Platform in the rapidly evolving cloud landscape.

The AI Skills Gap & AWS’s Bold Response

The launch of the 2026 AWS AI & ML Scholars program is a direct acknowledgement of the widening skills gap in artificial intelligence. While LLM parameter scaling continues to dominate headlines, the practical ability to *build* with these models remains limited. The program’s structure – a foundational challenge followed by a fully-funded Udacity Nanodegree for top performers – is a smart approach. It filters for genuine aptitude and provides substantial upskilling opportunities. The Udacity Nanodegree component is particularly crucial; simply providing access to foundational materials isn’t enough. The program’s focus on generative AI is also strategically aligned with current market demand. Applications close on June 24, 2026, so aspiring AI practitioners should act quickly.

This isn’t merely altruism. A larger pool of skilled AWS users translates directly into increased adoption of AWS AI/ML services. It’s a calculated investment in the ecosystem. The program’s scale – 100,000 learners – is ambitious, but achievable given AWS’s existing infrastructure and partnerships. It’s a clear signal that AWS intends to be a dominant force in AI education, potentially eclipsing existing initiatives from other cloud providers.

Agent Plugin for AWS Serverless: AI-Powered Development Takes Center Stage

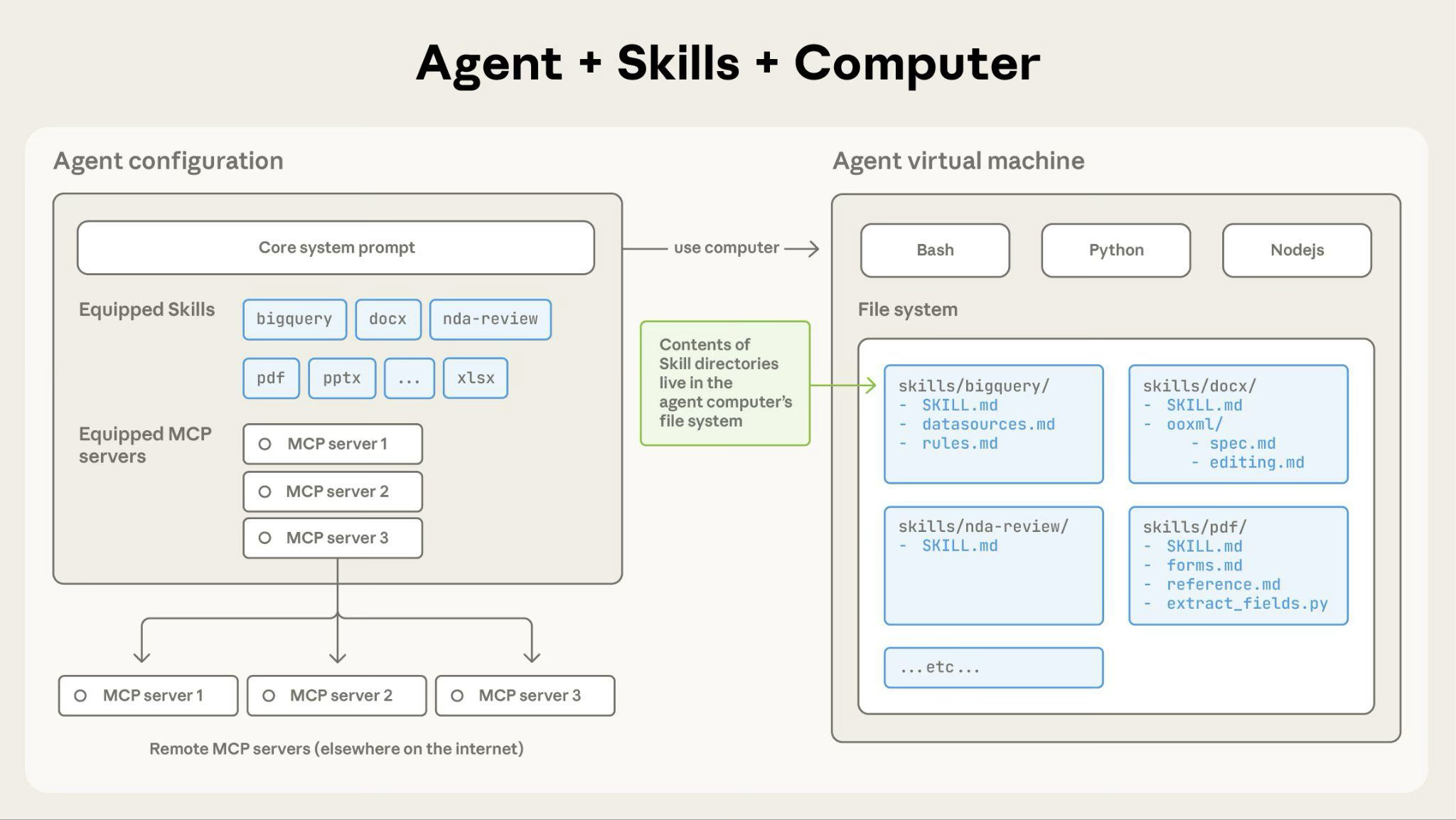

The Agent Plugin for AWS Serverless is arguably the most technically interesting announcement. It’s not just about integrating AI coding assistants like Kiro, Claude Code, and Cursor; it’s about creating a structured framework for AI-assisted development. The use of the Model Context Protocol (MCP) is key. MCP allows the plugin to package skills, sub-agents, and contextual information into modular units, effectively creating a “skill store” for serverless development. This addresses a critical pain point: the lack of consistent guidance and best practices when building serverless applications.
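To make the protocol layer a little more concrete: MCP messages are JSON-RPC 2.0, and a client invokes a server-side tool with a `tools/call` request. The sketch below only builds such a request. The tool name `validate_template` and its `path` argument are hypothetical placeholders, not names taken from the Agent Plugin.

```python
import json


def build_tool_call(request_id: int, tool_name: str, arguments: dict) -> dict:
    """Build an MCP-style JSON-RPC 2.0 request that asks a server to run a tool."""
    return {
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    }


if __name__ == "__main__":
    # Hypothetical tool name and argument, used purely for illustration.
    request = build_tool_call(1, "validate_template", {"path": "template.yaml"})
    print(json.dumps(request, indent=2))
```

Which tools a given plugin actually exposes is something a client would discover from the server at runtime rather than hard-code.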

Traditionally, serverless development has been prone to configuration drift and security vulnerabilities due to its inherent complexity. The Agent Plugin aims to mitigate these risks by embedding expertise directly into the development workflow. It’s a move towards “opinionated tooling,” guiding developers towards secure and efficient serverless architectures. This is a significant departure from the more open-ended approach of many existing serverless frameworks.

“The biggest challenge with serverless isn’t the technology itself, but the operational complexity. Anything that can automate best practices and reduce cognitive load for developers is a huge win,” says Dr. Anya Sharma, CTO of Serverless Security Solutions, a firm specializing in serverless application security. “The MCP approach is particularly promising, as it allows for the creation of reusable, verifiable security modules.”

Aurora PostgreSQL & Lambda Enhancements: Scaling Performance & Reducing Friction

The improvements to Amazon Aurora PostgreSQL – express configuration and availability on the AWS Free Tier – are focused on lowering the barrier to entry. Express configuration, allowing database creation in “seconds,” is a welcome change. Previously, configuring Aurora PostgreSQL could be a time-consuming process, particularly for developers unfamiliar with the service. The Free Tier availability further democratizes access, allowing developers to experiment and prototype without incurring costs.

The Lambda updates – a higher file descriptor limit (4,096, up from 1,024) and support for up to 32 GB of memory and 16 vCPUs – are targeted at more demanding workloads. The 4x increase in file descriptors matters most for I/O-intensive applications, such as high-concurrency web services and data processing pipelines, which hold many sockets and files open at once. The larger memory and vCPU options allow Lambda to handle heavier computations, potentially displacing some workloads currently running on EC2 instances. This is a clear indication that AWS is positioning Lambda as a more versatile compute platform.
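One way to confirm what limit a function actually runs with is to read it from inside the process. The sketch below uses Python's standard `resource` module (Unix only). The function name `describe_fd_limit` and the default of 4096 are illustrative choices, with the figure taken from the announcement as described above.

```python
import resource


def describe_fd_limit(expected_soft_limit: int = 4096) -> dict:
    """Report the process's open-file limits and compare them to an expected value."""
    soft, hard = resource.getrlimit(resource.RLIMIT_NOFILE)
    # Some systems report "no limit" using the RLIM_INFINITY sentinel.
    unlimited = soft == resource.RLIM_INFINITY
    return {
        "soft_limit": soft,
        "hard_limit": hard,
        "meets_expected": unlimited or soft >= expected_soft_limit,
    }


def lambda_handler(event, context):
    # Standard Python handler signature; event and context are unused here.
    return describe_fd_limit()
```

Logging this once at cold start would show whether a given runtime and region already reflect the new limit.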

Here’s a quick comparison of Lambda memory and vCPU options as of March 30, 2026:

| Memory (GB) | vCPUs | Price per second (US East (N. Virginia)) |
|---|---|---|
| 1 | 0.5 | $0.0000166667 |
| 2 | 1 | $0.0000333333 |
| 4 | 2 | $0.0000666667 |
| 8 | 4 | $0.0001333333 |
| 16 | 8 | $0.0002666667 |
| 32 | 16 | $0.0005333333 |

These pricing figures are based on the current AWS Lambda pricing model and are subject to change. See the official AWS Lambda pricing page for the most up-to-date information.
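As a rough worked example, the rows above can be read as the per-second charge at each memory size, since the price doubles whenever memory doubles. Under that assumption, compute cost is memory × duration × a flat rate of $0.0000166667 per GB-second. The sketch below is an estimate only: it ignores per-request charges and any free-tier allowance, and the function names are illustrative.

```python
# Rate implied by the 1 GB row of the table; assumed to scale linearly with memory.
PRICE_PER_GB_SECOND = 0.0000166667


def price_per_second(memory_gb: float) -> float:
    """Per-second compute charge for a function configured with memory_gb."""
    return memory_gb * PRICE_PER_GB_SECOND


def estimate_compute_cost(memory_gb: float, duration_ms: float, invocations: int) -> float:
    """Estimated compute cost in USD, excluding per-request fees and free tier."""
    seconds_per_invocation = duration_ms / 1000.0
    return price_per_second(memory_gb) * seconds_per_invocation * invocations


if __name__ == "__main__":
    # One million 250 ms invocations at 4 GB.
    print(f"${estimate_compute_cost(4, 250, 1_000_000):.2f}")
```

Because cost scales with both memory and duration, moving to a larger configuration only pays off when the extra vCPUs shorten execution time by a comparable factor.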

Bidirectional Streaming API for Amazon Polly: Real-Time Speech Synthesis for Conversational AI

The introduction of the Bidirectional Streaming API for Amazon Polly is a subtle but vital update. Traditional text-to-speech APIs operate on a request-response model, which introduces latency. The streaming API allows for incremental synthesis, enabling real-time speech generation for conversational AI applications. This is particularly crucial for LLM-powered chatbots and virtual assistants, where responsiveness is paramount. The ability to start synthesizing audio *before* the full text is available significantly improves the user experience.
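The client side of incremental synthesis can be sketched without any Polly-specific calls: buffer the LLM's token stream, and hand each sentence to the synthesizer as soon as it is complete. In the sketch below, `synthesize` is a hypothetical callback standing in for whatever streaming client an application uses. It is not the Polly API.

```python
import re
from typing import Callable, Iterable, Iterator

# Split on whitespace that follows sentence-ending punctuation.
_SENTENCE_BOUNDARY = re.compile(r"(?<=[.!?])\s+")


def sentence_chunks(tokens: Iterable[str]) -> Iterator[str]:
    """Yield complete sentences as soon as they appear in a stream of text tokens."""
    buffer = ""
    for token in tokens:
        buffer += token
        parts = _SENTENCE_BOUNDARY.split(buffer)
        # Every part except the last is a finished sentence.
        for sentence in parts[:-1]:
            if sentence:
                yield sentence
        buffer = parts[-1]
    # Flush whatever text remains when the stream ends.
    if buffer.strip():
        yield buffer.strip()


def speak(tokens: Iterable[str], synthesize: Callable[[str], None]) -> int:
    """Send each sentence to the (hypothetical) synthesizer; return how many were sent."""
    count = 0
    for sentence in sentence_chunks(tokens):
        synthesize(sentence)
        count += 1
    return count
```

With a request-response API, by contrast, the caller would have to wait for the last token before sending anything, which is where the added latency comes from.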

This move positions Polly as a more competitive alternative to other text-to-speech services, such as Google Cloud Text-to-Speech and Microsoft Azure Cognitive Services Text to Speech. The streaming API is a key differentiator, particularly for applications requiring low-latency speech synthesis. The Amazon Polly documentation covers the service's full feature set.

What This Means for Enterprise IT

These updates collectively reinforce AWS’s strategy of providing a comprehensive, end-to-end AI and serverless platform. The AI & ML Scholars program addresses the talent shortage, the Agent Plugin simplifies development, and the Aurora and Lambda enhancements improve performance and scalability. For enterprises, this translates into faster time-to-market, reduced operational costs, and increased innovation. The focus on security and automation is particularly appealing to organizations with stringent compliance requirements.

However, it’s important to note that AWS’s ecosystem is increasingly complex. Managing these services effectively requires specialized expertise. Enterprises should invest in training and tooling to ensure they can fully leverage the benefits of the AWS platform. The potential for vendor lock-in is also a concern. Organizations should carefully evaluate their options and consider adopting a multi-cloud strategy to mitigate this risk.

The 30-Second Verdict: AWS is doubling down on AI and serverless, making it easier than ever to build and deploy intelligent applications. The Agent Plugin is a game-changer for developer productivity, and the Lambda enhancements unlock new possibilities for compute-intensive workloads.