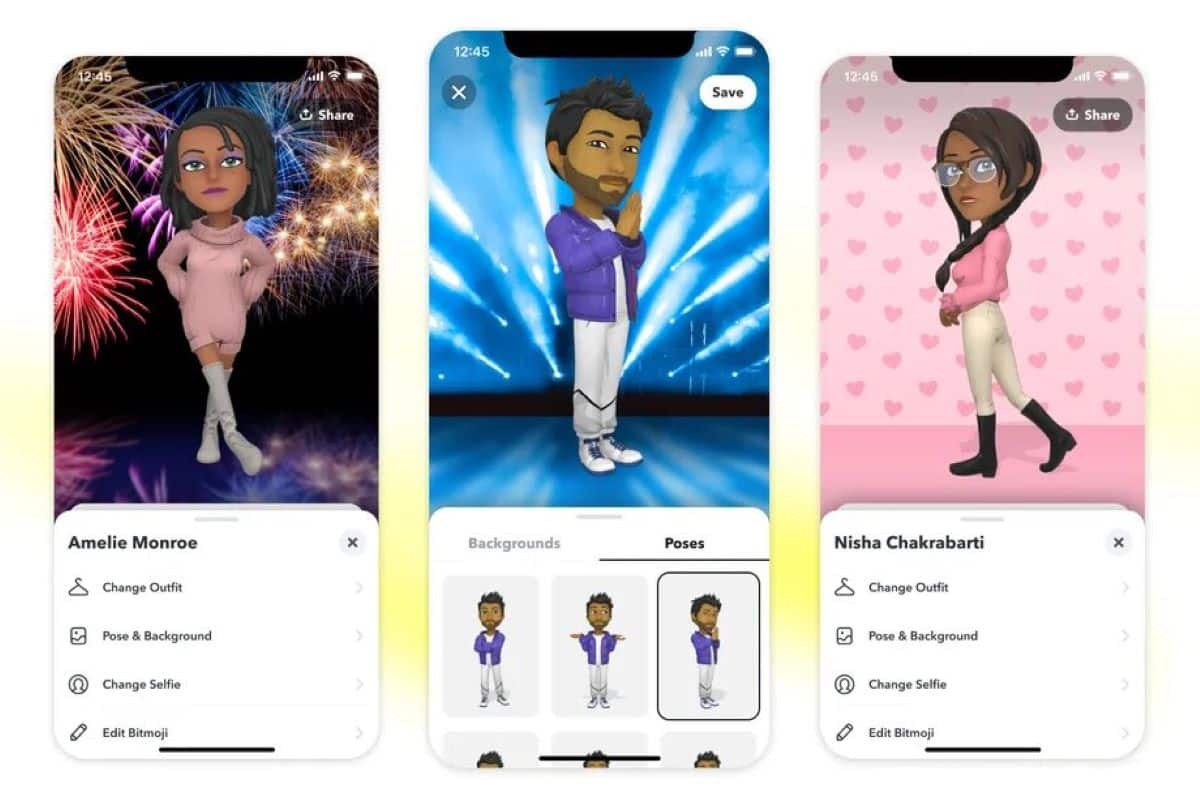

Bitmoji, the personalized avatar system initially popularized on Snapchat, is undergoing a significant evolution and is now deeply integrated with YouTube’s creator tools. This week’s beta rollout introduces AI-powered avatar animations directly within YouTube Studio, allowing creators to generate short-form video responses and personalized content using their Bitmoji. The move signals a broader strategy by Snap Inc. to extend Bitmoji’s reach beyond its core social platform, leveraging the massive scale of YouTube’s creator ecosystem and the growing demand for personalized video content.

From Static Stickers to Dynamic Digital Selves: The AI Infusion

For years, Bitmoji functioned primarily as a library of static stickers and images. The current shift isn’t merely about adding motion; it’s about leveraging generative AI to create nuanced, contextually relevant animations. Snap is employing a proprietary blend of Large Language Models (LLMs) and diffusion models to interpret text prompts and translate them into Bitmoji animations. Crucially, this isn’t a simple text-to-animation pipeline. The system analyzes the *sentiment* of the prompt, adjusting facial expressions and body language accordingly. Early benchmarks, gleaned from developer access, suggest a latency of approximately 2.5 seconds for animation generation on a standard M2-class machine. Snap aims to bring that figure under 1.8 seconds, a reduction of roughly 28%, through ongoing optimizations to its NPU-accelerated inference engine.
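To make the idea of sentiment-driven animation concrete, here is a minimal Python sketch. It is a toy under stated assumptions, not Snap's pipeline: a small keyword lexicon stands in for the proprietary sentiment model, and the names `score_sentiment`, `pick_expression`, and the `face`/`body` parameters are hypothetical.

```python
# Toy illustration only: a keyword lexicon stands in for a real sentiment model.
# All names and animation parameters here are hypothetical, not Snap's API.
POSITIVE = {"love", "great", "thanks", "awesome", "congrats"}
NEGATIVE = {"sorry", "sad", "hate", "angry", "terrible"}


def score_sentiment(prompt: str) -> int:
    """Return the count of positive words minus the count of negative words."""
    words = [w.strip(".,!?").lower() for w in prompt.split()]
    return sum(w in POSITIVE for w in words) - sum(w in NEGATIVE for w in words)


def pick_expression(prompt: str) -> dict:
    """Map the sentiment score to a facial expression and body pose."""
    score = score_sentiment(prompt)
    if score > 0:
        return {"face": "smile", "body": "wave"}
    if score < 0:
        return {"face": "frown", "body": "slump"}
    return {"face": "neutral", "body": "idle"}
```

In this sketch, a prompt such as "Thanks, that was awesome!" selects the smiling, waving pose, while a prompt with no lexicon words falls back to a neutral idle animation. A production system would presumably replace the lexicon with a learned model and feed the result into the animation generator as conditioning.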

What This Means for Content Moderation

The introduction of AI-generated content, even in the form of avatars, inevitably raises content moderation concerns. Snap is reportedly implementing a multi-layered approach, combining automated filtering with human review. The system flags prompts containing potentially harmful or offensive language, and generated animations are subject to a secondary review process before being made available to creators. However, the effectiveness of these safeguards remains to be seen, particularly as users attempt to circumvent the filters through creative phrasing.
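The two layers described above can be sketched as a small Python class. This is an assumption-laden illustration rather than Snap's implementation: the blocklist terms are placeholders, a real first layer would likely be a trained classifier, and `ModerationPipeline` and its methods are hypothetical names.

```python
from dataclasses import dataclass, field

# Placeholder blocklist (hypothetical); a real filter would use a classifier.
BLOCKED_TERMS = {"slur_example", "threat_example"}


@dataclass
class ModerationPipeline:
    # Prompts that passed the automated filter and await secondary review.
    review_queue: list = field(default_factory=list)

    def check_prompt(self, prompt: str) -> bool:
        """Layer 1: automated filter. True means the prompt may proceed."""
        words = {w.strip(".,!?").lower() for w in prompt.split()}
        return not (words & BLOCKED_TERMS)

    def submit(self, prompt: str) -> str:
        """Run layer 1, then hold the item for layer 2 (secondary review)."""
        if not self.check_prompt(prompt):
            return "rejected"
        self.review_queue.append(prompt)
        return "pending_review"

    def approve_next(self) -> str:
        """Layer 2: a reviewer releases the oldest pending item."""
        return self.review_queue.pop(0)
```

The sketch also shows why creative phrasing is a concern: an exact-match filter rejects "threat_example" but lets a respelling such as "thr3at_example" through to the review queue, so the second layer has to catch what the first one misses.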

The Ecosystem Play: Challenging Meta’s Avatar Dominance

Snap’s move isn’t happening in a vacuum. Meta has been aggressively pushing its own avatars, both in Horizon Worlds and across its suite of apps – Facebook, Instagram, and WhatsApp. However, Meta’s approach has been largely focused on creating a metaverse-centric experience, while Snap is taking a more pragmatic approach, embedding Bitmoji directly into existing workflows. This is a key differentiator. YouTube creators aren’t being asked to adopt a new platform; they’re being given a new tool within the platform they already use. This lowers the barrier to entry and increases the likelihood of adoption.

The architectural difference is also significant. Meta’s avatars rely heavily on a centralized server infrastructure, requiring substantial bandwidth and processing power. Bitmoji’s AI-powered animations, while initially generated on Snap’s servers, are designed to be increasingly offloaded to the user’s device, reducing latency and improving scalability. This aligns with the broader trend towards edge computing, where processing is moved closer to the data source.

“The beauty of Snap’s strategy is its distribution model. They’re not trying to build a new digital world; they’re enhancing existing ones. This is a far more sustainable approach, particularly in the current economic climate.” – Dr. Anya Sharma, CTO of AI-driven content creation startup, Synthetica.

API Access and the Third-Party Developer Landscape

Snap has quietly opened up limited API access to Bitmoji’s animation engine, allowing third-party developers to integrate the technology into their own applications. The API currently supports a subset of features, including basic animation generation and avatar customization. Pricing is tiered, based on the number of API calls and the complexity of the animations generated. A basic tier, allowing for up to 1,000 API calls per month, is priced at $9.99. Higher tiers, offering increased capacity and access to advanced features, are available for enterprise customers. Snap’s Developer Portal provides detailed documentation and pricing information.
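For a sense of scale, the basic-tier figures quoted above can be turned into a quick budget check. The price and call cap come from this article; the assumption that each animation costs exactly one API call is mine, and the function names are hypothetical.

```python
BASIC_TIER_PRICE_USD = 9.99  # monthly price quoted in the article
BASIC_TIER_CALLS = 1_000     # monthly call cap quoted in the article


def cost_per_call(price: float = BASIC_TIER_PRICE_USD,
                  calls: int = BASIC_TIER_CALLS) -> float:
    """Effective price per call if the whole monthly quota is used."""
    return price / calls


def fits_basic_tier(animations_per_day: int, days: int = 30) -> bool:
    """Assumes one API call per animation, which the article does not confirm."""
    return animations_per_day * days <= BASIC_TIER_CALLS
```

At full utilization the basic tier works out to roughly one cent per call. Under the one-call-per-animation assumption, a developer generating 33 animations a day stays within the cap over a 30-day month, while 34 a day would exceed it.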

This API access is crucial for fostering innovation and expanding the Bitmoji ecosystem. It allows developers to create custom integrations with other platforms and tools, further solidifying Bitmoji’s position as a leading avatar platform. However, the API’s limitations – particularly the restrictions on commercial use of generated animations – may hinder widespread adoption.

The Privacy Implications: Data Collection and Avatar Ownership

The use of AI to generate personalized animations raises important privacy concerns. Snap collects data on user prompts and generated animations to improve the accuracy and relevance of the system. This data is anonymized and aggregated, but it still represents a potential privacy risk. Users should be aware of Snap’s data collection practices and exercise caution when submitting prompts. Snap’s Privacy Policy outlines these practices in detail.

The question of avatar ownership also remains unresolved. While users create and customize their Bitmoji avatars, Snap retains the intellectual property rights to the underlying technology and the generated animations. This raises concerns about potential misuse of user avatars and the lack of control over their digital likeness. The debate over digital ownership is likely to intensify as AI-powered avatar platforms become more prevalent.

The 30-Second Verdict

Snap’s Bitmoji integration with YouTube is a smart move, leveraging AI to deliver personalized content creation tools directly to a massive audience. It’s a calculated challenge to Meta’s metaverse ambitions, focusing on practical integration rather than world-building. However, privacy concerns and API limitations remain key hurdles.

Beyond YouTube: The Future of AI-Powered Avatars

The integration with YouTube is just the first step in Snap’s broader strategy to transform Bitmoji into a ubiquitous avatar platform. The company is reportedly exploring integrations with other popular platforms, including TikTok, Twitch, and Discord. The long-term vision is to create a world where users can seamlessly express themselves across all their digital interactions using their personalized Bitmoji avatars. This vision hinges on continued advancements in AI, particularly in the areas of natural language processing and computer vision. The ability to accurately interpret user intent and generate realistic, expressive animations will be critical for achieving this goal. The IEEE Transactions on Affective Computing journal provides ongoing research into the field of emotional AI, which is central to the development of more sophisticated avatar systems.

The competition is fierce. Apple is also rumored to be developing its own avatar platform, leveraging its silicon expertise and its control over the iOS ecosystem. The “chip wars” are extending beyond CPUs and GPUs to include NPUs (Neural Processing Units), which are essential for accelerating AI workloads. The company with the most powerful and efficient NPU will have a significant advantage in the race to create the next generation of AI-powered avatars. Ars Technica’s coverage of Apple’s M3 chip highlights the company’s advancements in this area.

“We’re seeing a fundamental shift in how people interact with technology. Avatars are becoming increasingly important as a means of self-expression and communication. The companies that can deliver truly personalized and engaging avatar experiences will be the winners in this space.” – Ben Thompson, Principal Analyst at Stratechery.

Ultimately, the success of Bitmoji – and other AI-powered avatar platforms – will depend on their ability to strike a balance between personalization, privacy, and performance. The current beta rollout is a promising sign, but the real test will come as the technology matures and is adopted by a wider audience.