Data drift occurs when the statistical properties of machine learning (ML) input data evolve, causing security models to lose predictive accuracy. In the current 2026 threat landscape, this phenomenon creates critical vulnerabilities in malware detection and network analysis, allowing sophisticated adversaries to bypass defenses by exploiting outdated model snapshots.

Here is the reality: your “set-and-forget” AI security stack is rotting. We’ve spent the last few years treating LLMs and ML classifiers as static shields, but in the world of offensive security, the ground shifts every hour. When the delta between your training distribution and your live production data grows too wide, your model doesn’t just “underperform”—it becomes a blind spot that attackers can navigate with surgical precision.

This isn’t theoretical. We are seeing a systemic shift where “Strategic Patience” is the new attacker’s mantra. Elite actors aren’t just smashing against the wall; they are observing how models react, identifying the drift, and sliding through the gaps. If you are relying on a model trained on 2024’s attack patterns to stop 2026’s agentic AI threats, you aren’t defending a network; you’re maintaining a museum.

The Silent Erosion: Five Red Flags Your Model is Failing

Detection isn’t always a loud alarm. More often, it’s a unhurried bleed of confidence and precision. If you’re seeing these five markers, your model is already compromised by drift.

1. The Metric Death Spiral

When accuracy, precision, and recall begin a synchronized descent, you’re witnessing a classic distribution shift. In a security context, this manifests as a spike in false negatives. You aren’t seeing the breaches because the model no longer recognizes the “shape” of the attack. It’s the digital equivalent of looking for a 1990s sedan while a Tesla stealthily drives past.

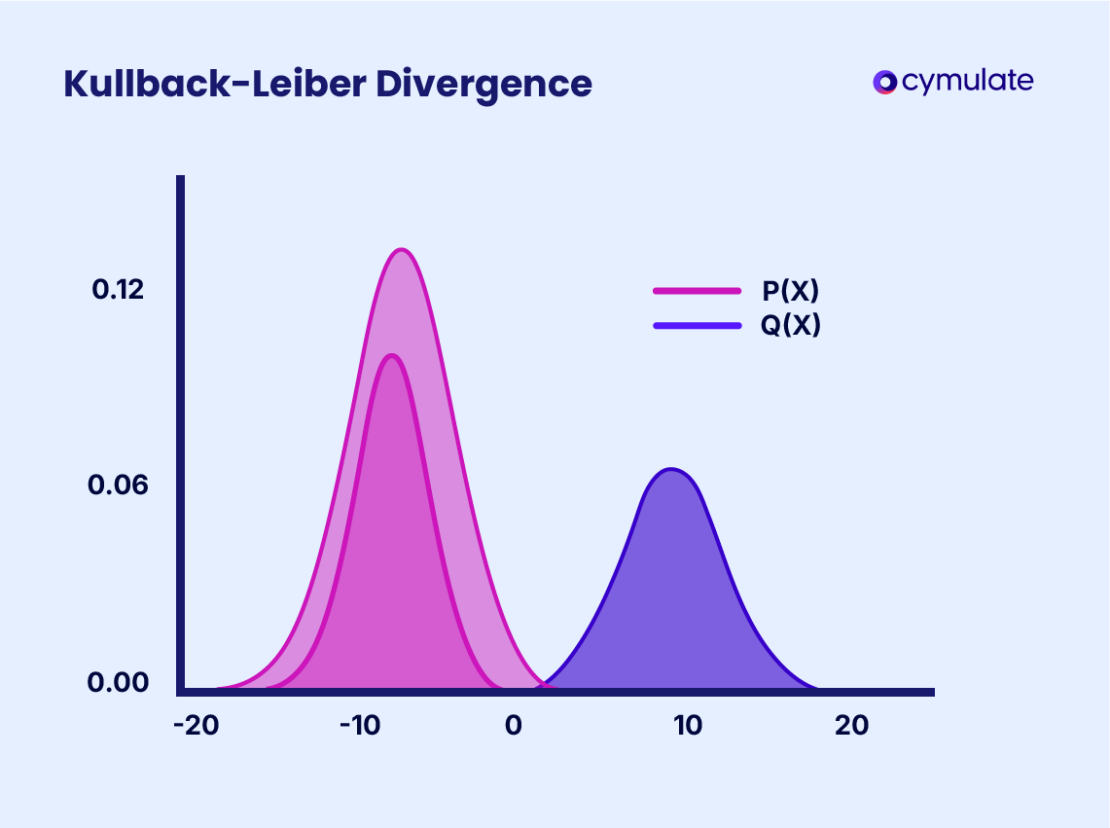

2. Statistical Divergence in Input Features

You need to be monitoring the mean, median, and standard deviation of your features. If your phishing classifier was trained on a 2MB average attachment size and you’re suddenly seeing a surge of 10MB payloads—or conversely, tiny 1KB “heartbeat” packets—the model is operating in an alien environment. This is where IEEE research on anomaly detection becomes critical; you cannot trust a model that is processing data outside its known variance.

3. Prediction Distribution Anomalies

Watch for “prediction drift.” If your fraud detection system typically flags 1% of traffic but suddenly jumps to 5% without a corresponding increase in actual attacks, the model is confused. It’s not that the threats have increased; it’s that legitimate user behavior has evolved, and the model is now misclassifying “normal” as “malicious,” leading to catastrophic alert fatigue.

4. The Confidence Gap (Uncertainty Quantification)

Modern models provide a probability score. When the average confidence across all predictions begins to dip, the model is effectively saying, “I think this is a threat, but I’ve never seen anything quite like it.” This uncertainty is the canary in the coal mine. Attackers use this “grey zone” to iteratively tweak their payloads until they find the exact threshold where the model ceases to be certain.

5. Feature Decoupling

In a healthy model, certain features correlate—say, packet size and traffic volume. When those correlations break (feature decoupling), it often signals a new tunneling tactic or a stealthy exfiltration method that bypasses traditional heuristics. The model sees the components, but it no longer understands the relationship between them.

Beyond the Basics: The Architecture of Drift Mitigation

To stop the bleed, you need more than a retraining script. You need a pipeline that treats model decay as an inevitability. The industry is moving toward “Continuous Training” (CT) pipelines where the model is updated in near real-time based on verified labels from SOC analysts.

The technical heavy lifting involves using the Kolmogorov-Smirnov (KS) test to determine if two datasets differ significantly and the Population Stability Index (PSI) to quantify the magnitude of the shift. If the PSI exceeds 0.25, the distribution has shifted significantly, and a trigger for retraining should be automatic.

“The danger isn’t just that the model becomes less accurate, but that it becomes predictably wrong. Once an adversary maps the drift, they can craft adversarial examples that are mathematically guaranteed to be ignored by the classifier.”

This is the “Attack Helix” in action. Offensive AI architectures are now designed to probe security models for these exact statistical weaknesses. By sending “probe” packets and analyzing the response, attackers can essentially reverse-engineer the boundaries of your model’s training set.

The Drift Detection Toolkit

- KS Test: Non-parametric test to check if a sample comes from a specific distribution.

- PSI (Population Stability Index): Measures how much a variable’s distribution has shifted over time.

- Concept Drift: When the relationship between input and output changes (e.g., a previously “safe” behavior becomes a “malicious” signal).

- Covariate Shift: When the distribution of the input data changes, but the conditional probability of the output remains the same.

The Ecosystem War: Open Source vs. Black-Box AI

This drift problem creates a massive divide in the market. Proprietary “Black-Box” security AI from the big cloud providers often hides drift behind a curtain of “managed updates.” You don’t know when they retrain, what data they use, or if they’ve overfitted the model to a specific set of high-profile targets.

Conversely, the open-source community is leveraging frameworks like scikit-learn and PyTorch to build transparent monitoring layers. The shift toward the Open Cybersecurity Schema Framework (OCSF) is a direct response to this; by standardizing the data language, security teams can move their models between different environments without introducing artificial drift caused by formatting changes.

The “Chip War” also plays a role here. The move toward on-device NPUs (Neural Processing Units) allows for local, federated learning. Instead of sending data to a central cloud for retraining—which introduces latency and privacy risks—security models can now adapt to local network drift on the edge, creating a more resilient, distributed defense mechanism.

The 30-Second Verdict: Actionable Hardening

If you are a Principal Security Engineer or a CISO, your priority for the remainder of Q2 2026 is not buying a new tool, but auditing your existing ones. Stop trusting the “99% accuracy” claim on the vendor’s slide deck. That number is a snapshot of the past.

Immediate Steps:

- Implement PSI Monitoring: Set up automated alerts for any feature distribution shift > 0.2.

- Quantify Uncertainty: Force your models to output confidence intervals; flag any “low-confidence” predictions for human review.

- Adversarial Testing: Use red-team AI to intentionally induce drift and see how long it takes for your monitoring to trigger.

Data drift is the silent killer of AI security. You can either manage the decay through rigorous engineering, or you can wait for an attacker to tell you that your model is obsolete. In this game, the latter is a catastrophic failure.