For decades, the relentless pursuit of faster, more efficient computer storage has driven innovation in unexpected directions. While today’s solid-state drives (SSDs) largely occupy dedicated M.2 slots or replace traditional hard drives, a curious chapter in storage history saw SSDs taking root in a most unusual place: the RAM slot. These “DIMM SSDs,” as they’re known, represent a fascinating, and largely forgotten, experiment in bridging the gap between memory and storage.

The concept, emerging as a potential solution to storage bottlenecks, involved creating SSDs that physically fit into a computer’s DIMM (Dual In-line Memory Module) slots – the same slots used for RAM. But these weren’t simply repurposed memory modules. Depending on their design, DIMM SSDs could function as standard SATA SSDs or, more ambitiously, as a form of persistent memory, offering performance closer to DRAM than conventional storage. The appeal was clear: reduce latency and potentially unlock significant performance gains.

What Exactly *Is* a DIMM SSD?

The core idea behind DIMM SSDs was to leverage the high-bandwidth interface of the memory bus. Traditional SATA SSDs, while a massive improvement over hard drives, were still limited by the SATA interface. By plugging a storage device directly into the RAM slots, designers hoped to bypass this bottleneck. However, implementing this wasn’t straightforward. A DIMM SSD isn’t just an SSD crammed into a different form factor; it requires a specialized controller and interface to communicate with the system.
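To put rough numbers on that bottleneck, here is a back-of-the-envelope sketch comparing the theoretical peak of SATA III with a single memory channel. DDR3-1600 is an assumed example, since the DIMM generation is not specified here, and the function names are illustrative. Both figures are interface ceilings, not what any real DIMM SSD delivered.

```python
def sata_payload_mb_s(line_rate_gbit_s: float) -> float:
    """Usable SATA bandwidth in MB/s after 8b/10b line encoding."""
    # 8b/10b sends 10 bits on the wire for every 8 bits of payload.
    payload_bits_per_s = line_rate_gbit_s * 1e9 * 8 / 10
    return payload_bits_per_s / 8 / 1e6


def ddr_channel_mb_s(transfers_mt_s: float, bus_width_bits: int = 64) -> float:
    """Theoretical peak of one DDR memory channel in MB/s."""
    bytes_per_transfer = bus_width_bits / 8
    return transfers_mt_s * 1e6 * bytes_per_transfer / 1e6


sata3 = sata_payload_mb_s(6.0)      # SATA III: 6 Gbit/s line rate
ddr3_1600 = ddr_channel_mb_s(1600)  # assumed example: DDR3-1600, one channel

print(f"SATA III:  {sata3:.0f} MB/s")
print(f"DDR3-1600: {ddr3_1600:.0f} MB/s")
print(f"Ratio:     {ddr3_1600 / sata3:.1f}x")
```

On paper the gap is more than an order of magnitude, which is why the memory bus looked attractive. NAND flash itself is far slower than DRAM, though, so the interface ceiling is only one part of the picture.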

As How-To Geek explains, the behavior of a DIMM SSD could vary. Some were designed to emulate standard SATA SSDs, offering a performance boost within the constraints of the SATA protocol. Others aimed for a more radical approach, functioning as persistent memory modules, effectively blurring the line between RAM and storage. This persistent memory approach promised even faster access times and lower latency, as the storage was directly accessible by the CPU with minimal overhead.
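The difference between the two access models can be loosely illustrated in software. The sketch below uses an ordinary temporary file as a stand-in for the device, which is an assumption made purely for illustration: a regular file is not persistent memory, and the helper names are hypothetical. One helper updates a few bytes by reading and rewriting a whole block, as a block storage stack would. The other changes only those bytes through a memory mapping, which is closer to how persistent memory is meant to be addressed.

```python
import mmap
import os
import tempfile

PAGE = 4096  # assumed block size for this illustration


def update_via_block_io(path: str, offset: int, payload: bytes) -> None:
    """Read-modify-write the whole block that contains `offset`."""
    block_start = (offset // PAGE) * PAGE
    rel = offset - block_start
    with open(path, "r+b") as f:
        f.seek(block_start)
        block = bytearray(f.read(PAGE))
        block[rel:rel + len(payload)] = payload  # assumes payload fits in one block
        f.seek(block_start)
        f.write(block)


def update_via_mapping(path: str, offset: int, payload: bytes) -> None:
    """Change only the target bytes through a memory mapping."""
    with open(path, "r+b") as f, mmap.mmap(f.fileno(), 0) as mem:
        mem[offset:offset + len(payload)] = payload
        mem.flush()


# Create a two-block file of zeros to act as the "device".
with tempfile.NamedTemporaryFile(delete=False) as tmp:
    tmp.write(bytes(2 * PAGE))
    path = tmp.name

update_via_block_io(path, 10, b"block")
update_via_mapping(path, PAGE + 10, b"mapped")

with open(path, "rb") as f:
    data = f.read()
os.remove(path)

print(data[10:15], data[PAGE + 10:PAGE + 16])
```

Both helpers leave the same kind of result on disk. The point is only that the mapped version addresses individual bytes directly instead of shuttling whole blocks through a read and a write.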

The Rise and Fall of an Unusual Format

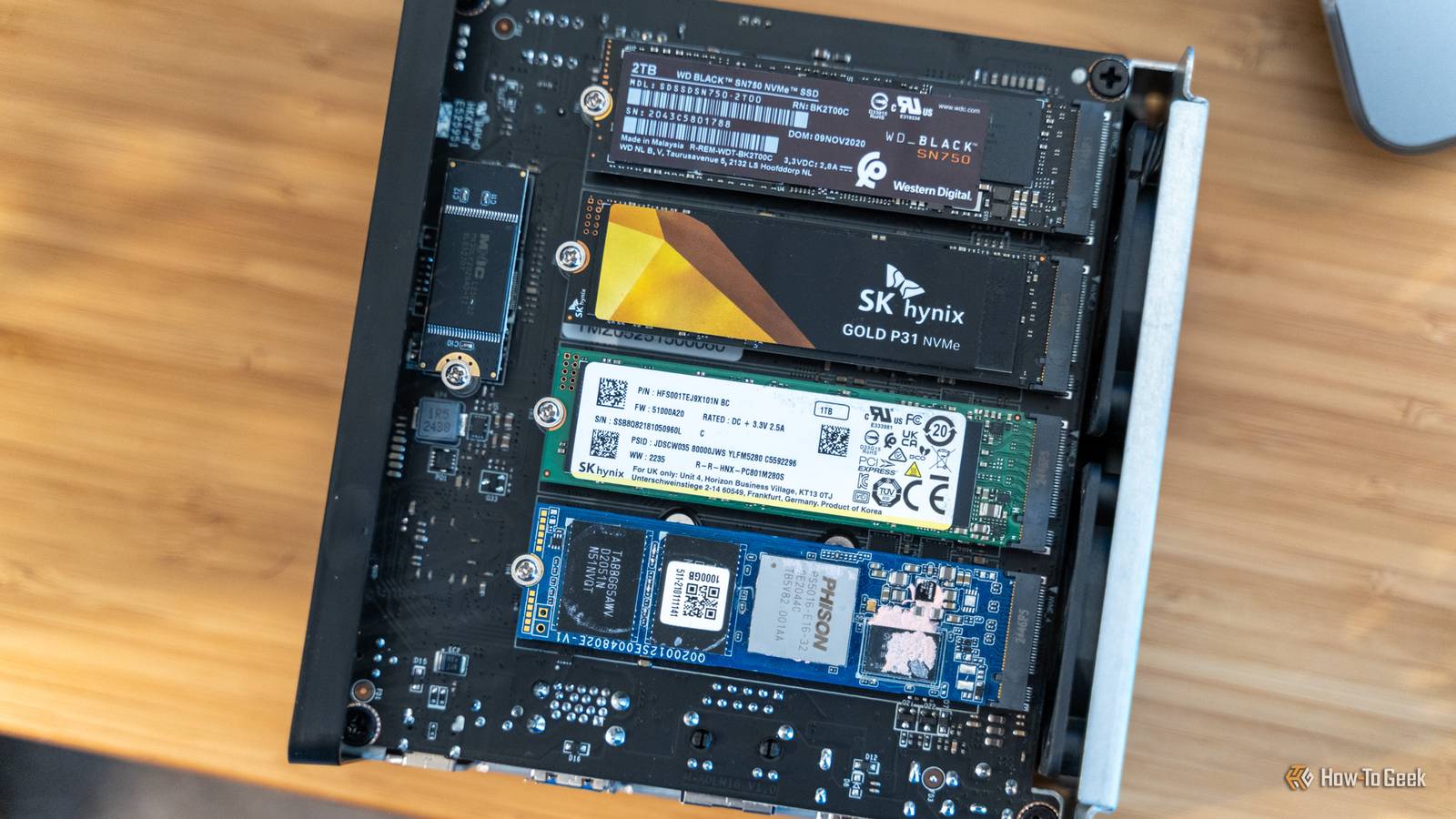

While the technical ingenuity behind DIMM SSDs was undeniable, they never achieved widespread adoption. Several factors contributed to their decline. The emergence of faster interfaces like PCIe NVMe SSDs offered a more straightforward path to increased storage performance without requiring a radical change in form factor. NVMe drives, utilizing the PCIe bus, quickly surpassed the potential performance gains offered by DIMM SSDs.

The cost and complexity of manufacturing DIMM SSDs also presented significant challenges. These were specialized parts, not cheaper alternatives to existing storage solutions, and as SSD prices generally fell, their niche appeal diminished. The SSD Review highlights the ongoing evolution of SSD technology, including the development of DRAM-less SSDs utilizing Host Memory Buffer (HMB) technology, which further reduced the cost of entry for SSDs and lessened the need for specialized formats like DIMM SSDs.

DRAM and the Performance Equation

The presence or absence of DRAM (Dynamic Random Access Memory) within an SSD significantly impacts its performance. The DRAM acts as a high-speed cache, chiefly for the mapping table that records where each logical block of data physically resides on the NAND flash memory. Keeping that table in fast memory speeds up access times and reduces latency. DRAM-less SSDs, while more affordable, typically exhibit slower and less consistent performance, particularly during sustained use. However, advancements like HMB allow DRAM-less SSDs to use a portion of system RAM as a buffer, mitigating some of these performance drawbacks.
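The effect of that cache can be sketched with a toy model. The class below is a hypothetical illustration, not how any real controller firmware works. It treats the fast memory (onboard DRAM or an HMB region) as an LRU cache of logical-to-physical map entries and counts how often a lookup has to fall back to the full table, which stands in for the copy stored on NAND. The table size, cache sizes, and access pattern are all assumed values.

```python
import random
from collections import OrderedDict


class MappingCache:
    """Toy LRU cache of logical-to-physical map entries (hypothetical model)."""

    def __init__(self, full_table, capacity):
        self.full_table = full_table  # stands in for the map stored on NAND
        self.capacity = capacity      # number of entries that fit in fast memory
        self.cache = OrderedDict()
        self.hits = 0
        self.misses = 0

    def lookup(self, logical_block):
        if logical_block in self.cache:
            self.cache.move_to_end(logical_block)  # mark as most recently used
            self.hits += 1
            return self.cache[logical_block]
        # Miss: a real drive would pay an extra NAND read here.
        self.misses += 1
        physical = self.full_table[logical_block]
        self.cache[logical_block] = physical
        if len(self.cache) > self.capacity:
            self.cache.popitem(last=False)  # evict the least recently used entry
        return physical


BLOCKS = 1000
table = {lba: BLOCKS - 1 - lba for lba in range(BLOCKS)}  # arbitrary mapping

large = MappingCache(table, capacity=BLOCKS)  # whole map fits, like onboard DRAM
small = MappingCache(table, capacity=32)      # only a small slice fits

rng = random.Random(0)  # fixed seed so both caches see the same accesses
for _ in range(5000):
    lba = rng.randrange(BLOCKS)
    large.lookup(lba)
    small.lookup(lba)

print("large cache misses:", large.misses)
print("small cache misses:", small.misses)
```

With random accesses spread across the whole drive, the small cache misses on most lookups, while the large one misses only the first time it sees each block. That is the gap HMB tries to narrow by lending the drive some system RAM.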

The discussion around DRAM and DRAM-less SSDs, as seen in a Reddit thread on r/buildapc, underscores the trade-offs between cost and performance. While DRAM-less SATA SSDs might be slower than their DRAM-equipped counterparts, they still represent a substantial upgrade over traditional hard drives, making them suitable for boot drives or storage where extreme random write performance isn’t critical.

What Does This Mean for the Future of Storage?

The story of DIMM SSDs serves as a reminder that innovation in storage technology isn’t always linear. While this particular approach didn’t gain traction, it represents a valuable exploration of alternative architectures. Today, the focus remains on maximizing the performance of NVMe SSDs, while work on persistent memory continues beyond Optane, which Intel has since discontinued. The quest for faster, more efficient storage continues, building on the lessons learned from past experiments, including the intriguing chapter of the RAM-slot SSD.

The evolution of storage technology is a constant process of refinement and adaptation. As new materials and architectures emerge, we can expect to see continued innovation in how we store and access data. What are your thoughts on the future of storage? Share your predictions in the comments below.