A critical vulnerability in the EngageLab SDK has exposed over 50 million Android users to data exfiltration. The flaw allows malicious applications to exploit trusted permissions through a “malware bridge,” bypassing the Android sandbox to access sensitive user data by leveraging the elevated privileges of legitimate host applications.

This isn’t your run-of-the-mill memory leak or a simple API oversight. We are looking at a fundamental failure in the “trusted partner” architecture of the Android ecosystem. When a developer integrates a third-party SDK, they aren’t just adding a feature; they are effectively extending their application’s security perimeter to include code they didn’t write and likely don’t fully audit. In this case, the EngageLab SDK acted as a Trojan horse, opening a door to any malicious actor capable of sending a specifically crafted Intent to the SDK’s exported components.

It is a systemic nightmare.

The Anatomy of the SDK Permission Bridge

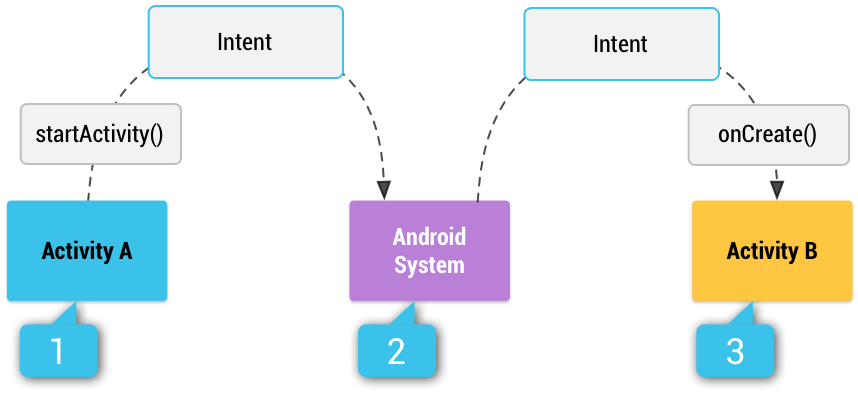

To understand how 50 million devices became vulnerable, we have to look at the Android Manifest and the way the Android Runtime (ART) handles inter-process communication (IPC). The EngageLab SDK contained exported components—likely Activities or Broadcast Receivers—that were marked as android:exported="true" without accompanying custom permissions.
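To make that pattern concrete, here is a minimal Python sketch of a manifest check that flags components declared with android:exported="true" and no android:permission. The manifest content and every component name in it (com.example.sdk.BridgeActivity and friends) are hypothetical and are not taken from the EngageLab SDK. The check is also deliberately narrow: it ignores an android:permission set on the application element, and it does not cover the pre-Android 12 behavior where a component with an intent filter and no exported attribute defaults to exported.

```python
import xml.etree.ElementTree as ET

ANDROID_NS = "http://schemas.android.com/apk/res/android"
COMPONENT_TAGS = ("activity", "activity-alias", "service", "receiver", "provider")


def attr(element, name):
    """Read an android:-namespaced attribute, or None if it is absent."""
    return element.get(f"{{{ANDROID_NS}}}{name}")


def find_unprotected_exports(manifest_xml):
    """Return (tag, name) for components that are exported without a permission."""
    root = ET.fromstring(manifest_xml)
    app = root.find("application")
    findings = []
    if app is None:
        return findings
    for component in app:
        if component.tag not in COMPONENT_TAGS:
            continue
        if attr(component, "exported") == "true" and not attr(component, "permission"):
            findings.append((component.tag, attr(component, "name")))
    return findings


# Hypothetical merged manifest of a host app that bundles a third-party SDK.
SAMPLE_MANIFEST = """
<manifest xmlns:android="http://schemas.android.com/apk/res/android"
          package="com.example.host">
  <application>
    <activity android:name="com.example.sdk.BridgeActivity"
              android:exported="true" />
    <receiver android:name="com.example.sdk.PushReceiver"
              android:exported="true"
              android:permission="com.example.host.permission.PUSH" />
    <service android:name="com.example.host.SyncService"
             android:exported="false" />
  </application>
</manifest>
"""

if __name__ == "__main__":
    for tag, name in find_unprotected_exports(SAMPLE_MANIFEST):
        print(f"{tag} {name} is exported without a permission")
```

In this sample only BridgeActivity is flagged: the receiver is exported but guarded by a permission, and the service is not exported at all. The check is most useful against the merged manifest of a build, since that is where SDK-declared components show up.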

In a healthy environment, the Android sandbox ensures that App A cannot peek into the data of App B. However, if App B (a legitimate app) uses the EngageLab SDK and has been granted high-level permissions—such as READ_CONTACTS or ACCESS_FINE_LOCATION—the SDK operates within that same process and inherits those permissions. The vulnerability allowed a malicious App C to send an Intent to the SDK inside App B, triggering a function that exfiltrates data. Because the request originated “inside” App B, the OS saw it as a legitimate action. What we have is a classic privilege escalation via a confused deputy attack.
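The confused deputy pattern is easier to see in a toy model. The Python sketch below is not Android code and does not reproduce the EngageLab SDK; App, Intent, the EXPORT_CONTACTS action, and the handler names are all hypothetical. It models just one idea: the permission check looks at the process doing the read, not at whoever asked for it.

```python
from dataclasses import dataclass, field


@dataclass
class App:
    package: str
    permissions: set = field(default_factory=set)


@dataclass
class Intent:
    sender: App
    action: str


CONTACTS = ["alice@example.com", "bob@example.com"]


def read_contacts(process_owner):
    """Toy OS check: only the identity of the process doing the read matters."""
    if "READ_CONTACTS" not in process_owner.permissions:
        raise PermissionError(f"{process_owner.package} lacks READ_CONTACTS")
    return list(CONTACTS)


def vulnerable_sdk_handler(host, intent):
    """Runs inside the host process and never looks at who sent the Intent."""
    if intent.action == "EXPORT_CONTACTS":
        return read_contacts(host)
    return []


def guarded_sdk_handler(host, intent, trusted_senders):
    """Same handler, but senders outside an allow-list are refused first."""
    if intent.sender.package not in trusted_senders:
        raise PermissionError(f"{intent.sender.package} may not call this component")
    return vulnerable_sdk_handler(host, intent)


app_b = App("com.example.host", {"READ_CONTACTS"})  # legitimate host app
app_c = App("com.example.malicious")                # holds no permissions

if __name__ == "__main__":
    try:
        read_contacts(app_c)
    except PermissionError as err:
        print("direct read blocked:", err)
    leaked = vulnerable_sdk_handler(app_b, Intent(app_c, "EXPORT_CONTACTS"))
    print("read via the SDK inside App B:", leaked)
```

App C is refused when it asks for contacts directly, yet gets the same data by routing the request through the SDK in App B. The allow-list in guarded_sdk_handler is a stand-in for what a permission declared on the component would do on a real device, where the system rejects the caller before the SDK code ever runs.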

The technical fallout is exacerbated by the sheer scale of SDK distribution. Because EngageLab is woven into thousands of different apps, the attack surface isn’t a single point of failure but a distributed web of vulnerabilities. This creates a “long tail” of risk where even if the SDK is patched in the latest version, thousands of legacy apps remain active on user devices, still running the vulnerable binary.

“The industry has a dangerous habit of treating SDKs as black boxes. We trust the documentation, but we rarely audit the bytecode. This EngageLab incident proves that a single permissive line in a manifest file can invalidate the entire security model of a device.” — Marcus Thorne, Principal Security Researcher at Synapse Defense.

The “Trust Proxy” Fallacy in Android Development

This incident highlights the ongoing tension between the open nature of Android and the need for rigorous security. Unlike the tightly curated frameworks provided by Apple, Android’s reliance on a fragmented ecosystem of third-party libraries creates a “Trust Proxy” fallacy. Developers trust the SDK provider, and the user trusts the developer. When that chain breaks, the user is left completely blind.

From a macro-market perspective, this reinforces the push toward Android 14’s stricter restrictions on implicit intents and exported components. We are seeing a slow, painful migration toward a “Zero Trust” model at the OS level, where the system no longer assumes that a request from a trusted app is inherently safe if it’s being routed through a third-party module.

The implications for the enterprise are even more severe. For corporate-managed devices, this vulnerability bypasses many standard Mobile Device Management (MDM) policies because the data leak occurs through a “signed and trusted” application. It renders traditional app whitelisting nearly obsolete if the whitelisted app is the one carrying the vulnerability.

The 30-Second Verdict for IT Admins

- The Threat: Privilege escalation via EngageLab SDK exported components.

- The Vector: Malicious apps triggering Intents in legitimate apps to steal data.

- The Fix: Force-update all apps utilizing EngageLab; audit AndroidManifest.xml for unnecessary exported="true" tags.

- The Risk: High. 50M+ users are potentially compromised until the app-level updates are pushed through the Play Store.

Mitigating the Blast Radius: A Developer’s Checklist

Stopping this requires more than just waiting for a patch from EngageLab. Developers need to implement a defensive posture that assumes their dependencies are compromised. The first step is implementing a strict “Principle of Least Privilege” for all integrated libraries.

If you are auditing your current build, focus on the AndroidManifest.xml. Any component that does not explicitly need to be accessed by other apps must be set to android:exported="false". For those that must remain exported, implement custom permissions to ensure only authorized apps can trigger those components.
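A custom permission only helps if other apps cannot simply request it. As a rough sketch of that audit step, the Python below checks each exported component against the permission elements declared in the same manifest and flags any guard whose protectionLevel is not signature. The manifest and all names in it are hypothetical. Treating everything other than signature as weak is an assumption made for this sketch, and a permission declared outside the manifest is reported as undeclared rather than assessed.

```python
import xml.etree.ElementTree as ET

ANDROID_NS = "http://schemas.android.com/apk/res/android"
COMPONENT_TAGS = ("activity", "activity-alias", "service", "receiver", "provider")


def attr(element, name):
    """Read an android:-namespaced attribute, or None if it is absent."""
    return element.get(f"{{{ANDROID_NS}}}{name}")


def weakly_guarded_exports(manifest_xml):
    """Return (component name, reason) for exported components lacking a signature-level guard."""
    root = ET.fromstring(manifest_xml)
    # protectionLevel falls back to "normal" when the attribute is omitted.
    levels = {
        attr(p, "name"): attr(p, "protectionLevel") or "normal"
        for p in root.findall("permission")
    }
    findings = []
    for component in root.findall("application/*"):
        if component.tag not in COMPONENT_TAGS:
            continue
        if attr(component, "exported") != "true":
            continue
        name = attr(component, "name")
        permission = attr(component, "permission")
        if permission is None:
            findings.append((name, "no-permission"))
        elif permission not in levels:
            findings.append((name, "undeclared-permission"))
        elif levels[permission].split("|")[0] != "signature":
            # Assumption for this sketch: only signature-level guards count as strong.
            findings.append((name, "weak-permission"))
    return findings


# Hypothetical manifest; none of these names come from a real app or SDK.
SAMPLE_MANIFEST = """
<manifest xmlns:android="http://schemas.android.com/apk/res/android"
          package="com.example.host">
  <permission android:name="com.example.host.permission.INTERNAL"
              android:protectionLevel="signature" />
  <permission android:name="com.example.host.permission.OPEN" />
  <application>
    <service android:name=".SyncService" android:exported="true"
             android:permission="com.example.host.permission.INTERNAL" />
    <receiver android:name=".PushReceiver" android:exported="true"
              android:permission="com.example.host.permission.OPEN" />
    <activity android:name=".BridgeActivity" android:exported="true" />
    <activity android:name=".SettingsActivity" android:exported="false" />
  </application>
</manifest>
"""

if __name__ == "__main__":
    for name, reason in weakly_guarded_exports(SAMPLE_MANIFEST):
        print(f"{name}: {reason}")
```

Here .SyncService passes because its guard is signature-level, .PushReceiver is flagged because its permission defaults to normal, and .BridgeActivity is flagged for having no guard at all.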

The industry also needs to move toward more robust OWASP Mobile Top 10 compliance, specifically focusing on M1 (Improper Platform Usage) from the 2016 list. Static Analysis Security Testing (SAST) tools can help identify these “leaky” components before they hit production. We should be scanning for Intent vulnerabilities as routinely as we scan for SQL injections in web apps.

For a deeper dive into the mechanics of IPC vulnerabilities, the Android Security Samples on GitHub provide a blueprint for implementing secure communication between components.

| Risk Factor | Legacy SDK Approach | Modern Zero-Trust Approach |
|---|---|---|
| Component Visibility | Default Exported / Implicit Trust | Explicit exported="false" |
| Permission Model | Inherited from Host App | Scoped Permissions / Permission Groups |
| Update Cycle | Reactive (Wait for SDK update) | Proactive (Binary Analysis & Sandboxing) |
| IPC Validation | Trust based on App Signature | Strict Intent Filtering & Payload Validation |
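The “Payload Validation” entry in the last row can be sketched in a few lines. The Python below is a language-neutral illustration, not Android API code: the action string, the extras schema, and validate_intent are hypothetical names chosen for the example. The idea is that a component rejects anything it does not explicitly expect, rather than acting on whatever arrives.

```python
# Hypothetical allow-list of actions this component is willing to handle.
ALLOWED_ACTIONS = {"com.example.sdk.action.SHOW_MESSAGE"}

# Hypothetical schema: the exact extras expected, with their types.
EXPECTED_EXTRAS = {"message_id": int, "title": str}


def validate_intent(action, extras):
    """Accept only a known action carrying exactly the expected, well-typed extras."""
    if action not in ALLOWED_ACTIONS:
        return False
    if set(extras) != set(EXPECTED_EXTRAS):
        return False
    return all(isinstance(extras[key], kind) for key, kind in EXPECTED_EXTRAS.items())


if __name__ == "__main__":
    ok = validate_intent(
        "com.example.sdk.action.SHOW_MESSAGE",
        {"message_id": 7, "title": "Hello"},
    )
    print("well-formed request accepted:", ok)
```

A request with an unknown action, an unexpected extra, or a wrongly typed value is dropped before any privileged work happens. Validation like this complements, and does not replace, restricting who can reach the component in the first place.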

The EngageLab disaster is a wake-up call. As we integrate more complex AI-driven SDKs and NPU-accelerated libraries into our mobile apps, the potential for these “malware bridges” only grows. If we continue to treat third-party code as a black box, we aren’t building software; we’re building a house of cards. It’s time to stop trusting the proxy and start auditing the code.