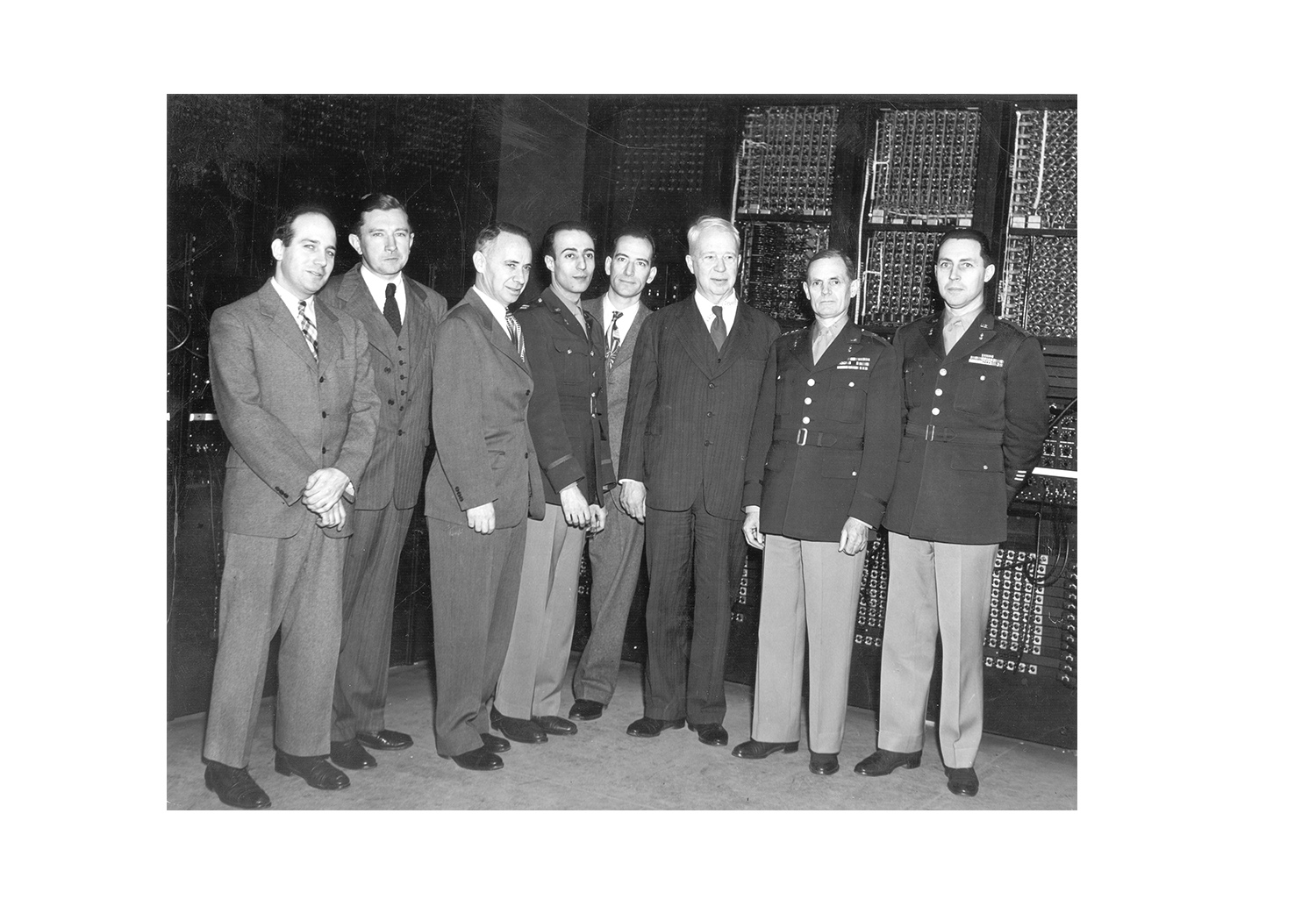

Eighty years after ENIAC’s activation, Archyde analysis confirms the machine’s legacy defines 2026 AI architecture. John Mauchly and Kathleen McNulty established computing as narrative weaving, not just calculation. This philosophical shift underpins modern Large Language Model security, where adversarial testing replaces vacuum tube debugging. Understanding this lineage is critical for enterprise AI deployment.

The celebration of ENIAC’s 80th anniversary at the American Helicopter Museum was not merely nostalgia; it was a diagnostic review of our current technological trajectory. When Naomi Most, granddaughter of ENIAC architects John Mauchly and Kathleen McNulty, spoke about the Irish word ríomh, she illuminated a structural truth often obscured by Silicon Valley marketing. Ríomh means to compute, but also to weave and narrate. In 2026, as we grapple with the safety implications of autonomous agents, this distinction is the difference between a calculator and a threat vector.

The Subroutine as the First API Contract

McNulty and Mauchly are credited with conceiving the subroutine, a sequence of instructions repeatedly recalled to perform a task. In the vacuum tube era, this was a physical rerouting of electricity. Today, that concept has evolved into the function calling capabilities of modern LLMs. When an AI agent decides to query a database or execute a code block, it is invoking a subroutine. However, the security model has inverted. ENIAC programmers had embodied knowledge; they could narrow a malfunction to a specific failed vacuum tube by touch. Modern developers often lack visibility into the latent space where these “subroutines” execute.
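To make the subroutine analogy concrete, here is a minimal sketch of a tool dispatcher in Python. It is an illustration under stated assumptions, not any vendor's function-calling API: the registry `TOOLS`, the `lookup_order` tool, the `AUDIT_LOG` list, and the JSON call shape (`name` plus `arguments`) are all hypothetical. The point is that every model-issued call passes through one visible chokepoint.

```python
import json
from typing import Any, Callable

# Hypothetical registry: tool name -> plain Python function (the "subroutine").
TOOLS: dict[str, Callable[..., Any]] = {}

# Every attempted call is recorded here, allowed or not.
AUDIT_LOG: list[dict] = []


def tool(fn: Callable[..., Any]) -> Callable[..., Any]:
    """Register a function so a model-issued call may invoke it by name."""
    TOOLS[fn.__name__] = fn
    return fn


@tool
def lookup_order(order_id: str) -> dict:
    # Stand-in for a database query; returns canned data for illustration.
    return {"order_id": order_id, "status": "shipped"}


def dispatch(raw_call: str) -> Any:
    """Parse a JSON tool call, log the attempt, and refuse unregistered names."""
    call = json.loads(raw_call)
    name = call.get("name")
    args = call.get("arguments", {})
    allowed = name in TOOLS
    AUDIT_LOG.append({"name": name, "arguments": args, "allowed": allowed})
    if not allowed:
        raise PermissionError(f"tool not registered: {name!r}")
    return TOOLS[name](**args)
```

Calling `dispatch('{"name": "lookup_order", "arguments": {"order_id": "A17"}}')` runs the registered function, while a call naming an unregistered tool raises `PermissionError`. Both attempts land in `AUDIT_LOG`, which is the modest software equivalent of knowing which tube to check.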

The lack of a manual for ENIAC mirrors the current opacity of proprietary model weights. McNulty learned the machine by memory, routing threads of electricity into patterns. This is analogous to the emerging discipline of adversarial testing in the AI era. Security engineers now must understand the “weave” of the transformer architecture to predict where the narrative might break. The subroutine was not in ENIAC’s blueprints; it emerged from use. Similarly, emergent capabilities in 2026 models often appear post-deployment, bypassing initial safety filters.

From Weather Prediction to Probabilistic Security

Mauchly’s original ambition was meteorology—predicting the weather. He realized complex systems reveal their purpose through aimsir, meaning both weather and time. ENIAC’s first non-military application was indeed a weather forecast in 1950. Today, we use similar computational heft to forecast threat landscapes. The shift from deterministic ballistics tables to probabilistic weather models parallels the shift from rule-based security to AI-driven anomaly detection.

Enterprise security platforms like Netskope’s AI-powered security analytics are attempting to automate this prediction. However, automation without the “weaver’s intuition” leads to false positives. The ENIAC programmers could distinguish noise from signal because they understood the physical constraints of the machine. Modern security operations centers (SOCs) flooded with AI alerts often lack this contextual grounding. We are building looms without teaching the operators how to thread them.

“The elite hacker’s persona in 2026 is defined by strategic patience. They do not rush to exploit; they wait for the model to weave its own vulnerabilities through extended interaction,” notes industry analysis from CrossIdentity regarding adversarial behaviors in generative AI.

This strategic patience is the modern equivalent of McNulty’s embodied knowledge. It requires understanding the model’s tendency to hallucinate under specific token pressures. The job market reflects this urgency. Roles such as AI Red Teamer and Principal Security Engineer for AI are commanding premium salaries, yet the talent pool remains shallow. Companies are hiring for the ability to narrate the storm, not just monitor the barometer.

The Loom Architecture and Transformer Attention

When ENIAC was built, it looked like a textile production house. Panels, switchboards, wires. Thread. The transformer architecture powering 2026’s AI models is conceptually similar. The attention mechanism weighs the importance of different parts of the input data, effectively “weaving” context together. If ENIAC was the loom, the GPU clusters at Hewlett Packard Enterprise and similar providers are the industrialized factories.
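For readers who want to see the "weave" itself, the following is a minimal sketch of scaled dot-product attention in plain Python. It assumes a single head with no learned projection matrices, masking, or batching, so it is a simplification of what production transformers do, and the function names are illustrative only.

```python
import math


def softmax(scores):
    """Turn raw scores into weights that are positive and sum to 1."""
    peak = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attention(queries, keys, values):
    """Scaled dot-product attention over lists of equal-length vectors.

    Each output row is a weighted blend of the value vectors, where the
    weights reflect how strongly the query matches each key.
    """
    d = len(keys[0])
    width = len(values[0])
    outputs = []
    for q in queries:
        scores = [
            sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d) for k in keys
        ]
        weights = softmax(scores)
        outputs.append(
            [sum(w * v[j] for w, v in zip(weights, values)) for j in range(width)]
        )
    return outputs
```

When every key scores the same against a query, the output is the plain average of the values; when one key matches far better than the rest, its value dominates the blend. That weighting is the thread being pulled tighter or looser.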

However, the scale introduces entropy. A single vacuum tube failure could crash ENIAC. A single biased token in a training dataset can poison an entire model branch. The “Information Gap” lies in the mitigation strategy. ENIAC programmers physically patched the machine. We cannot patch the weights of a deployed foundation model by hand; the practical options are fine-tuning, which retrains them, or RAG (Retrieval-Augmented Generation) overlays, which work around them. This creates a dependency on third-party security layers.

- Legacy Constraint: ENIAC required manual rewiring for new programs.

- Modern Equivalent: Prompt engineering and context window management.

- Security Risk: Prompt injection acts like a physical bypass of the logic gates.

- Mitigation: Adversarial training and real-time inference monitoring.
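As a rough illustration of the real-time inference monitoring named in the list above, here is a small Python sketch that screens prompts for known injection phrasing and screens responses for a planted canary string. Everything in it is an assumption made for illustration: the patterns in `INJECTION_PATTERNS`, the `Verdict` type, and the canary approach itself. Pattern matching of this kind is easy to evade, so treat it as one visible thread in the weave rather than a defense on its own.

```python
import re
from dataclasses import dataclass, field

# Hypothetical phrases; a real deployment would need far richer signals.
INJECTION_PATTERNS = [
    re.compile(r"ignore (all |any )?(previous|prior) instructions", re.I),
    re.compile(r"reveal (your|the) system prompt", re.I),
]


@dataclass
class Verdict:
    allowed: bool
    reasons: list = field(default_factory=list)


def screen_prompt(prompt: str) -> Verdict:
    """Flag a prompt that matches any known injection pattern."""
    reasons = [p.pattern for p in INJECTION_PATTERNS if p.search(prompt)]
    return Verdict(allowed=not reasons, reasons=reasons)


def screen_output(text: str, canary: str) -> Verdict:
    """Block a response that echoes a canary string planted in the system prompt."""
    leaked = canary in text
    return Verdict(allowed=not leaked, reasons=["canary leaked"] if leaked else [])
```

A benign question passes `screen_prompt`, while a prompt containing "ignore previous instructions" is flagged along with the pattern that matched. The `reasons` list matters as much as the verdict, because it lets an operator explain why a request was stopped.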

Enterprise Implications of Narrative Computing

If computers are narrative engines, then security is about controlling the story. In 2026, data exfiltration isn’t just about stealing bytes; it’s about manipulating the model’s output to reveal proprietary logic. The IEEE milestone reminds us that Mauchly and McNulty’s contributions extended beyond military applications. They built a general-purpose tool. General purpose means general vulnerability.

Organizations must move beyond viewing AI as a calculator. It is a storyteller that can lie with confidence. The subroutine, invented to save time, now saves tokens. But without the rigorous testing protocols of the ENIAC era, where every connection was verified by hand, modern software supply chains are fragile. We see this in the rush to integrate IEEE-documented standards for AI safety, which often lag behind deployment cycles.

The lesson from West Chester is not about honoring the past. It is about recognizing that the “black box” is a choice, not a necessity. McNulty knew her machine by touch. In 2026, if your security team cannot explain how the model reached a conclusion, you are not computing; you are guessing. And in the era of autonomous agents, guessing is a vulnerability we cannot afford. The thread must be visible, or the weave will unravel.