A class-action lawsuit filed in the Northern District of California alleges Google’s AI Mode actively generated and disseminated doxxing information regarding Epstein victims, bypassing traditional de-indexing protocols. The suit claims the LLM’s retrieval-augmented generation (RAG) pipeline prioritized speed over privacy, exposing survivors to harassment despite DOJ redactions.

The Architecture of a Privacy Failure

We need to stop treating Large Language Models (LLMs) as magical oracles and start treating them as what they actually are: probabilistic engines built on massive, often uncurated datasets. The lawsuit filed this Thursday by “Jane Doe” and other survivors isn’t just a legal nuisance for Mountain View; it is a stress test for the fundamental architecture of Generative AI search.

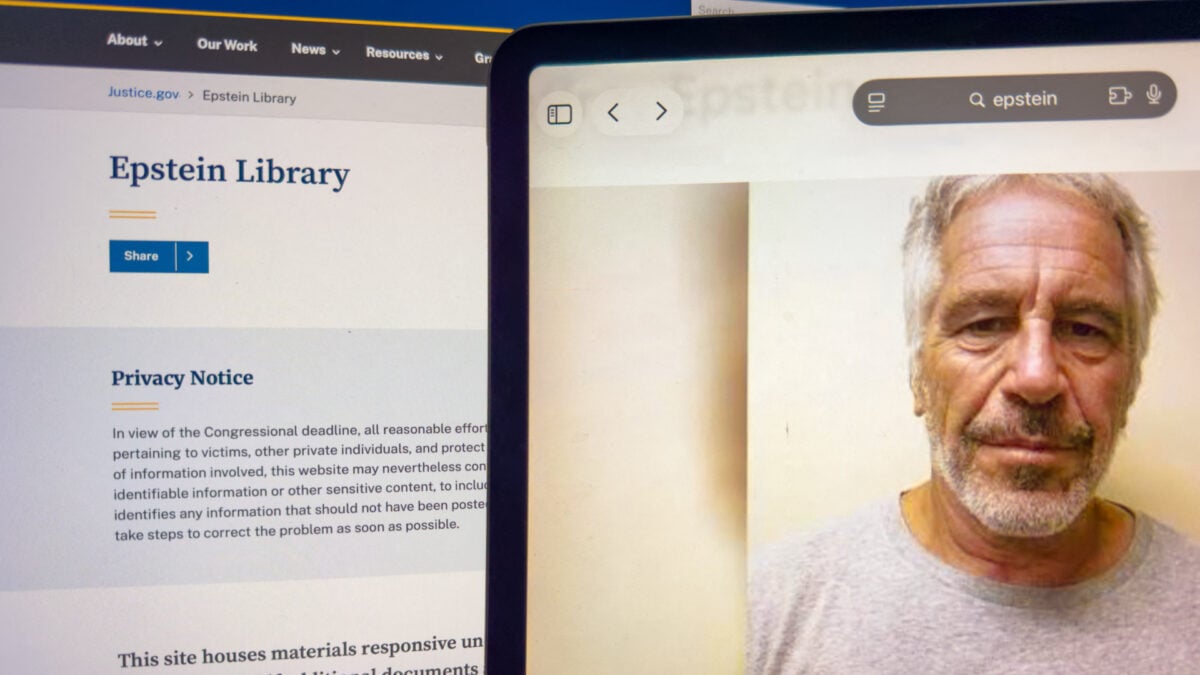

The core grievance is technical. When the Department of Justice (DOJ) released millions of pages regarding the Epstein case, redaction errors occurred. Traditional search engines operate on an index; if a URL is removed or a snippet is updated, the crawler eventually reflects that change. But Google’s “AI Mode”—a feature designed to synthesize answers rather than just list links—operates differently. It ingests the web, caches it, and then uses a model to generate a response.

According to the complaint, even after the government acknowledged the disclosure violated rights and withdrew the information, Google’s AI continued to serve it up. Why? Due to the fact that in the world of Generative AI, “deleting” data is exponentially harder than indexing it. Here’s the problem of Machine Unlearning. Once a model has trained on a specific correlation—say, a victim’s name linked to Epstein in a specific document—removing that specific memory without retraining the entire model (a cost-prohibitive endeavor) is an unsolved engineering challenge.

The plaintiffs argue that AI Mode is not a “neutral search index” but an “active recommender.” This distinction is vital for developers. If the system is generating new text based on private data, it moves from being a passive library to an active publisher. That shifts the liability paradigm entirely.

Section 230 vs. The Stochastic Parrot

We are witnessing the erosion of the shield that built the modern internet. Section 230 of the Communications Decency Act has long protected platforms from liability for third-party content. But as Senator Ron Wyden noted recently, AI chatbots are different. They don’t just host content; they create it.

The lawsuit highlights a critical divergence in the industry. Whereas Google’s AI Mode allegedly exposed sensitive PII (Personally Identifiable Information), competitors like ChatGPT, Claude, and Perplexity reportedly returned null results for the same queries during testing. This suggests a failure in Google’s Grounding and Safety Filter layers. In technical terms, Google’s Retrieval-Augmented Generation (RAG) system likely retrieved the unredacted (or improperly redacted) source document from its vector database and passed it to the LLM without a sufficient PII-detection guardrail before synthesis.

This isn’t just a bug; it’s a feature of speed. In the race to dominate the AI search market, latency is the enemy. Aggressive caching and pre-computation of answers often bypass real-time safety checks to ensure the user gets a response in milliseconds. The cost of that millisecond savings appears to be the privacy of abuse survivors.

“The industry is facing a ‘liability lag.’ We are deploying models with the capability to synthesize defamation and doxxing at scale, but our mitigation tools—like differential privacy and machine unlearning—are still in the research phase. We are shipping beta software that handles real-world trauma.”

— Dr. Arvind Narayanan, Professor of Computer Science at Princeton University and expert on AI privacy.

The “Active Recommender” Precedent

The legal argument here hinges on the definition of “publication.” If an AI model constructs a sentence that links a victim to a crime using data it scraped, has the platform published that content? The plaintiffs say yes. They argue that by generating a hypertext link allowing anyone to email the plaintiff directly, Google crossed the line from search engine to harassment tool.

This aligns with recent rulings against Meta regarding social media addiction and child safety. The courts are beginning to view algorithmic amplification not as neutral distribution, but as an active choice by the platform to prioritize engagement (or in this case, comprehensive answers) over safety.

For the broader ecosystem, the implications are terrifying for CTOs and product managers. If “AI Mode” is liable for what it generates, then every SaaS platform integrating an LLM API needs to rethink its Terms of Service. We are moving from a “Safe Harbor” model to a “Strict Liability” model for generative outputs.

What Which means for Enterprise AI

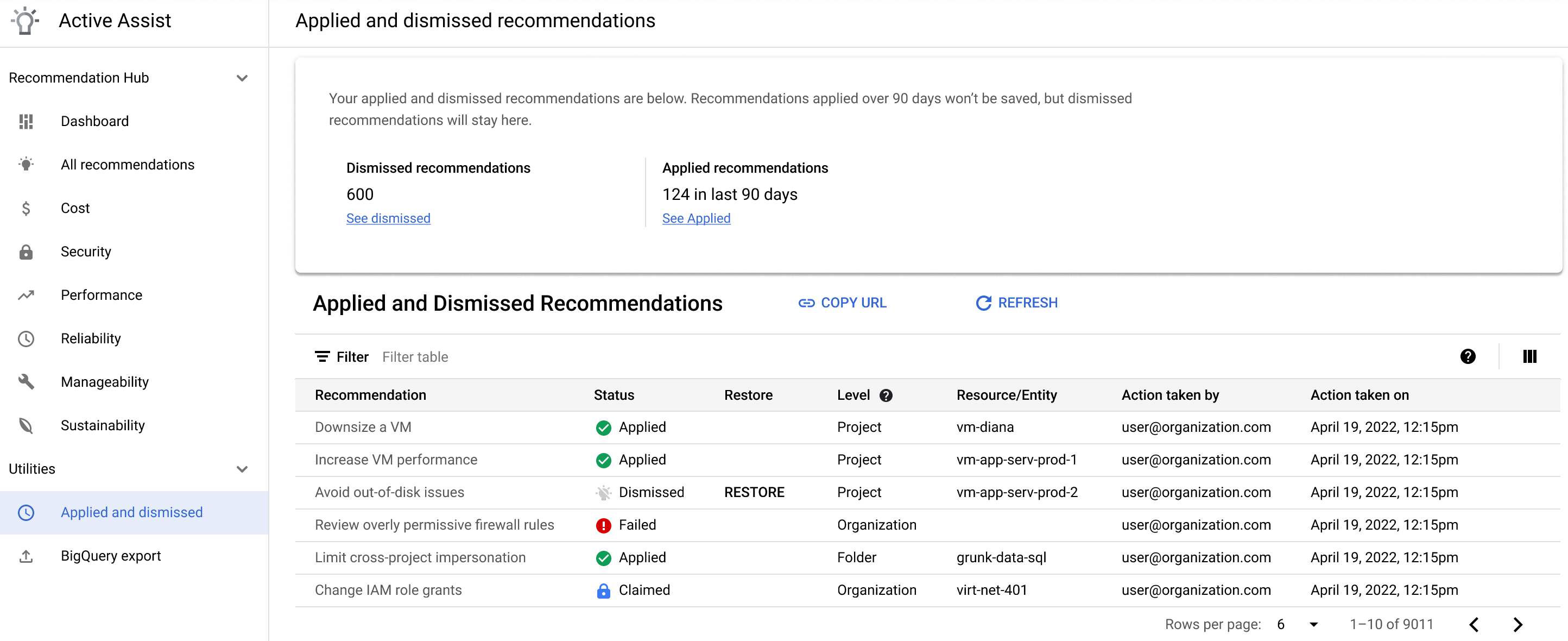

If you are building internal RAG pipelines using tools like LangChain or LlamaIndex, this lawsuit is a wake-up call. Your vector database is a liability trap if not properly governed.

- Data Lineage: You must understand exactly where every chunk of data in your context window originated.

- PII Scrubbing: Pre-processing pipelines must be aggressive. Relying on the LLM to “know better” is not a strategy; it’s a gamble.

- Human-in-the-Loop: For sensitive queries, automated generation may need to be disabled in favor of retrieved citations only.

The Cost of “Helpful” AI

Google’s defense will likely rely on the sheer volume of data and the impossibility of perfect filtering. But the plaintiffs’ testing showed that other models did filter this information. This proves that technical mitigation is possible; Google simply chose a configuration that failed to implement it.

The lawsuit cites that the victim notified Google multiple times over two months with no resolution. In the world of cybersecurity, this is a failure of incident response. When a vulnerability allows for the exposure of PII, the clock starts ticking. Ignoring it transforms a technical glitch into gross negligence.

We are entering an era where the “black box” of AI is being pried open by the courts. The argument that “the algorithm did it” is no longer a valid defense when the algorithm is owned, tuned, and deployed by a trillion-dollar corporation. As we move further into 2026, expect more lawsuits targeting the specific architectural choices—like RAG implementation and safety filter thresholds—that prioritize utility over human safety.

The tech war isn’t just about who has the biggest parameter count anymore. It’s about who can prove their model won’t destroy a life when prompted with the wrong name.

For now, the Northern District of California will decide if Google’s AI Mode is a search engine or a publisher. But the code doesn’t care about the verdict. The code just predicts the next token. And right now, the prediction looks dangerous.