A journalist has been convicted for failing to moderate and delete offensive third-party comments on their social media post. This ruling signals a dangerous shift in digital liability, effectively redefining individual social media profiles as editorial spaces where the account holder bears full responsibility for all user-generated content.

For years, the tech world operated under a comfortable, if fuzzy, assumption: the platform provides the pipes, and the user provides the content. We called it “Safe Harbor.” But as we move through April 2026, that harbor is drying up. This conviction isn’t just a legal anomaly; it is a systemic failure of the interface between law and code. When a court decides that a journalist is responsible for the toxicity of their comment section, it is essentially demanding that every individual creator possess the moderation capabilities of a mid-sized tech firm.

It is a mathematical impossibility.

The Moderation Gap: Why Human Oversight Fails at Scale

To understand why this ruling is technically absurd, we have to look at the telemetry of a viral post. When a piece of content hits the algorithmic “sweet spot,” the influx of comments doesn’t grow linearly; it grows exponentially. A journalist might see 10 comments in the first hour and 10,000 in the second. The latency between a comment being posted and a human moderator seeing it is the “Danger Zone.”

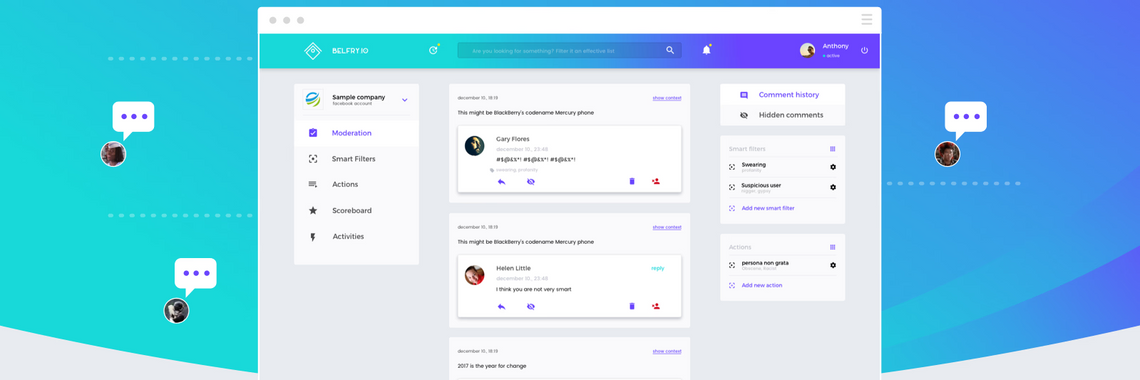
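To put rough numbers on that gap, here is a back-of-the-envelope sketch in Python. The arrival figures are the ones from the example above (10 comments in hour one, 10,000 in hour two). The review speed of 6 comments per minute for a single person is an assumption picked for illustration, not a measured value, and `review_backlog` is a hypothetical helper name.

```python
def review_backlog(arrivals_per_hour, reviews_per_minute):
    """Return a list of (backlog, hours_to_clear) after each hour.

    arrivals_per_hour: comments arriving in each successive hour.
    reviews_per_minute: assumed sustained review speed of one person.
    """
    capacity_per_hour = reviews_per_minute * 60
    backlog = 0
    history = []
    for arrivals in arrivals_per_hour:
        # Unreviewed comments carry over; unused capacity cannot be banked.
        backlog = max(0, backlog + arrivals - capacity_per_hour)
        history.append((backlog, backlog / capacity_per_hour))
    return history


if __name__ == "__main__":
    results = review_backlog([10, 10_000], reviews_per_minute=6)
    for hour, (backlog, hours) in enumerate(results, start=1):
        print(f"hour {hour}: {backlog} unreviewed, {hours:.1f} h to clear")
```

Under these assumptions the reviewer ends the second hour with 9,640 unreviewed comments, which is more than a full day of nonstop reading even if no further comments arrive.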

Most social media platforms provide basic tools: a “Report” button and a rudimentary keyword filter. But keyword filters are brittle. They rely on regex (regular expressions) that are easily bypassed by “leetspeak” or subtle semantic shifts. To truly moderate a high-traffic thread, you need a sophisticated pipeline: an LLM-based toxicity classifier, a sentiment analysis engine, and a human-in-the-loop (HITL) system to handle edge cases.
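The brittleness is easy to demonstrate. The sketch below is a minimal illustration, not any platform's actual filter: the two-word blocklist and the substitution table are hypothetical and deliberately tiny. It shows a literal regex match missing a leetspeak variant, a normalization pass catching it, and both missing a hostile sentence that contains no listed word at all.

```python
import re

# Hypothetical blocklist; real filters ship far longer lists.
BLOCKLIST = ["idiot", "scum"]
PATTERN = re.compile(
    r"\b(" + "|".join(map(re.escape, BLOCKLIST)) + r")\b", re.IGNORECASE
)

# Common character substitutions (an illustrative subset only).
LEET_MAP = str.maketrans(
    {"0": "o", "1": "i", "3": "e", "4": "a", "5": "s", "@": "a", "$": "s"}
)


def naive_filter(text):
    """Flag text only when a blocklisted word appears literally."""
    return bool(PATTERN.search(text))


def normalized_filter(text):
    """Undo simple substitutions and drop separators before matching."""
    folded = text.lower().translate(LEET_MAP)
    folded = re.sub(r"[^a-z\s]", "", folded)  # strip dots, dashes, digits
    return bool(PATTERN.search(folded))


if __name__ == "__main__":
    samples = [
        "You are an idiot",
        "You are an 1d10t",
        "you i.d.i.o.t",
        "People like you should not be allowed near a keyboard",
    ]
    for s in samples:
        print(f"{s!r}: naive={naive_filter(s)} normalized={normalized_filter(s)}")
```

Normalization recovers the obfuscated spellings, but the last sample passes both filters. That residue is what the classifier and human-review stages are meant to handle.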

Most journalists are using a mobile app, not a professional moderation dashboard. They are fighting a war of attrition against botnets and trolls using tools designed for casual sharing, not editorial governance.

The 30-Second Verdict: Technical Constraints vs. Legal Expectations

- The Expectation: Real-time removal of all “offensive” content.

- The Reality: API rate limits and notification lag make real-time manual moderation impossible for viral threads.

- The Tooling Gap: Platforms provide “Community Standards” for the site, but leave the legal liability of “editorial control” to the individual user.
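The rate-limit constraint in the second point can be estimated with simple arithmetic. This is a sketch under stated assumptions: one API call per deletion and a fixed-window limit. The figures in the usage line (50 calls per 15-minute window) are invented for illustration and do not describe any real platform's documented policy; `minutes_to_delete` is a hypothetical helper.

```python
import math


def minutes_to_delete(flagged, calls_per_window, window_minutes):
    """Lower bound on wall-clock minutes needed to delete `flagged` comments.

    Assumes one API call per deletion and a fixed-window rate limit.
    Detection time and notification lag are ignored, so real exposure
    would be longer.
    """
    if flagged <= 0:
        return 0
    windows_needed = math.ceil(flagged / calls_per_window)
    # The last batch goes out as soon as its window opens, so only the
    # earlier windows have to be waited out in full.
    return (windows_needed - 1) * window_minutes


if __name__ == "__main__":
    print(minutes_to_delete(500, calls_per_window=50, window_minutes=15))
```

With those illustrative numbers, 500 offending comments take at least 135 minutes to remove, even if every one of them were identified the instant it was posted.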

From Platform to Publisher: The Regulatory Pivot

This conviction represents a pivot toward “Editorial Liability.” In the traditional print world, an editor is responsible for every word in the paper. The court is now applying that same logic to a Facebook or X (formerly Twitter) thread. This ignores the fundamental architecture of the modern web, where the distinction between a “publisher” and a “host” is blurred by the very nature of the Digital Services Act (DSA) and similar global frameworks.

If a journalist is treated as a publisher of their comment section, they are essentially being told they must implement a rigorous content-filtering stack. In an enterprise environment, this would mean deploying a service like Perspective API or a custom-tuned Llama-3 instance to scan every incoming token for toxicity before it ever hits the public UI. For an individual, this is not just a financial burden; it is a technical impossibility.
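For readers who have not seen such a stack, the sketch below shows its basic shape: score each comment before publication, then publish, hold for human review, or reject. Everything here is an assumption made for illustration. `toy_score` is a crude stand-in, not a real model, and the thresholds of 0.5 and 0.9 are arbitrary. A production system would replace `toy_score` with a call to a hosted or self-run classifier.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Decision:
    comment: str
    score: float
    action: str  # "publish", "hold", or "reject"


def screen(comment: str, score_fn: Callable[[str], float],
           hold_at: float = 0.5, reject_at: float = 0.9) -> Decision:
    """Route a comment by toxicity score before it reaches the public UI."""
    score = score_fn(comment)
    if score >= reject_at:
        action = "reject"   # confident enough to block outright
    elif score >= hold_at:
        action = "hold"     # ambiguous: queue for a human reviewer
    else:
        action = "publish"
    return Decision(comment, score, action)


def toy_score(comment: str) -> float:
    """Placeholder scorer (NOT a real classifier): share of hostile words."""
    hostile = {"idiot", "scum", "trash"}
    words = comment.lower().split()
    if not words:
        return 0.0
    hits = sum(w.strip(".,!?") in hostile for w in words)
    return min(1.0, hits / len(words) * 3)


if __name__ == "__main__":
    for text in ["Great reporting, thank you",
                 "you are an idiot honestly",
                 "idiot scum"]:
        d = screen(text, toy_score)
        print(f"{d.action:8} {d.score:.2f} {d.comment}")
```

The design point is the middle band. Anything the scorer is unsure about still lands on a person's desk, which is exactly the staffing an individual account holder does not have.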

“The legal system is attempting to impose 20th-century editorial standards on 21st-century distributed architectures. Expecting a user to moderate a viral thread in real-time without professional-grade API access is like asking someone to stop a flood with a tea strainer.” — Marcus Thorne, Senior Cybersecurity Architect and Open-Source Advocate.

This creates a massive “chilling effect.” If the cost of engagement is a potential criminal record or a heavy fine, the rational move for any professional is to disable comments entirely. We are witnessing the death of the digital town square, replaced by a series of one-way broadcasts.

The Liability Matrix: US vs. EU Frameworks

The divergence in how different jurisdictions handle this is stark. Whereas the US has historically leaned on Section 230 of the Communications Decency Act to protect platforms (and by extension, users) from liability for third-party content, the EU is moving toward a more nuanced, yet more punitive, “Notice and Action” regime.

| Feature | Section 230 (US Model) | DSA / Editorial Model (EU/Italy) | The “Journalist’s Nightmare” |
|---|---|---|---|
| Primary Liability | Platform is generally immune. | Platform must act on “actual knowledge.” | User is treated as the primary editor. |
| Moderation Duty | Optional/Good Faith. | Mandatory for “Very Large Platforms.” | Absolute for the account holder. |
| Failure Outcome | Rarely leads to user conviction. | Platform fines (up to 6% turnover). | Personal legal conviction/fines. |

The Architectural Solution: Decentralized Moderation

If we want to protect free speech and journalistic inquiry, we need to move away from the “Centralized God-Mode” of moderation. The current model relies on a few massive companies (Meta, Google, X) providing the tools. If those tools are insufficient, the user suffers the legal consequences.

The answer lies in decentralized protocols and client-side filtering. Imagine a world where moderation isn’t a binary “delete or keep” performed by the author, but a customizable filter applied by the reader. Using decentralized storage and identity, users could subscribe to “moderation lists” curated by trusted third parties. This shifts the burden from the creator to the consumer and the community, mirroring how the early web functioned before the era of algorithmic silos.
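A minimal sketch of reader-side filtering follows. It assumes a very simple data model: a moderation list is just a set of blocked author IDs and comment IDs published by a curator. The class names, fields, and sample IDs are all hypothetical, and decentralized storage and identity are left out entirely.

```python
from dataclasses import dataclass, field
from typing import Iterable, List, Set


@dataclass(frozen=True)
class Comment:
    comment_id: str
    author_id: str
    text: str


@dataclass
class ModerationList:
    """A curator's published list of authors and comments to hide."""
    curator: str
    blocked_authors: Set[str] = field(default_factory=set)
    blocked_comments: Set[str] = field(default_factory=set)

    def hides(self, comment: Comment) -> bool:
        return (comment.author_id in self.blocked_authors
                or comment.comment_id in self.blocked_comments)


def visible_to_reader(thread: Iterable[Comment],
                      subscriptions: Iterable[ModerationList]) -> List[Comment]:
    """Apply the reader's subscribed lists; the thread itself is untouched."""
    subs = list(subscriptions)
    return [c for c in thread if not any(m.hides(c) for m in subs)]


if __name__ == "__main__":
    thread = [
        Comment("c1", "alice", "Thoughtful piece."),
        Comment("c2", "troll42", "Abusive remark."),
        Comment("c3", "bob", "I disagree, and here is why."),
    ]
    curated = ModerationList("example-curator", blocked_authors={"troll42"})
    print([c.comment_id for c in visible_to_reader(thread, [curated])])
    print([c.comment_id for c in visible_to_reader(thread, [])])
```

Nothing is deleted in this model. Two readers with different subscriptions see different views of the same thread, and the author never has to act as the editor of record.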

The industry also needs to standardize “Moderation APIs” for individual creators. If a journalist is legally responsible for their comments, platforms should be mandated to provide them with the same NPU-accelerated toxicity filters that the platforms use internally. You cannot hold a user to a standard of care that the platform’s own API doesn’t support.

The Bottom Line for Digital Creators

Until the law catches up with the latency of the internet, the only safe bet is a “Closed Loop” strategy. For those in high-risk jurisdictions, the advice is simple: disable comments or move to a platform that offers robust, automated moderation tools.

We are entering an era where a single unmoderated comment can be a legal liability. In the race between the speed of a troll’s keyboard and the speed of a journalist’s delete button, the law has just decided that the journalist must never lose. That is not a victory for civility; it is a victory for censorship by proxy.

For a deeper dive into the technical implementation of toxicity classifiers, I recommend exploring the latest research on arXiv regarding transformer-based sentiment analysis and the ongoing debates at the Electronic Frontier Foundation regarding digital speech.