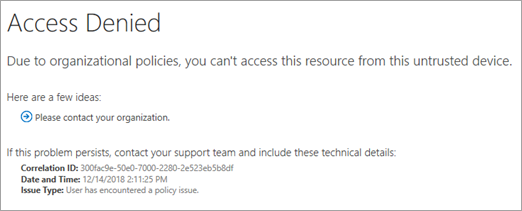

A 403 Access Blocked error on Financial Times infrastructure points to aggressive, AI-driven security filtering in use as of March 2026. The incident highlights the shift toward behavioral biometrics and zero-trust architectures for protecting premium content. Request ID 9e30fac51b27c4f6 suggests automated mitigation against perceived misuse, reflecting broader industry trends in adversarial testing.

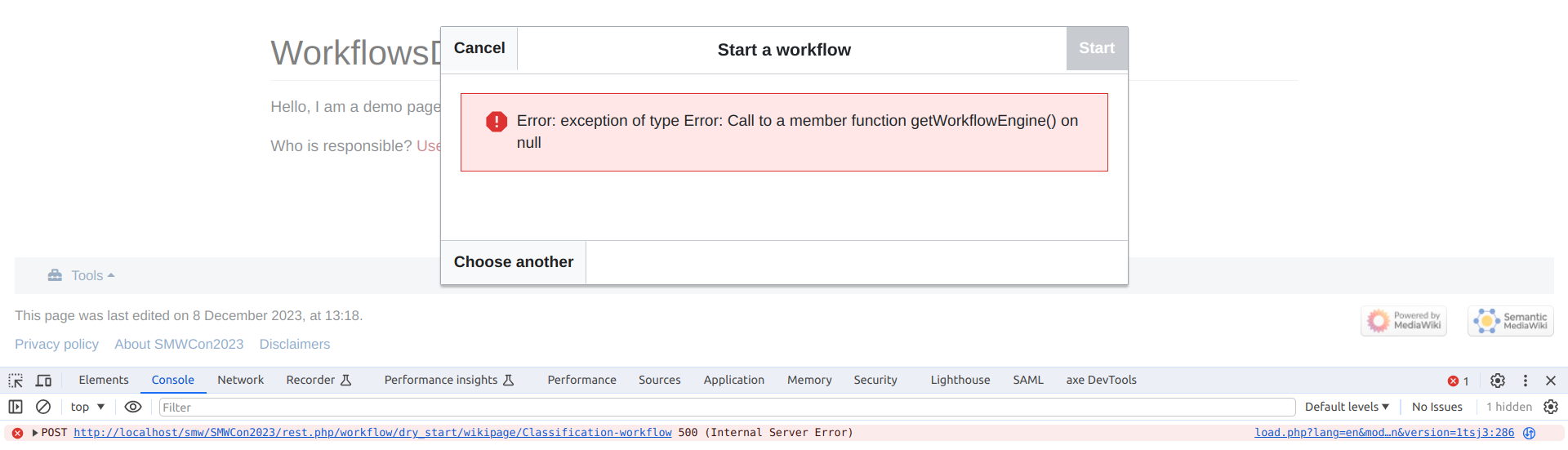

The Debug Panel as a Security Artifact

When a user encounters a standard 403 Forbidden status code, the instinct is to refresh the page. In the landscape of 2026, however, the metadata surrounding that rejection tells a more compelling story than the denial itself. The error payload generated by the Financial Times infrastructure includes a debug panel that exposes the Request ID and Status Code directly to the client. While intended for support resolution, this transparency walks a fine line between user empowerment and information leakage. In previous generations of web security, hiding server topology was paramount. Today, the confidence to expose a Request ID implies a backend robust enough to handle reconnaissance without fear of enumeration attacks.

This specific error instance, triggered on March 27, 2026, is not merely a glitch; it is a signal of the underlying security posture. The message cites “potential misuse,” a vague term that often masks the operation of heuristic algorithms analyzing user behavior in real-time. Unlike static rate-limiting of the early 2020s, modern Web Application Firewalls (WAFs) integrate session intelligence. They track cursor movement, typing cadence, and navigation patterns to distinguish between a human subscriber and an automated scraper. When the system flags “misuse,” it often means the behavioral biometrics deviated from the established baseline for a legitimate user session.
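The contrast between static rate-limiting and behavioral scoring can be sketched in a few lines of Python. This is an illustrative toy, not the Financial Times' actual detection logic: the signal names, weights, and thresholds below are all assumptions chosen for the example.

```python
from dataclasses import dataclass


@dataclass
class SessionSignals:
    """Hypothetical per-session measurements; field names are illustrative."""
    requests_per_minute: float
    interval_stddev: float  # seconds; near zero suggests machine-regular timing
    pointer_events: int     # cursor or touch events observed in the session
    pages_visited: int


def static_rate_limit(signals: SessionSignals, max_rpm: float = 60.0) -> bool:
    """Early-2020s style check: block only when a fixed request rate is exceeded."""
    return signals.requests_per_minute > max_rpm


def misuse_score(signals: SessionSignals) -> float:
    """Combine several weak signals into one score (weights are assumptions)."""
    score = 0.0
    if signals.requests_per_minute > 30:
        score += 0.4
    if signals.interval_stddev < 0.05:
        score += 0.3
    if signals.pages_visited > 0 and signals.pointer_events == 0:
        score += 0.3
    return score


def decide(signals: SessionSignals, threshold: float = 0.6) -> str:
    """Return 'block' when the combined score reaches the threshold."""
    return "block" if misuse_score(signals) >= threshold else "allow"


scraper = SessionSignals(requests_per_minute=40, interval_stddev=0.01,
                         pointer_events=0, pages_visited=25)
reader = SessionSignals(requests_per_minute=4, interval_stddev=2.5,
                        pointer_events=180, pages_visited=6)
```

In this sketch the `scraper` session stays under the fixed rate limit yet is still blocked by the combined score, while the `reader` session passes both checks. That is the sense in which a "misuse" flag reflects deviation from a behavioral baseline rather than a single tripped rule.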

Elite Personas and the Adversarial Testing Loop

The cat-and-mouse game between security engineers and adversarial actors has evolved into a sophisticated discipline known as AI Red Teaming. As noted in recent industry analysis regarding The Elite Hacker’s Persona, the modern threat actor exhibits strategic patience, waiting for AI models to hallucinate or security protocols to drift. This patience is met by equally deliberate defense mechanisms. Security teams are no longer just patching vulnerabilities; they are simulating elite adversarial personas to harden systems before deployment.

The analysis reconstructs, through a process of logical deduction, the elite hacker’s persona to explain their strategic patience in the AI era. This de-mystification is crucial for developing countermeasures that anticipate rather than react.

This philosophical shift is visible in the hiring trends of major security firms. Roles such as the Distinguished Engineer – AI-Powered Security Analytics at Netskope emphasize architecting next-generation analytics that can separate the signal of an attack from the noise of legitimate traffic. The 403 error seen on the FT platform is likely the output of such an analytics engine. It is probably not a static rule blocking an IP address but a dynamic decision made by an engine weighing many variables in milliseconds. The presence of these roles suggests that access control is now a data science problem, not just a networking configuration.

Information Leakage and the Zero Trust Boundary

Returning to the debug panel exposed in the error message: Reason: Access Blocked and Request ID: 9e30fac51b27c4f6. In a strict Zero Trust architecture, every request is verified, but the response should minimize surface area. Providing a Request ID is standard practice for tracing logs, but coupling it with a clear “Access Blocked” reason can aid adversaries in mapping the rule sets of a WAF. If an attacker receives this specific response, they know they triggered a misuse detector rather than a simple IP ban. This feedback loop allows them to adjust their scraping algorithms to mimic human behavior more closely.
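One way to narrow that feedback loop is to return only an opaque Request ID to the client and keep the block reason in server-side logs. The sketch below illustrates the idea; the function name, field names, and rule identifier are hypothetical and are not taken from the FT's implementation.

```python
import secrets


def build_block_response(internal_reason: str, rule_id: str):
    """Split a block decision into a minimal client body and a detailed log record.

    All field names here are assumptions made for illustration.
    """
    # 8 random bytes rendered as 16 hex characters, opaque to the client.
    request_id = secrets.token_hex(8)

    # The client learns only that access was denied and how to reference the event.
    client_body = {
        "status": 403,
        "message": "Access denied",
        "request_id": request_id,
    }

    # The reason and the rule that fired stay on the server, keyed by the same ID.
    log_record = {
        "request_id": request_id,
        "reason": internal_reason,
        "rule_id": rule_id,
    }
    return client_body, log_record


body, log = build_block_response("behavioral_misuse", "hypothetical-rule-17")
```

Support staff can still look up `log["reason"]` from the ID a user quotes, while an automated client cannot tell a behavioral block from an IP ban by reading the response alone.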

Organizations like Microsoft AI are staffing Principal Security Engineer roles specifically to manage these complexities within AI ecosystems. The challenge lies in balancing usability with security. If the system is too aggressive, legitimate subscribers face friction, leading to churn. If it is too passive, content is stripped by bots. The 2026 standard appears to lean towards friction, prioritizing asset protection over seamless access when anomalies are detected. This aligns with the broader market dynamic where digital content is treated as a high-value asset requiring fortress-like protection.

Comparative Access Control Mechanisms

| Feature | Legacy WAF (2020-2023) | AI-Driven Security (2026) |
|---|---|---|
| Detection Method | Signature-based, IP Reputation | Behavioral Biometrics, Heuristics |
| Decision Model | Static Rule Matching | Real-time Probabilistic Analysis |
| False Positives | High (Collateral Blocking) | Reduced via Contextual Awareness |
| Debug Info | Generic 403 Messages | Traceable Request IDs for Support |

The Human Element in Automated Defense

Despite the automation, the human element remains critical. The error page directs users to help.ft.com, indicating that final resolution often requires human intervention. This hybrid model is essential. AI can flag the anomaly, but a human support agent must verify the intent. This workflow mirrors the requirements seen in job postings for Cybersecurity Subject Matter Experts, where clearance and citizenship requirements highlight the sensitivity of managing access to critical information infrastructure. Analysts in the security operations center (SOC) are no longer just watching screens; they are adjudicating the edge cases where algorithms hesitate.

The rise of adversarial testing roles, such as the AI Red Teamer, underscores the proactive nature of modern defense. These professionals attempt to bypass the very systems generating the 403 errors in order to identify weaknesses. Their work ensures that the “potential misuse” detection is robust against evolving attack vectors. In 2026, security is not a state of being but a continuous process of validation and adaptation. The error page is not a dead end; it is a checkpoint in a living, breathing security ecosystem.

The 30-Second Verdict

- Incident Type: Automated Access Control Trigger (403 Forbidden).

- Primary Cause: Heuristic detection of potential misuse or anomalous behavior.

- Security Posture: High transparency (Debug Panel enabled) suggests confidence in backend tracing.

- Industry Trend: Shift from static blocking to AI-driven behavioral analysis.

- Actionable Insight: Users facing this error should avoid rapid refreshing, as it reinforces the “misuse” signal.
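For automated clients, the last point translates into spacing out retries rather than hammering the endpoint. The following is a minimal sketch of a capped exponential delay schedule; the default values are arbitrary assumptions, and the source does not say whether a block of this kind lifts on its own, so contacting support may still be required.

```python
def refresh_delays(attempts: int = 4, base_seconds: float = 5.0,
                   factor: float = 2.0, cap_seconds: float = 300.0) -> list[float]:
    """Return wait times (in seconds) before each retry, growing by `factor` up to a cap."""
    delays = []
    delay = base_seconds
    for _ in range(attempts):
        delays.append(min(delay, cap_seconds))
        delay *= factor
    return delays


schedule = refresh_delays()
```

A caller would sleep for each value in `schedule` before the next attempt and stop retrying once the list is exhausted.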

The access error encountered on the Financial Times platform serves as a microcosm of the 2026 cybersecurity landscape. It is a world where access is never assumed, every request is scrutinized by intelligent algorithms, and the boundary between user and attacker is defined by behavior rather than credentials. For the technologist, the debug panel offers a glimpse into the machinery of trust. For the enterprise, it represents the necessary friction of protecting intellectual property in an age of automated exploitation. The system worked as designed: it stopped, it logged, and it demanded verification. In the architecture of modern security, that is the only success metric that matters.