From YAML Frustration to Local LLM Automation: A Home Assistant Breakthrough

A veteran tech analyst successfully fine-tuned Qwen2.5-Coder-7B-Instruct on a Lenovo ThinkStation PGX to generate functional Home Assistant automations from natural language prompts, bypassing the complexities of YAML scripting. The result demonstrates the viability of running sophisticated AI locally, even on modest hardware, and offers a potential paradigm shift for smart home customization.

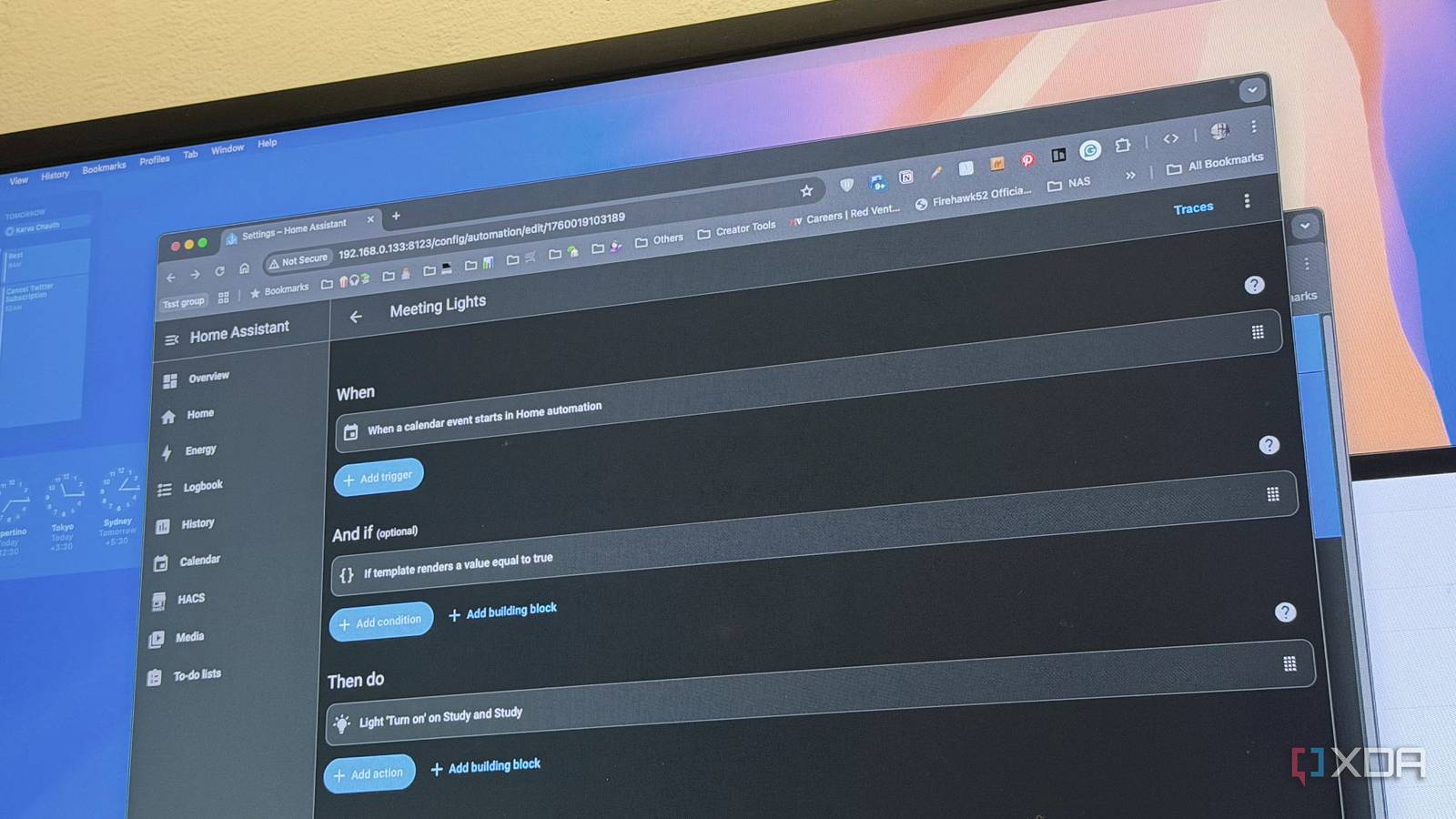

The perennial pain point for Home Assistant enthusiasts isn’t the platform’s power – it’s the configuration. YAML, while flexible, demands precision. Cloud-based LLMs often stumble on the nuances of Home Assistant’s entity IDs and action calls. The solution, as demonstrated by this project, isn’t necessarily *more* parameters, but targeted training. The key isn’t just having a large language model; it’s having one that understands the specific dialect of your smart home.
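To make the entity ID problem concrete, here is a minimal sketch of the kind of check a generated automation has to pass. It is an illustration rather than part of the project: the entity names, the `KNOWN_ENTITIES` set, and both helper functions are hypothetical, and the automation is shown as the dictionary you would get after parsing the YAML.

```python
# Hypothetical entity registry; a real one would come from your own
# Home Assistant instance.
KNOWN_ENTITIES = {"binary_sensor.hallway_motion", "light.hallway"}

# A generated automation as it might look after YAML parsing (illustrative).
automation = {
    "alias": "Hallway light on motion",
    "triggers": [
        {"trigger": "state", "entity_id": "binary_sensor.hallway_motion", "to": "on"}
    ],
    "actions": [
        {"action": "light.turn_on", "target": {"entity_id": "light.hallway"}}
    ],
}


def referenced_entities(node):
    """Recursively collect every value stored under an 'entity_id' key."""
    found = set()
    if isinstance(node, dict):
        for key, value in node.items():
            if key == "entity_id":
                # entity_id may be a single string or a list of strings.
                found.update([value] if isinstance(value, str) else value)
            else:
                found |= referenced_entities(value)
    elif isinstance(node, list):
        for item in node:
            found |= referenced_entities(item)
    return found


def unknown_entities(automation, known):
    """Return, sorted, the entity IDs the automation uses but the registry lacks."""
    return sorted(referenced_entities(automation) - set(known))


print(unknown_entities(automation, KNOWN_ENTITIES))
```

A model that invents a plausible but nonexistent ID such as `light.hall` would show up in the returned list, which is exactly the failure mode targeted training is meant to reduce.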

Why 7B Parameters Are Enough (For Now)

The choice of Qwen2.5-Coder-7B-Instruct wasn’t arbitrary. While larger models like Qwen-3.5-9B garner attention for benchmark scores, the 7B variant strikes a balance between capability and accessibility. It already exhibits strong code generation skills, which matter for YAML output. As documented on Hugging Face, Qwen2.5-Coder-7B-Instruct performs well on HumanEval, a coding benchmark, and demonstrates proficiency in structured code tasks. This pre-existing foundation significantly reduces the training burden. The “Instruct” component is equally vital; the model is already instruction-tuned, making it receptive to prompts like “create an automation that…”

The hardware underpinning this project is equally noteworthy. The Lenovo ThinkStation PGX, equipped with a GB10 GPU and 128GB of unified memory, isn’t a consumer-grade machine. However, it highlights a critical point: fine-tuning benefits immensely from ample memory. Unlike a QLoRA setup, which quantizes the base model so that training fits in limited memory, the PGX had room to train in BF16 precision, preserving model fidelity. This is a significant advantage, as quantization can introduce artifacts that degrade performance. Even for those without a PGX, the resulting 4-bit quantized model (around 4.5GB) is accessible to users with 8GB of VRAM or more.
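The memory figures can be sanity-checked with back-of-the-envelope arithmetic. The sketch below covers weight storage only; the parameter count of roughly 7.6 billion is an assumption for a "7B" model, and activations, optimizer state, and the KV cache are deliberately left out.

```python
def weight_memory_gb(num_params: float, bits_per_weight: float) -> float:
    """Approximate weight storage in GB (1 GB = 1e9 bytes).

    Excludes activations, optimizer state, and KV cache.
    """
    return num_params * bits_per_weight / 8 / 1e9


PARAMS = 7.6e9  # assumed approximate parameter count for a "7B" model

bf16_gb = weight_memory_gb(PARAMS, 16)     # 2 bytes per weight
four_bit_gb = weight_memory_gb(PARAMS, 4)  # half a byte per weight

print(f"BF16 weights:  ~{bf16_gb:.1f} GB")
print(f"4-bit weights: ~{four_bit_gb:.1f} GB")
```

Under these assumptions the BF16 weights alone come to about 15GB, before any training overhead, while an idealized 4-bit copy is under 4GB. Real 4-bit formats also store scaling factors and often keep some layers at higher precision, which is consistent with the file landing nearer the 4.5GB mentioned above.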

The Two-Stage Training Strategy: A Critical Distinction

The success of this project hinges on a two-stage training approach. Attempting to train the model on a mixed dataset of action call examples and YAML automations simultaneously proved ineffective: the YAML examples were simply outnumbered, and the model effectively treated them as noise. The first stage focused on establishing a strong understanding of Home Assistant’s domain – the entities, actions, and their relationships – using the acon96 Home-Assistant-Requests-V2 dataset. This dataset, originally designed for the home-llm project, provides a wealth of instruction-response pairs for device control.

The second stage then layered YAML generation capabilities on top of this existing knowledge. A smaller, synthetically generated dataset of 1,400 examples, created by translating Home Assistant documentation into conversational prompts and YAML responses, proved remarkably effective. This approach allowed the model to learn the *structure* of YAML automations without losing its understanding of the underlying Home Assistant ecosystem. The lower learning rate used in stage two (5e-5 vs. 2e-4) further mitigated the risk of catastrophic forgetting.
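The two-stage schedule can be sketched as a simple sequential plan in which stage two starts from the checkpoint stage one produces. This is not the author's training script: the `Stage` class, `run_stages`, and the `fake_train` stand-in are hypothetical, and only the dataset names and learning rates come from the description above.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass(frozen=True)
class Stage:
    name: str
    dataset: str          # label only; data loading is out of scope here
    learning_rate: float


STAGES = [
    Stage("domain", "acon96 Home-Assistant-Requests-V2", 2e-4),
    Stage("yaml", "synthetic YAML automations (1,400 examples)", 5e-5),
]


def run_stages(
    base_checkpoint: str,
    stages: List[Stage],
    train_fn: Callable[[str, Stage], str],
) -> str:
    """Run stages in order, feeding each stage the previous stage's checkpoint."""
    checkpoint = base_checkpoint
    for stage in stages:
        checkpoint = train_fn(checkpoint, stage)
    return checkpoint


def fake_train(checkpoint: str, stage: Stage) -> str:
    """Stand-in trainer: logs what would run and returns a new checkpoint name."""
    print(f"train {checkpoint} on {stage.dataset} at lr={stage.learning_rate}")
    return f"{checkpoint}+{stage.name}"


final = run_stages("Qwen2.5-Coder-7B-Instruct", STAGES, fake_train)
print(final)
```

In a real run, `fake_train` would be replaced by an actual fine-tuning call. The point of the structure is that the YAML data never competes with the much larger device-control data inside a single run, and the smaller stage-two learning rate limits how far the weights drift from what stage one learned.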

What This Means for Enterprise IT and Edge AI

This project isn’t just about simplifying smart home automation; it’s a microcosm of a larger trend: the democratization of AI. The ability to fine-tune a relatively small model on commodity hardware opens up possibilities for edge AI applications in various sectors. Imagine a manufacturing plant using a locally fine-tuned LLM to optimize machine parameters, or a security firm deploying a customized model for threat detection. The benefits are clear: reduced latency, enhanced privacy, and increased resilience.

“The move towards smaller, fine-tuned models is incredibly significant,” says Dr. Anya Sharma, CTO of SecureEdge AI. “We’re seeing a shift away from the ‘bigger is better’ paradigm. The ability to run sophisticated AI locally, without relying on cloud connectivity, is a game-changer for security-sensitive applications.”

Bridging the Ecosystem Gap: Open Source vs. Proprietary Platforms

The open-source nature of both Home Assistant and Qwen2.5 is crucial to this success story. Home Assistant’s open API allows for seamless integration with custom models, while Qwen2.5’s open weights enable researchers and developers to experiment and innovate without vendor lock-in. This contrasts sharply with closed ecosystems like Apple HomeKit, where customization options are limited. The ability to fine-tune a model to understand the specific nuances of Home Assistant is a powerful example of the benefits of open-source collaboration.

However, the reliance on synthetic data generation raises ethical considerations. While the synthetic dataset was created from existing documentation, it’s important to acknowledge the potential for bias and inaccuracies. As LLMs become more integrated into our lives, ensuring the quality and fairness of training data will be paramount.

The 30-Second Verdict

This project proves that you don’t need a supercomputer or a massive dataset to create a powerful, personalized AI assistant for your smart home. A 7B parameter model, fine-tuned on a modest amount of targeted data, can generate functional Home Assistant automations with minimal effort. This is a significant step towards a more accessible and customizable smart home experience.

The code and model weights are available on Hugging Face, allowing others to build upon this work. The project also underscores the importance of a strategic training approach, emphasizing the value of domain-specific knowledge and a two-stage fine-tuning process.

“The future of smart home automation isn’t about pre-built routines; it’s about empowering users to create their own,” adds Ben Carter, a senior Home Assistant developer. “This project demonstrates the potential of local LLMs to make that vision a reality.”

Further research will focus on expanding the training dataset, improving the model’s ability to handle complex Jinja2 templates, and exploring the integration of voice control. The goal is to create a truly intelligent assistant for Home Assistant that can understand and respond to natural language commands with accuracy and efficiency.