Gemini for Home Finally Achieves Usability: A Deep Dive into Google’s AI-Powered Smart Home Revamp

Google has fundamentally overhauled Gemini for Home, addressing long-standing complaints about rigid voice commands and device recognition. Rolling out in this week’s beta, the update prioritizes natural language processing (NLP) and contextual awareness, allowing users to interact with their smart homes using conversational phrasing instead of precise syntax. This isn’t merely a cosmetic change; it represents a significant shift in Google’s approach to ambient computing, moving away from exact-syntax commands and towards a truly intuitive user experience. The core of the improvement lies in a revamped LLM backend and enhanced device fingerprinting.

The LLM Parameter Scaling Problem & Google’s Solution

The initial rollout of Gemini within the Google Home ecosystem was plagued by issues stemming from the LLM’s inability to effectively handle the nuances of everyday speech. Early reports indicated a reliance on exact phrasing, leading to frustrating interactions. The problem wasn’t necessarily the size of the model – though parameter scaling is always a factor – but rather the training data and the fine-tuning process. Google appears to have addressed this by significantly expanding the dataset used to train Gemini for Home, incorporating a wider range of conversational patterns and regional dialects. Crucially, they’ve moved beyond simple keyword spotting to implement a more robust semantic understanding engine. This engine leverages a combination of transformer networks and recurrent neural networks (RNNs) to analyze the intent behind user requests, even when those requests are ambiguous or incomplete.

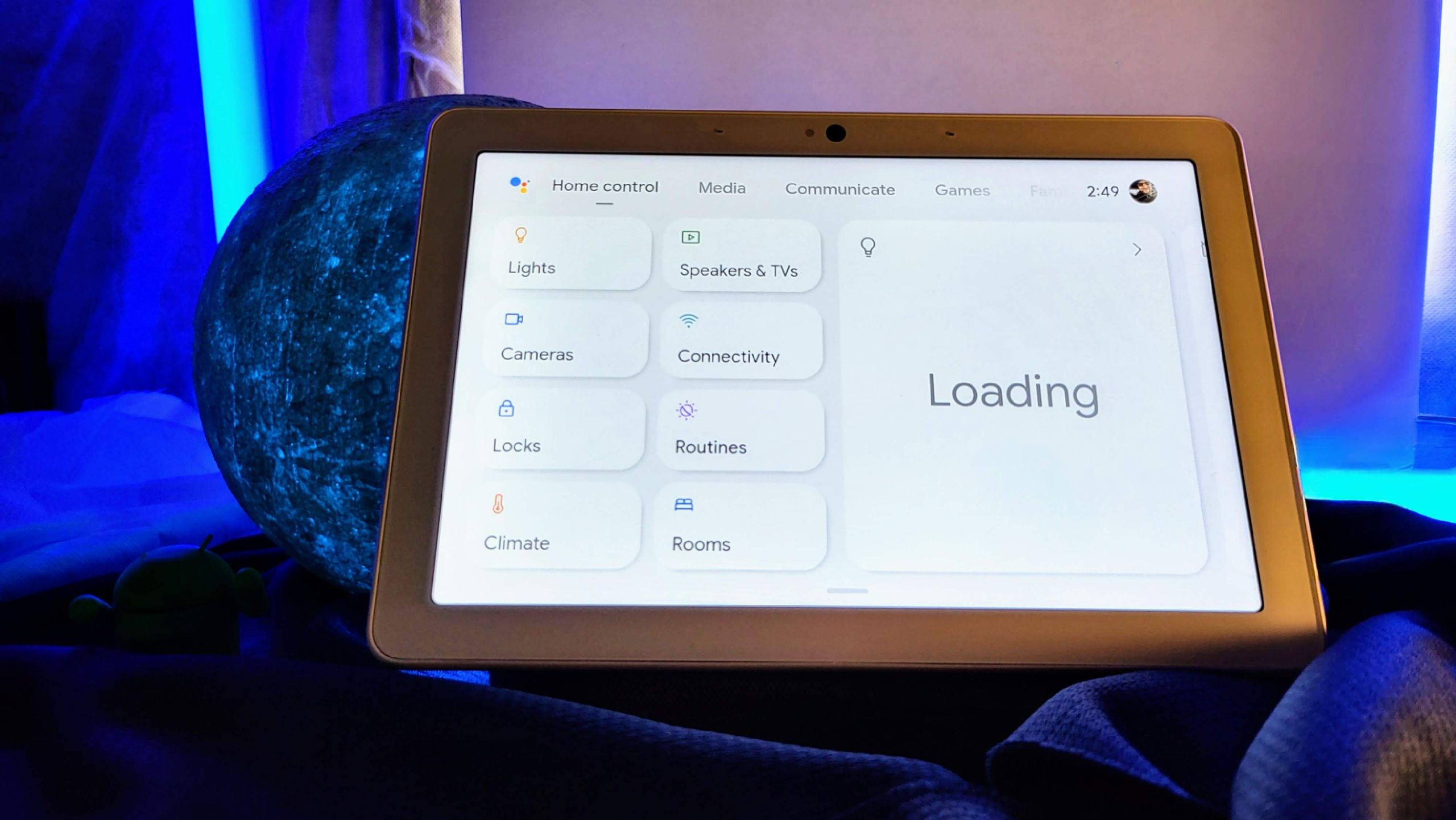

The shift is noticeable. Instead of needing to say “Turn the living room lamp to 50% brightness,” users can now simply say “Dim the lights in here a bit.” This seemingly small change represents a massive leap in usability. The system now understands “in here” as a contextual reference to the current room and “a bit” as a relative adjustment to the existing brightness level. This relies heavily on Google’s ongoing mapping of physical spaces within the home, a process that leverages data from Nest devices and user-defined room labels.
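To make the idea of contextual and relative commands concrete, here is a minimal Python sketch of how “in here” and “a bit” might be resolved. It is an illustration under stated assumptions, not Google’s implementation: the `Light` class, the `RELATIVE_STEPS` table and its step sizes, and the `dim_in_current_room` function are all hypothetical, and the speaker’s room is assumed to be known already.

```python
from dataclasses import dataclass

# Hypothetical step sizes (in percentage points) for vague quantity words.
# These values are assumptions for illustration, not Google's.
RELATIVE_STEPS = {"a bit": 10, "a little": 10, "a lot": 30}


@dataclass
class Light:
    room: str
    brightness: int  # percent, 0-100


def dim_in_current_room(lights, current_room, amount_phrase):
    """Lower every light in the speaker's room by a relative step."""
    # Unknown phrases fall back to a small, safe adjustment.
    step = RELATIVE_STEPS.get(amount_phrase, 10)
    changed = []
    for light in lights:
        if light.room == current_room:
            # Adjust relative to the existing level, clamped at 0%.
            light.brightness = max(0, light.brightness - step)
            changed.append(light)
    return changed


# "Dim the lights in here a bit", spoken in the living room.
lights = [Light("living room", 80), Light("kitchen", 60)]
changed = dim_in_current_room(lights, "living room", "a bit")
```

In this sketch the request changes only the living room light, and the new level is computed from the existing brightness rather than set to an absolute value.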

Device Recognition: Beyond Simple Naming Conventions

Another key area of improvement is device recognition. Previously, Gemini frequently confused devices with similar names – a common complaint centered around differentiating between “lamp” and “light.” Google’s solution isn’t simply about better naming conventions; it’s about building a more sophisticated device fingerprinting system. This system combines several data points, including device type, manufacturer, model number, network address, and even power consumption patterns, to create a unique identifier for each device. This allows Gemini to accurately identify devices even when users use ambiguous language.
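One plausible way to build such an identifier is to hash a canonical record of a device’s stable attributes. The Python sketch below assumes exactly that; the `device_fingerprint` function and its field names are hypothetical, and power consumption patterns are left out because a continuous signal would need to be bucketed before it could be hashed reliably.

```python
import hashlib
import json


def device_fingerprint(device_type, manufacturer, model, network_address):
    """Derive a stable identifier from a device's attributes.

    Hypothetical scheme for illustration only: normalize each field,
    serialize the record deterministically, and hash the result.
    """
    record = {
        "type": device_type.strip().lower(),
        "manufacturer": manufacturer.strip().lower(),
        "model": model.strip().lower(),
        "address": network_address.strip().lower(),
    }
    # sort_keys gives the same byte string regardless of field order.
    canonical = json.dumps(record, sort_keys=True)
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()[:16]


# Two devices a user might both call "the lamp" still get distinct IDs.
desk_lamp = device_fingerprint("light", "Acme", "L-100", "aa:bb:cc:00:00:01")
floor_lamp = device_fingerprint("light", "Acme", "L-100", "aa:bb:cc:00:00:02")
```

Because the identifier depends on attributes rather than the user-assigned name, two identically named devices remain distinguishable.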

This is particularly important in larger homes with numerous smart devices. The system now utilizes a graph database to represent the relationships between devices and their physical locations, further enhancing its ability to disambiguate requests. For example, if a user says “Turn off the light,” Gemini can consult the graph database to determine which light is most likely being referenced based on the user’s current location and the context of the conversation.
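The location-based lookup can be sketched with an in-memory graph standing in for the graph database. Everything in this Python example is an assumption made for illustration: the room layout in `ROOM_GRAPH`, the `DEVICE_ROOMS` mapping, and the rule of picking the candidate whose room is the fewest hops from the user. A real system would presumably also weigh conversational context.

```python
from collections import deque

# Hypothetical home layout: rooms are nodes, doorways are edges.
ROOM_GRAPH = {
    "living room": ["hallway"],
    "hallway": ["living room", "kitchen", "bedroom"],
    "kitchen": ["hallway"],
    "bedroom": ["hallway"],
}

# Hypothetical device-to-room placement.
DEVICE_ROOMS = {
    "floor lamp": "living room",
    "ceiling light": "kitchen",
    "bedside light": "bedroom",
}


def room_distances(graph, start):
    """Breadth-first search: number of hops from start to each room."""
    distances = {start: 0}
    queue = deque([start])
    while queue:
        room = queue.popleft()
        for neighbor in graph.get(room, []):
            if neighbor not in distances:
                distances[neighbor] = distances[room] + 1
                queue.append(neighbor)
    return distances


def resolve_device(candidates, user_room):
    """Pick the candidate device located closest to the user."""
    distances = room_distances(ROOM_GRAPH, user_room)
    return min(
        candidates,
        key=lambda name: distances.get(DEVICE_ROOMS[name], float("inf")),
    )


# "Turn off the light", spoken in the kitchen.
target = resolve_device(["floor lamp", "ceiling light", "bedside light"], "kitchen")
```

Here the kitchen’s ceiling light wins because it is zero hops from the speaker, while the other candidates are two rooms away.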

Expressive Lighting & The Rise of Semantic Control

The introduction of “expressive lighting” is a particularly compelling feature. The ability to control smart bulbs using descriptive language – “the color of the ocean,” “the glow of the moon” – represents a significant step towards a more natural and intuitive smart home experience. This functionality is powered by a new color mapping algorithm that translates semantic descriptions into specific RGB values. The algorithm leverages a vast database of color associations and user preferences to ensure that the resulting color accurately reflects the user’s intent.
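A heavily simplified version of such a mapping is a keyword lookup that blends matching colors. The Python sketch below is a stand-in under stated assumptions, not the algorithm Google describes: the `KEYWORD_COLORS` table, its RGB values, the `DEFAULT_COLOR` fallback, and the `phrase_to_rgb` function are all hypothetical, and user preferences are ignored.

```python
# Hypothetical keyword-to-RGB associations, chosen for illustration.
KEYWORD_COLORS = {
    "ocean": (0, 105, 148),
    "moon": (244, 241, 201),
    "sunset": (250, 128, 64),
}

# Assumed fallback (a warm white) when no keyword is recognized.
DEFAULT_COLOR = (255, 244, 229)


def phrase_to_rgb(phrase):
    """Translate a descriptive phrase into one RGB triple.

    Known keywords are looked up and, if several match, their
    channels are averaged with integer division.
    """
    words = [word.strip(".,!?") for word in phrase.lower().split()]
    matches = [KEYWORD_COLORS[word] for word in words if word in KEYWORD_COLORS]
    if not matches:
        return DEFAULT_COLOR
    # zip(*matches) groups the red, green, and blue channels together.
    return tuple(sum(channel) // len(matches) for channel in zip(*matches))


ocean = phrase_to_rgb("the color of the ocean")
moon = phrase_to_rgb("the glow of the moon")
```

A production system would need far more than a lookup table, but the sketch shows the basic contract: free-form text in, a concrete color value out.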

“The biggest challenge wasn’t just identifying colors, but understanding the *feeling* behind the request. We had to train the model to associate descriptive phrases with specific emotional responses, and then translate those responses into appropriate color palettes,” says Dr. Anya Sharma, CTO of AmbientAI, a competing smart home AI developer. “Google’s approach, as far as I can tell from the public demos, is surprisingly nuanced.”

Beyond lighting, the update also introduces precision controls for larger appliances, allowing users to set exact humidity levels or preheat ovens with specific temperature settings. This level of granularity is crucial for users who want to automate complex tasks or optimize their home environment for specific activities.

Ecosystem Lock-In & The Open-Source Alternative

While these improvements are welcome, it’s important to acknowledge the broader implications for the smart home ecosystem. Google’s continued investment in Gemini for Home further solidifies its position as a dominant player in the space, potentially exacerbating concerns about vendor lock-in. Users who have invested heavily in Google’s ecosystem may be less likely to consider alternatives, even if those alternatives offer superior features or privacy protections.

This trend is fueling the growth of open-source smart home platforms like Home Assistant, which offer greater flexibility and control over user data. Home Assistant allows users to integrate devices from a wide range of manufacturers, avoiding the limitations of proprietary ecosystems. However, open-source platforms typically require more technical expertise to set up and maintain, making them less accessible to the average user. The tension between convenience and control will likely continue to shape the smart home landscape for years to come.

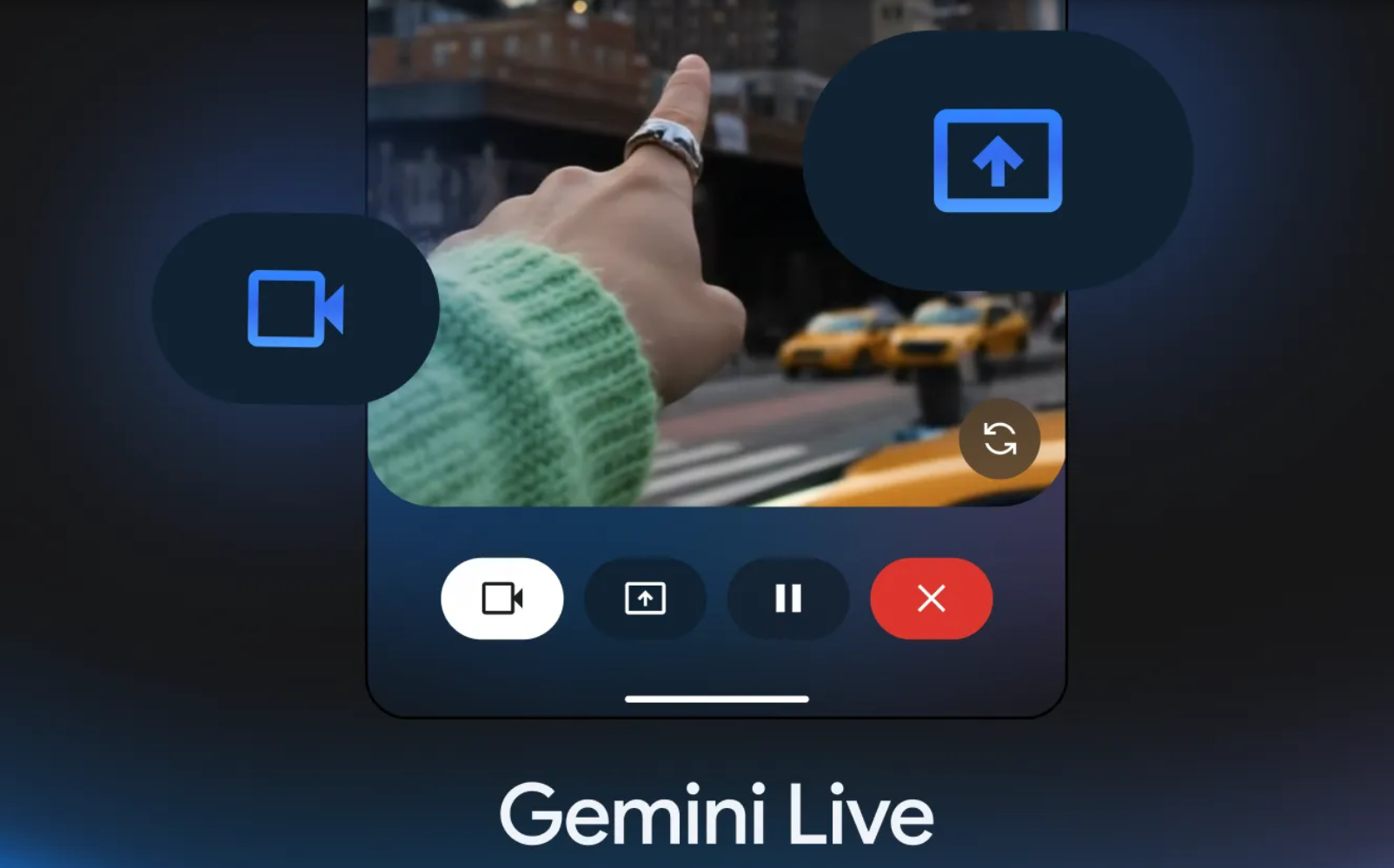

Gemini Live & The Future of Conversational AI

The enhanced Gemini Live feature, which provides more detailed and interactive news summaries, demonstrates Google’s vision for a truly conversational AI assistant. The ability to ask follow-up questions and delve deeper into specific topics transforms the news briefing from a passive experience into an active dialogue. This functionality relies on Google’s advanced natural language understanding capabilities and its access to a vast repository of information.

However, it also raises concerns about the potential for bias and misinformation. AI-powered news summaries are only as accurate as the data they are trained on, and biased data can lead to skewed or misleading results. Google will need to prioritize transparency and accountability in its AI algorithms to ensure that Gemini Live provides users with reliable and unbiased information.

Android 16 Integration & The UI/UX Evolution

The integration with Android 16, specifically the support for edge-to-edge displays and predictive back gestures, highlights Google’s commitment to a seamless user experience across its entire ecosystem. These UI/UX enhancements make the Google Home app more intuitive and visually appealing, further encouraging users to engage with Gemini for Home. The predictive back gesture, in particular, is a welcome addition, allowing users to preview their back navigation and avoid accidental exits.

What This Means for Enterprise IT

The improvements to Gemini for Home have implications beyond the consumer market. Enterprise IT departments are increasingly exploring the use of smart home technology to automate building management systems and improve employee productivity. A more reliable and intuitive AI assistant can streamline these processes, reducing the need for manual intervention and improving overall efficiency. However, security concerns remain a major obstacle to widespread adoption. Enterprise IT departments will need to carefully evaluate the security risks associated with smart home devices and implement appropriate mitigation measures.

The 30-Second Verdict: Google has finally delivered on the promise of a truly intelligent smart home assistant. Gemini for Home is now significantly more usable and intuitive, thanks to its improved natural language processing and device recognition capabilities. While concerns about ecosystem lock-in and data privacy remain, this update represents a major step forward for the future of ambient computing.

You can find more information about Gemini and its capabilities on the official Google Gemini website and explore the underlying technology behind large language models in “Attention Is All You Need” (the original Transformer paper).