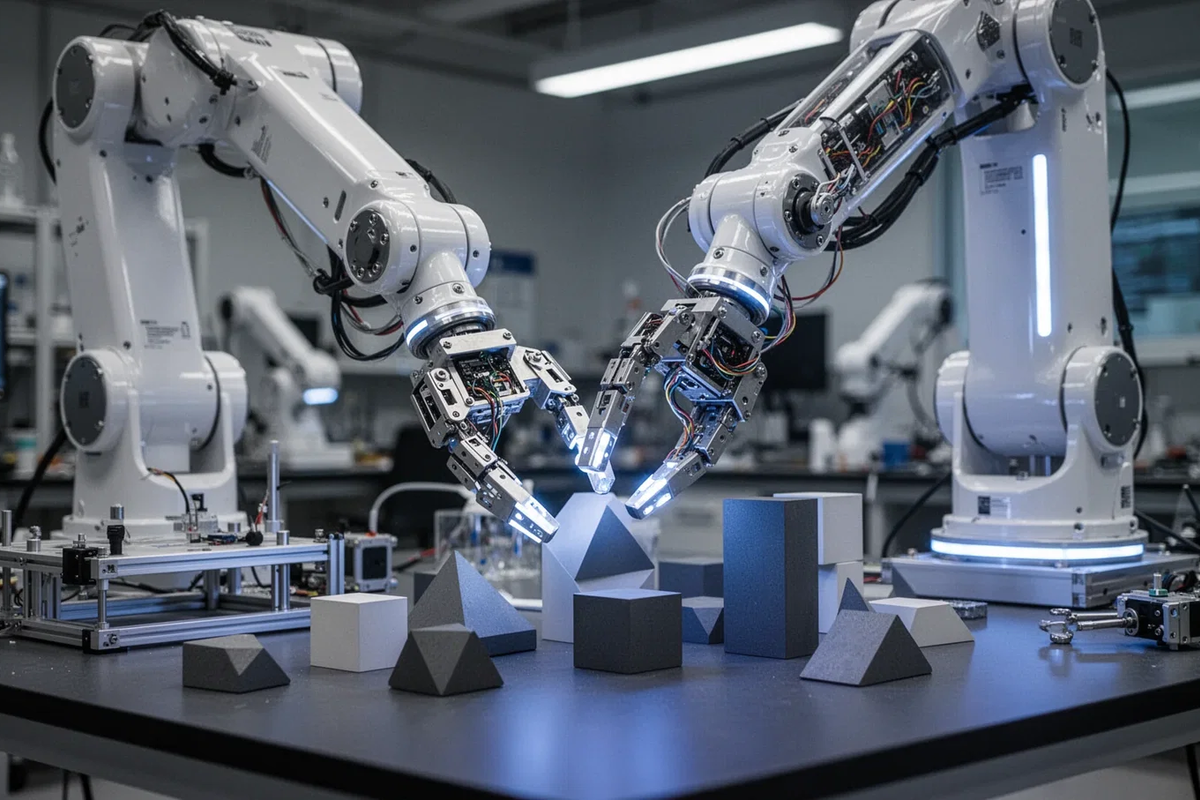

Generalist AI has unveiled GEN-1, an embodied foundation model that utilizes a physical robot head to bridge the gap between digital intelligence and sensory reality. By training on real-world physical interactions rather than static datasets, GEN-1 aims to create a “universal brain” for robotics, accelerating the path toward general-purpose automation.

For years, the AI industry has been obsessed with scaling parameters in a vacuum. We’ve seen LLMs achieve remarkable performance in coding and poetry, but the moment you put that intelligence into a chassis, it tends to fail. Why? Because there is a fundamental disconnect between token prediction and spatial reasoning. GEN-1 is an attempt to address Moravec’s Paradox: the observation that high-level reasoning requires comparatively little computation, while low-level sensorimotor skills require enormous computational resources.

The “robot head” isn’t a gimmick; it’s a data collection engine. By isolating the sensory inputs—vision, depth, and tactile feedback—into a focused embodied unit, Generalist AI is creating a high-fidelity feedback loop that allows the model to learn the physics of the world without the noise of full-body locomotion instability.

The Architecture of Embodiment: Beyond the Transformer

Most current robotic systems rely on a pipeline: Perception → Planning → Action. This approach is slow, brittle, and prone to “cascading errors.” If the perception layer misidentifies a coffee cup as a plastic bottle, the planning layer inherits the mistake, and the robot crushes the object. GEN-1 moves toward an end-to-end architecture. Instead of discrete steps, it uses a unified latent space where visual tokens and motor commands are processed together.
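The “cascading errors” problem can be made concrete with a little arithmetic. The sketch below assumes each stage fails independently and uses made-up per-stage accuracies; neither the numbers nor the independence assumption come from Generalist AI.

```python
from math import prod


def pipeline_success(stage_accuracies):
    """Probability that every stage of a sequential pipeline succeeds.

    Assumes stage failures are independent, which is a simplification.
    """
    return prod(stage_accuracies)


# Hypothetical accuracies for Perception -> Planning -> Action.
stages = {"perception": 0.95, "planning": 0.95, "action": 0.95}

overall = pipeline_success(stages.values())
print(f"end-to-end success rate: {overall:.3f}")
```

Three stages that are each right 95% of the time succeed together only about 86% of the time, and every stage added to the chain pushes that number lower.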

To achieve this, the model likely leverages a variation of a Vision-Language-Action (VLA) model. By mapping visual inputs directly to joint trajectories, GEN-1 reduces latency and increases the fluidity of movement. We are talking about a shift from “thinking then doing” to “intuitive reaction.”
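As a rough illustration of what “mapping visual inputs directly to joint trajectories” means, here is a toy policy step. GEN-1's real architecture has not been described in this article, so everything below (the `vla_step` name, the tiny vectors, the single linear layer) is a hypothetical stand-in for a much larger learned model.

```python
def matvec(weights, vector):
    """Multiply a matrix (list of rows) by a vector."""
    return [sum(w * x for w, x in zip(row, vector)) for row in weights]


def vla_step(visual_tokens, instruction_tokens, weights):
    """One step of a toy end-to-end policy.

    Visual and instruction features are fused into one shared vector and
    mapped straight to joint deltas, with no separate planning stage.
    """
    fused = list(visual_tokens) + list(instruction_tokens)
    return matvec(weights, fused)


# Hypothetical inputs: 3 visual features, 2 instruction features.
visual = [0.2, -0.1, 0.4]
instruction = [1.0, 0.0]

# Hypothetical learned weights: 2 joints x 5 fused features.
W = [
    [0.5, 0.0, 0.0, 0.1, 0.0],
    [0.0, 1.0, 0.0, 0.0, 0.2],
]

joint_deltas = vla_step(visual, instruction, W)
print(joint_deltas)
```

The point of the sketch is the shape of the computation: one forward pass from fused perception to motor output, rather than a hand-off between separately engineered modules.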

The hardware integration is where this gets gritty. To handle the massive throughput of real-time sensory data, these systems typically rely on high-bandwidth interconnects and NPUs (Neural Processing Units) capable of executing tensor operations at the edge. If GEN-1 is to scale, it will need to move away from cloud dependency and toward edge-computing architectures to avoid the catastrophic latency of a round trip to a data center during a precision task.
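The latency argument is easy to check with a back-of-the-envelope budget. The control rate, inference times, and network round-trip time below are illustrative assumptions, not measurements of GEN-1 or of any specific hardware.

```python
def control_period_ms(rate_hz):
    """Time available per control cycle, in milliseconds."""
    return 1000.0 / rate_hz


def fits_budget(inference_ms, network_rtt_ms, rate_hz):
    """True if inference plus network round trip fits in one control cycle."""
    return inference_ms + network_rtt_ms <= control_period_ms(rate_hz)


RATE_HZ = 50  # assumed control rate, giving a 20 ms cycle

# Assumed numbers: on-device inference is slower, but has no network hop.
edge_ok = fits_budget(inference_ms=12.0, network_rtt_ms=0.0, rate_hz=RATE_HZ)
cloud_ok = fits_budget(inference_ms=8.0, network_rtt_ms=60.0, rate_hz=RATE_HZ)

print(f"cycle budget: {control_period_ms(RATE_HZ):.1f} ms")
print(f"edge fits: {edge_ok}, cloud fits: {cloud_ok}")
```

Under these assumptions the cloud path misses the deadline even though its raw inference is faster, because the network hop alone is three times the cycle budget.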

“The industry has spent a decade perfecting the ‘brain’ in a jar. GEN-1 represents the pivot toward the ‘body’ as the primary teacher. We are moving from the era of Big Data to the era of Big Experience.”

The Data Hunger: Solving the Simulation-to-Real Gap

The biggest hurdle in robotics has always been the “Sim-to-Real” gap. You can train a robot in a physics simulator for a billion iterations, but the moment it hits a real-world surface with unexpected friction or lighting, the model collapses. GEN-1 attacks this by using the physical robot head to gather “ground truth” data.

This creates a virtuous cycle:

- Physical Interaction: The robot head interacts with an object, experiencing real-world physics.

- Data Labeling: This interaction is recorded as a high-fidelity trajectory.

- Model Refinement: The foundation model is updated using this real-world “gold” data.

- Generalization: The model applies these learned physics to entirely new objects it has never seen.

This is a direct challenge to the current dominance of reinforcement learning (RL) in simulation. By prioritizing physical embodiment, Generalist AI is betting that 1,000 hours of real-world experience are more valuable than 1,000,000 hours of simulated experience.
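The four-step cycle above can be sketched as a toy loop. Here the “model” is a single friction coefficient that starts at a wrong simulator value and is re-estimated from noisy real-world pushes. All constants and function names are invented for illustration, and a real foundation model would learn far more than one parameter.

```python
import random

TRUE_FRICTION = 0.6  # hidden property of the real surface (made up)
SIM_FRICTION = 0.3   # what an idealized simulator assumed (made up)
G = 9.81             # gravitational acceleration, m/s^2


def interact(rng, mass_kg):
    """Steps 1-2: push an object and record the noisy force needed to slide it."""
    normal = mass_kg * G
    force = TRUE_FRICTION * normal + rng.gauss(0.0, 0.05 * normal)
    return {"mass_kg": mass_kg, "force_n": force}


def refine(dataset, prior):
    """Step 3: re-estimate friction from every recorded trajectory."""
    if not dataset:
        return prior
    ratios = [d["force_n"] / (d["mass_kg"] * G) for d in dataset]
    return sum(ratios) / len(ratios)


def predict_force(mu, mass_kg):
    """Step 4: apply the learned coefficient to an object never pushed before."""
    return mu * mass_kg * G


rng = random.Random(0)  # fixed seed so the run is repeatable
dataset = []
mu = SIM_FRICTION
for _ in range(200):
    dataset.append(interact(rng, mass_kg=rng.uniform(0.1, 2.0)))
    mu = refine(dataset, prior=mu)

print(f"simulator guess: {SIM_FRICTION}, learned from real pushes: {mu:.3f}")
print(f"predicted force for an unseen 5 kg object: {predict_force(mu, 5.0):.1f} N")
```

The simulator's estimate never improves on its own; only the recorded real interactions pull the coefficient toward the true value, which is the bet the article attributes to Generalist AI.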

The 30-Second Verdict: Why This Matters for the Market

GEN-1 isn’t just about a robot head; it’s about the commoditization of robotic skill. If a foundation model can truly generalize “how to touch” or “how to see,” we move away from bespoke programming for every single industrial arm. We move toward a “plug-and-play” intelligence where you download a model and your robot suddenly knows how to sort laundry or assemble a circuit board without a single line of new code.

The Ecosystem War: Open Weights vs. Walled Gardens

The rollout of GEN-1 arrives amidst a fierce battle for the robotic OS. On one side, you have the proprietary behemoths building closed ecosystems. On the other, you have the open-source community, which is building on the approach popularized by RT-2 and on similar frameworks to democratize embodied AI.

If Generalist AI keeps GEN-1 behind a proprietary API, they risk becoming a niche player. But if they provide a developer SDK, they could effectively become the “Android of Robotics,” providing the base layer that thousands of third-party hardware manufacturers build upon. The real play here isn’t the robot head; it’s the weights of the model that understands the physical world.

But there is a dark side. Embodied AI introduces a massive new attack surface. A “jailbroken” LLM can hallucinate a poem; a “jailbroken” embodied model can physically destroy its environment. As we integrate these models into factories and homes, the need for “hard-coded” safety constraints—physical interlocks that override AI decisions—becomes non-negotiable.
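In software terms, a “hard-coded” constraint is a filter that sits between the policy and the motors and that the model cannot modify. The sketch below is a software analogue of that idea, with hypothetical limits and field names; a true physical interlock would live in hardware and would not depend on this code running correctly.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class JointLimits:
    """Fixed limits set by engineers, not by the model (values are hypothetical)."""
    max_velocity: float  # rad/s
    max_torque: float    # N*m


def clamp(value, limit):
    """Restrict value to the range [-limit, +limit]."""
    return max(-limit, min(limit, value))


def safety_filter(command, limits, estop_pressed):
    """Override the policy's command whenever it violates a hard limit."""
    if estop_pressed:
        # Emergency stop wins over anything the model requested.
        return {"velocity": 0.0, "torque": 0.0}
    return {
        "velocity": clamp(command["velocity"], limits.max_velocity),
        "torque": clamp(command["torque"], limits.max_torque),
    }


limits = JointLimits(max_velocity=1.5, max_torque=10.0)

# A command a compromised or confused policy might emit.
unsafe = {"velocity": 9.0, "torque": -80.0}

filtered = safety_filter(unsafe, limits, estop_pressed=False)
stopped = safety_filter(unsafe, limits, estop_pressed=True)
print(filtered, stopped)
```

The design choice that matters is placement: the filter runs after the model and takes no input from it beyond the command itself, so a jailbreak of the policy cannot widen the limits.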

| Feature | Traditional Robotics (Scripted) | LLM-Based Robotics (Prompted) | GEN-1 (Embodied Foundation) |
|---|---|---|---|
| Adaptability | Zero (requires reprogramming) | Moderate (via natural language) | High (via sensory generalization) |
| Latency | Ultra-Low (Deterministic) | High (Cloud Inference) | Low (Edge-Optimized VLA) |
| Learning Curve | Manual Engineering | Fine-tuning/Prompting | Continuous Physical Learning |
| Failure Mode | Predictable/Crash | Hallucination/Confusion | Physical Miscalculation |

The Bottom Line: The Death of the Specialist Robot

We are witnessing the end of the “specialist” era. For decades, we built robots that did one thing perfectly—welding a car door or vacuuming a floor. GEN-1 is a signal that the industry is pivoting toward General Purpose Embodiment.

The remaining hardware hurdles are the energy density of batteries and the torque limits of actuators, but the software bottleneck is finally cracking. When the “brain” can finally perceive the “body” as a seamless extension of its logic, the transition from digital AI to physical AI will happen faster than anyone in the Valley is currently pricing into their portfolios.

Watch the API documentation. If Generalist AI opens up the weights for the sensory-motor mapping, the explosion in robotic productivity won’t be a gradual slope—it will be a vertical cliff.