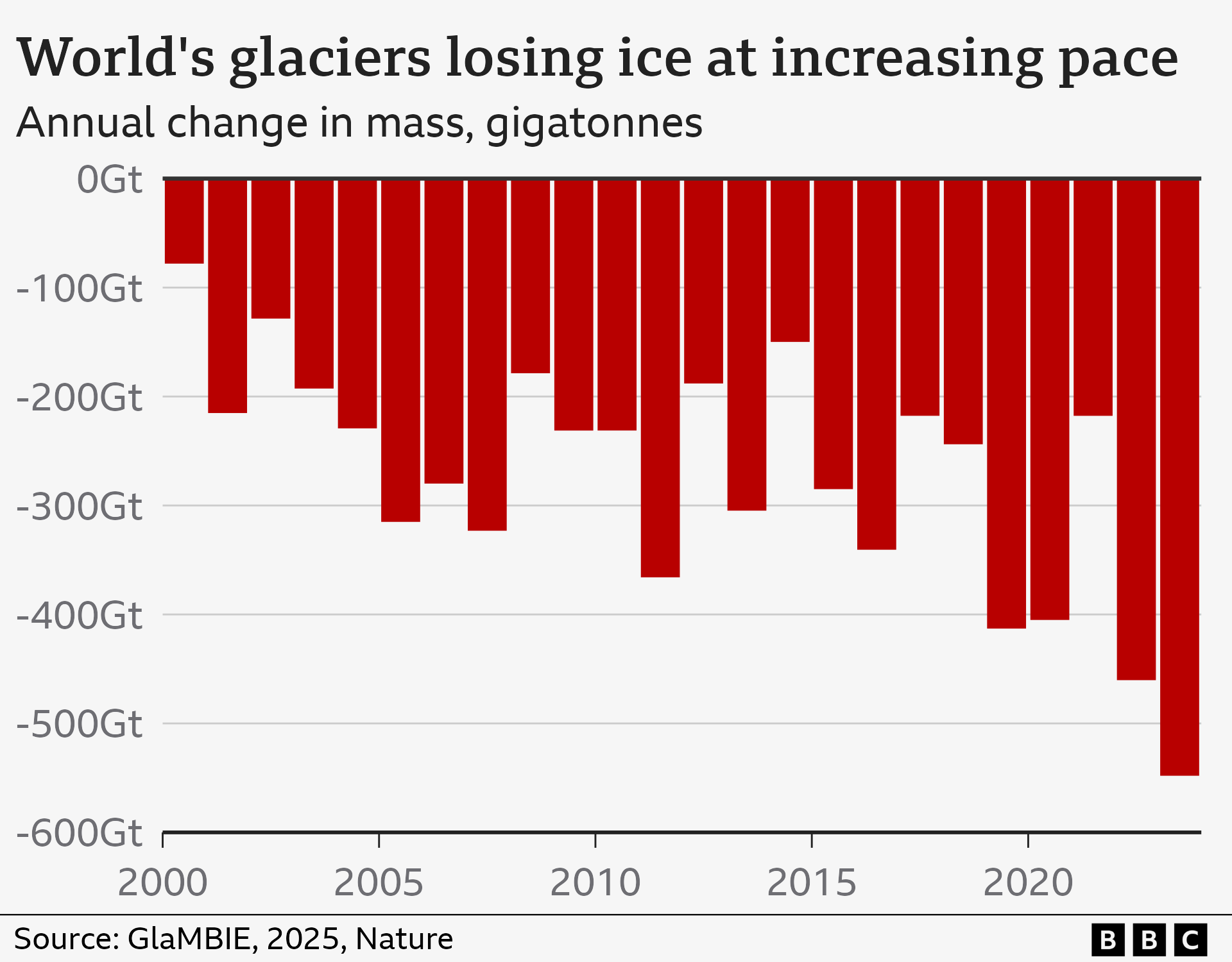

Global glaciers suffered record-breaking mass loss throughout 2025, driven by unprecedented thermal anomalies and accelerated melt cycles. This environmental collapse is now being tracked via high-resolution satellite telemetry and AI-driven predictive modeling, revealing a rate of decay that significantly outpaces previous IPCC simulations and legacy climate projections.

For those of us living in the silicon bubble, it is easy to view glacier melt as a distant, biological tragedy. It isn’t. It is a data problem. The record losses reported this year aren’t just numbers on a spreadsheet; they are the result of a massive failure in our planetary homeostasis, and the only way you can even comprehend the scale of the disaster is through the lens of extreme compute.

We are currently witnessing a pivot in how we monitor the cryosphere. We’ve moved past simple photographic evidence into the era of the “Digital Twin.” As we navigate April 2026, the focus has shifted from observing the melt to simulating it in real-time using neural networks that can process petabytes of SAR (Synthetic Aperture Radar) data in seconds.

The Geospatial Stack: From SAR to Neural Rendering

To understand how we identified the 2025 record losses, you have to look at the hardware. We aren’t just using cameras. The heavy lifting is done by the European Space Agency’s (ESA) Sentinel satellites and NASA’s GRACE-FO mission. The two do different jobs: Sentinel-1 carries the SAR instruments that image ice surfaces through cloud and polar night, while GRACE-FO relies on gravimetry, measuring minute changes in Earth’s gravity field to estimate how much ice mass has vanished.
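To make the gravimetry numbers tangible, here is a minimal sketch of the arithmetic that turns an ice-mass loss in gigatonnes into global sea-level rise. The function name and the sample input are hypothetical; the ocean area is a commonly used approximation, and the calculation ignores thermal expansion and regional effects.

```python
# Back-of-the-envelope conversion: ice mass loss -> global mean sea-level rise.
# Assumptions: meltwater density of 1000 kg/m^3 and an ocean surface area of
# roughly 3.618e14 m^2. Real assessments apply further corrections.

OCEAN_AREA_M2 = 3.618e14       # approximate global ocean surface area
WATER_DENSITY_KG_M3 = 1000.0   # density of fresh meltwater
KG_PER_GIGATONNE = 1e12


def sea_level_equivalent_mm(mass_loss_gt: float) -> float:
    """Spread the meltwater volume evenly over the ocean and return mm of rise."""
    volume_m3 = mass_loss_gt * KG_PER_GIGATONNE / WATER_DENSITY_KG_M3
    return volume_m3 / OCEAN_AREA_M2 * 1000.0


if __name__ == "__main__":
    hypothetical_loss_gt = 400.0  # illustrative input, not a reported figure
    print(f"{sea_level_equivalent_mm(hypothetical_loss_gt):.2f} mm")
```

Under these assumptions, roughly 362 gigatonnes of lost ice corresponds to one millimeter of global sea-level rise.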

The bottleneck has always been the processing latency. Raw radar data is noisy and computationally expensive to clean. Traditionally, this required massive CPU clusters and weeks of batch processing. Now, we are seeing a migration toward NPU-accelerated (Neural Processing Unit) pipelines. By deploying Convolutional Neural Networks (CNNs) directly on the edge—or at least in the immediate ground-station cloud—analysts can now segment ice-sheet boundaries with sub-meter precision in near real-time.
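A production pipeline would hand this job to a trained CNN. As a much simpler stand-in, the sketch below shows the two steps the paragraph describes: cleaning speckle noise, then drawing the ice boundary. It uses a 3x3 median filter and a fixed threshold rather than a neural network, and it assumes (purely for illustration) that ice returns brighter backscatter than open water. The grid values and the threshold are invented.

```python
from statistics import median


def median_filter_3x3(img):
    """Replace each pixel with the median of its neighborhood (clipped at edges)."""
    rows, cols = len(img), len(img[0])
    out = [row[:] for row in img]
    for r in range(rows):
        for c in range(cols):
            window = [
                img[rr][cc]
                for rr in range(max(0, r - 1), min(rows, r + 2))
                for cc in range(max(0, c - 1), min(cols, c + 2))
            ]
            out[r][c] = median(window)
    return out


def ice_mask(img, threshold):
    """Label pixels at or above the threshold as ice (1), the rest as water (0)."""
    return [[1 if value >= threshold else 0 for value in row] for row in img]


# Synthetic scene: bright ice on the left, dark water on the right.
scene = [[0.8, 0.8, 0.8, 0.1, 0.1, 0.1] for _ in range(5)]
scene[2][4] = 0.95  # speckle spike over water
scene[2][1] = 0.0   # dropout over ice

noisy_mask = ice_mask(scene, threshold=0.5)
clean_mask = ice_mask(median_filter_3x3(scene), threshold=0.5)
```

Thresholding the raw scene mislabels both corrupted pixels; filtering first recovers a clean boundary. A CNN earns its keep where a fixed threshold fails, for example on melt ponds, crevasses, and mixed ice-water pixels.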

It’s an engineering marvel. It’s also a warning.

The transition from legacy numerical models to AI-driven emulation is stark. Where old models relied on rigid differential equations to predict ice flow, new “World Models” use transformer-based architectures to predict patterns based on historical anomalies. They don’t just calculate the melt; they recognize the signature of the collapse.

The 30-Second Verdict: Legacy vs. AI Modeling

| Metric | Legacy Numerical Models | AI-Driven Digital Twins (2026) |
|---|---|---|
| Processing Latency | Weeks/Months (Batch) | Near Real-Time (Streaming) |
| Spatial Resolution | Kilometer-scale | Sub-meter/Centimeter-scale |
| Compute Architecture | CPU-heavy / HPC Clusters | GPU/NPU-accelerated / Distributed |
| Predictive Method | Deterministic Physics | Probabilistic Pattern Recognition |

The Compute Paradox: Solving Heat with Heat

There is a delicious, dark irony in using H100 and B200 GPU clusters to monitor melting ice. The energy required to train the LLMs and climate models capable of predicting these record losses contributes to the very thermal load we are tracking. We are essentially burning the house down to build a better thermometer.

The parameter counts required for global climate simulations are staggering. We are no longer talking about billions of parameters; we are entering the realm of trillion-parameter models that incorporate ocean currents, atmospheric pressure, and ice-sheet viscosity into a single unified latent space. This requires infrastructure on the scale of the largest hyperscalers.
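How much heat are we talking about? A rough sketch, using the common rule of thumb that training a dense transformer costs about 6 FLOPs per parameter per training token. Every input below is a hypothetical placeholder (sample count, per-GPU throughput, utilization, power draw), and the estimate ignores cooling, networking, and inference entirely.

```python
def training_flops(params: float, tokens: float) -> float:
    """Rule-of-thumb training cost for a dense transformer: ~6 * N * D FLOPs."""
    return 6.0 * params * tokens


def training_energy_mwh(flops: float, gpu_flops_per_s: float,
                        utilization: float, gpu_power_w: float) -> float:
    """Convert a FLOP budget into accelerator energy in megawatt-hours."""
    gpu_seconds = flops / (gpu_flops_per_s * utilization)
    joules = gpu_seconds * gpu_power_w
    return joules / 3.6e9  # 1 MWh = 3.6e9 J


# Hypothetical trillion-parameter run; none of these values are measurements.
flops = training_flops(params=1e12, tokens=1e13)
energy = training_energy_mwh(
    flops,
    gpu_flops_per_s=1e15,  # assumed peak throughput per accelerator
    utilization=0.4,       # assumed fraction of peak actually achieved
    gpu_power_w=700.0,     # assumed board power per accelerator
)

if __name__ == "__main__":
    print(f"{flops:.2e} FLOPs, roughly {energy:,.0f} MWh of accelerator energy")
```

Change any assumption and the answer moves by an order of magnitude, but the point stands: the thermometer has a power bill.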

“The challenge isn’t the data collection anymore; we have more telemetry than we know what to do with. The challenge is the inference cost. Running a high-fidelity simulation of the West Antarctic Ice Sheet in real-time requires a level of FLOPs that would make a top-tier AI lab blink,” says Marcus Thorne, Lead Data Architect at CryoCompute Labs.

This has sparked a new “Climate Tech War.” We are seeing a divide between open-source climate data, championed by communities on GitHub, and proprietary, closed-loop models owned by Big Tech. When the data predicting the rise of sea levels is locked behind a corporate API, the “Information Gap” becomes a matter of national security.

Algorithmic Fragility and the “Black Swan” Melt

The 2025 data reveals a terrifying trend: non-linear acceleration. In engineering terms, we’ve hit a feedback loop where the system is no longer responding linearly to input (temperature). This is where our AI models struggle. Most ML models are trained on historical data, but we are now entering a “regime shift”—a state where the past is no longer a reliable predictor of the future.
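The failure mode is easy to reproduce. In the sketch below, a straight line is fitted to the early years of a synthetic, accelerating loss series and then asked to forecast a later year. The series is invented for illustration (a quadratic, not real glacier data), and the least-squares fit stands in for any model that only knows the historical regime.

```python
def fit_line(xs, ys):
    """Ordinary least-squares fit of y = slope * x + intercept."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    slope = (
        sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
        / sum((x - mean_x) ** 2 for x in xs)
    )
    return slope, mean_y - slope * mean_x


def synthetic_loss(t):
    """Made-up accelerating mass loss: linear trend plus a quadratic term."""
    return 100.0 + 2.0 * t + 0.5 * t ** 2


# "Historical" training window: years 0 through 9.
years = list(range(10))
losses = [synthetic_loss(t) for t in years]
slope, intercept = fit_line(years, losses)

# Forecast a year well outside the training window.
target_year = 15
forecast = slope * target_year + intercept
actual = synthetic_loss(target_year)
shortfall = actual - forecast  # positive: the linear model under-predicts
```

The line fits the training window well and still falls short at year 15, because the curvature it never learned keeps compounding.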

This is the “Black Swan” of climate tech. If the model is trained on 20th-century melt rates, it will consistently under-predict the 2026 reality. To fix this, developers are implementing “Physics-Informed Neural Networks” (PINNs). Instead of relying solely on data, PINNs embed the laws of thermodynamics directly into the loss function of the neural network.

Essentially, we are teaching the AI that it cannot ignore the laws of physics, even if the data looks anomalous.
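Here is a minimal sketch of what “physics in the loss function” means. It covers the loss only, not a trained network: a real PINN typically differentiates the network output with autodiff and penalizes the residual of a governing equation at many sample points. This version swaps in a simpler algebraic constraint, an energy balance in which melt cannot exceed what the incoming energy can pay for. It assumes the ice is already at its melting point and that all net energy goes into melt; the function names and the weighting are hypothetical.

```python
ICE_DENSITY_KG_M3 = 917.0        # density of glacier ice
LATENT_HEAT_FUSION_J_KG = 3.34e5  # energy needed to melt 1 kg of ice
SECONDS_PER_DAY = 86400.0


def physical_melt_m_per_day(net_flux_w_m2: float) -> float:
    """Ice melt (m/day) that a net surface energy flux can sustain."""
    usable_flux = max(0.0, net_flux_w_m2)  # negative flux melts nothing
    melt_m_per_s = usable_flux / (ICE_DENSITY_KG_M3 * LATENT_HEAT_FUSION_J_KG)
    return melt_m_per_s * SECONDS_PER_DAY


def pinn_loss(predicted, observed, fluxes, physics_weight=1.0):
    """Data misfit plus a penalty for violating the energy balance."""
    n = len(predicted)
    data_term = sum((p - o) ** 2 for p, o in zip(predicted, observed)) / n
    physics_term = sum(
        (p - physical_melt_m_per_day(q)) ** 2 for p, q in zip(predicted, fluxes)
    ) / n
    return data_term + physics_weight * physics_term


fluxes = [100.0, 200.0]  # hypothetical net energy fluxes in W/m^2
consistent = [physical_melt_m_per_day(q) for q in fluxes]
anomalous = [2.0 * m for m in consistent]  # twice what the energy allows

loss_consistent = pinn_loss(consistent, consistent, fluxes)
loss_anomalous = pinn_loss(anomalous, anomalous, fluxes)
```

Both prediction sets match their “observations” perfectly, so a data-only loss would score them the same. The physics term is what separates them.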

For the developers and architects building these systems, the goal is end-to-end encryption of telemetry data to prevent state-actor manipulation of climate reports. As glaciers disappear, the land beneath them becomes a geopolitical prize. Whoever controls the most accurate map of the new coastline controls the future of maritime trade.
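Encryption keeps telemetry private; catching manipulation also takes message authentication. Full end-to-end encryption needs a dedicated cryptography library, so the sketch below covers only the tamper-detection half, using an HMAC from Python’s standard library. The field names and the shared key are hypothetical, and key distribution is left out entirely.

```python
import hashlib
import hmac
import json


def sign_reading(reading: dict, key: bytes) -> str:
    """Return a hex HMAC-SHA256 tag over a canonical JSON encoding of the reading."""
    payload = json.dumps(reading, sort_keys=True, separators=(",", ":")).encode("utf-8")
    return hmac.new(key, payload, hashlib.sha256).hexdigest()


def verify_reading(reading: dict, tag: str, key: bytes) -> bool:
    """Recompute the tag and compare in constant time."""
    return hmac.compare_digest(sign_reading(reading, key), tag)


# Hypothetical sensor reading and key, for illustration only.
shared_key = b"replace-with-a-real-secret"
reading = {"sensor_id": "glacier-07", "ice_thickness_m": 87.5, "day": 112}
tag = sign_reading(reading, shared_key)

tampered = dict(reading, ice_thickness_m=92.0)
original_ok = verify_reading(reading, tag, shared_key)
tampered_ok = verify_reading(tampered, tag, shared_key)
```

Anyone who alters a value in transit without the key cannot produce a matching tag, so the doctored reading fails verification at the receiving end.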

Why This Matters for the Tech Ecosystem

- Hardware Demand: The surge in climate modeling is driving a secondary market for specialized AI accelerators beyond LLMs.

- Open Data Sovereignty: There is a growing push for “Climate Open Source,” ensuring that the models used to predict disaster aren’t proprietary.

- Edge Integration: We are seeing a rise in ruggedized IoT sensors deployed in extreme environments, utilizing LoRaWAN for long-range, low-power data transmission from the heart of glaciers.
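On the edge-sensor point: low-power radio links like LoRaWAN leave room for only small payloads, so sensors typically send packed binary rather than JSON. A minimal sketch of such an encoding follows. The field layout, scaling factors, and function names are hypothetical and not part of any LoRaWAN specification.

```python
import struct

# Hypothetical 7-byte layout, little-endian, no padding:
#   uint16 sensor id | int16 temperature (centi-degrees C)
#   uint16 ice thickness (cm) | uint8 battery (%)
_PAYLOAD_FORMAT = "<HhHB"


def encode_reading(sensor_id: int, temp_c: float,
                   thickness_m: float, battery_pct: int) -> bytes:
    """Pack one reading into a compact fixed-width payload."""
    return struct.pack(
        _PAYLOAD_FORMAT,
        sensor_id,
        round(temp_c * 100),       # two decimal places of temperature
        round(thickness_m * 100),  # centimeter resolution, up to 655.35 m
        battery_pct,
    )


def decode_reading(payload: bytes) -> dict:
    """Reverse encode_reading on the receiving side."""
    sensor_id, temp_centi, thickness_cm, battery_pct = struct.unpack(
        _PAYLOAD_FORMAT, payload
    )
    return {
        "sensor_id": sensor_id,
        "temp_c": temp_centi / 100,
        "thickness_m": thickness_cm / 100,
        "battery_pct": battery_pct,
    }


payload = encode_reading(sensor_id=7, temp_c=-12.34, thickness_m=87.5, battery_pct=81)
decoded = decode_reading(payload)
```

Seven bytes per reading instead of several dozen for the equivalent JSON means less airtime per transmission, which is what keeps a battery alive through a polar winter.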

The Final Trace: A Systemic Reset

The record losses of 2025 are a hardware failure of the planet. We can build the most sophisticated IEEE-standard sensors and deploy the most efficient transformer models, but telemetry is not a solution; it is a diagnostic.

We have the code to see the end. We just don’t have the patch to stop it.

As we move further into 2026, the industry must decide if it will continue to optimize the observation of collapse or if it will pivot its compute power toward the mitigation of it. Until then, we are simply documenting the crash in high definition.