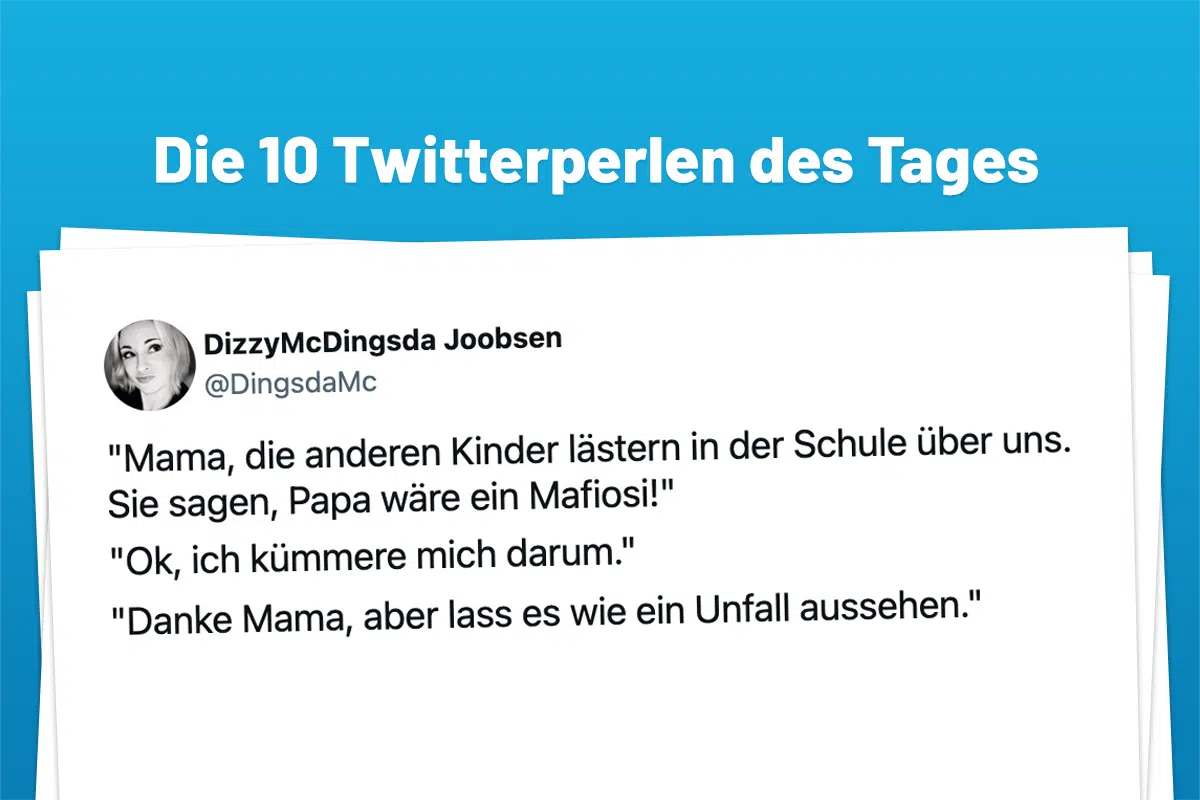

On April 3, 2026, the viral “Easter Bunny Accident” trend on X highlights the intersection of generative AI-driven social curation and algorithmic humor. This phenomenon demonstrates how automated content aggregators like Twitterperlen leverage real-time LLM sentiment analysis to monetize micro-trends within the evolving, decentralized social web landscape.

Let’s be clear: the “Easter Bunny” meme is a distraction. The real story isn’t the joke; it’s the pipeline. We are witnessing the maturation of the “Curation Layer”: a sophisticated middleware of AI agents that scan billions of data points to extract “pearls” of human irony and repackage them for mass consumption in milliseconds. This isn’t just a collection of funny tweets; it is a demonstration of high-velocity sentiment harvesting.

For the uninitiated, this process relies on a complex stack of Retrieval-Augmented Generation (RAG) and vector databases. To surface a specific trend like the “Osterhase” accidents, an aggregator doesn’t just search for keywords. It utilizes embeddings—mathematical representations of meaning—to identify clusters of irony, sarcasm, and cultural resonance. When a specific semantic cluster (in this case, the juxtaposition of a festive symbol with catastrophic failure) hits a critical mass, the system triggers a curation event.
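To make the "critical mass" idea concrete, here is a minimal sketch of how a curation event might be triggered from embedding similarity. It is not any aggregator's actual code: the three-dimensional vectors, the `FESTIVE_MISHAP` centroid, and the `min_similarity` and `critical_mass` values are all hypothetical stand-ins for what a real embedding model and vector database would supply.

```python
import math


def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def cluster_size(embeddings, centroid, min_similarity):
    """Count embeddings that lie close enough to the cluster centroid."""
    return sum(1 for vec in embeddings if cosine(vec, centroid) >= min_similarity)


def should_trigger_curation(embeddings, centroid, min_similarity=0.8, critical_mass=3):
    """Fire a curation event once enough posts land in the same semantic cluster."""
    return cluster_size(embeddings, centroid, min_similarity) >= critical_mass


# Toy 3-dimensional "embeddings"; real ones would have hundreds of dimensions.
FESTIVE_MISHAP = [0.9, 0.8, 0.1]  # hypothetical centroid: festive symbol + failure
post_embeddings = [
    [0.9, 0.7, 0.1],
    [0.8, 0.9, 0.2],
    [1.0, 0.8, 0.0],
    [0.0, 0.1, 0.9],  # an unrelated post
]

if should_trigger_curation(post_embeddings, FESTIVE_MISHAP):
    print("curation event triggered")
```

In practice the nearest-neighbor lookup would be delegated to the vector database rather than computed in a Python loop; the threshold logic is the part this sketch is meant to illustrate.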

The Architecture of Algorithmic Curation

The technical leap from 2023 to 2026 has been the shift from centralized scraping to Agentic Workflows. Modern curation bots now operate as autonomous agents with specific goals: find the highest engagement-to-word-count ratio. They leverage LangChain-style orchestration to chain together multiple LLM calls—one for filtering noise, one for sentiment scoring, and one for final editorial formatting.
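The three-stage chain described above can be sketched in plain Python. This is an illustration under stated assumptions, not a LangChain implementation: each stage is a simple function standing in for what would be a model call, and the `Post` type, the spam heuristic, and the output format are invented for the example. The only detail taken from the text is the ranking goal, the engagement-to-word-count ratio.

```python
from dataclasses import dataclass


@dataclass
class Post:
    text: str
    engagements: int


def filter_noise(posts):
    # Stage 1 (stand-in for a model call): drop blank posts and link spam.
    return [p for p in posts if p.text.strip() and "http" not in p.text]


def score(post):
    # Stage 2 (stand-in for a model call): engagement-to-word-count ratio.
    words = post.text.split()
    return post.engagements / len(words) if words else 0.0


def format_pearl(post):
    # Stage 3 (stand-in for a model call): final editorial formatting.
    return f'"{post.text}" ({post.engagements} engagements)'


def curate(posts, top_n=1):
    """Chain the stages: filter, rank by score, then format the top results."""
    candidates = filter_noise(posts)
    ranked = sorted(candidates, key=score, reverse=True)
    return [format_pearl(p) for p in ranked[:top_n]]


sample_posts = [
    Post("Bunny down", 900),
    Post("The Easter Bunny has had a small accident on the motorway", 1000),
    Post("Win eggs http://spam.example", 5000),
    Post("   ", 10),
]
print(curate(sample_posts))
```

Note how the ratio favors the two-word post over the longer one with more raw engagements, which is exactly the bias toward brevity that the stated goal implies.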

The latency involved here is staggering. We are talking about a pipeline that moves from a user’s “Post” button to a curated list in under 300 milliseconds. This is made possible by the widespread adoption of NPUs (Neural Processing Units) at the edge, allowing the initial filtering to happen closer to the data source rather than routing every single tweet through a massive, power-hungry GPU cluster in a centralized data center.

It’s an efficiency play.

By utilizing Small Language Models (SLMs) for the initial “triage” phase, these platforms avoid the massive token costs associated with frontier models like GPT-5 or Claude 4. They only escalate a “candidate pearl” to the larger, more nuanced model when the sentiment score crosses a specific threshold of “virality potential.” This tiered architecture minimizes API overhead while maximizing the precision of the output.
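A rough sketch of that tiered escalation follows. Both model tiers are stubbed out: `slm_virality_score` is a hypothetical cue-word heuristic standing in for a small model, `frontier_review` stands in for the expensive call, and the `threshold` value is arbitrary. The point is only the control flow, where the costly tier runs solely for candidates that clear the cheap one.

```python
CUE_WORDS = {"bunny", "accident", "oops"}  # hypothetical cue words, for illustration


def slm_virality_score(text):
    """Cheap triage stand-in: fraction of words that are cue words."""
    words = [w.strip(".,!?").lower() for w in text.split()]
    if not words:
        return 0.0
    return sum(w in CUE_WORDS for w in words) / len(words)


def frontier_review(text):
    """Expensive-tier stand-in: a real system would call a large model here."""
    return f"PEARL: {text}"


def tiered_curation(texts, threshold=0.3):
    """Escalate only candidates whose triage score reaches the threshold."""
    pearls, escalations = [], 0
    for text in texts:
        if slm_virality_score(text) >= threshold:
            escalations += 1  # each escalation is one costly model call
            pearls.append(frontier_review(text))
    return pearls, escalations


incoming = [
    "Bunny accident, oops!",
    "Quarterly earnings call at noon",
    "The bunny is fine",
]
pearls, escalations = tiered_curation(incoming)
print(pearls, f"({escalations} of {len(incoming)} escalated)")
```

Tracking `escalations` separately makes the cost saving visible: the ratio of escalated posts to total posts is the fraction of traffic that ever reaches the larger model.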

“The shift we’re seeing in 2026 is the move from search to synthesis. We are no longer looking for information; we are looking for the distilled essence of a cultural moment. The ‘Curation Layer’ is effectively the new search engine.”

Latency, LLMs, and the Velocity of Viral Sentiment

When we analyze the “Twitterperlen” model, we have to look at the underlying data integrity. The challenge with automating irony is that LLMs historically struggle with sarcasm, the “semantic gap” where the literal meaning is the opposite of the intended meaning. However, the 2026 iteration of these models utilizes multi-modal context windows. They aren’t just reading the text; they are analyzing the attached images, the user’s historical “irony coefficient,” and the real-time reaction of the network.

Consider the following comparison of curation methodologies:

| Metric | Legacy Curation (2020-2023) | Agentic Curation (2026) |
|---|---|---|
| Detection Method | Keyword/Hashtag Matching | Vector Embedding Clustering |
| Processing Time | Minutes to Hours | Sub-second (Real-time) |
| Contextual Awareness | Surface level (Text only) | Deep (Multi-modal & Behavioral) |
| Scaling | Linear (Human-dependent) | Exponential (Agent-driven) |

This transition has fundamentally altered the “Attention Economy.” In the past, a “pearl” took time to be discovered, polished, and shared. Now, the polishing is automated. The moment a joke is funny, it is already indexed, categorized, and served to an audience that the algorithm knows will appreciate that specific brand of humor.

The 30-Second Verdict for Developers

- The Stack: Vector DB (e.g., Pinecone/Milvus) → SLM Triage → LLM Synthesis → Automated Distribution.

- The Moat: The competitive advantage is no longer the AI model (which is commoditized), but the proprietary “Sentiment Weights” used to define what constitutes a “pearl.”

- The Risk: Over-optimization leads to “algorithmic blandness,” where the AI only selects jokes that fit a safe, predictable pattern of virality, killing true organic humor.

The Decentralized Social Graph: Why “Pearls” Still Matter

Despite the automation, the “Twitterperlen” phenomenon persists because of the inherent human desire for curation. In an era of infinite content, the filter is more valuable than the source. As X continues its evolution into an “Everything App,” the integration of payment rails and AI agents means that these curation services are moving toward a micro-payment model. Imagine a world where you pay 0.001 cents in crypto to receive a daily “distilled” feed of the internet’s best irony, curated by an agent that knows your humor better than your best friend does.

This brings us to the broader “chip wars.” The ability to run these curation agents at scale depends entirely on the availability of high-bandwidth memory (HBM3e) and the efficiency of the ARM-based architectures powering the next generation of cloud servers. Without the hardware leap in NPU integration, the “real-time” nature of this curation would collapse under the weight of its own latency.

We are seeing a convergence of IEEE-standardized edge computing and consumer-facing AI. The “Easter Bunny” is just the mascot for a much larger shift in how information is filtered.

The Ethics of Automated Irony

There is a darker side to this. When an AI decides what is “funny” or “pearl-worthy,” it effectively creates a feedback loop that shapes human expression. Users begin to write “for the algorithm,” crafting their irony to be more easily detectable by the vector clusters. We are essentially training ourselves to speak in a way that an LLM can categorize as “high-value content.”

This is the ultimate platform lock-in. It’s not about the software; it’s about the cognitive alignment between the user and the curator. If you want your “pearl” to be found, you must adhere to the latent space of the model.

For those tracking the evolution of the web, the “Twitterperlen” of April 3, 2026, is a marker. It marks the point where the curation of human culture became a fully automated, low-latency industrial process. The bunny may be having accidents, but the machinery behind the scenes is running with terrifying precision.

To understand where this goes next, keep an eye on the Ars Technica reports on decentralized identity (DID). Once the curator knows not just what you like, but who you are across multiple platforms, the “pearls” will stop being general and start being surgically precise.