Google’s Gemini 1.5 Pro Now Available with 1 Million Token Context Window, But at What Cost?

Google has officially rolled out Gemini 1.5 Pro, its latest large language model (LLM), to a wider audience via AI Studio and Vertex AI. The headline feature? A staggering 1 million token context window, a leap beyond the 128k context of OpenAI’s GPT-4 Turbo. This expansion promises to revolutionize how developers interact with LLMs, enabling analysis of entire books, lengthy codebases, and hours of audio/video transcripts in a single prompt. However, the practical implications, cost structure, and architectural trade-offs deserve a far deeper examination than the initial marketing suggests.

The sheer scale of a 1 million token context window is…significant. For context, many earlier LLMs shipped with context windows in the 4k-8k token range. Increasing this to 1 million isn’t simply a matter of adding more memory; it fundamentally alters the computational demands of attention mechanisms, the core of how these models process information, because standard self-attention compares every token with every other token and its cost grows quadratically with input length. Google claims to have achieved this in part through a Mixture-of-Experts (MoE) architecture, which dynamically activates only a subset of the model’s parameters for each input. This is a clever workaround, but it introduces its own complexities.
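To make the "subset of parameters" idea concrete, here is a minimal sketch of generic top-k expert routing in plain Python. It is not Google's implementation, whose details are not public; the toy experts, the gate scores, and the function names are all illustrative assumptions.

```python
import math

def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def route_top_k(gate_scores, k=2):
    """Pick the k highest-scoring experts and renormalize their weights.

    gate_scores: one raw gating score per expert (hypothetical values here;
    in a real model they come from a learned gating layer).
    Returns a list of (expert_index, weight) pairs whose weights sum to 1.
    """
    probs = softmax(gate_scores)
    top = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)[:k]
    mass = sum(probs[i] for i in top)
    return [(i, probs[i] / mass) for i in top]

def moe_layer(x, experts, gate_scores, k=2):
    """Run only the selected experts and mix their outputs by gate weight."""
    return sum(w * experts[i](x) for i, w in route_top_k(gate_scores, k))

# Toy experts: each scalar function stands in for a feed-forward block.
experts = [lambda x, s=s: s * x for s in (1.0, 2.0, 3.0, 4.0)]
gate_scores = [0.1, 2.0, 0.3, 1.5]  # illustrative scores for one token

print(route_top_k(gate_scores))  # experts 1 and 3 are selected
print(moe_layer(10.0, experts, gate_scores))
```

Only two of the four toy experts run for this input, which is where the compute saving comes from. It is also why output can vary with routing: a different set of gate scores sends the same input through different experts.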

The MoE Trade-Off: Speed vs. Accuracy

MoE architectures, while efficient in terms of compute, can sometimes exhibit inconsistencies in output quality depending on which experts are activated. Early reports from developers experimenting with the 1 million token context window suggest that while the model *can* process the entire context, maintaining coherence and accuracy across that entire span remains a challenge. The longer the input, the greater the potential for “context drift,” where the model loses track of earlier information. This isn’t a fatal flaw, but it’s a critical consideration for applications requiring precise recall across vast datasets.
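One common way to probe for this kind of drift is to plant a known fact at different depths of a long prompt and check whether the model can retrieve it. The sketch below only builds the probes and scores an answer; the filler text, the planted sentence, and the helper names are hypothetical, and the actual model call is deliberately left out.

```python
def build_recall_probe(filler_sentences, needle, depth):
    """Insert `needle` at a relative depth (0.0 = start, 1.0 = end) of the filler."""
    if not 0.0 <= depth <= 1.0:
        raise ValueError("depth must be between 0.0 and 1.0")
    position = round(depth * len(filler_sentences))
    sentences = filler_sentences[:position] + [needle] + filler_sentences[position:]
    return " ".join(sentences)

def recalled(model_answer, expected):
    """Crude check: does the answer contain the expected string?"""
    return expected.lower() in model_answer.lower()

# Hypothetical filler and planted fact; a real test would use realistic documents.
filler = [f"Filler sentence number {i}." for i in range(1000)]
needle = "The access code for the archive is 7319."
probes = {d: build_recall_probe(filler, needle, d) for d in (0.0, 0.25, 0.5, 0.75, 1.0)}

# Each probe would be sent to the model followed by a question such as
# "What is the access code for the archive?", and the reply scored with
# recalled(reply, "7319"). The model call itself is omitted here.
```

Plotting recall against depth and total prompt length gives a rough picture of where, if anywhere, accuracy starts to fall off for a given workload.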

The cost of utilizing this expanded context window also adds up. Google’s pricing structure, as of late March 2026, reflects this: input tokens are priced at $0.00075 per 1K tokens, while output tokens remain at $0.0015 per 1K tokens. At that rate, processing a full 1 million token input, even without generating a lengthy response, costs 1,000 × $0.00075 = $0.75 per request. For high-volume applications that send full-context prompts repeatedly, this can quickly become prohibitive. Google’s official pricing page details the tiered structure, but doesn’t fully account for the increased latency associated with larger contexts.
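The arithmetic is easy to script when budgeting. The sketch below simply applies the per-1K rates quoted in this article; the function name and the daily request volume are illustrative assumptions, and current rates should be checked against Google's pricing page before relying on the numbers.

```python
from decimal import Decimal

# Per-1K-token rates as quoted in the article (USD); treat them as assumptions.
INPUT_RATE_PER_1K = Decimal("0.00075")
OUTPUT_RATE_PER_1K = Decimal("0.0015")

def request_cost(input_tokens, output_tokens=0):
    """Return the USD cost of one request at the quoted per-1K rates."""
    input_cost = Decimal(input_tokens) / 1000 * INPUT_RATE_PER_1K
    output_cost = Decimal(output_tokens) / 1000 * OUTPUT_RATE_PER_1K
    return input_cost + output_cost

full_context = request_cost(1_000_000)
print(f"One full-context request: ${full_context}")
# Hypothetical workload: 10,000 full-context requests per day.
print(f"10,000 such requests per day: ${full_context * 10_000}")
```

`Decimal` is used instead of floats so that small per-token rates do not pick up rounding noise when multiplied across many requests.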

Beyond the Token Limit: Architectural Deep Dive

Gemini 1.5 Pro isn’t just about the token count. It represents a significant evolution in Google’s LLM strategy, moving away from monolithic models towards a more modular, adaptable approach. The underlying architecture leverages the Tensor Processing Units (TPUs) developed in-house by Google, specifically the v5e variant. These TPUs are optimized for the sparse matrix operations inherent in MoE models, providing a substantial performance advantage over traditional GPUs. However, this also creates a degree of platform lock-in. Running Gemini 1.5 Pro efficiently requires access to Google Cloud infrastructure.

The model itself is reportedly trained on a massive dataset encompassing text, code, images, and audio. Google has been notably tight-lipped about the specifics of this dataset, raising concerns about potential biases and ethical implications. The lack of transparency is a recurring theme in the LLM space, and Gemini 1.5 Pro is no exception. Research on Mixture-of-Experts models highlights the challenges of ensuring fairness and preventing the amplification of harmful stereotypes in these systems.

What This Means for Enterprise IT

For enterprises, Gemini 1.5 Pro presents both opportunities and challenges. The ability to analyze large documents, such as legal contracts or financial reports, in a single pass is a game-changer. Similarly, developers can now feed entire code repositories into the model for automated code review and bug detection. However, the cost and potential accuracy issues must be carefully considered. A phased rollout, starting with smaller-scale pilot projects, is highly recommended.
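For a pilot project, a useful first step is checking whether a repository or document set even fits in the window. The sketch below uses a rough four-characters-per-token heuristic, which is an assumption and not the model's real tokenizer; the file extensions, the output reserve, and the function names are also illustrative.

```python
import os

CONTEXT_WINDOW_TOKENS = 1_000_000  # figure from the article
CHARS_PER_TOKEN = 4                # rough heuristic (assumption); real tokenizers vary

def estimate_tokens(text):
    """Approximate a token count from the character count."""
    return len(text) // CHARS_PER_TOKEN + 1

def estimate_repo_tokens(root, extensions=(".py", ".md", ".txt")):
    """Sum estimated tokens over files under `root` with the given extensions."""
    total = 0
    for dirpath, _dirnames, filenames in os.walk(root):
        for name in filenames:
            if name.endswith(extensions):
                path = os.path.join(dirpath, name)
                with open(path, encoding="utf-8", errors="ignore") as handle:
                    total += estimate_tokens(handle.read())
    return total

def fits_in_context(token_count, reserve_for_output=8_000):
    """True if the input leaves `reserve_for_output` tokens of headroom."""
    return token_count + reserve_for_output <= CONTEXT_WINDOW_TOKENS

# Example (path is a placeholder):
#   tokens = estimate_repo_tokens("path/to/repo")
#   print(tokens, fits_in_context(tokens))
```

An estimate like this is only a screening step. For billing or hard limits, the provider's own token-counting facility is the number to trust.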

The integration with Vertex AI also allows for fine-tuning of the model on custom datasets, which can improve accuracy and reduce bias. However, fine-tuning requires significant expertise and computational resources. Google’s Vertex AI documentation provides a comprehensive overview of the platform’s capabilities, but the learning curve is steep.

The Ecosystem Impact: Open Source vs. Closed Gardens

Google’s move further solidifies the trend towards closed, proprietary LLMs. While open-source alternatives like Llama 3 are rapidly improving, they currently lack the scale and performance of Gemini 1.5 Pro. This creates a competitive disadvantage for open-source communities and raises concerns about vendor lock-in. The long-term implications for innovation are significant.

“The race to larger context windows is fascinating, but it’s not the only metric that matters. We’re seeing a bifurcation in the LLM landscape: highly capable, but closed models like Gemini, and increasingly powerful, but more accessible open-source options. The choice will depend on your specific needs and risk tolerance.”

– Dr. Anya Sharma, CTO, SecureAI Solutions

The API capabilities of Gemini 1.5 Pro are robust, offering a range of features including text generation, translation, and code completion. However, the API is subject to Google’s terms of service and usage policies, which could change at any time. This is a risk that developers must be aware of.

The 30-Second Verdict

Gemini 1.5 Pro’s 1 million token context window is a technical marvel, but it’s not a silver bullet. The cost, potential accuracy issues, and platform lock-in are significant drawbacks. It’s a powerful tool for specific use cases, but it’s not a replacement for careful planning and thoughtful implementation.

The current landscape is a complex interplay of hardware advancements (TPUs), architectural innovations (MoE), and strategic platform decisions. Google is clearly betting big on its closed ecosystem, and Gemini 1.5 Pro is a key component of that strategy. The next few months will be crucial in determining whether this bet pays off. The open-source community, meanwhile, continues to push the boundaries of what’s possible, offering a compelling alternative for those who prioritize flexibility and control.

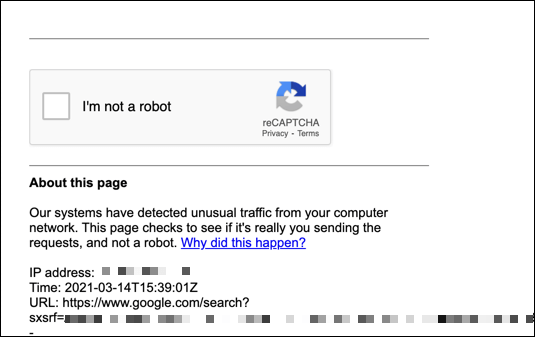

The incident reported on March 27th, 2026, where access to the YouTube video demonstrating Gemini 1.5 Pro was temporarily blocked due to suspected automated traffic, underscores the ongoing battle against malicious actors attempting to exploit these powerful models. Distributed Denial of Service (DDoS) attacks are becoming increasingly sophisticated, and LLMs are both a potential target for attackers and a potential tool for defenders.

Ultimately, the success of Gemini 1.5 Pro will depend on its ability to deliver tangible value to developers and enterprises, while addressing the ethical and security concerns that inevitably accompany such powerful technology.