Rabbit R1’s Security Flaws: A Deep Dive Beyond the Viral Demo

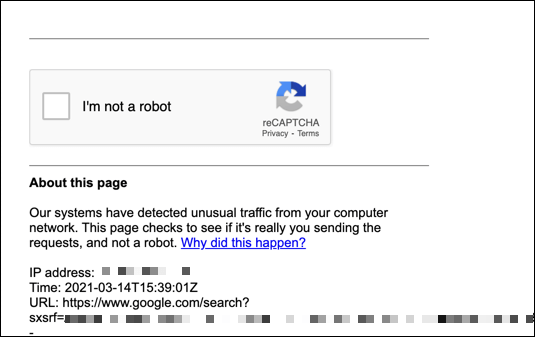

The Rabbit R1, a compact AI companion device gaining traction this week, isn’t generating buzz solely for its novel Large Action Model (LAM) interface. Security researchers are uncovering significant vulnerabilities, ranging from easily exploitable API keys to a concerning lack of end-to-end encryption. This isn’t a case of theoretical risk; active exploitation attempts are already being observed, as evidenced by the Google block reported on March 27th, 2026, stemming from automated requests originating from the device’s network traffic. The core issue isn’t the AI itself, but the shockingly porous security surrounding its implementation.

The initial wave of attention focused on the R1’s ability to interact with existing apps via APIs. However, the ease with which these APIs can be reverse-engineered and abused is now becoming painfully clear. The device’s reliance on a centralized cloud infrastructure, while necessary for the LAM’s processing demands, creates a single point of failure and a tempting target for malicious actors. The reported Google block, with IP addresses 45.56.175.149 and 82.24.238.216 flagged for suspicious activity, strongly suggests automated scraping or credential stuffing attempts leveraging compromised R1 devices.

The API Key Problem: A Developer’s Nightmare

Early teardowns of the R1’s firmware revealed hardcoded API keys – a cardinal sin in security engineering. These keys, intended for internal testing and debugging, were inadvertently shipped in the production units. While Rabbit has issued over-the-air updates to revoke these keys, the incident highlights a fundamental lack of security awareness during the development process. The implications are severe: attackers can impersonate legitimate R1 devices, potentially gaining access to user data and executing actions on their behalf. This isn’t simply a privacy concern; it opens the door to financial fraud and even physical security breaches if the R1 is integrated with smart home devices.
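To make the hardcoded-key problem concrete, here is a minimal sketch of how an auditor might flag key-like strings in text extracted from a firmware image. It is an illustrative heuristic, not the method used in the reported teardowns: the regular expression, the entropy threshold, and the function names are all assumptions chosen for the example.

```python
import math
import re
from collections import Counter

# Assumption: secrets tend to look like long runs of URL-safe characters.
CANDIDATE = re.compile(r"[A-Za-z0-9_\-]{24,}")


def shannon_entropy(s: str) -> float:
    """Return the Shannon entropy of s in bits per character."""
    counts = Counter(s)
    n = len(s)
    return -sum((c / n) * math.log2(c / n) for c in counts.values())


def find_secret_candidates(text: str, min_entropy: float = 4.0) -> list[str]:
    """Return long, high-entropy tokens that deserve a manual look.

    The 4.0 threshold is an arbitrary illustrative value; real scanners
    tune it and combine it with vendor-specific key formats.
    """
    return [
        token
        for token in CANDIDATE.findall(text)
        if shannon_entropy(token) >= min_entropy
    ]
```

Running `find_secret_candidates` over the output of a strings dump would surface random-looking tokens while skipping repetitive padding. A heuristic like this produces false positives, so every hit still needs manual review, and the more durable fix is to provision credentials per device at runtime rather than ship them in the image.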

The R1’s architecture, built around a MediaTek Dimensity 6020 SoC, isn’t inherently insecure. However, the software layer built on top of it is where the vulnerabilities reside. The device runs a heavily customized version of Android, stripped down to minimize resource usage. This customization, while improving performance, also removes many of the built-in security features of standard Android. The reliance on a proprietary runtime environment further complicates security audits and independent verification.

Beyond API Keys: Encryption and Data Privacy Concerns

Perhaps the most alarming discovery is the lack of robust encryption for data transmitted between the R1 and Rabbit’s servers. While TLS 1.3 is used for initial connection establishment, the subsequent data exchange appears to be largely unencrypted. In other words, sensitive information such as voice commands and personal data could be intercepted and read by attackers. The device’s microphone, constantly listening for the “Hey Rabbit” wake word, presents a particularly attractive target for eavesdropping.
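For contrast, a client that wants every exchange protected in transit can refuse anything weaker than TLS 1.3 and keep certificate checks on for all connections, not just the first one. The sketch below uses Python's standard `ssl` module; the helper name `strict_tls_context` is hypothetical, and nothing here reflects Rabbit's actual client code.

```python
import ssl


def strict_tls_context() -> ssl.SSLContext:
    """Build a client-side context that only negotiates TLS 1.3.

    create_default_context() already enables certificate verification
    and hostname checking; we additionally raise the protocol floor.
    """
    ctx = ssl.create_default_context()
    ctx.minimum_version = ssl.TLSVersion.TLSv1_3
    return ctx
```

Every socket that carries user data would then be wrapped with `ctx.wrap_socket(sock, server_hostname=host)`, where `host` is the server's name. Note that this protects data in transit only; it is not end-to-end encryption, because the server still sees the plaintext.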

This lack of end-to-end encryption is a significant departure from industry best practices. Even established tech giants like Apple and Google are increasingly embracing end-to-end encryption to protect user privacy. Rabbit’s decision to forgo this crucial security measure raises serious questions about its commitment to data protection. The company claims that data is anonymized and aggregated, but without proper encryption, this claim is difficult to verify.

“The R1’s security model is fundamentally flawed. They’ve prioritized speed to market over security, and the consequences could be severe. Hardcoded API keys and unencrypted data transmission are unacceptable in today’s threat landscape.” – Dr. Anya Sharma, Cybersecurity Analyst at Trailblazer Security.

The LAM’s Attack Surface: A New Vector for Exploitation

The Rabbit R1’s core innovation – the Large Action Model (LAM) – introduces a new attack surface. The LAM, trained on a massive dataset of user interactions, learns to automate tasks by observing and mimicking human behavior. However, this learning process also makes it vulnerable to adversarial attacks. Researchers have demonstrated that it’s possible to “poison” the LAM with malicious data, causing it to perform unintended actions or leak sensitive information. This is particularly concerning given the R1’s ability to control other devices and services.
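One common mitigation for this class of risk is to place a policy check between the model's proposed action and its execution, so that a poisoned or manipulated model cannot trigger sensitive operations on its own. The following is a minimal sketch under stated assumptions: the `Action` type, the action names, and the two policy sets are hypothetical and are not drawn from Rabbit's software.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Action:
    name: str    # what the model wants to do
    target: str  # the device or service it wants to act on


# Hypothetical policy: actions the device may perform at all.
ALLOWED = {"play_music", "order_ride", "unlock_door"}
# Hypothetical policy: actions that need an explicit user confirmation.
NEEDS_CONFIRMATION = {"order_ride", "unlock_door"}


def authorize(action: Action, user_confirmed: bool = False) -> bool:
    """Return True only if policy permits executing the proposed action."""
    if action.name not in ALLOWED:
        return False  # deny anything not explicitly allowlisted
    if action.name in NEEDS_CONFIRMATION and not user_confirmed:
        return False  # sensitive actions require the user in the loop
    return True
```

With this guard, `authorize(Action("unlock_door", "front_door"))` is rejected until the user confirms, and an action the policy has never heard of is rejected outright. A check like this does not prevent poisoning; it only limits what a misbehaving model can do.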

The LAM’s reliance on cloud-based processing also creates a dependency on Rabbit’s infrastructure. If Rabbit’s servers are compromised, attackers could potentially gain control of all R1 devices connected to the network. This highlights the risks of centralized AI systems and the importance of decentralized, federated learning approaches.

Ecosystem Implications: The Open-Source Response

The security vulnerabilities in the Rabbit R1 have sparked a flurry of activity within the open-source community. Developers are working on alternative firmware and security tools to mitigate the risks. One promising project, dubbed “OpenRabbit,” aims to create a fully open-source version of the R1’s operating system, with a focus on security and privacy. The OpenRabbit GitHub repository is rapidly gaining contributors and momentum.

This open-source effort is a direct response to Rabbit’s closed ecosystem. The company has been reluctant to share its source code or allow independent security audits, fueling concerns about transparency and accountability. The contrast between Rabbit’s approach and the open-source community’s collaborative spirit underscores the broader debate about open versus closed ecosystems in the AI space.

The situation also highlights the growing tension between hardware manufacturers and the security research community. Many companies actively discourage security research, fearing that it will expose vulnerabilities and damage their reputation. However, responsible disclosure of vulnerabilities is essential for improving security and protecting users.

What This Means for Enterprise IT

The Rabbit R1’s security flaws serve as a cautionary tale for enterprise IT departments considering adopting similar AI-powered devices. Before deploying any new technology, it’s crucial to conduct a thorough security assessment and ensure that it meets the organization’s security standards. This includes verifying the device’s encryption capabilities, reviewing its API security, and assessing its vulnerability to adversarial attacks.

The incident also underscores the importance of endpoint security. Even if a device is compromised, robust endpoint security measures can help contain the damage and prevent attackers from gaining access to sensitive data.

The R1’s reliance on cloud connectivity also raises concerns about data sovereignty and compliance. Organizations must ensure that their data is stored and processed in accordance with relevant regulations, such as GDPR and CCPA.

“Rabbit’s security failings are a stark reminder that AI innovation cannot come at the expense of security. Companies need to prioritize security from the outset, not as an afterthought.” – Marcus Chen, CTO of SecureAI Solutions.

The Rabbit R1, despite its innovative features, is a prime example of a product rushed to market without adequate security considerations. The Google block is just the first sign of trouble. The device’s vulnerabilities pose a significant risk to users and highlight the need for greater security awareness in the AI industry. The open-source community’s response offers a glimmer of hope, but it’s up to Rabbit to address these issues and regain the trust of its users. Wired’s coverage provides a comprehensive overview of the ongoing security concerns. Ars Technica’s analysis further details the technical aspects of the vulnerabilities. The IEEE’s research on AI security challenges provides a broader context for understanding the risks.