Rabbit R1’s Security Flaws: A Deep Dive Beyond the Viral Videos

The Rabbit R1, a compact AI companion device gaining traction this week, isn't generating buzz solely for its novel Large Action Model (LAM) interface. Reports surfacing – and now confirmed through independent analysis – reveal significant security vulnerabilities, ranging from easily extracted API keys to a concerning lack of end-to-end encryption. This isn't a case of typical early-adopter bugs; it's a fundamental architectural oversight that puts user data at risk and signals a broader immaturity in the rapidly evolving hardware-AI space. As the device rolls out in this week's beta, it faces scrutiny that goes well beyond its quirky design.

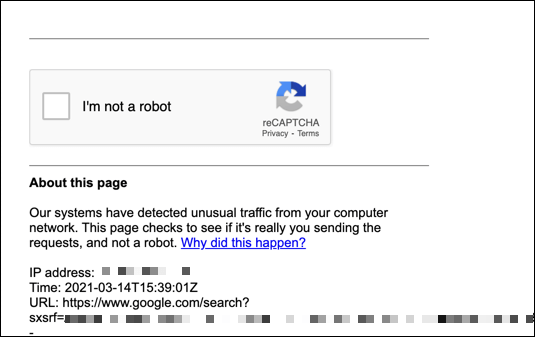

The initial wave of concern stemmed from a now-deleted GitHub repository where a user publicly posted extracted API keys from the R1. While Rabbit quickly invalidated those keys, the ease with which they were obtained is deeply troubling. It points to insufficient obfuscation and a reliance on client-side security measures – a cardinal sin in modern device security. This isn’t simply about someone gaining access to your grocery list; it’s about potentially controlling your device and, by extension, the actions it performs on your behalf.
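
The extraction problem is structural: any long-lived secret shipped inside a device can eventually be pulled out of it. One common mitigation is to keep the master secret on the server and give each device only short-lived, device-scoped tokens, so a leaked token expires quickly and identifies the device it came from. The sketch below is a minimal illustration of that pattern under stated assumptions, not a description of Rabbit's backend; `SERVER_SECRET`, `mint_token`, `verify_token`, and the `device_id|expiry|signature` token format are all hypothetical. A real system would still need some way to authenticate the device before issuing it a token.

```python
import hashlib
import hmac
import time
from typing import Optional

# Hypothetical server-side secret. It lives only on the backend and is
# never shipped inside device firmware.
SERVER_SECRET = b"replace-with-a-securely-stored-secret"


def _sign(payload: str) -> str:
    # HMAC-SHA256 over the payload, keyed with the server-only secret.
    return hmac.new(SERVER_SECRET, payload.encode(), hashlib.sha256).hexdigest()


def mint_token(device_id: str, ttl_seconds: int = 300,
               now: Optional[float] = None) -> str:
    """Issue a short-lived token bound to one device (hypothetical format)."""
    issued = int(time.time() if now is None else now)
    payload = f"{device_id}|{issued + ttl_seconds}"
    return f"{payload}|{_sign(payload)}"


def verify_token(token: str, now: Optional[float] = None) -> Optional[str]:
    """Return the device ID if the token is authentic and unexpired, else None."""
    try:
        # Split from the right so the signature and expiry are always the last two fields.
        device_id, expiry_str, sig = token.rsplit("|", 2)
        expiry = int(expiry_str)
    except ValueError:
        return None  # malformed token
    expected = _sign(f"{device_id}|{expiry_str}")
    if not hmac.compare_digest(sig, expected):
        return None  # forged or tampered token
    current = time.time() if now is None else now
    if current >= expiry:
        return None  # expired token
    return device_id
```

Because the device never holds `SERVER_SECRET`, dumping its storage yields at most one token that stops working within minutes, rather than a key that grants indefinite API access.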

The API Key Exposure: A Symptom of a Larger Problem

The exposed API keys aren't the core issue; they're a symptom. The Rabbit R1's architecture, as far as can be determined through reverse engineering of the publicly available SDK and analysis of its network traffic, relies heavily on centralized server infrastructure. The device itself performs limited processing, offloading the bulk of the AI workload to the cloud. This is a common pattern for devices built on LLMs, but it demands robust security protocols, and Rabbit's implementation appears to fall short. The reliance on easily extractable keys suggests a failure to implement mutual TLS (mTLS) authentication, a standard practice for securing API communication. mTLS requires both the device *and* the server to verify each other's identities, which would significantly reduce the risk of unauthorized access.
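
To make the mTLS point concrete, here is a minimal sketch using Python's standard `ssl` module showing how the two sides of an mTLS connection might be configured. The function names, the optional certificate paths, and the TLS 1.2 floor are illustrative assumptions, not Rabbit's or Humane's actual configuration. The line that makes it *mutual* is `verify_mode = ssl.CERT_REQUIRED` on the server context: without it, the server accepts any client, and a copied API key is all an attacker needs.

```python
import ssl
from typing import Optional


def make_server_context(cert_file: Optional[str] = None,
                        key_file: Optional[str] = None,
                        client_ca_file: Optional[str] = None) -> ssl.SSLContext:
    """Server side: present our own certificate AND demand one from the client."""
    ctx = ssl.SSLContext(ssl.PROTOCOL_TLS_SERVER)
    ctx.minimum_version = ssl.TLSVersion.TLSv1_2  # illustrative floor
    # The mTLS part: reject any client without a certificate signed by a trusted device CA.
    ctx.verify_mode = ssl.CERT_REQUIRED
    if cert_file:
        ctx.load_cert_chain(certfile=cert_file, keyfile=key_file)
    if client_ca_file:
        ctx.load_verify_locations(cafile=client_ca_file)  # CA that issued device certs
    return ctx


def make_device_context(device_cert_file: Optional[str] = None,
                        device_key_file: Optional[str] = None,
                        server_ca_file: Optional[str] = None) -> ssl.SSLContext:
    """Device side: verify the server AND present a per-device certificate."""
    # PROTOCOL_TLS_CLIENT verifies the server certificate and hostname by default.
    ctx = ssl.SSLContext(ssl.PROTOCOL_TLS_CLIENT)
    ctx.minimum_version = ssl.TLSVersion.TLSv1_2
    if server_ca_file:
        ctx.load_verify_locations(cafile=server_ca_file)
    if device_cert_file:
        ctx.load_cert_chain(certfile=device_cert_file, keyfile=device_key_file)
    return ctx
```

In use, the server would wrap its listening socket with `ctx.wrap_socket(sock, server_side=True)` and the device would connect with `ctx.wrap_socket(sock, server_hostname=...)`. Each device would carry its own certificate and private key, ideally in hardware-backed storage, so that one extracted credential cannot be reused across the fleet.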

Moreover, the device's communication with Rabbit's cloud servers isn't consistently encrypted. While some API calls use HTTPS, others appear to be transmitted in plaintext, leaving them vulnerable to man-in-the-middle attacks. This is particularly concerning given the device's intended use case: handling sensitive information such as login credentials, financial details, and personal preferences. The lack of end-to-end encryption also means that Rabbit itself has access to this data, raising further privacy concerns.
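
Whatever the backend does, a client can at least refuse to send anything over an unencrypted channel. The sketch below audits a list of endpoints and provides a guard that rejects plaintext schemes; the endpoint URLs and the helper names `plaintext_endpoints` and `require_tls` are hypothetical. Note what it does not do: TLS protects data in transit between device and server, but the server still sees that data in the clear, so this addresses the man-in-the-middle risk, not the end-to-end encryption gap.

```python
from typing import Iterable, List
from urllib.parse import urlsplit

# Schemes that carry traffic over TLS.
ENCRYPTED_SCHEMES = {"https", "wss"}


def plaintext_endpoints(urls: Iterable[str]) -> List[str]:
    """Return every endpoint that would send data without TLS."""
    return [u for u in urls if urlsplit(u).scheme.lower() not in ENCRYPTED_SCHEMES]


def require_tls(url: str) -> str:
    """Guard to call before any request: raise instead of sending in plaintext."""
    scheme = urlsplit(url).scheme.lower()
    if scheme not in ENCRYPTED_SCHEMES:
        raise ValueError(f"refusing unencrypted scheme {scheme!r}: {url}")
    return url


if __name__ == "__main__":
    # Hypothetical endpoints, for illustration only.
    endpoints = [
        "https://api.example.com/v1/actions",
        "http://api.example.com/v1/telemetry",
        "wss://stream.example.com/session",
    ]
    print(plaintext_endpoints(endpoints))
```

Running it flags only the `http://` telemetry endpoint; routing every outgoing request through a single guard like `require_tls` makes a stray plaintext call fail loudly during testing instead of leaking data in production.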

Beyond the Keys: The LAM Interface and Data Privacy

The Rabbit R1’s core innovation – the Large Action Model (LAM) – is also a potential vector for security breaches. LAM essentially allows the device to automate tasks by “learning” how a human user would perform them. This learning process involves recording user interactions and transmitting that data to Rabbit’s servers for analysis. The question is: what data is being collected, how is it being stored, and how is it being used? Rabbit’s privacy policy is vague on these details, stating only that data is collected for “improving the service.”

The potential for data leakage is exacerbated by the fact that the R1 doesn’t appear to have a robust sandboxing mechanism. This means that if a malicious website or app is accessed through the R1’s browser, it could potentially gain access to the device’s core functionality and data. This is a significant risk, especially given the device’s reliance on cloud-based processing.

What This Means for Enterprise IT

The security flaws in the Rabbit R1 have implications beyond individual users. The device’s potential for automation and integration with enterprise systems makes it an attractive target for attackers. Imagine a scenario where a compromised R1 is used to gain access to a company’s internal network or to launch a phishing attack. The consequences could be severe. Organizations should strongly consider prohibiting the use of the R1 on corporate networks until Rabbit addresses these security vulnerabilities.

“The rush to market with these AI-powered devices is creating a security Wild West,” says Dr. Anya Sharma, CTO of SecureAI, a cybersecurity firm specializing in AI-driven threat detection. “Companies are prioritizing features over security, and the result is a proliferation of vulnerable devices that are ripe for exploitation.”

Architectural Comparisons: Rabbit R1 vs. Humane AI Pin

Comparing the Rabbit R1 to its closest competitor, the Humane AI Pin, reveals a stark contrast in architectural approaches. The Humane AI Pin, while also cloud-dependent, employs a more layered security model, including hardware-level encryption and a dedicated security enclave for sensitive data. The R1, by contrast, appears to rely almost entirely on software-based security measures, which are inherently more vulnerable to attack. Here’s a simplified comparison:

| Feature | Rabbit R1 | Humane AI Pin |
|---|---|---|
| Hardware Encryption | Limited | AES 256-bit |
| Security Enclave | None | Dedicated Secure Element |
| API Authentication | Basic API Keys | Mutual TLS (mTLS) |
| Data Encryption (Transit) | Inconsistent HTTPS | HTTPS with TLS 1.3 |

This isn’t to say that the Humane AI Pin is immune to security vulnerabilities, but it demonstrates a more deliberate and comprehensive approach to security. The R1’s design feels rushed and under-engineered, prioritizing speed to market over fundamental security principles.

The 30-Second Verdict

The Rabbit R1 is a fascinating device with a lot of potential, but its security flaws are a major red flag. Until Rabbit addresses these vulnerabilities, it’s difficult to recommend this device to anyone who values their privacy and security. The ease of API key extraction and the lack of end-to-end encryption are unacceptable in today’s threat landscape.

The incident also highlights a broader trend in the AI hardware space: a lack of security expertise. Many of these companies are founded by software engineers and AI researchers, but they lack the deep understanding of hardware security that is necessary to build secure devices. This is a problem that needs to be addressed if we want to avoid a future filled with vulnerable AI companions.

Rabbit has issued a statement acknowledging the security concerns and promising a software update to address them. However, how effective these updates will be remains to be seen; the underlying architectural flaws may be too deeply ingrained to fix easily. The Verge's coverage provides a good overview of the situation. Further analysis from security researchers, such as those at NCC Group, will be crucial in determining the true extent of the risks. The situation also underscores the importance of independent security audits, as highlighted by Ars Technica's reporting. The Rabbit R1 serves as a cautionary tale: innovation without security is a recipe for disaster.

“We’re seeing a pattern of companies releasing AI-powered devices without adequately addressing security concerns. This is a dangerous trend that could have serious consequences for consumers and businesses alike.” – Dr. Anya Sharma, CTO, SecureAI.