The internet hiccuped today, and for a fleeting moment, access to a YouTube video – a seemingly innocuous clip about AI-generated voices – was blocked for some users. The message? “Unusual traffic from your computer network.” It’s a digital gatekeeper’s curt dismissal, and while it is easy to write off as a temporary glitch, it points to a growing tension: the escalating arms race between online security and increasingly sophisticated automated activity. This isn’t just about getting back to your cat videos; it’s a signal flare about the future of access, authenticity, and the very fabric of the open web.

The Rise of “Bad Bots” and the Erosion of Trust

Google’s automated defenses, as the message explains, flagged activity originating from the IP address 107.173.36.72 as potentially violating its Terms of Service. The culprit? Likely “bad bots” – automated programs designed to scrape data, spread misinformation, or launch denial-of-service attacks. These aren’t the simple bots of yesteryear. Modern bots leverage increasingly realistic techniques, mimicking human behavior to evade detection. The YouTube video in question, featuring examples of AI voice cloning, is particularly sensitive. The technology demonstrated has clear potential for malicious use, from deepfake scams to political disinformation campaigns. Cloudflare’s detailed explanation of bot traffic illustrates the sheer scale of the problem, estimating that bots account for roughly two-thirds of all internet traffic.
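To make the idea of “unusual traffic” concrete, here is a minimal sketch of one common approach: counting requests per IP address inside a sliding time window. This is not Google’s actual detection logic, which is not public; the class name, the thresholds, and the example address (taken from a range reserved for documentation) are all assumptions chosen for illustration.

```python
from collections import defaultdict, deque


class SlidingWindowFlagger:
    """Hypothetical helper: flags an IP that sends too many requests too quickly."""

    def __init__(self, max_requests: int, window_seconds: float):
        self.max_requests = max_requests
        self.window_seconds = window_seconds
        # Maps each IP to the timestamps of its recent requests.
        self._hits = defaultdict(deque)

    def record(self, ip: str, timestamp: float) -> bool:
        """Record one request and return True if the IP now looks unusual."""
        hits = self._hits[ip]
        hits.append(timestamp)
        # Discard requests that have aged out of the window.
        while hits and timestamp - hits[0] >= self.window_seconds:
            hits.popleft()
        return len(hits) > self.max_requests


# Assumed policy: more than 3 requests in 10 seconds is suspicious.
flagger = SlidingWindowFlagger(max_requests=3, window_seconds=10.0)
burst = [flagger.record("203.0.113.7", t) for t in (0.0, 1.0, 2.0, 3.0)]
```

In this sketch the fourth request in the burst trips the threshold. A threshold this blunt also shows how false positives arise: many people behind one shared address, such as an office or a VPN, can look like a single very busy client.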

Beyond Denial of Service: The Economic Impact of Bot Activity

While denial-of-service attacks – overwhelming a server with traffic to make it unavailable – are a common concern, the economic impact of bad bots extends far beyond temporary outages. They contribute to ad fraud, costing advertisers billions annually. They scrape valuable data, undermining businesses that rely on intellectual property. And they artificially inflate website traffic metrics, distorting market analysis. The financial stakes are enormous. According to a 2023 report by Imperva, bad bot traffic cost businesses an estimated $76 billion in losses. Imperva’s 2023 Bad Bot Report provides a comprehensive overview of the evolving bot landscape and its associated costs.

The AI Arms Race: Cloning Voices and Evading Detection

The YouTube video itself is a crucial piece of the puzzle. It showcases the rapid advancements in AI voice cloning technology. Services like ElevenLabs and Resemble AI allow users to create remarkably realistic synthetic voices from just a few seconds of audio. While these tools have legitimate applications – accessibility features, content creation, and voice acting – they also present a significant security risk. The ability to convincingly impersonate individuals opens the door to a new wave of sophisticated scams and disinformation.

“The speed at which these AI voice cloning technologies are developing is truly astonishing. We’re moving beyond simple text-to-speech and into a realm where it’s becoming increasingly hard to distinguish between a real human voice and a synthetic one. This poses a serious challenge for authentication and trust online.”

– Dr. Emily Carter, Cybersecurity Analyst at the Center for Strategic and International Studies

This, in turn, fuels the demand for more sophisticated bot detection techniques. Security firms are employing machine learning algorithms to analyze user behavior, identify anomalies, and distinguish between legitimate users and automated programs. However, the bots are constantly evolving, learning to mimic human patterns and evade detection. It’s a perpetual cat-and-mouse game.
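Production systems rely on trained models over many signals, but a single behavioral signal is enough to show the principle. The sketch below is a simple statistical heuristic rather than machine learning, and it assumes that naive bots fire requests at suspiciously regular intervals while humans are erratic. The function names and the 0.1 threshold are hypothetical.

```python
import statistics


def interval_cv(timestamps):
    """Coefficient of variation of the gaps between consecutive requests.

    Returns None when there are too few requests to judge.
    """
    gaps = [later - earlier for earlier, later in zip(timestamps, timestamps[1:])]
    if len(gaps) < 2:
        return None
    mean_gap = statistics.mean(gaps)
    if mean_gap == 0:
        return 0.0  # All requests arrived at the same instant.
    return statistics.pstdev(gaps) / mean_gap


def looks_automated(timestamps, cv_threshold=0.1):
    """Flag traffic whose timing is too regular to be plausibly human."""
    cv = interval_cv(timestamps)
    return cv is not None and cv < cv_threshold


metronome = looks_automated([0.0, 1.0, 2.0, 3.0, 4.0])  # evenly spaced requests
erratic = looks_automated([0.0, 0.4, 3.1, 3.9, 9.0])    # irregular, human-like gaps
```

The weakness is the one the cat-and-mouse dynamic predicts: a bot that adds random jitter to its delays slips past this check, which is why defenders keep layering on additional signals.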

The Implications for Content Creators and Platforms

The incident highlights a growing dilemma for content platforms like YouTube. They are caught between the need to protect users from malicious activity and the desire to maintain an open and accessible platform. Overly aggressive security measures can lead to false positives, blocking legitimate users and stifling free expression. The challenge lies in finding the right balance. YouTube, like other platforms, is investing heavily in AI-powered content moderation tools, but these tools are not foolproof. YouTube’s official blog post on responsible AI details their ongoing efforts to address these challenges.

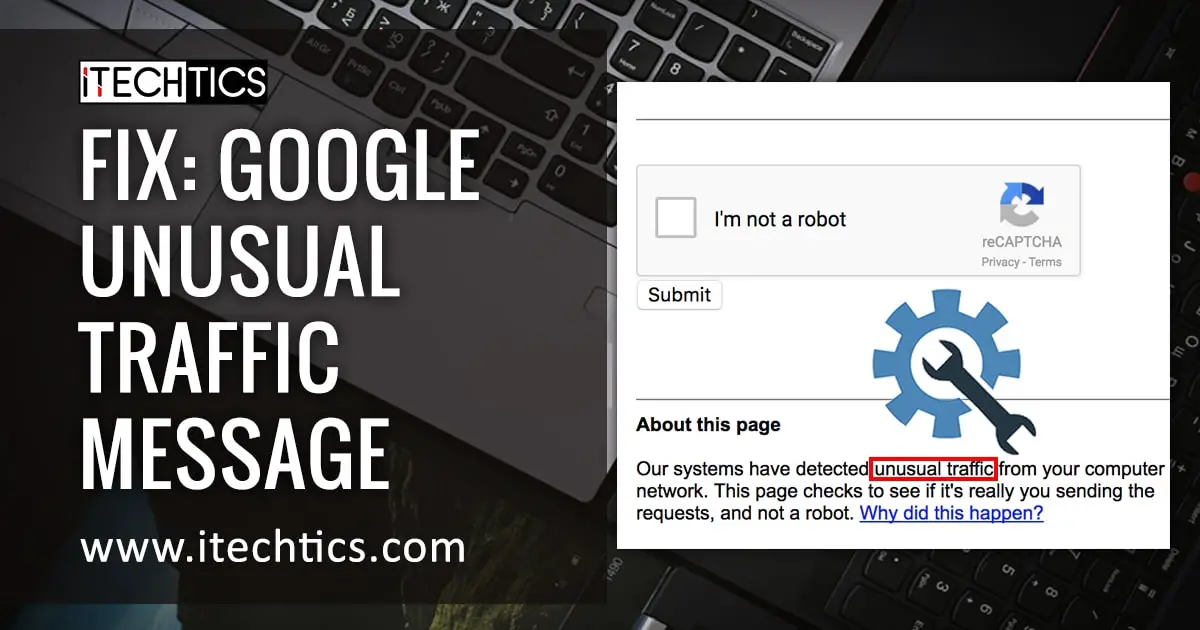

The Role of CAPTCHAs and Beyond

For years, CAPTCHAs – those distorted images of letters and numbers – have been a common defense against bots. However, even CAPTCHAs are becoming less effective as AI algorithms learn to solve them with increasing accuracy. More advanced techniques, such as behavioral biometrics (analyzing how users interact with a website) and device fingerprinting (identifying unique characteristics of a user’s device), are gaining traction. These methods, though, raise privacy concerns of their own, because the same signals that separate humans from bots can also be used to track individuals across sites.
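Device fingerprinting can be illustrated with a few lines of code. The sketch below assumes a site has already collected some browser attributes and simply hashes them into a stable identifier; the attribute names are hypothetical, and real fingerprinting products draw on far more signals than this.

```python
import hashlib
import json


def device_fingerprint(attributes: dict) -> str:
    """Hash a set of device attributes into a stable hex identifier."""
    # Sorting keys makes the result independent of attribute order.
    canonical = json.dumps(attributes, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


# Hypothetical attributes a site might observe for one visitor.
visit_one = {"user_agent": "ExampleBrowser/1.0", "screen": "1920x1080", "timezone": "UTC+1"}
visit_two = {"timezone": "UTC+1", "screen": "1920x1080", "user_agent": "ExampleBrowser/1.0"}
other_device = {"user_agent": "ExampleBrowser/1.0", "screen": "1366x768", "timezone": "UTC+1"}

same_device = device_fingerprint(visit_one) == device_fingerprint(visit_two)
different_device = device_fingerprint(visit_one) != device_fingerprint(other_device)
```

The privacy concern is visible in the code itself: no cookie or login is involved, yet the same visitor produces the same identifier on every visit.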

A Future of Frictionless Verification?

The long-term solution may lie in decentralized identity solutions and blockchain-based verification systems. These technologies could allow users to prove their authenticity without relying on centralized authorities or revealing sensitive personal information. The concept of “soulbound tokens” – non-transferable digital tokens linked to an individual’s identity – is gaining attention as a potential mechanism for establishing trust online.

“We’re seeing a shift towards a more decentralized model of identity verification. The goal is to create a system where individuals have greater control over their own data and can prove their authenticity without compromising their privacy. Blockchain technology offers a promising pathway towards achieving this goal.”

– Alex Tapscott, CEO of Ninepoint Digital Assets and author of *Blockchain Revolution*

The temporary block experienced by some YouTube users is a microcosm of a larger struggle. It’s a reminder that the internet, once envisioned as a boundless realm of open access, is becoming increasingly gated and regulated. The question is not whether security measures will be implemented, but how they will be implemented – and at what cost to freedom, privacy, and innovation. What level of friction are *you* willing to accept in exchange for a more secure online experience? And how do we ensure that these security measures don’t disproportionately impact marginalized communities or stifle legitimate expression?