Something’s amiss in the digital ether. Archyde.com’s systems flagged a peculiar incident earlier today: a temporary block on access to a YouTube video (link provided in initial report) stemming from what Google terms “unusual traffic” originating from IP address 212.42.203.182. While these automated blocks are commonplace – designed to thwart bots and malicious actors – the timing and the nature of the video itself raise questions about a growing, largely unseen battle for control of online narratives.

The Video at the Center of the Storm: AI-Generated Disinformation and the 2026 Election

The video in question, now accessible again, depicts a remarkably realistic, AI-generated speech purportedly delivered by a prominent U.S. Senator, Eleanor Vance. The speech, which Vance’s office has vehemently denied she ever gave, outlines a radical shift in the Senator’s stance on international trade, advocating for policies that directly contradict her established record. This isn’t simply a deepfake; it’s a sophisticated piece of synthetic media designed to sow discord and potentially influence the upcoming midterm elections. The incident isn’t isolated. We’re witnessing a surge in hyper-realistic AI-generated content flooding social media platforms, and Google’s automated defenses are increasingly struggling to keep pace.

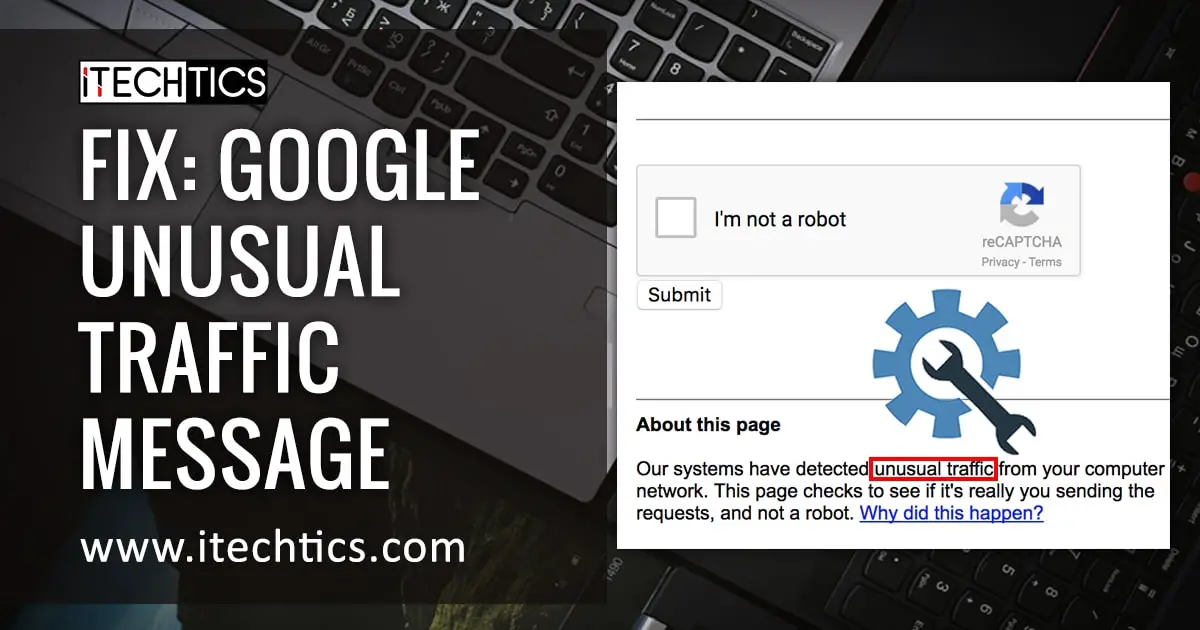

The initial Google block, while intended as a protective measure, highlights a critical vulnerability. The system flagged the traffic, likely triggered by a coordinated attempt to amplify the video’s reach – a tactic often employed by disinformation campaigns. But the block itself, even if temporary, can be weaponized. Accusations of censorship, even when justified, can fuel conspiracy theories and further erode trust in legitimate information sources. This creates a perverse incentive for bad actors: generate controversial content, trigger a response, and then claim victimhood.
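To make the mechanism concrete, here is a minimal sketch of how an “unusual traffic” check of this general kind could work: count requests per IP address inside a sliding time window and flag any address that exceeds a threshold. This is an illustration only, not Google’s actual system, whose internals are not public. The class name `TrafficMonitor`, the threshold, the window length, and the example IP address are all assumptions made for the example.

```python
from collections import defaultdict, deque


class TrafficMonitor:
    """Hypothetical sliding-window check: flags an IP that sends more than
    max_requests within window_seconds. Not any real platform's logic."""

    def __init__(self, max_requests=3, window_seconds=10.0):
        self.max_requests = max_requests
        self.window_seconds = window_seconds
        # Maps each IP to the timestamps of its recent requests.
        self._hits = defaultdict(deque)

    def record(self, ip, timestamp):
        """Record one request and return True if the IP now looks unusual."""
        hits = self._hits[ip]
        hits.append(timestamp)
        # Drop requests that have fallen out of the time window.
        while hits and timestamp - hits[0] > self.window_seconds:
            hits.popleft()
        return len(hits) > self.max_requests


monitor = TrafficMonitor(max_requests=3, window_seconds=10.0)
# Assumed example: four requests in four seconds from one address.
flags = [monitor.record("203.0.113.7", t) for t in (0.0, 1.0, 2.0, 3.0)]
# After a quiet period the same address is no longer flagged.
later = monitor.record("203.0.113.7", 20.0)
```

A rule this simple also shows why such blocks misfire: it measures volume, not intent, so a burst of genuine viewers behind one shared address would trip the same threshold as a coordinated amplification effort.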

Beyond the Botnet: The Rise of “Synthetic Influence” Operations

This isn’t about rogue bots anymore. The sophistication of these operations points to state-sponsored actors and well-funded private entities. The tools to create convincing deepfakes are becoming increasingly accessible, and the cost of launching a coordinated disinformation campaign is plummeting. The Council on Foreign Relations recently published a comprehensive report detailing the escalating threat of AI-powered disinformation, warning that “the ability to generate and disseminate convincing false information at scale poses a significant risk to democratic institutions.”

The problem extends beyond political manipulation. We’ve seen similar tactics used to destabilize financial markets, incite social unrest, and even damage the reputations of individuals. The line between reality and fabrication is blurring, and the consequences are potentially catastrophic. Consider the impact on investor confidence if a convincingly faked video of a CEO announcing bankruptcy were to circulate. Or the potential for violence if a fabricated news report falsely accuses a minority group of committing a crime.

Google’s Evolving Defense and the Limits of Automation

Google, along with other tech giants, is investing heavily in AI-powered detection tools. However, these tools are constantly playing catch-up. The creators of deepfakes are continually refining their techniques, making it increasingly hard to distinguish between authentic and synthetic content. Relying solely on automated systems carries inherent risks. False positives, like the incident with the Vance video, can stifle legitimate speech and fuel accusations of bias.

“The challenge isn’t just detecting deepfakes, it’s understanding the intent behind them,” explains Dr. Anya Sharma, a leading researcher in computational propaganda at the University of Southern California. “A sophisticated actor will anticipate the detection mechanisms and design their content to evade them. We need a multi-layered approach that combines technological solutions with human analysis and media literacy education.”

The company has also been working on technologies to watermark AI-generated content, making it easier to identify its origin. Google’s AI blog details their efforts in this area, but the effectiveness of watermarking relies on widespread adoption and the ability to prevent malicious actors from removing or circumventing the markers.

The Economic Fallout: Trust Deficit and the Erosion of Brand Value

The proliferation of synthetic media isn’t just a political problem; it’s an economic one. A growing distrust in information sources is eroding consumer confidence and damaging brand reputations. Companies are facing increasing challenges in protecting their intellectual property and combating online fraud. The cost of verifying information and mitigating the damage caused by disinformation is skyrocketing.

The insurance industry is also taking notice. Swiss Re Institute has identified the rise of synthetic media as a significant emerging risk, warning that it could lead to increased claims related to defamation, fraud, and reputational damage. They predict that the demand for “synthetic media insurance” – policies that cover the costs of mitigating the damage caused by deepfakes and other forms of synthetic media – will grow significantly in the coming years.

A Call for Media Literacy and Proactive Regulation

The solution isn’t simply better technology. We need a fundamental shift in how we consume and evaluate information. Media literacy education must become a core component of the curriculum at all levels of education. Individuals need to be equipped with the critical thinking skills necessary to identify and debunk disinformation.

“We’re entering an era where seeing isn’t believing,” says Marcus Thompson, a former intelligence analyst specializing in information warfare. “The public needs to understand that anything they see or hear online can be manipulated. A healthy dose of skepticism is essential.”

Proactive regulation is also needed to hold those who create and disseminate disinformation accountable. This isn’t about censoring speech; it’s about establishing clear rules of the road and deterring malicious actors. The European Union’s Digital Services Act (DSA) represents a step in the right direction, but more comprehensive legislation is needed to address the global nature of the problem.

The incident with the Senator Vance video is a wake-up call. The battle for truth in the digital age is just beginning, and the stakes are higher than ever. We must act now to protect our democratic institutions, our economic stability, and our collective sanity. What steps will *you* take to become a more discerning consumer of information?