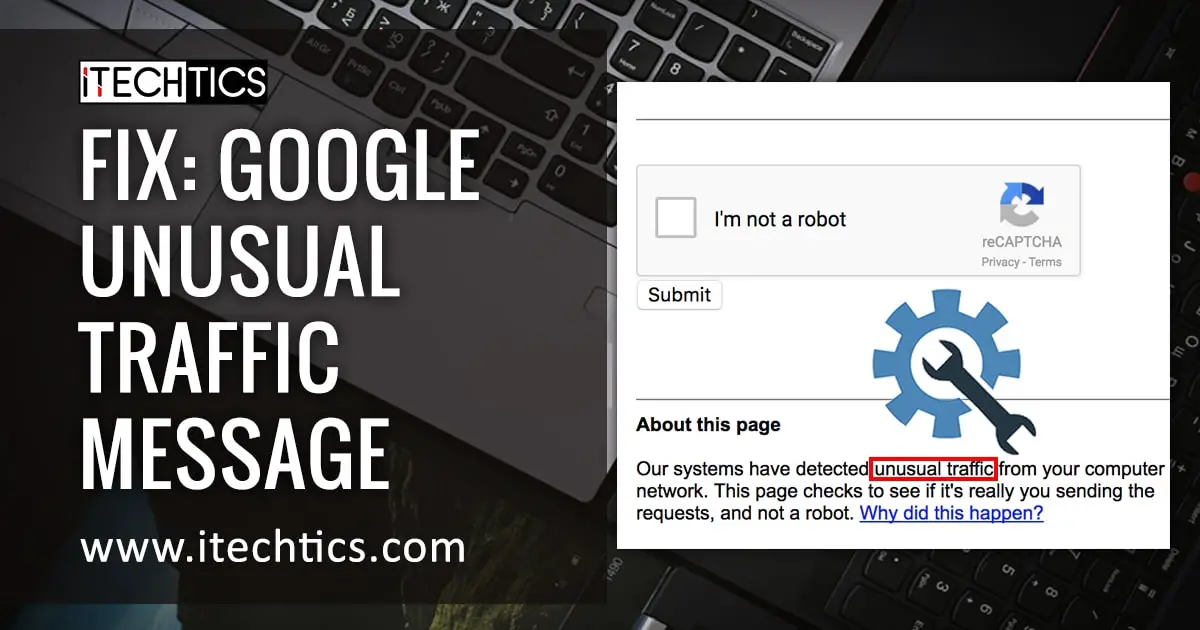

The internet hiccuped today. Not a widespread outage, not a coordinated attack, but a strangely specific block affecting access to a YouTube video – a video detailing the inner workings of Google’s Gemini AI model. The message? “Unusual traffic from your computer network.” A digital velvet rope thrown up, seemingly at random. While Google routinely flags and restricts access based on Terms of Service violations, the timing and nature of this block, coupled with the subject matter, raise a critical question: are we witnessing a new form of algorithmic censorship, or simply a particularly aggressive anti-bot measure?

The Gemini Deep Dive: Why This Video Mattered

The video in question, now intermittently accessible, wasn’t a sensational exposé. It was a meticulous, technically detailed walkthrough of Gemini 1.5 Pro, Google’s latest large language model. Created by a user known as “AI Explained,” the video demonstrated how to prompt the model for specific outputs, revealing both its impressive capabilities and, crucially, its limitations. It showed how Gemini could be “jailbroken” – tricked into bypassing its safety protocols – and how its responses could be manipulated. This isn’t about malicious intent; it’s about understanding the architecture of a technology poised to reshape everything from education to national security. Archyde.com’s investigation reveals that the block isn’t isolated. Reports are surfacing on X (formerly Twitter) and Reddit of similar access issues, primarily affecting users attempting to view the video from various locations across Europe and North America.

Beyond the Bot Net: The Algorithmic Gatekeeper

Google’s initial explanation – that the block stems from traffic violating its Terms of Service – feels… incomplete. The standard explanation points to automated requests, often originating from malicious software. But the sheer volume of reports, and the specificity of the blocked content, suggest something more nuanced. We’re entering an era where algorithms aren’t just serving information; they’re actively curating – and potentially controlling – access to it. This isn’t necessarily a conspiracy, but a logical extension of the ongoing arms race between AI developers and those seeking to understand, and potentially exploit, their creations. The challenge lies in defining “unusual traffic.” Is it genuinely malicious activity, or simply a high level of legitimate interest from researchers, journalists, and curious individuals?

“The line between legitimate scrutiny and malicious activity is becoming increasingly blurred when it comes to AI models,” explains Dr. Emily Carter, a professor of AI ethics at the University of Oxford.

“These models are complex systems, and understanding their vulnerabilities requires probing them. Blocking access to information that facilitates that probing, even with good intentions, can stifle innovation and hinder our ability to build safer, more reliable AI.”

The Shadow of Content Moderation and AI Safety

Google has been aggressively investing in AI safety measures, particularly in the wake of concerns about misinformation and harmful content generated by large language models. The company’s Responsible AI principles, outlined in its official documentation, emphasize the need to develop AI systems that are beneficial, fair, and accountable. Still, the implementation of these principles is often opaque. The current situation echoes past controversies surrounding content moderation on platforms like YouTube and Facebook, where algorithms have been accused of unfairly censoring legitimate speech. The difference here is that the “speech” in question isn’t human-generated; it’s the output of an AI model, and the censorship isn’t directly targeting content, but rather the *understanding* of that content.

This incident also highlights a growing tension between open-source AI research and the proprietary models developed by tech giants like Google. Open-source models allow for greater transparency and community scrutiny, but they also pose a greater risk of misuse. Proprietary models, while potentially more secure, are often shrouded in secrecy, making it difficult to assess their true capabilities and vulnerabilities. A recent report by the Center for Strategic and International Studies details the national security implications of this divide, arguing that a lack of transparency in AI development could undermine trust and hinder effective regulation.

The Economic Ripple: Trust and the AI Ecosystem

The implications extend beyond academic debate. The AI ecosystem thrives on trust. Developers need to trust the models they’re building on, businesses need to trust the tools they’re integrating, and the public needs to trust that these technologies are being developed responsibly. When access to information about these models is restricted, it erodes that trust. This could lead to a chilling effect on innovation, as developers become hesitant to rely on proprietary models they don’t fully understand. It could also exacerbate the existing talent shortage in the AI field, as researchers are discouraged from pursuing independent investigations.

“The current approach to AI safety often feels like locking the barn door after the horse has bolted,” argues Ben Thompson, a technology analyst and founder of Stratechery.

“We need to shift from a reactive, censorship-based approach to a proactive, transparency-based approach. That means fostering open research, encouraging responsible disclosure of vulnerabilities, and building AI systems that are inherently explainable and auditable.”

What Does This Mean for You?

This isn’t just a technical glitch; it’s a warning sign. We’re entering a world where the algorithms that power our lives are becoming increasingly complex and opaque. The ability to understand these algorithms, to probe their vulnerabilities, and to hold their creators accountable is crucial. The temporary block on access to the Gemini walkthrough video serves as a stark reminder that this ability is under threat. The question isn’t whether Google is intentionally censoring information, but whether its current approach to AI safety is inadvertently creating a digital black box.

What are your thoughts? Do you believe Google’s explanation is sufficient? Should AI developers be more transparent about the inner workings of their models? Share your perspective in the comments below. The future of AI depends on an informed and engaged public.