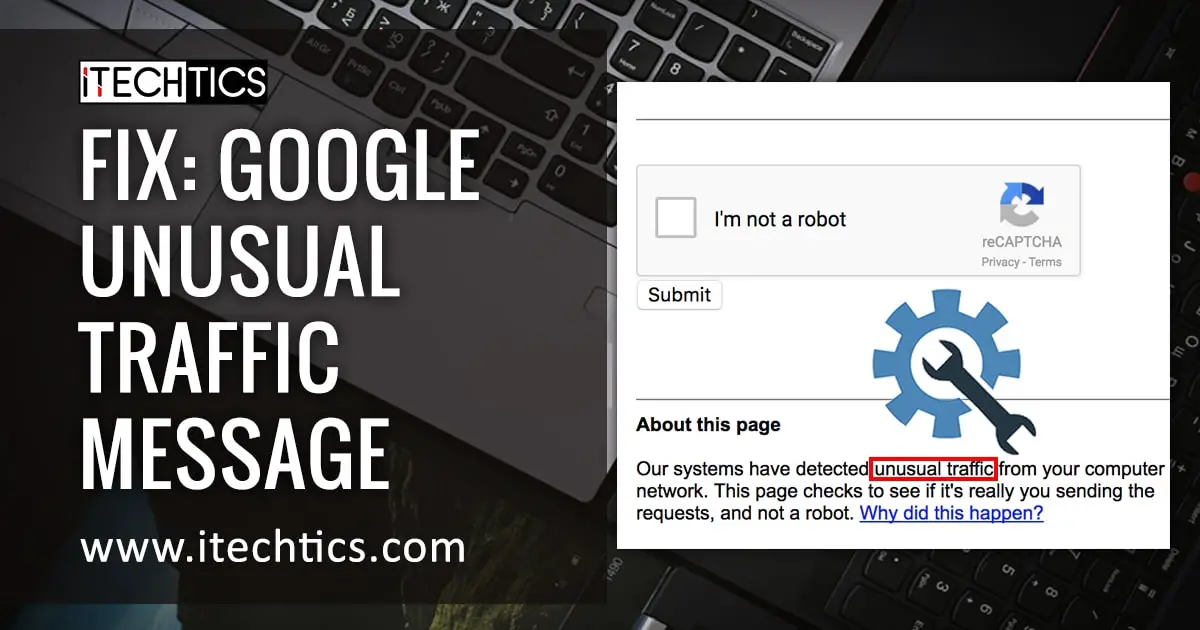

The internet hiccuped today, and for a fleeting moment, a rather ominous message appeared for some users attempting to access a YouTube video: a Google-branded block citing “unusual traffic” and potential violations of their Terms of Service. While the issue seems to have resolved itself quickly – the page now loads normally – the incident underscores a growing tension between automated security measures and legitimate user activity, and hints at a larger, largely unseen battle against sophisticated bot networks.

This wasn’t a widespread outage, and Google hasn’t issued a formal statement beyond the automated message itself. But the fact that it happened at all, and that the message specifically pointed to potential Terms of Service violations, is noteworthy. It’s a reminder that the internet isn’t a seamless, always-available space; it’s a complex system constantly under siege from malicious actors, and everyday users are increasingly caught in the crossfire.

The Rise of “Scraping as a Service” and its Impact on Content Platforms

The core issue isn’t necessarily Google’s security protocols, but the escalating sophistication of web scraping. Traditionally, scraping – the automated extraction of data from websites – was the domain of individual developers or small teams. Now, a burgeoning “Scraping as a Service” industry has emerged, offering readily available tools and infrastructure to anyone willing to pay. These services often mask their activity through rotating IP addresses, bots that imitate human browsing behavior, and even the use of compromised devices, making them incredibly difficult to detect and block.

Not all of this is harmless data collection. While some scraping is used for legitimate purposes – like price comparison or academic research – a significant portion fuels artificial intelligence (AI) model training, particularly in the rapidly expanding field of large language models (LLMs). These models *require* massive datasets, and scraping provides a cheap and readily available source. The YouTube video in question, featuring commentary on current events, may well have been a target for such data harvesting. Wired Magazine recently detailed the legal battles surrounding the use of scraped data for AI training, highlighting the ethical and legal gray areas.

Google’s Balancing Act: Security vs. User Experience

Google, like other major platforms, employs a multi-layered defense against scraping. Its layers include rate limiting (restricting the number of requests from a single IP address), CAPTCHAs, and behavioral analysis to identify bot-like activity. However, these measures aren’t foolproof. Aggressive blocking can inadvertently flag legitimate users, as appears to have happened in this instance. The challenge is finding the right balance between security and user experience. Too much security, and the internet becomes frustratingly inaccessible. Too little, and the platform is vulnerable to abuse.
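To make the rate-limiting idea concrete, here is a minimal sketch of a per-IP sliding-window limiter. It is an illustration only, not Google’s actual mechanism: the class name `SlidingWindowRateLimiter`, its parameters, and the thresholds are all hypothetical, and the IP addresses are reserved documentation addresses.

```python
from collections import defaultdict, deque


class SlidingWindowRateLimiter:
    """Allow at most `max_requests` per `window_seconds` for each client IP.

    Hypothetical illustration; real platforms combine many more signals.
    """

    def __init__(self, max_requests: int, window_seconds: float) -> None:
        self.max_requests = max_requests
        self.window_seconds = window_seconds
        # Maps each IP to the timestamps of its recently allowed requests.
        self._hits = defaultdict(deque)

    def allow(self, ip: str, now: float) -> bool:
        hits = self._hits[ip]
        # Drop timestamps that have fallen out of the window.
        while hits and now - hits[0] >= self.window_seconds:
            hits.popleft()
        if len(hits) >= self.max_requests:
            # Over the limit: a real system might show a CAPTCHA or block page here.
            return False
        hits.append(now)
        return True


# Example: three requests per ten seconds (assumed values).
limiter = SlidingWindowRateLimiter(max_requests=3, window_seconds=10.0)
for t in (0.0, 1.0, 2.0, 3.0):
    print(t, limiter.allow("203.0.113.7", now=t))
```

Because the limiter keys on the IP address alone, everyone behind a shared address (an office network, a VPN, a carrier-grade NAT) draws from the same budget. That is one plausible way a legitimate user ends up seeing an “unusual traffic” page.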

The IP address flagged in the error message – 45.249.59.243 – is registered to a hosting provider in Moldova. While that is not inherently suspicious, the country is a common location for hosting bot networks due to its relatively lax regulations and low cost. Cloudflare’s detailed explanation of bot management illustrates the complexities of identifying and mitigating malicious bot traffic.

The Economic Implications of the Scraping Arms Race

The escalating arms race between platforms and scrapers has significant economic implications. Platforms are forced to invest heavily in security infrastructure, diverting resources from innovation. Content creators, whose work is being scraped to train AI models, are largely excluded from the economic benefits. This raises fundamental questions about intellectual property rights and the future of content creation.

“The current situation is unsustainable. Content creators are essentially subsidizing the development of AI models that could ultimately displace them. We need a framework that ensures fair compensation and protects intellectual property rights in the age of AI.”

– Dr. Emily Carter, Professor of Digital Law, Stanford University

The impact extends beyond individual creators. The proliferation of AI-generated content, trained on scraped data, could devalue original reporting and analysis. Archyde.com, for example, relies on the painstaking work of investigative journalists. If AI can cheaply replicate similar content, the economic viability of such journalism is threatened. Reuters recently reported on the rise of AI “content farms” and their potential to erode trust in online information.

The Role of Browser Extensions and User Awareness

Users aren’t entirely powerless. Malicious browser extensions and scripts can often be the source of unwanted automated requests. Regularly reviewing and removing unused extensions is a crucial step in protecting your online activity. Being mindful of the websites you visit and the links you click can help prevent accidental exposure to malicious software.

The incident also highlights the importance of understanding how platforms like YouTube operate. While the automated message was alarming, it was ultimately a temporary measure designed to protect the platform from abuse.

Looking Ahead: Towards a More Sustainable Web Ecosystem

The Google block, though brief, serves as a warning. The current trajectory – unchecked scraping, escalating security measures, and a growing distrust of online information – is unsustainable. A more collaborative approach is needed, involving platforms, content creators, and policymakers. This could include developing standardized protocols for data access, implementing robust attribution mechanisms for AI-generated content, and strengthening intellectual property laws to protect creators.

“We’re entering a new era of digital rights management. The old models are simply not equipped to handle the scale and complexity of AI-driven data extraction. We need to rethink how we value and protect information in the 21st century.”

– Alex Thompson, Cybersecurity Analyst, Rand Corporation

The internet’s future hinges on our ability to navigate these challenges. The brief disruption experienced by some YouTube users today is a small glimpse into a larger, more complex battle for the soul of the web. What steps will *you* take to protect your online experience and support a more sustainable digital ecosystem?