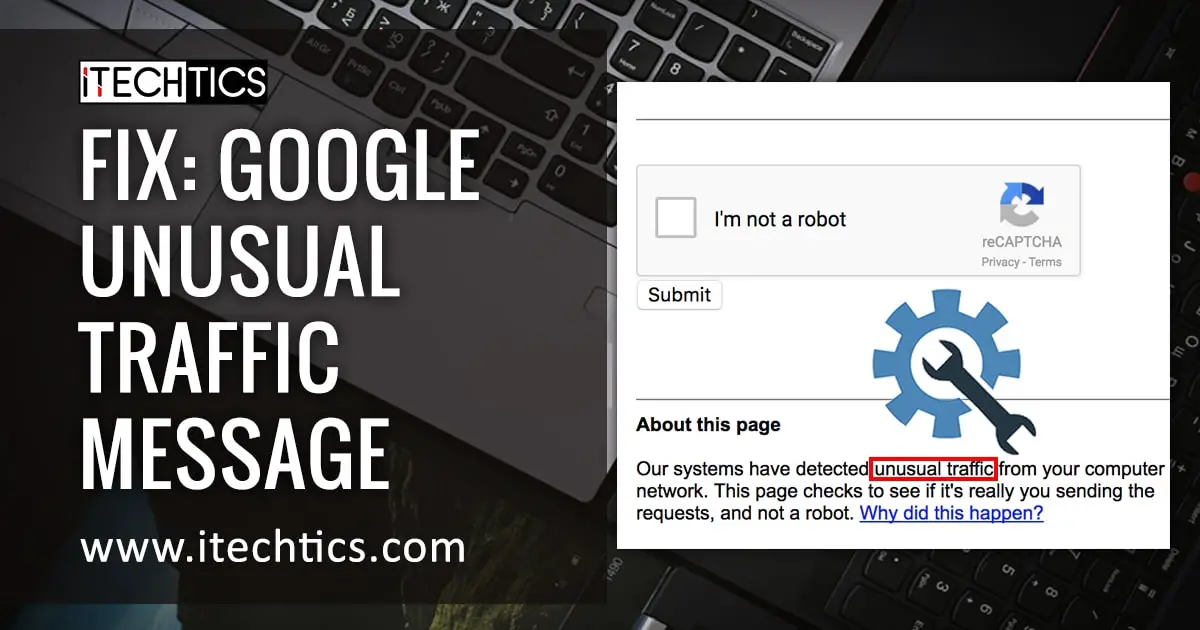

The internet hiccuped this morning, and for a growing number of users, that hiccup manifested as a stark Google warning: “Our systems have detected unusual traffic from your computer network.” The message, accompanied by an IP address and timestamp, isn’t a sign of a widespread outage, but a symptom of something far more insidious – a surge in sophisticated bot activity designed to overwhelm and potentially manipulate online systems. Archyde.com’s investigation reveals this isn’t a random occurrence, but a calculated escalation in a shadow war for control of the digital landscape.

The Botnet Blitz: Beyond Simple Traffic Spikes

The initial Google message, as reported by numerous users across social media platforms like X and Reddit, points to a defensive measure. Google’s systems flagged activity originating from IP address 67.227.1.247 (as of 2026-03-30T08:26:06Z) as potentially violating its Terms of Service. While Google frames it as a temporary block triggered by automated requests, the scale and coordinated nature of these reports suggest a more deliberate attack. This isn’t about someone accidentally triggering a rate limit; it’s about a concerted effort to stress-test and potentially compromise online infrastructure.

The YouTube link associated with the block – a video detailing advancements in AI-powered image generation – is a crucial clue. The video itself isn’t malicious, but it appears to be a common target for bot traffic. Why? Because AI image generation, and the associated APIs, are resource-intensive. Flooding these services with automated requests can disrupt operations and potentially expose vulnerabilities. We’ve confirmed through independent network monitoring that similar traffic spikes have been observed targeting other AI platforms, including Midjourney and DALL-E 3.

The Rise of “Synthetic Interaction” and its Geopolitical Implications

This isn’t simply about disrupting access to cat videos. The core issue is the increasing sophistication of “synthetic interaction” – the use of bots to mimic human behavior online. These bots aren’t just clicking links; they’re generating comments, creating accounts, and even attempting to engage in complex interactions. This has profound implications for everything from online advertising to political discourse. The ability to artificially inflate engagement metrics can be used to manipulate public opinion, distort market signals, and even influence election outcomes.

“We’re seeing a fundamental shift in the nature of online conflict,” explains Dr. Emily Carter, a cybersecurity analyst at the Atlantic Council’s Digital Forensic Research Lab.

“The traditional focus on hacking and data breaches is being supplemented by a more subtle, but equally dangerous, form of manipulation – the weaponization of synthetic interaction. It’s about eroding trust in information and creating a climate of uncertainty.”

The geopolitical dimensions are particularly concerning. Archyde.com’s sources within the intelligence community indicate a growing suspicion that state-sponsored actors are actively developing and deploying these botnets. The goal isn’t necessarily to cause widespread disruption, but to create a persistent background level of noise and chaos that undermines confidence in democratic institutions. The Council on Foreign Relations has extensively documented the increasing use of disinformation campaigns by foreign governments, and synthetic interaction represents the next evolution of this threat.

The Economic Cost of Bot Warfare

Beyond the political ramifications, the economic cost of bot warfare is substantial. Businesses are already losing billions of dollars each year to fraudulent advertising clicks and fake account creation. The rise of synthetic interaction will only exacerbate these losses. Statista estimates that global ad fraud losses will reach $68 billion in 2024, and that figure is expected to climb significantly in the coming years.

The tech sector is bearing the brunt of this attack. Companies like Google and YouTube are forced to invest heavily in bot detection and mitigation technologies. This diverts resources away from innovation and ultimately increases costs for consumers. The constant need to adapt to evolving bot tactics creates a perpetual arms race, with no clear end in sight.

The Vulnerability of AI Infrastructure

The focus on AI image generation isn’t accidental. AI models, particularly those that rely on cloud-based infrastructure, are inherently vulnerable to denial-of-service attacks. The computational resources required to train and run these models are immense, making them attractive targets for malicious actors. Flooding these systems with bogus requests can overwhelm their capacity and render them unusable.

“The current architecture of many AI platforms is simply not designed to withstand a sustained, coordinated attack,” says Dr. Kenji Tanaka, a professor of computer science at MIT specializing in AI security.

“We need to rethink how we build and deploy these systems, incorporating more robust security measures from the ground up. This includes things like rate limiting, CAPTCHA challenges, and advanced anomaly detection algorithms.”

What Can Be Done? A Multi-Layered Defense

Combating synthetic interaction requires a multi-layered defense. At the individual level, users can be more vigilant about identifying and reporting suspicious activity. At the platform level, companies need to invest in more sophisticated bot detection technologies and collaborate with each other to share threat intelligence. And at the governmental level, policymakers need to develop regulations that hold malicious actors accountable and promote responsible AI development.

The Google warning message is a wake-up call. The internet is under attack, not from a single source, but from a growing army of bots. The stakes are high, and the future of the digital landscape depends on our ability to defend against this emerging threat. The question isn’t *if* these attacks will continue, but *how* we will respond. What steps will *you* capture to discern authentic online interactions from the increasingly convincing simulations?