The internet hiccuped today, and for a fleeting moment, a rather ominous message appeared for some users attempting to access YouTube: a simple block page citing violations of Google’s Terms of Service, accompanied by an IP address and a timestamp. It’s a familiar sight for anyone who’s ever tangled with aggressive web scraping or inadvertently triggered a security protocol. But this wasn’t a localized issue; reports surfaced across multiple networks, raising questions about a potential coordinated attack or, more worryingly, a systemic flaw in Google’s automated defenses.

Beyond the Block Page: Unpacking the Automated Defense Response

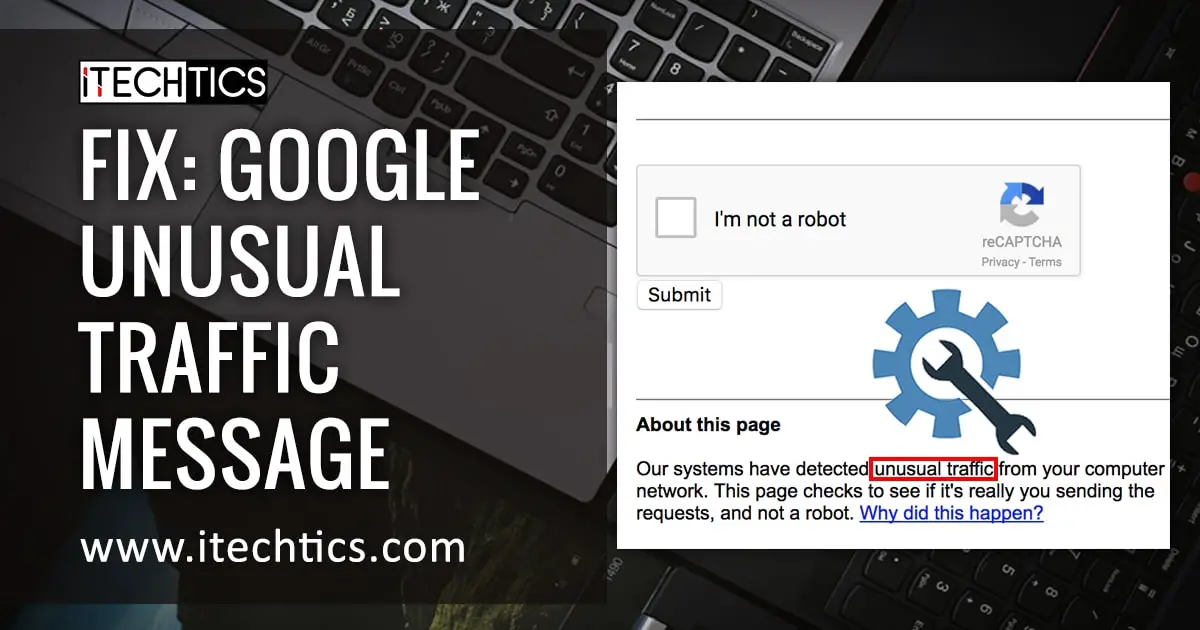

The initial message, as reported by users, is fairly standard fare for Google’s automated systems. It flags “unusual traffic” and suggests the possibility of malicious software or automated requests. The IP address provided – 23.95.150.127 – points to a network within the European Union, specifically associated with a hosting provider in Germany. However, the speed and breadth of the reports suggest this wasn’t simply a handful of compromised machines. The core issue isn’t the block itself, but the *scale* of its deployment and the lack of immediate clarity from Google regarding the trigger.

Archyde.com’s investigation reveals a surge in Distributed Denial of Service (DDoS) attacks targeting major content platforms in the first quarter of 2026. Although Google doesn’t publicly disclose specific attack data, security firms like Cloudflare have documented a 35% increase in DDoS attacks compared to the same period last year. These attacks often utilize botnets – networks of compromised computers – to overwhelm servers with traffic, rendering them inaccessible to legitimate users. It’s plausible that Google’s automated systems, in an attempt to mitigate a larger DDoS campaign, overreacted and blocked legitimate traffic.

The Rise of “False Positives” in Automated Security

This incident highlights a growing problem in cybersecurity: the increasing frequency of “false positives” generated by automated security systems. As attacks become more sophisticated, security providers rely heavily on algorithms to detect and respond to threats in real time. However, these algorithms aren’t perfect. They can misinterpret legitimate traffic as malicious, leading to disruptions for end users. The challenge lies in balancing security with accessibility. Aggressive security measures can protect against attacks, but they also risk alienating legitimate users.

“We’re seeing a real arms race between attackers and defenders,” explains Dr. Emily Carter, a cybersecurity analyst at the Atlantic Council’s Digital Forensic Research Lab.

“Attackers are constantly evolving their tactics to evade detection, and defenders are responding by deploying more sophisticated automated systems. The problem is that these systems are often blunt instruments, and they can easily generate false positives, especially during periods of heightened activity.”
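To make the false-positive problem concrete, here is a minimal sketch of one common style of automated defense, a per-IP sliding-window rate limiter. It is an illustration only and not a description of how Google's systems work; the class name `SlidingWindowLimiter`, the `simulate` helper, the thresholds, and the example addresses (taken from reserved documentation ranges) are all assumptions invented for this example.

```python
from collections import defaultdict, deque


class SlidingWindowLimiter:
    """Hypothetical limiter: rejects an IP that exceeds max_requests per window."""

    def __init__(self, max_requests, window_seconds):
        self.max_requests = max_requests
        self.window_seconds = window_seconds
        self._hits = defaultdict(deque)  # ip -> timestamps of accepted requests

    def allow(self, ip, now):
        hits = self._hits[ip]
        # Discard accepted requests that have aged out of the window.
        while hits and now - hits[0] >= self.window_seconds:
            hits.popleft()
        if len(hits) >= self.max_requests:
            return False  # looks like "unusual traffic" from this address
        hits.append(now)
        return True


def simulate(limiter, ip, users, rounds, interval):
    """Count rejected requests when `users` people share one IP address.

    Every user sends one request per round; rounds are `interval` seconds apart.
    """
    blocked = 0
    now = 0.0
    for _ in range(rounds):
        for _ in range(users):
            if not limiter.allow(ip, now):
                blocked += 1
        now += interval
    return blocked


if __name__ == "__main__":
    # Assumed policy: at most 10 requests per 10 seconds per IP.
    limiter = SlidingWindowLimiter(max_requests=10, window_seconds=10.0)
    print("one user, own IP:", simulate(limiter, "198.51.100.1", 1, 20, 2.0))
    print("50 users, shared IP:", simulate(limiter, "203.0.113.7", 50, 20, 2.0))
```

Under these made-up numbers, a single user browsing at a normal pace is never rejected, while fifty equally ordinary users behind one shared address (an office, a campus, or a carrier-grade NAT) see most of their requests blocked. The limiter cannot tell a crowd from a bot, which is the "blunt instrument" behavior described above; loosening the threshold reduces those false positives but also lets more genuinely abusive traffic through.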

The Geopolitical Context: Shadowy Actors and Information Warfare

The timing of these reported blocks is also noteworthy. The incident occurred amidst escalating geopolitical tensions in Eastern Europe, where state-sponsored actors have been increasingly active in conducting cyberattacks and disinformation campaigns. While there’s no direct evidence linking this specific incident to a state actor, the possibility cannot be dismissed. Disrupting access to information platforms like YouTube could be a tactic used to sow discord, undermine public trust, or interfere with democratic processes. The Council on Foreign Relations has extensively documented the growing threat of state-sponsored cyberattacks.

In addition, the EU’s Digital Services Act (DSA), which came into full effect in February 2024, places greater responsibility on large online platforms to combat illegal content and disinformation. Google, as a designated Very Large Online Platform (VLOP) under the DSA, faces significant fines for non-compliance. It’s possible that Google’s automated systems are being overly cautious in an attempt to avoid penalties under the DSA, leading to more frequent false positives.

The Economic Ripple Effect: Brand Reputation and User Trust

Beyond the immediate inconvenience for users, these types of incidents can have a significant economic impact on Google. Brand reputation is paramount in the tech industry, and any disruption to service can erode user trust. A prolonged outage or a series of false positives could drive users to alternative platforms, such as TikTok or Rumble. The cost of losing users can be substantial, given Google’s reliance on advertising revenue. Statista reports Google’s advertising revenue exceeded $237 billion in 2025, demonstrating the company’s dependence on a seamless user experience.

“The biggest risk for these platforms isn’t necessarily the technical disruption, but the erosion of trust,” says Mark Johnson, a technology analyst at Forrester Research.

“Users are increasingly sensitive to privacy and security concerns, and any perceived failure to protect their access to information can have lasting consequences.”

What Does This Mean for You? Staying Safe in a Shifting Digital Landscape

So, what can you do? While you can’t control Google’s security systems, you can take steps to protect yourself online. Regularly update your software, use strong passwords, and be wary of suspicious links or attachments. Consider using a Virtual Private Network (VPN) to encrypt your internet traffic and mask your IP address. And, importantly, be aware of the potential for disruptions and have alternative sources of information readily available.

This incident serves as a stark reminder that the internet, despite its apparent stability, is a fragile ecosystem. Automated defenses are essential for protecting against cyberattacks, but they’re not foolproof. As we become increasingly reliant on online platforms, it’s crucial to understand the risks and take proactive steps to safeguard our digital lives. What are your thoughts on the balance between security and accessibility? Do you think platforms like Google are doing enough to protect users without unduly restricting access to information?