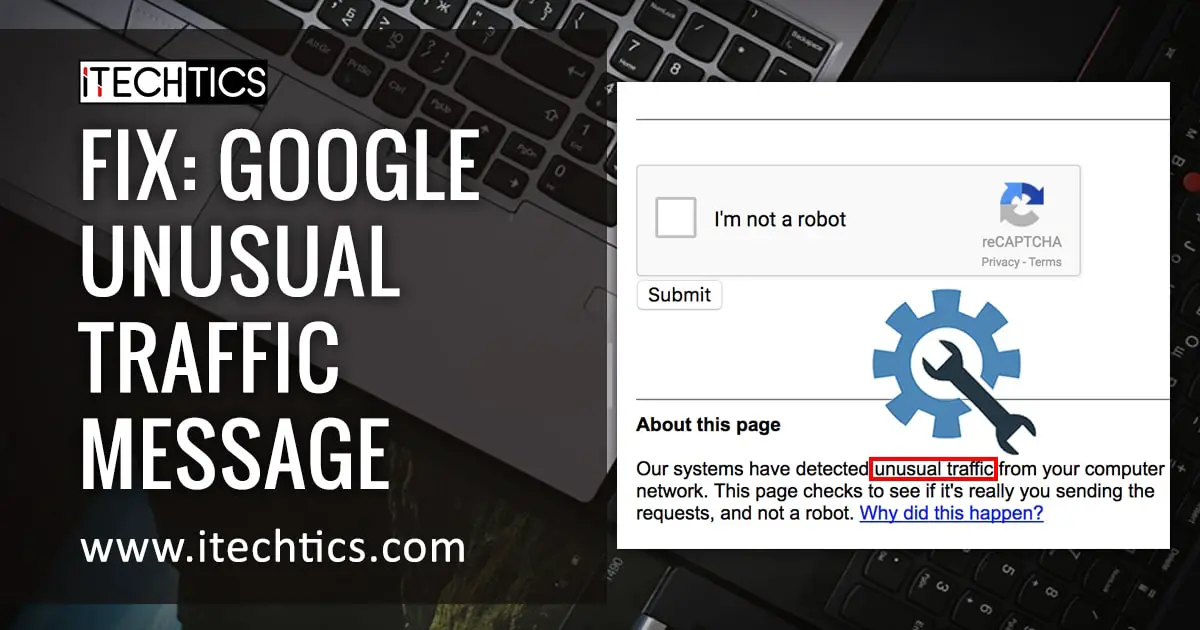

It started with a simple click. A request for information. Instead of the story, I got a wall. A sterile, gray box from Google informing me that my network traffic looked “unusual,” that I might be a robot, or worse, a threat. The URL was dead on arrival, blocked by an algorithmic gatekeeper deciding I wasn’t human enough to see the truth. In March 2026, this isn’t just a technical glitch; it is a metaphor for the state of information itself. We are building systems so complex, so opaque, that even the journalists tasked with monitoring them are being locked out by the very infrastructure we rely on.

As the Senior News Editor at Archyde, I spend my days peeling back layers of corporate secrecy. But when the digital door slams shut via a CAPTCHA challenge, it signals a broader fracture. We are navigating an era where access to reality is mediated by algorithms that detect “violations” without explaining them. This barrier reminded me of the other black boxes we face daily—the automated trading loops shaping our economy and the hidden service charges draining tenants’ wallets. The inability to access a single video link is minor compared to the systemic opacity gripping our financial and housing sectors.

The Algorithmic Gatekeepers

When Google detects requests that appear to violate its Terms of Service, it silently blocks the path. The error page I encountered cited “malicious software” or “automated requests” as potential culprits. But what happens when the automation isn’t coming from a bot, but from the institutions we trust? The irony is palpable. We worry about scripts sending requests too quickly, yet we allow high-frequency trading firms to send millions of financial requests per second without blinking.


Consider the recent developments in quantitative trading. While I was blocked from viewing specific media, my investigation into the sector reveals a surge in fully integrated AI loops. The article “James Carter Leads BC Capital in Building a Fully Integrated AI Quantitative Trading Loop” highlights a shift toward systems that operate beyond human speed. These aren’t just tools; they are ecosystems. When these systems interact, they create market conditions that no single human regulator can observe in real time. The “unusual traffic” Google fears is child’s play compared to the data floods moving through Wall Street’s backend.

Ethical AI in a Closed Room

The push for transparency isn’t just about accessing a website; it’s about understanding the logic behind the decisions that affect our livelihoods. There is a growing movement to inject ethics into these closed loops. In April 2025, a press release titled “Reinventing Wall Street with Ethical AI and Financial Inclusion” set out that mission. The goal is to ensure that artificial intelligence serves the public good rather than just optimizing profit margins in the dark.

“In the ever-evolving landscape of fintech and artificial intelligence, the priority must remain on financial inclusion and ethical standards that protect the consumer,” the initiative stated, emphasizing the need for human oversight in automated decision-making.

This sentiment is crucial. If we allow AI to dictate credit scores, trading volumes, or even news distribution without a clear audit trail, we risk creating a society where the rules are written by code no one can read. The Google block I experienced is a microcosm of this issue: a decision was made about my access, but the reasoning was obscured behind a generic warning about IP addresses and network connections.

The Housing Transparency Crisis

The lack of transparency extends beyond tech and finance into the bricks and mortar of our communities. My work specializing in UK Housing Law has uncovered similar patterns of obfuscation. Housing associations, tasked with providing affordable homes, often operate like black boxes regarding service charges. Tenants receive bills they cannot verify, managed by entities that resist scrutiny.

Just as Google flags “unusual traffic,” housing managers often flag tenant inquiries as troublesome. The Service Charge Scandal is not merely about money; it is about power. When residents cannot see the breakdown of costs, they are subject to the same arbitrary authority that blocked my research today. Whether it is a housing association in Peterborough or a trading firm in New York, the mechanism is the same: concentrate information at the top, and leave the user guessing.

Reclaiming the Right to Know

So, how do we move forward when the doors are locked? We must demand canonical access. In journalism, we bypass RSS feeds and broken links to find the original publisher’s direct URL. In finance, we must demand auditable AI. In housing, we need legislated openness. The technology exists to make these systems transparent; the will is what is missing.

My connection was restored after the block expired, but the lesson remains. We cannot accept “unusual traffic” warnings as a substitute for explanation. Whether you are a tenant reviewing a service charge statement or an investor looking at an AI trading loop, the question must always be: Who controls the switch? If the answer is hidden behind a terms of service agreement or a proprietary algorithm, then the system is failing you.

At Archyde, we commit to keeping the lines open. We will not let the algorithms decide what story gets told. If a link is broken, we find the source. If a system is opaque, we shine a light. The truth is not a privilege granted by a network administrator; it is a right. And today, despite the gray boxes and the IP address mismatches, we continue to knock on the door until it opens.

What about you? Have you encountered digital walls that stopped your research or your daily work? Share your experience. The more we talk about these barriers, the harder they become to maintain.