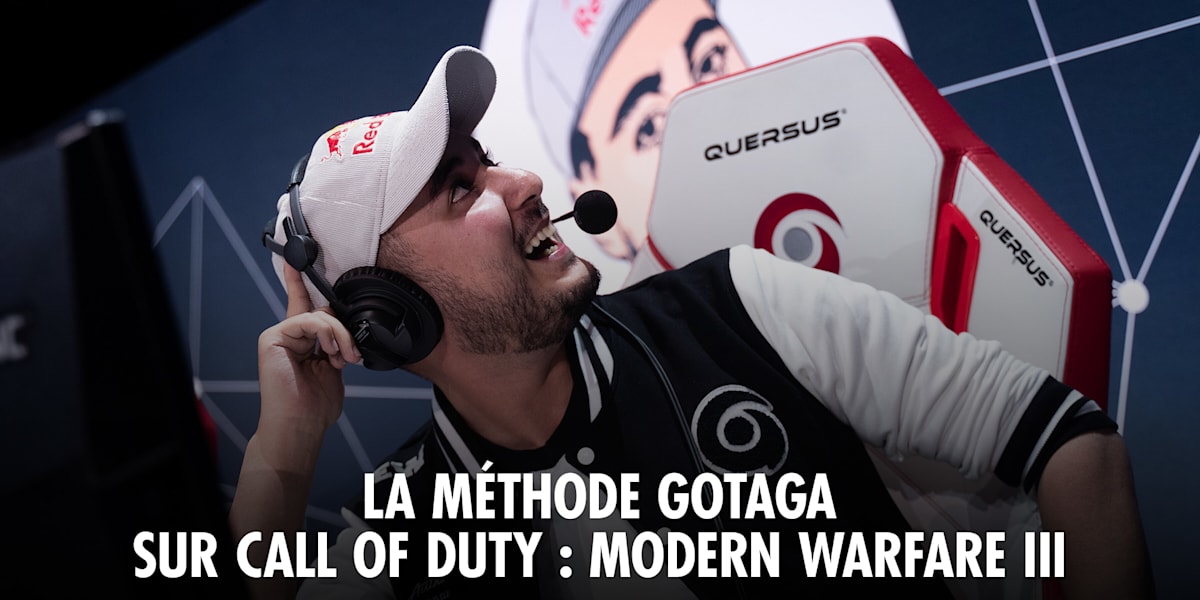

French creator Gotaga triggered a metadata volatility event on YouTube this week with a clickbait title, stress-testing 2026’s AI moderation pipelines. This incident highlights critical latency gaps in agentic deployment models and reveals how elite content creators exploit algorithmic patience. Platform security architectures must now prioritize real-time semantic analysis over reactive human review to mitigate engagement farming risks.

The Algorithmic Provocation as a Security Vector

When a top-tier influencer like Gotaga deploys a title such as “Je stream en slip ???” (roughly, “I'm streaming in my underwear ???”), it is not merely content; it is a stress test for the platform’s content safety classifiers. In the 2026 landscape, we are no longer dealing with simple keyword filtering. We are facing a form of adversarial machine learning in which creators act like ethical hacking participants probing the recommendation engine. The title itself serves as a probe, measuring the time-to-action of automated moderation agents. If the system flags the content too aggressively, reach is throttled. If it waits too long, the engagement spike solidifies before mitigation. This is the “Strategic Patience” described in recent security analyses, where actors, whether malicious hackers or attention economists, wait for the perfect window to exploit system latency.

The infrastructure behind these streams relies on complex Agentic Deployment models. Unlike traditional static servers, modern content delivery networks (CDNs) now utilize autonomous agents to manage load balancing and policy enforcement simultaneously. However, as industry veteran Jason Lemkin noted recently, the gap between capability and execution is widening.

“To Thrive today, you have to become an Agentic Deployment Expert. But So, So Few Actually Are.”

This quote, originally regarding SaaS infrastructure, applies equally to content platforms. YouTube’s backend must deploy moderation agents with the same precision as a cloud architect deploying security patches. When a viral event occurs, the failure point is rarely bandwidth; it is the decision latency of the AI governing the stream.

Backend Architecture and Traffic Spikes

From a network engineering perspective, a viral stream launch resembles a distributed denial-of-service (DDoS) attack, albeit a legitimate one. The sudden influx of concurrent connections requires dynamic scaling that traditional auto-scaling groups often miss. In 2026, we expect security analytics platforms, similar to those sought by Netskope for their distinguished engineer roles, to be integrated directly into consumer video platforms. The architecture must distinguish between a genuine traffic surge and a coordinated botnet amplification.
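One way to picture the surge-versus-botnet distinction is a source-concentration check. The sketch below is illustrative only: the function names, the `net-…` source identifiers, and the 20% threshold are assumptions made for this example, not a description of any platform's actual detection logic, which would combine many more signals.

```python
from collections import Counter


def top_source_share(source_ids: list[str]) -> float:
    """Fraction of connections that come from the single busiest source."""
    if not source_ids:
        return 0.0
    busiest_count = Counter(source_ids).most_common(1)[0][1]
    return busiest_count / len(source_ids)


def looks_coordinated(
    source_ids: list[str],
    max_share: float = 0.2,      # hypothetical threshold, not a published figure
    min_connections: int = 100,  # ignore samples too small to judge
) -> bool:
    """Flag a surge when one source accounts for an outsized share of traffic."""
    if len(source_ids) < min_connections:
        return False
    return top_source_share(source_ids) > max_share


# Toy data: 1000 connections from 1000 distinct sources (organic-looking),
# versus 600 of 1000 connections coming from a single source.
organic = [f"net-{i}" for i in range(1000)]
amplified = ["net-0"] * 600 + [f"net-{i}" for i in range(1, 401)]

print(looks_coordinated(organic))    # False
print(looks_coordinated(amplified))  # True
```

A genuine viral launch tends to spread across many independent sources, which is why concentration is a plausible first signal, though a well-distributed botnet would evade a check this simple.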

Consider the data pipeline. The video stream is encoded, likely using AV1 or H.266 VVC codecs to maximize bandwidth efficiency. Simultaneously, the metadata, including the provocative title, is parsed by natural language processing (NLP) models. These models must understand context. “Slip” in French translates to underwear, but in a technical context, it could mean something else. The ambiguity is the exploit. If the NLP model lacks cultural nuance, it produces either false positives (censoring legitimate content) or false negatives (allowing policy violations). This is where end-to-end encryption of user data conflicts with the need for deep packet inspection to ensure safety. The tension between privacy and moderation is the defining conflict of this era.

The 30-Second Verdict on Platform Stability

- Latency Risk: AI moderation agents must operate under 200ms to prevent viral lock-in of policy-violating content.

- Scaling Logic: Agentic systems must predict traffic spikes based on creator velocity, not just historical data.

- Human-in-the-Loop: Despite AI advances, principal cybersecurity engineers remain essential for edge-case adjudication.
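The first two points above can be sketched in a few lines. This is a minimal illustration under stated assumptions: the stage names, the sample viewer counts, and the per-replica capacity are invented for the example, and the forecast is a plain linear extrapolation of recent growth, not a claim about how any platform predicts load. Only the 200 ms figure comes from the list itself.

```python
import math

LATENCY_BUDGET_MS = 200.0  # budget quoted in the list above


def within_budget(stage_latencies_ms: dict[str, float],
                  budget_ms: float = LATENCY_BUDGET_MS) -> bool:
    """True if the summed per-stage latency fits inside the budget."""
    return sum(stage_latencies_ms.values()) <= budget_ms


def forecast_viewers(samples: list[tuple[float, int]],
                     horizon_s: float,
                     historical_peak: int) -> int:
    """Project concurrent viewers from the most recent growth rate.

    samples: (timestamp_seconds, concurrent_viewers) pairs, oldest first.
    The result never drops below the historical peak, so velocity can only
    raise the forecast above what history alone would suggest.
    """
    if len(samples) < 2:
        return historical_peak
    (t0, v0), (t1, v1) = samples[-2], samples[-1]
    if t1 <= t0:
        raise ValueError("timestamps must be strictly increasing")
    velocity = (v1 - v0) / (t1 - t0)  # viewers gained per second
    projected = v1 + max(velocity, 0.0) * horizon_s
    return max(historical_peak, math.ceil(projected))


def replicas_needed(viewers: int, viewers_per_replica: int) -> int:
    """Replica count required to serve the forecast, with a floor of one."""
    return max(1, math.ceil(viewers / viewers_per_replica))


# Hypothetical stream: 10k viewers at t=0s, 40k at t=60s, 50k historical peak.
forecast = forecast_viewers([(0.0, 10_000), (60.0, 40_000)],
                            horizon_s=120.0, historical_peak=50_000)
print(forecast)                          # 100000
print(replicas_needed(forecast, 5_000))  # 20
print(within_budget({"parse": 40.0, "classify": 120.0, "policy": 30.0}))  # True
```

In this toy case the historical peak alone would have provisioned for 50,000 viewers, while the velocity-based projection asks for twice that two minutes ahead.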

Will AI Replace the Moderation Engineer?

The industry is currently debating whether senior individual contributors in security engineering will be displaced by autonomous AI systems. Job tracking data suggests that while entry-level monitoring is being automated, principal-level roles are evolving. The question “Will AI Replace Principal Cybersecurity Engineer Jobs?” is relevant here. In the context of content moderation, AI can handle the 99% of cases that are clear-cut. However, the remaining 1% (the nuanced, culturally specific, or adversarial cases like Gotaga’s metadata probe) requires human intuition. The AI can flag the anomaly, but the engineer must define the threshold.
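The division of labor described here, where the model scores and the engineer sets the thresholds, can be sketched as a three-way router. Everything in this example is hypothetical: the `Route` and `Thresholds` names, the score scale of 0 to 1, and the threshold values are assumptions chosen for illustration.

```python
from dataclasses import dataclass
from enum import Enum


class Route(Enum):
    ALLOW = "allow"
    HUMAN_REVIEW = "human_review"
    RESTRICT = "restrict"


@dataclass(frozen=True)
class Thresholds:
    """Engineer-defined cut-offs on a model risk score in [0, 1]."""
    allow_below: float
    restrict_above: float

    def __post_init__(self) -> None:
        if not 0.0 <= self.allow_below <= self.restrict_above <= 1.0:
            raise ValueError("need 0 <= allow_below <= restrict_above <= 1")


def route(score: float, thresholds: Thresholds) -> Route:
    """Automate the clear-cut ends; send the ambiguous middle to a human."""
    if not 0.0 <= score <= 1.0:
        raise ValueError("score must be within [0, 1]")
    if score < thresholds.allow_below:
        return Route.ALLOW
    if score > thresholds.restrict_above:
        return Route.RESTRICT
    return Route.HUMAN_REVIEW


policy = Thresholds(allow_below=0.2, restrict_above=0.9)  # illustrative values
print(route(0.05, policy))  # Route.ALLOW
print(route(0.50, policy))  # Route.HUMAN_REVIEW
print(route(0.95, policy))  # Route.RESTRICT
```

Widening the gap between the two thresholds sends more cases to human reviewers; narrowing it automates more decisions at the price of more errors on ambiguous titles.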

We are seeing a shift toward Zero Trust architectures within content platforms. Every stream is treated as untrusted until verified by multiple heuristic models. This requires significant compute power, often leveraging NPUs (Neural Processing Units) on the edge to reduce cloud dependency. The cost of running these models is non-trivial. If a platform spends more on moderation compute than it earns from the ad revenue of the stream, the economic model breaks. This is the hidden cost of viral clickbait.
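A default-deny check and the break-even arithmetic behind this paragraph might look like the following. This is a sketch under assumptions: the stream fields, the two example checks, and all monetary figures are hypothetical and chosen only to make the logic concrete.

```python
from typing import Callable, Sequence

Check = Callable[[dict], bool]


def is_trusted(stream: dict, checks: Sequence[Check]) -> bool:
    """Zero Trust default: untrusted unless every check passes.

    An empty list of checks yields False, so nothing is trusted by omission.
    """
    return bool(checks) and all(check(stream) for check in checks)


def moderation_margin(ad_revenue: float,
                      compute_cost_per_hour: float,
                      hours: float) -> float:
    """Ad revenue left after paying for moderation compute (negative = loss)."""
    return ad_revenue - compute_cost_per_hour * hours


# Hypothetical heuristic checks over hypothetical stream fields.
def owner_verified(stream: dict) -> bool:
    return stream.get("owner_verified", False)


def title_score_ok(stream: dict) -> bool:
    return stream.get("title_risk_score", 1.0) < 0.5


checks = [owner_verified, title_score_ok]
stream = {"owner_verified": True, "title_risk_score": 0.7}

print(is_trusted(stream, checks))          # False: title check fails
print(moderation_margin(120.0, 15.0, 4.0)) # 60.0
print(moderation_margin(40.0, 15.0, 4.0))  # -20.0
```

The second margin is the case the paragraph warns about: four hours of moderation compute cost more than the stream earned.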

Enterprise Implications of Consumer Tech Wars

What happens on YouTube today dictates enterprise security policy tomorrow. The tools used to moderate a streamer’s title are the same tools enterprises use to prevent data exfiltration via shadow IT. If a consumer platform cannot effectively manage agentic deployment for content safety, how can an enterprise trust them with sensitive data? The convergence of open-source security tools and proprietary AI models is creating a new standard. Companies like Hewlett Packard Enterprise are already hiring distinguished technologists to bridge HPC and AI security, recognizing that high-performance computing is required to process these massive data streams in real-time.

The “Elite Hacker” persona is no longer limited to cybersecurity threats; it extends to the creator economy. These creators understand the system’s logic better than the engineers who built it. They exploit the recommendation algorithm’s reward functions. To counter this, platforms must adopt a defensive posture that assumes adversarial intent from all inputs. In other words, they must move away from reactive moderation toward predictive containment. The technology exists, but the deployment strategy remains the bottleneck.

The incident serves as a reminder that in 2026, content is code. A title is a function call. A view is a request. And the platform is the operating system. If the OS cannot handle the function call without crashing or compromising security, the architecture needs a refactor. We are not just watching videos; we are witnessing the live debugging of the social web’s underlying kernel.