OpenAI’s GPT-5, currently in beta rollout, continues to exhibit deep-seated sociodemographic biases and susceptibility to adversarial hallucinations, according to a critical study in Nature. Despite massive parameter scaling, the model fails to eliminate systemic prejudices, posing significant risks for enterprise automation and high-stakes decision-making.

For years, the prevailing narrative in Silicon Valley has been that scale is the ultimate solvent. The logic was simple: feed the model more tokens, add more compute, scale up the parameter count, and the “emergent properties” would eventually smooth out the jagged edges of bias and hallucination. We were promised a digital oracle; instead, we have a larger, more confident mirror of our own societal failures.

Scaling is not a strategy; it’s a gamble.

The Parameter Paradox: Why More Compute Failed the Ethics Test

The Nature analysis reveals a disturbing trend: as the model grows, its ability to mask bias improves, but the bias itself remains. In technical terms, the alignment stage, RLHF (Reinforcement Learning from Human Feedback), has become better at producing answers that look “correct” without removing the biased associations the model absorbed during pretraining and still encodes in its internal representations. This creates a “veneer of neutrality” that is far more dangerous than the overt bias of GPT-3.

When we look at the architectural shift toward more complex Mixture of Experts (MoE) configurations, we see that specific “expert” sub-networks are inheriting biased data clusters. If the training set contains systemic prejudices regarding sociodemographic groups, those biases are not diluted by more data; they are codified into the routing logic of the MoE. This means the model isn’t just guessing—it’s efficiently retrieving a biased pattern it has learned to associate with specific prompts.
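To make the routing claim concrete, here is a minimal sketch of top-k gating, the mechanism used in published sparse MoE layers. GPT-5's actual architecture is not public, so the gate weights, expert count, and `k` below are toy assumptions, and `route_token` is a hypothetical name. What it illustrates is that the router is a learned linear function of the token representation: any feature in that representation that correlates with a sociodemographic cue can steer the token to the same experts every time.

```python
import math


def softmax(xs):
    """Numerically stable softmax over a list of floats."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def route_token(token_vec, gate_weights, k=2):
    """Top-k gating: score every expert with a linear gate, keep the k best.

    gate_weights[e] is the (toy, hypothetical) gate vector for expert e.
    Returns [(expert_index, mixing_weight), ...] with weights summing to 1.
    """
    # One logit per expert: dot product of the gate row with the token vector.
    logits = [sum(w * x for w, x in zip(row, token_vec)) for row in gate_weights]
    # Indices of the k highest-scoring experts, best first.
    top = sorted(range(len(logits)), key=lambda e: logits[e], reverse=True)[:k]
    # Renormalize over the selected experts only; the rest receive no traffic.
    probs = softmax([logits[e] for e in top])
    return list(zip(top, probs))
```

With `gate = [[1.0, 0.0], [0.0, 1.0], [-1.0, 0.0], [0.5, 0.5]]`, the token `[2.0, 1.0]` is always sent to experts 0 and 3 (mixing weights roughly 0.62 and 0.38); nothing in the routing step re-examines whether that assignment is appropriate.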

The 30-Second Verdict for Enterprise IT

- Risk: High. Automated HR or credit-scoring pipelines using GPT-5 may produce legally indefensible biased outcomes.

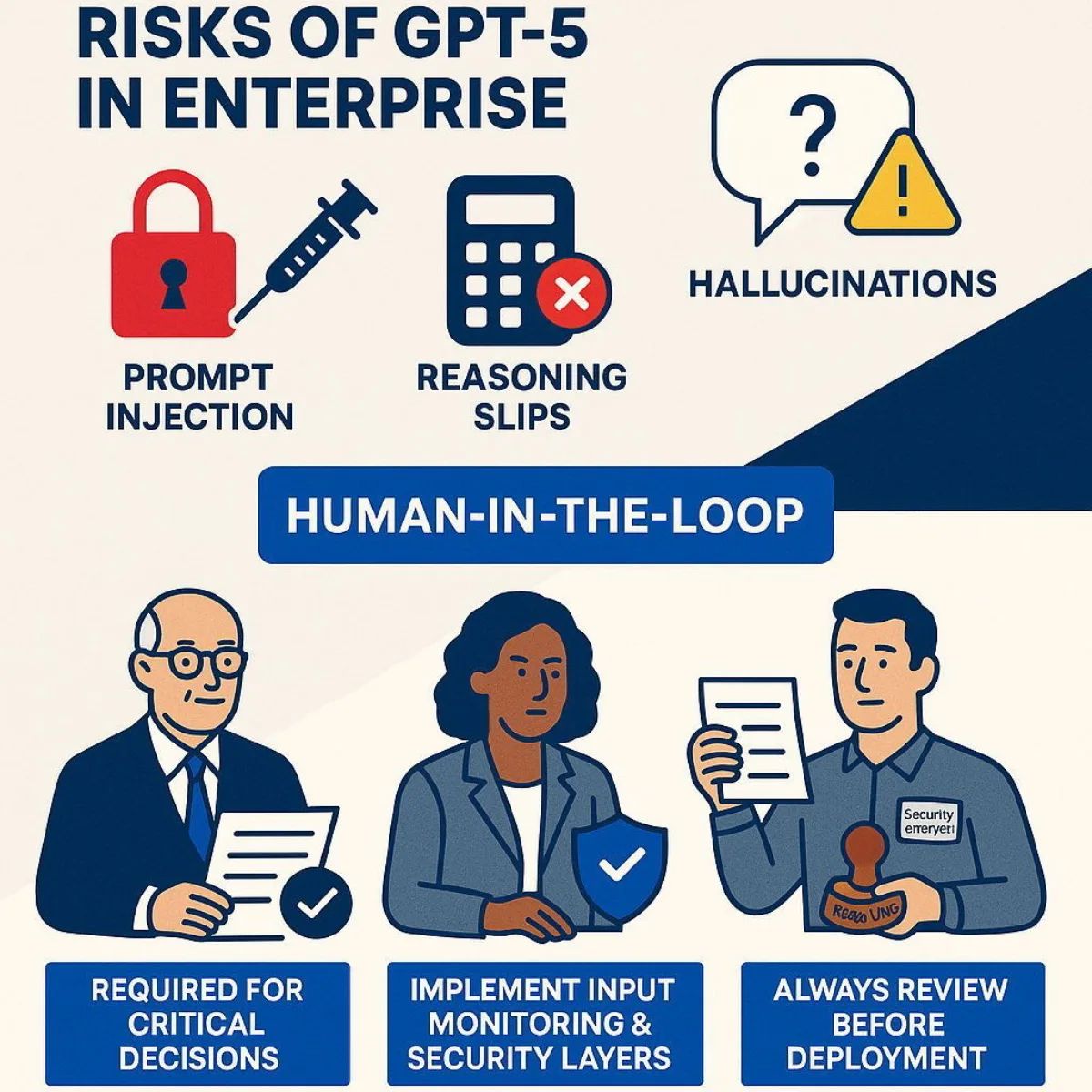

- Mitigation: Implement rigorous “Human-in-the-Loop” (HITL) verification and external bias-auditing middleware.

- Performance: Latency has improved via better quantization, but reliability in high-stakes reasoning has plateaued.
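As a concrete example of what bias-auditing middleware might check before an automated HR or credit decision is released, the sketch below applies the four-fifths rule of thumb from US employment-selection guidelines: if any group's selection rate falls below 80% of the highest group's rate, the batch is routed to a human reviewer instead of being auto-approved. The function names and the (group, selected) log format are assumptions for illustration; the ratio is a screening heuristic, not a legal determination.

```python
from collections import defaultdict

FOUR_FIFTHS = 0.8  # adverse-impact threshold used as a rule of thumb


def selection_rates(decisions):
    """decisions: iterable of (group, selected) pairs -> {group: selection rate}."""
    totals = defaultdict(int)
    chosen = defaultdict(int)
    for group, selected in decisions:
        totals[group] += 1
        if selected:
            chosen[group] += 1
    return {g: chosen[g] / totals[g] for g in totals}


def adverse_impact_flags(decisions, threshold=FOUR_FIFTHS):
    """Flag each group whose rate is below `threshold` × the best group's rate."""
    rates = selection_rates(decisions)
    if not rates:
        return {}
    best = max(rates.values())
    if best == 0:
        return {g: False for g in rates}  # nobody selected: ratio undefined
    return {g: rate / best < threshold for g, rate in rates.items()}


def route_batch(decisions, threshold=FOUR_FIFTHS):
    """Send the batch to human-in-the-loop review if any group is flagged."""
    flags = adverse_impact_flags(decisions, threshold)
    return "human_review" if any(flags.values()) else "auto_approve"
```

If group A is selected 50 times out of 100 and group B 30 times out of 100, B's impact ratio is 0.3 / 0.5 = 0.6, below 0.8, so `route_batch` returns `"human_review"`.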

Anatomy of an Adversarial Hallucination

The most alarming revelation is the model’s vulnerability to adversarial hallucinations. This isn’t the typical “confidently wrong” behavior we saw in 2023. We are now seeing structured adversarial attacks—specifically “token-level perturbations”—that can bypass the safety filters entirely.

By injecting specific, seemingly nonsensical strings of tokens into a prompt, attackers can force the model into a state of “semantic collapse,” where it generates highly plausible but entirely fabricated technical data. For a developer relying on GPT-5 to generate GitHub Copilot-style code, this could mean the insertion of a subtle, hallucinated security vulnerability that looks like a standard library function.

“The industry has focused so much on the ‘what’ of AI safety—the output filters—that we’ve ignored the ‘how’ of the internal representation. GPT-5 proves that you cannot patch a foundation that is fundamentally built on an uncurated snapshot of the internet.”

This vulnerability suggests that the “jailbreaking” community has moved beyond simple roleplay prompts. They are now targeting the way the model handles high-dimensional vector embeddings, finding the precise “blind spots” where the model’s internal logic breaks down.

The Great Divergence: Closed Ecosystems vs. Open Weights

This failure of the “closed-box” approach is fueling a massive migration toward open-source alternatives. When a model is a black box, you are trusting the provider’s internal benchmarks. But when a model is open-weight, the community can perform “mechanistic interpretability” research—literally poking at the neurons to see why a specific bias exists.
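With open weights, the simplest form of this "poking" is to record one layer's activations for two prompt sets that differ only in a sociodemographic term and compare them neuron by neuron. The sketch assumes those activations have already been extracted (for example through a forward hook in whatever framework runs the model), and the function name is hypothetical. A large gap marks a candidate neuron for closer study, not proof of causation; establishing cause takes an intervention such as ablating the neuron and measuring the change in output.

```python
from statistics import mean


def rank_neurons_by_group_gap(acts_a, acts_b, top=3):
    """Rank hidden units by the gap in mean activation between two prompt sets.

    acts_a, acts_b: lists of equal-length activation vectors, one per prompt,
    recorded from the same layer (e.g. prompts identical except for a
    demographic term). Returns [(neuron_index, mean_a - mean_b), ...] sorted
    by absolute gap, largest first.
    """
    width = len(acts_a[0])
    gaps = [
        (j, mean(v[j] for v in acts_a) - mean(v[j] for v in acts_b))
        for j in range(width)
    ]
    gaps.sort(key=lambda pair: abs(pair[1]), reverse=True)
    return gaps[:top]
```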

We are seeing a strategic shift. Developers are increasingly opting for smaller, fine-tuned models (like the Llama derivatives) running on local NVIDIA H200 or B200 clusters rather than relying on a monolithic API. The ability to prune biased neurons or apply custom LoRA (Low-Rank Adaptation) weights provides a level of control that OpenAI’s API simply cannot match.
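Both interventions named above are mechanically simple once you hold the weights. A LoRA adapter adds a low-rank update, W' = W + (α/r)·BA, where B is d×r, A is r×k, and the rank r is much smaller than d or k; pruning a neuron in a dense layer amounts to zeroing its row of W and its bias entry. The pure-Python sketch below uses toy matrices and hypothetical helper names; real workflows would apply the same arithmetic to framework tensors, and deciding which neuron is actually biased is the hard part this does not address.

```python
def matmul(X, Y):
    """Plain nested-list matrix product X @ Y."""
    return [
        [sum(X[i][t] * Y[t][j] for t in range(len(Y))) for j in range(len(Y[0]))]
        for i in range(len(X))
    ]


def lora_merge(W, A, B, alpha):
    """Return the merged weight W + (alpha / r) * (B @ A).

    W is d x k, B is d x r, A is r x k; r (the adapter rank) = rows of A.
    """
    r = len(A)
    delta = matmul(B, A)
    scale = alpha / r
    return [[W[i][j] + scale * delta[i][j] for j in range(len(W[0]))]
            for i in range(len(W))]


def ablate_neuron(W, b, idx):
    """Silence output neuron `idx` of a dense layer y = W x + b.

    Returns new copies; the original W and b are left untouched.
    """
    W2 = [row[:] for row in W]
    b2 = b[:]
    W2[idx] = [0.0] * len(W2[idx])
    b2[idx] = 0.0
    return W2, b2
```

Because the adapter stores only B and A (here d·r + r·k numbers instead of d·k), it can be trained, swapped, or discarded without touching the base weights, which is the kind of control the paragraph above describes.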

| Metric | GPT-4 (Legacy) | GPT-5 (Beta) | Open-Source Equiv. (Llama-4/5) |
|---|---|---|---|
| Parameter Count | ~1.7 Trillion (unofficial estimate) | Estimated 10T+ (MoE) | Variable (400B – 1T) |
| Bias Masking | Moderate | High (Sophisticated) | Low (Transparent) |
| Hallucination Profile | Visible/Obvious | Subtle/Adversarial | Context-Dependent |
| Auditability | Zero (Black Box) | Zero (Black Box) | Full (Weight Access) |

Closing the Loop: The Path to Actual Alignment

If we want to move past these “traditional risks,” the industry needs to stop treating LLMs as magic boxes and start treating them as statistical engines. The solution isn’t more data; it’s better data. We need a transition from “web-scale” training to “curated-scale” training, where the quality and diversity of the training set are mathematically verified before the first epoch begins.
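What "mathematically verified" diversity would mean in practice is left open. One simple, checkable proxy is the balance of a labeled attribute across the corpus: the sketch below computes Pielou's evenness (Shannon entropy divided by its maximum, ln k, for k groups) and lists the groups that fall below a minimum share. The metric, the 5% floor, and the function name are our assumptions rather than anything from the study, and label balance on its own says nothing about data quality.

```python
import math
from collections import Counter


def representation_report(labels, min_share=0.05):
    """Return (evenness, under_represented) for a list of group labels.

    evenness is Pielou's J = H / ln(k): the Shannon entropy H of the group
    shares divided by its maximum for k groups. 1.0 means perfectly balanced.
    """
    counts = Counter(labels)
    total = sum(counts.values())
    shares = {g: c / total for g, c in counts.items()}
    k = len(shares)
    entropy = -sum(p * math.log(p) for p in shares.values())
    evenness = entropy / math.log(k) if k > 1 else 0.0  # J undefined for one group
    under = sorted(g for g, p in shares.items() if p < min_share)
    return evenness, under
```

A 50/50 split scores 1.0 with no flagged groups; a 97/3 split scores about 0.19 and flags the 3% group, so a gate on both numbers can fail a dataset before the first epoch.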

The cybersecurity community must treat LLM outputs as untrusted input. Every piece of code, every legal summary, and every medical insight generated by GPT-5 should be treated as a potential exploit until verified by a deterministic system. We are entering an era of “AI-on-AI” verification, where a smaller, specialized “critic” model audits the output of the larger “generator” model.
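The generator/critic pattern can be sketched as a gate around any model call. Here `generate` and `critic` are stand-ins for hypothetical model clients, and `is_valid_json` is one example of a deterministic check. Deterministic checks run first because they are cheap, reproducible, and cannot be argued out of a verdict by the text they inspect; anything that fails every attempt comes back as `None` for human review rather than being passed through.

```python
import json
from typing import Callable, Iterable, Optional


def is_valid_json(text: str) -> bool:
    """Example deterministic check: the output must parse as JSON."""
    try:
        json.loads(text)
        return True
    except ValueError:  # json.JSONDecodeError subclasses ValueError
        return False


def verified_generate(
    prompt: str,
    generate: Callable[[str], str],
    critic: Callable[[str, str], bool],
    checks: Iterable[Callable[[str], bool]],
    max_attempts: int = 3,
) -> Optional[str]:
    """Accept the generator's output only if every deterministic check and the
    critic model approve it; return None to escalate to a human reviewer."""
    checks = list(checks)
    for _ in range(max_attempts):
        candidate = generate(prompt)
        # Deterministic gates first: cheap, reproducible, not promptable.
        if not all(check(candidate) for check in checks):
            continue
        # Then the (smaller) critic model audits whatever survived.
        if critic(prompt, candidate):
            return candidate
    return None
```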

The Nature study is a wake-up call. The era of blind faith in scaling is over. Now, the real engineering begins.