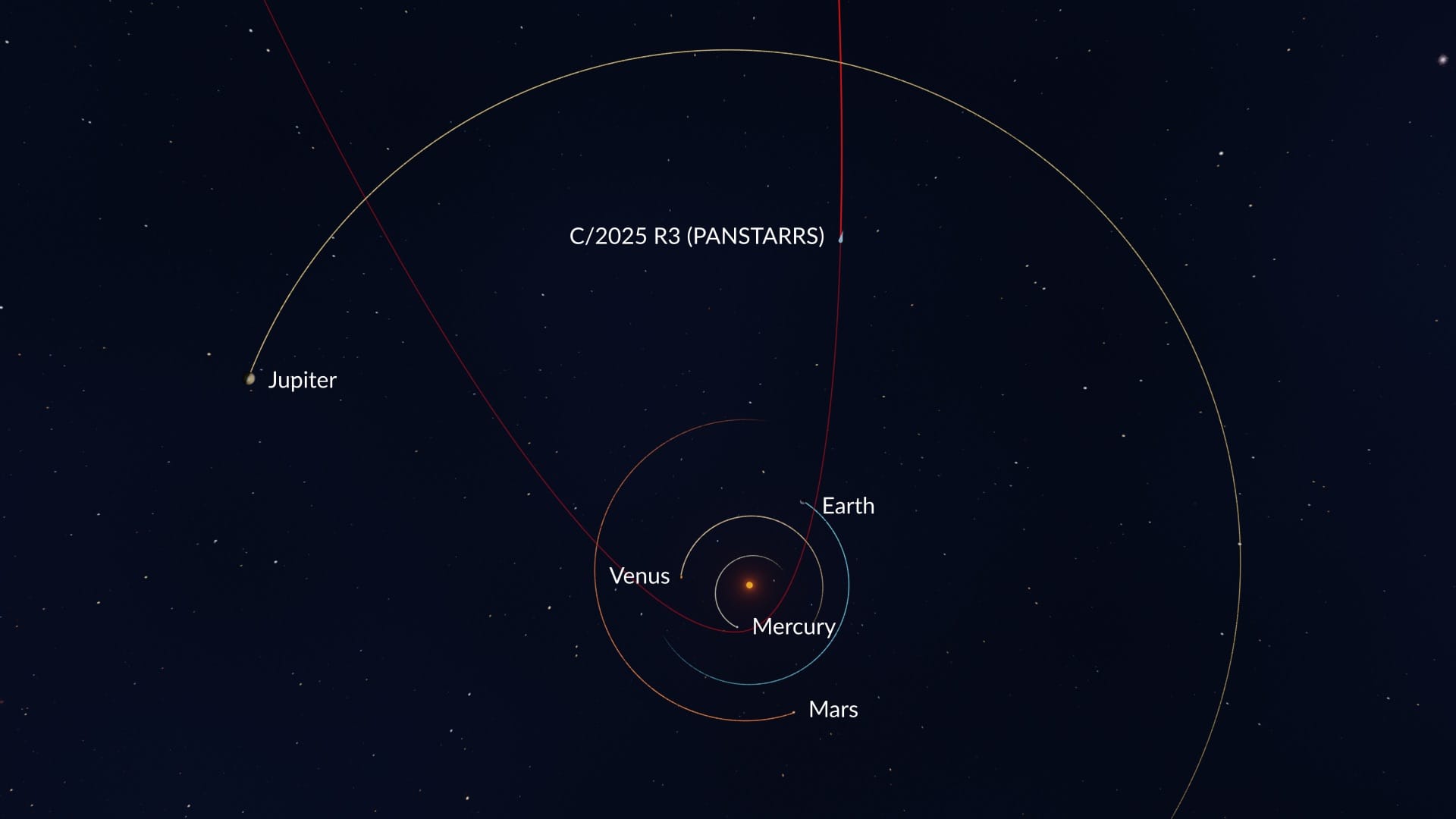

Comet Pan-STARRS becomes visible this Monday, April 13, 2026, positioned near a crescent moon. Visible to the naked eye in dark-sky locations, this rare visitor—seen for the first time in 170,000 years—is tracked via the Pan-STARRS automated survey system using high-cadence, wide-field digital imaging and orbital telemetry.

For the casual observer, this is a “look up and wonder” moment. For those of us obsessed with the stack, it is a demonstration of the sheer computational power required to find a needle in a galactic haystack. The discovery of Pan-STARRS wasn’t a stroke of luck by a lone astronomer with a telescope; it was the result of a massive, automated data-ingestion pipeline designed to scan the sky and flag “transients”: objects that move or change brightness against a static background of stars.

We are witnessing the convergence of big data and celestial mechanics. The Pan-STARRS (Panoramic Survey Telescope and Rapid Response System) isn’t just a mirror; it is a high-throughput imaging engine that generates terabytes of raw data per night. The “magic” happens in the differencing algorithms: the system takes an image, subtracts a reference image of the same sky area, and isolates the delta. If that delta moves in a consistent orbital arc, the system flags it. This is essentially a real-time anomaly detection problem scaled to the size of the observable universe.
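The subtract-and-isolate step can be sketched in a few lines. This is a toy illustration, not the survey's actual pipeline: the function names, the 4x4 frames, and the threshold are all made up for the example, and it uses plain Python lists to stay dependency-free. Real pipelines also align the frames and match their point-spread functions before subtracting, which this sketch skips.

```python
def difference_image(science, reference):
    """Subtract a reference frame from a new frame, pixel by pixel."""
    return [[s - r for s, r in zip(srow, rrow)]
            for srow, rrow in zip(science, reference)]


def find_transients(diff, threshold):
    """Return (row, col) positions where the residual exceeds the threshold."""
    return [(y, x)
            for y, row in enumerate(diff)
            for x, value in enumerate(row)
            if value > threshold]


# Toy 4x4 frames (hypothetical pixel counts): the same star field twice,
# with one new source added to the second frame at row 1, column 2.
reference = [
    [10, 10, 10, 10],
    [10, 90, 10, 10],
    [10, 10, 10, 10],
    [10, 10, 10, 80],
]
science = [row[:] for row in reference]
science[1][2] += 50  # the "new" object

detections = find_transients(difference_image(science, reference), threshold=25)
print(detections)  # [(1, 2)]
```

The static stars cancel out in the subtraction, so only the new source survives the threshold. Linking such detections across several nights into a consistent arc is a separate step that this example does not attempt.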

The Hardware Stack: From CCDs to sCMOS

If you are planning to capture this event on Monday, you aren’t just fighting light pollution; you are fighting the physics of your sensor. For decades, the gold standard for this kind of work was the Charge-Coupled Device (CCD), prized for its linear response and high quantum efficiency. However, the industry has shifted toward sCMOS (scientific Complementary Metal-Oxide-Semiconductor) sensors.

The shift is driven by read noise and readout speed. CCDs are excellent, but their serial readout is slow. sCMOS parallelizes the readout, enabling high-frame-rate imaging without sacrificing the dynamic range needed to capture a faint comet tail against a dark sky. When you’re tracking a comet near a crescent moon, the moon acts as a massive source of stray light, flooding your sensor with photons that can wash out the subtle signal of the comet’s coma.
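To see why moonlight and read noise matter, here is a rough sketch using the standard "CCD equation" for signal-to-noise. The function name and every number below are illustrative assumptions, not measurements of this comet or of any particular camera.

```python
import math


def snr(source_rate, sky_rate, dark_rate, read_noise, exposure_s):
    """Approximate SNR for one measurement.

    Rates are in electrons per second; read_noise is in electrons (RMS).
    Shot noise from source, sky, and dark current adds in quadrature
    with the read noise.
    """
    signal = source_rate * exposure_s
    variance = (source_rate + sky_rate + dark_rate) * exposure_s + read_noise ** 2
    return signal / math.sqrt(variance)


# Hypothetical values: same comet, same camera, different sky background.
dark_sky = snr(source_rate=20, sky_rate=5, dark_rate=0.1, read_noise=2, exposure_s=5)
moonlit = snr(source_rate=20, sky_rate=200, dark_rate=0.1, read_noise=2, exposure_s=5)

print(f"dark sky SNR: {dark_sky:.1f}, moonlit SNR: {moonlit:.1f}")
```

The comet's signal is identical in both cases; only the sky term in the denominator grows, which is why a bright background hurts even when the sensor itself is well behaved.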

To get a clean shot, you need to manage your signal-to-noise ratio (SNR). This involves a rigorous calibration stack: dark frames to map thermal noise, flat frames to correct for vignetting and dust on the sensor, and bias frames to remove the base electronic offset. It is a tedious process of subtraction and division that turns a grainy, purple-tinted mess into a scientific image.
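The "subtraction and division" amounts to a short formula: subtract the dark frame from the light frame, then divide by a bias-subtracted, normalized flat. Below is a minimal sketch on tiny 2x2 frames, assuming the dark frame was shot at the same exposure as the light frame (so it already contains the bias offset). The `calibrate` function and all pixel values are hypothetical; astrophotography software typically does this on full-size arrays with many averaged frames.

```python
def calibrate(light, dark, flat, bias):
    """Return (light - dark) / normalized(flat - bias) for equal-shape 2D lists."""
    # Remove the electronic offset from the flat.
    flat_corrected = [[f - b for f, b in zip(frow, brow)]
                      for frow, brow in zip(flat, bias)]
    # Normalize the flat so its mean is 1.0; dividing then preserves overall scale.
    pixels = [v for row in flat_corrected for v in row]
    flat_mean = sum(pixels) / len(pixels)
    return [[(l - d) / (f / flat_mean)
             for l, d, f in zip(lrow, drow, frow)]
            for lrow, drow, frow in zip(light, dark, flat_corrected)]


# Hypothetical frames: a uniform sky seen through optics that are
# darker in the right-hand column (vignetting).
bias = [[100, 100], [100, 100]]
dark = [[110, 110], [110, 110]]      # bias + 10 counts of thermal signal
flat = [[1100, 900], [1100, 900]]    # right column receives less light
light = [[610, 510], [610, 510]]     # uneven only because of the optics

calibrated = calibrate(light, dark, flat, bias)
print(calibrated)  # every pixel comes out close to 450
```

After calibration the two columns agree, which is the point: the unevenness came from the optics, not from the sky.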

The Sensor Comparison: Astronomy Grade

| Feature | Traditional CCD | Modern sCMOS | Consumer CMOS (Mirrorless) |
|---|---|---|---|
| Read Noise | Moderate to High | Ultra-Low | Low to Moderate |
| Readout Speed | Slow (Serial) | Rapid (Parallel) | Remarkably Fast |
| Quantum Efficiency | Very High | High | Moderate to High |
| Cooling | Thermoelectric (Peltier) | Integrated/External | Passive/Limited |

Algorithmic Hunting and the AI Shift

The “Tracker” part of the comet’s story is where the software gets compelling. Tracking a comet requires solving for six orbital elements—parameters that define the shape, size, and orientation of the orbit in 3D space. Traditionally, this was done using Gaussian methods of orbit determination. Today, we are seeing the integration of Machine Learning (ML) to filter out “false positives.”
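For readers who have not met the six elements before, here is a small sketch of one common parameterization (the classical Keplerian set) and of the step that turns them into a position along the orbit: solving Kepler's equation. The class name, the field names, and the demo values are assumptions for illustration; they are not this comet's published elements. Near-parabolic comet orbits are usually described with perihelion distance and time of perihelion instead, and need a more careful solver than the plain Newton iteration used here.

```python
import math
from dataclasses import dataclass


@dataclass
class OrbitalElements:
    semi_major_axis_au: float   # size of the orbit
    eccentricity: float         # shape (0 = circle, close to 1 = very elongated)
    inclination_deg: float      # tilt relative to the ecliptic plane
    ascending_node_deg: float   # longitude where the orbit crosses the ecliptic
    arg_perihelion_deg: float   # orientation of the ellipse within its plane
    mean_anomaly_deg: float     # where the body is along the orbit at the epoch


def eccentric_anomaly(mean_anomaly_rad, e, tol=1e-12, max_iter=100):
    """Solve Kepler's equation M = E - e*sin(E) for E with Newton's method (e < 1)."""
    E = mean_anomaly_rad if e < 0.8 else math.pi  # common starting guess
    for _ in range(max_iter):
        step = (E - e * math.sin(E) - mean_anomaly_rad) / (1 - e * math.cos(E))
        E -= step
        if abs(step) < tol:
            break
    return E


def heliocentric_distance_au(el):
    """Distance from the Sun: r = a * (1 - e*cos(E))."""
    E = eccentric_anomaly(math.radians(el.mean_anomaly_deg), el.eccentricity)
    return el.semi_major_axis_au * (1 - el.eccentricity * math.cos(E))


# Illustrative elements only: an object sitting exactly at perihelion.
demo = OrbitalElements(3.0, 0.5, 10.0, 80.0, 45.0, 0.0)
print(heliocentric_distance_au(demo))  # 1.5, i.e. a * (1 - e)
```

Orbit determination runs this logic in reverse: it starts from observed sky positions and searches for the six numbers that reproduce them.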

Space debris, satellite constellations (looking at you, Starlink), and cosmic ray hits on the sensor all look like transients. The current frontier in survey astronomy is the use of Convolutional Neural Networks (CNNs) to classify these objects in real time. By training models on millions of known asteroids and comets, the pipeline can now ignore a tumbling piece of rocket fairing and alert astronomers to a long-period comet with far fewer false alarms.

“The bottleneck in modern astronomy is no longer the aperture of the telescope, but the throughput of the data pipeline. We are moving from a world of ‘observing’ to a world of ‘mining’ the sky.”

This transition mirrors the broader trend in AI: moving from manual feature engineering to automated pattern recognition. The tools used to find Pan-STARRS are conceptually similar to the Astropy ecosystem, where Python-based libraries handle the heavy lifting of coordinate transformations and FITS file manipulation.

The Ecosystem Gap: Open Data vs. Proprietary Surveys

There is a simmering tension in the astronomical community regarding data democratization. While Pan-STARRS provides vital data, the “golden hour” of discovery often happens within proprietary pipelines before the data is released to the public via the Minor Planet Center (MPC). This creates a hierarchy of discovery where those with the fastest compute clusters and the most optimized arXiv-published algorithms claim the glory.

However, the rise of citizen science platforms like Zooniverse has bridged this gap. By gamifying the identification of transients, researchers can leverage thousands of human “neural networks” to verify what the AI might have missed. It is a hybrid approach: AI for the initial sweep, humans for the nuance, and high-end hardware for the confirmation.

For the amateur, the “tech” is now accessible. You no longer need a PhD to track a comet. Apps like Stellarium use the same orbital elements provided by the MPC to render the comet’s position on your screen in real time, using your phone’s gyroscope and magnetometer to overlay the object’s position on the sky.

The 30-Second Verdict for Monday

If you want to see Pan-STARRS this Monday, don’t rely on a zoom lens on a shaky tripod. The comet is a low-contrast object. To maximize your chances, get away from city lights; light pollution is essentially “noise” that destroys your SNR. Use a sturdy tripod and a wide-angle lens (between 14mm and 35mm), and set your ISO high enough to record the comet without amplifying noise excessively (usually 800-1600 on modern full-frame sensors).

Look for the crescent moon first; the comet will be in the vicinity, appearing as a fuzzy, non-twinkling star with a faint haze around it. If you’re using a DSLR, try a 2-to-5 second exposure. Go much longer and the stars will start to trail due to the Earth’s rotation, unless you’re using an equatorial mount to counteract the planetary spin.
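If you want to estimate trailing for your own setup, the arithmetic is simple: the sky drifts at about 15 arcseconds per second near the celestial equator, and your lens and sensor determine how many arcseconds fall on each pixel. The sketch below assumes a 6 µm pixel, roughly what a 24-megapixel full-frame sensor has; that figure and the function names are assumptions, so substitute your own camera's numbers.

```python
import math

SIDEREAL_RATE_ARCSEC_PER_S = 15.041  # apparent drift at the celestial equator


def pixel_scale_arcsec(pixel_size_um, focal_length_mm):
    """Angle of sky covered by one pixel, in arcseconds."""
    return 206.265 * pixel_size_um / focal_length_mm


def trail_length_px(exposure_s, focal_length_mm, pixel_size_um, declination_deg=0.0):
    """How many pixels a star drifts during an untracked exposure."""
    drift_arcsec = (SIDEREAL_RATE_ARCSEC_PER_S
                    * math.cos(math.radians(declination_deg))
                    * exposure_s)
    return drift_arcsec / pixel_scale_arcsec(pixel_size_um, focal_length_mm)


# Assumed setup: 35mm lens, 6 µm pixels, 5-second exposure, target near the equator.
trail = trail_length_px(exposure_s=5, focal_length_mm=35, pixel_size_um=6)
print(f"{trail:.1f} px of drift")
```

With these assumed numbers the drift is roughly two pixels. Shorter focal lengths and targets farther from the celestial equator trail less, so a wide lens gives you more headroom.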

This isn’t just a celestial event; it is a victory for the automated survey. The fact that we can predict the arrival of a rock that hasn’t visited our neighborhood in 170,000 years is a testament to the power of the silicon-based eyes we’ve pointed at the dark.

For more on the technical specifications of wide-field surveys, check the IEEE Xplore digital library for papers on CMOS sensor arrays, or read Ars Technica’s deep dives into space infrastructure.