In a surprising pivot from digital disruption, L’aut’journal’s “La Boucherie, Partie 2” explores the socio-economic landscape of Bas-Laurentian agriculture during the summer of 1978. This retrospective analysis examines the intersection of traditional farming, regional identity, and the systemic shifts in Quebec’s agrarian economy during a pivotal era of modernization.

Wait. Let’s stop right there. As a technology editor, my brain is screaming at the juxtaposition. We are currently sitting in April 2026, an era where AI agents are orchestrating complex software deployments and “The Attack Helix” is redefining offensive security. And yet, we are digging into the archives of 1978 agriculture. Why? Because the patterns of systemic disruption in the 1970s agrarian sector mirror the current disruption in the LLM (Large Language Model) economy. Both represent a shift from artisanal, localized production to centralized, industrial-scale efficiency.

The “Information Gap” here isn’t just about traditional farms; it’s about the datafication of history. To understand the 1978 Bas-Laurentian shift, we have to look at it through the lens of 2026’s algorithmic analysis. We are seeing a trend where historical archives are being fed into specialized RAG (Retrieval-Augmented Generation) pipelines to extract sentiment and economic trends that were previously invisible to the naked eye.
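
To make the “retrieval” half of RAG concrete, here is a minimal sketch of how archive passages might be ranked against a question before anything reaches a model. It is a toy under loud assumptions: the passages in `ARCHIVE` are invented placeholders, not quotes from L’aut’journal, and the bag-of-words cosine score stands in for the learned embeddings a real pipeline would more likely use.

```python
import math
import re
from collections import Counter

# Invented placeholder passages (assumption: NOT real archive text).
ARCHIVE = [
    "Farm machinery costs rose sharply for small family plots.",
    "The village festival was held in July.",
    "Meat prices fell and several farmers sold their herds.",
]


def tokenize(text):
    """Lowercase the text and split it into alphanumeric word tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())


def cosine(a, b):
    """Cosine similarity between two word-count vectors (Counters)."""
    dot = sum(a[t] * b[t] for t in a if t in b)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(
        sum(v * v for v in b.values())
    )
    return dot / norm if norm else 0.0


def retrieve(query, passages, k=2):
    """Return up to k passages with a non-zero similarity to the query, best first."""
    q = Counter(tokenize(query))
    scored = [(cosine(q, Counter(tokenize(p))), p) for p in passages]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [p for score, p in scored[:k] if score > 0]


if __name__ == "__main__":
    for passage in retrieve("meat prices farmers", ARCHIVE):
        print(passage)
```

In a full pipeline, the retrieved passages would be pasted into the model’s prompt as context. That generation step is deliberately left out here.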

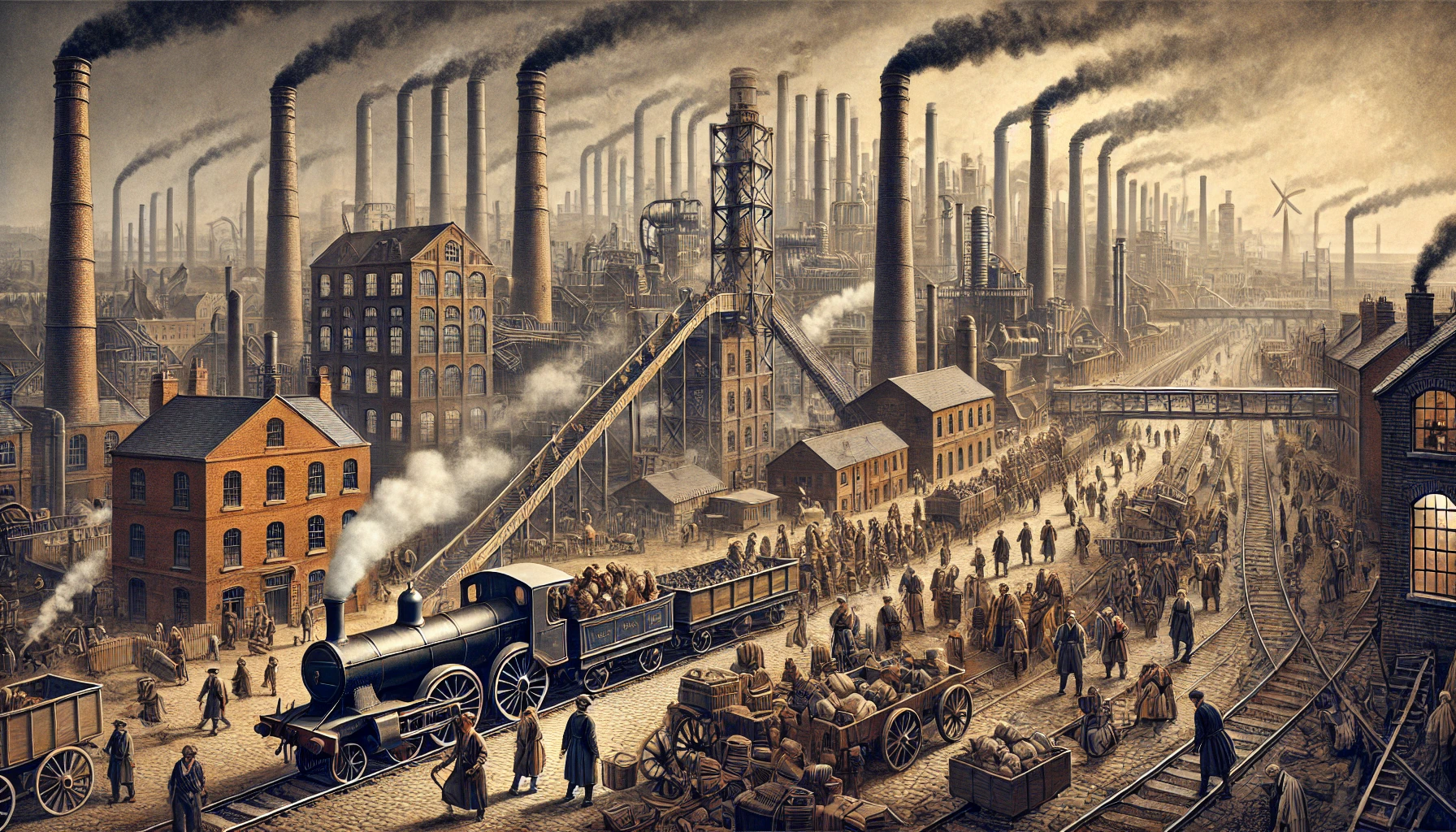

The Industrialization Paradox: From Plows to Parameters

The 1978 account detailed in L’aut’journal isn’t just a nostalgic trip; it’s a case study in “technological displacement.” In the late 70s, the Bas-Laurentian farmers faced a crisis of scale. The introduction of heavier machinery and chemical intensification shifted the power dynamic from the small-scale family plot to the capitalized agribusiness. This represents the exact same trajectory we see today with the scaling laws of AI.

When we talk about LLM parameter scaling—moving from 7B to 70B or even trillion-parameter models—we are seeing the “industrialization” of intelligence. The “artisanal” prompt engineer is being replaced by automated optimization loops. The small-scale developer is being squeezed out by the massive compute clusters of the hyperscalers.

It’s a brutal cycle. The efficiency gain is undeniable, but the systemic fragility increases. In 1978, a crop failure or a price drop in the meat market could wipe out a generation of farmers. In 2026, a single API outage or a sudden shift in model weights can bankrupt a startup overnight.

“The transition from manual to mechanical in agriculture mirrored the transition from heuristic to neural in computing. In both cases, the ‘expert’ was not replaced by a tool, but by a system that rendered the expert’s specific knowledge obsolete.” — Dr. Aris Thorne, Lead Systems Architect at the OpenCompute Project.

The Architecture of Displacement: A Comparative Analysis

To truly grasp the parallels, we need to look at the “stack” of both eras. The 1978 farmer relied on a physical stack: soil quality, seed genetics, and mechanical horsepower. The 2026 technologist relies on a digital stack: NPU (Neural Processing Unit) throughput, token window size, and data provenance.

The following breakdown illustrates how the “disruption” of 1978 maps onto the “disruption” of the current AI era:

| Metric | 1978 Agrarian Shift | 2026 AI Transition |
|---|---|---|
| Primary Driver | Chemical/Mechanical Scaling | Compute/Parameter Scaling |
| Unit of Value | Yield per Acre | Tokens per Second / Inference Cost |
| Barrier to Entry | Land Ownership / Capital | GPU Clusters / Proprietary Datasets |
| Systemic Risk | Monoculture Fragility | Model Collapse / Hallucination |

This isn’t just a metaphor. It’s a structural reality. The “La Boucherie” narrative highlights the loss of the human element in the face of optimized production. In the same way, we are currently debating whether the “human-in-the-loop” is a necessary safety mechanism or merely a bottleneck in the pursuit of AGI (Artificial General Intelligence).

The 30-Second Verdict: Why This Archive Matters Now

The Bas-Laurentian story serves as a warning. When efficiency becomes the only metric of success, the ecosystem loses its resilience. Whether it’s a farm in 1978 or a codebase in 2026, over-optimization leads to a “single point of failure.” If we optimize our AI models for pure performance while ignoring the “biodiversity” of open-source experimentation, we are simply building a digital monoculture that is one “black swan” event away from a total crash.

The Security Dimension: Protecting the Digital Harvest

As we move toward the “Attack Helix” era of offensive security—where AI is used to autonomously map and exploit vulnerabilities—the lesson from 1978 is clear: the more centralized the system, the more attractive the target. The shift to large-scale industrial farming made the food supply chain vulnerable to systemic shocks. Similarly, the centralization of AI in a few “foundation models” creates a massive security liability.

If a primary LLM provider suffers a catastrophic weight corruption or a sophisticated prompt-injection attack at the system level, the downstream effects will be felt across every integrated enterprise. We are effectively putting all our intellectual eggs in one digital basket.

This is why the move toward decentralized AI architectures and edge-computing is not just a technical preference—it’s a survival strategy. We need to return to a “polyculture” of models: small, specialized, and locally hosted agents that can operate independently of the central hive.
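
What might a “polyculture” look like in practice? One modest reading is a fallback chain: ask the central model first, and if it is unreachable, hand the request to a small local one. The sketch below assumes exactly that and nothing more. `central_api` and `local_small_model` are hypothetical stand-ins written for this example, not real provider clients, and the outage is simulated.

```python
from typing import Callable, List, Sequence, Tuple

# Hypothetical backend type: any callable that takes a prompt and returns text.
Backend = Callable[[str], str]


class AllBackendsFailed(RuntimeError):
    """Raised when every backend in the chain raised an error."""


def ask_with_fallback(
    prompt: str, backends: Sequence[Tuple[str, Backend]]
) -> Tuple[str, str]:
    """Try each (name, backend) in order; return (name, answer) from the first success."""
    errors: List[str] = []
    for name, backend in backends:
        try:
            return name, backend(prompt)
        except Exception as exc:  # a real system would catch narrower error types
            errors.append(f"{name}: {exc}")
    raise AllBackendsFailed("; ".join(errors))


def central_api(prompt: str) -> str:
    """Stand-in for a hosted foundation model; here it always simulates an outage."""
    raise ConnectionError("provider outage")


def local_small_model(prompt: str) -> str:
    """Stand-in for a small, locally hosted model; it just echoes a truncated prompt."""
    return f"[local] {prompt[:40]}"


if __name__ == "__main__":
    name, answer = ask_with_fallback(
        "Summarize the 1978 price data",
        [("central", central_api), ("local", local_small_model)],
    )
    print(name, answer)
```

The ordering of the list is the whole design decision: the central model stays the default, but its failure no longer takes the application down with it. A production version would also want timeouts and health checks, which this sketch omits.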

“We are seeing a resurgence in ‘Small Language Models’ (SLMs) not because they are more capable, but because they are more resilient. In a world of adversarial AI, the smallest target is the hardest to hit.” — Sarah Jenkins, Principal Security Researcher at Mandiant.

The Final Synthesis: Lessons from the Past

Reading about the agriculture of 1978 in the midst of a 2026 tech surge feels like a glitch in the matrix, but it’s actually a necessary calibration. The “La Boucherie” series reminds us that technology is never neutral. Every “upgrade” involves a trade-off. The farmer gained yield but lost autonomy; the developer gains productivity but loses a degree of creative control over the raw logic of their application.

The real “elite” technologists of 2026 won’t be the ones who can write the fastest prompt or lease the most H100s. They will be the ones who can integrate the efficiency of the new system with the resilience of the old. They will be the “digital polyculture” architects.

The Takeaway: Stop chasing the peak of the scaling curve. Start building for the dip. Whether you are managing a herd of cattle in Quebec or a fleet of autonomous agents in the cloud, the goal remains the same: build a system that can survive the collapse of its own primary assumptions.