Astronomers have detected ultra-high-energy gamma rays from LS I +61 303, a binary system featuring a Be star and a compact object. The detection, made with ground-based Cherenkov telescopes, challenges current particle acceleration models and, as of early April 2026, highlights how closely high-energy astrophysics now depends on petabyte-scale data processing.

For the uninitiated, LS I +61 303 isn’t just a coordinate in the sky; it is a cosmic particle accelerator. We are looking at a high-mass X-ray binary (HMXB) where a massive star and a compact companion—either a neutron star or a black hole—dance in an eccentric orbit. The result is a violent exchange of energy that manifests as Very High Energy (VHE) gamma rays. But the real story isn’t just the physics; it is the computational nightmare required to isolate these signals from the noise of the universe.

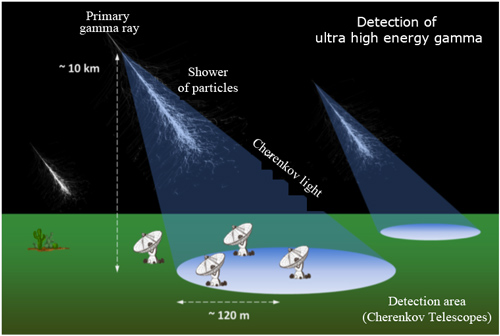

Detecting a TeV (teraelectronvolt) photon is the ultimate “needle in a haystack” problem. These photons don’t reach the ground; they slam into the upper atmosphere, triggering a cascade of secondary particles known as an Extensive Air Shower (EAS). The telescopes we use, Imaging Atmospheric Cherenkov Telescopes (IACTs), don’t “see” the gamma ray—they see the faint, nanosecond-long flash of blue Cherenkov light produced by these particles.
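To put rough numbers on that flash, here is a minimal sketch of the standard Cherenkov relation, cos θ = 1/(nβ). The refractive index used for air is an approximate sea-level value and is an assumption of this example, not a figure from the detection itself; at the altitudes where showers develop the air is thinner, so real angles and thresholds differ.

```python
import math

ELECTRON_REST_ENERGY_MEV = 0.511
# Assumption: approximate refractive index of air at sea level.
# It is closer to 1 at the altitudes where air showers develop.
N_AIR_SEA_LEVEL = 1.00029


def cherenkov_angle_deg(n, beta=1.0):
    """Opening angle of the Cherenkov cone, from cos(theta) = 1 / (n * beta)."""
    cos_theta = 1.0 / (n * beta)
    if cos_theta > 1.0:
        raise ValueError("particle is below the Cherenkov threshold")
    return math.degrees(math.acos(cos_theta))


def threshold_energy_mev(n, rest_energy_mev=ELECTRON_REST_ENERGY_MEV):
    """Total energy at which a particle reaches beta = 1 / n and starts to radiate."""
    gamma = 1.0 / math.sqrt(1.0 - 1.0 / n**2)
    return gamma * rest_energy_mev


if __name__ == "__main__":
    print(f"max angle: {cherenkov_angle_deg(N_AIR_SEA_LEVEL):.2f} deg")
    print(f"electron threshold: {threshold_energy_mev(N_AIR_SEA_LEVEL):.1f} MeV")
```

With these assumed inputs the cone opens to roughly 1.4 degrees and electrons radiate above about 21 MeV, which is why the light from a single shower arrives as a narrow, brief pool on the ground.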

The Computational Bottleneck of Cherenkov Reconstruction

The raw data coming off an IACT array is chaotic. To turn a flash of blue light into a verified gamma-ray event, the system must execute complex image cleaning and parameterization in real time. This is where the “raw code” of astrophysics meets high-performance computing. Traditionally, this relied on Hillas parameters: the shower image in the camera is approximated by an ellipse, and the ellipse's size, shape, and orientation are used to estimate the origin and energy of the primary photon.
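As an illustration of the Hillas approach, the sketch below computes the ellipse from intensity-weighted image moments. It assumes the image has already been cleaned; the function name and the returned dictionary keys are hypothetical and do not correspond to any particular pipeline.

```python
import math


def hillas_parameters(xs, ys, charges):
    """Return size, centroid, length, width and orientation of a cleaned image.

    xs, ys  : pixel coordinates in the camera (any consistent unit)
    charges : pixel intensities that survived image cleaning
    """
    size = sum(charges)
    if size <= 0:
        raise ValueError("image has no signal")

    # Intensity-weighted first moments: the image centroid.
    cog_x = sum(q * x for q, x in zip(charges, xs)) / size
    cog_y = sum(q * y for q, y in zip(charges, ys)) / size

    # Intensity-weighted second central moments (covariance matrix entries).
    sxx = sum(q * (x - cog_x) ** 2 for q, x in zip(charges, xs)) / size
    syy = sum(q * (y - cog_y) ** 2 for q, y in zip(charges, ys)) / size
    sxy = sum(q * (x - cog_x) * (y - cog_y)
              for q, x, y in zip(charges, xs, ys)) / size

    # Eigenvalues of the 2x2 covariance matrix are the squared RMS extents
    # along the major axis (length) and the minor axis (width).
    half_trace = 0.5 * (sxx + syy)
    root = math.sqrt((0.5 * (sxx - syy)) ** 2 + sxy ** 2)
    length = math.sqrt(half_trace + root)
    width = math.sqrt(max(half_trace - root, 0.0))

    # Angle of the major axis with respect to the camera x axis, in radians.
    psi = 0.5 * math.atan2(2.0 * sxy, sxx - syy)

    return {"size": size, "cog_x": cog_x, "cog_y": cog_y,
            "length": length, "width": width, "psi": psi}


if __name__ == "__main__":
    # Toy image: five equally bright pixels along a diagonal.
    print(hillas_parameters([-2, -1, 0, 1, 2], [-2, -1, 0, 1, 2], [1] * 5))
```

Gamma-ray showers tend to produce narrow, elongated ellipses pointing back toward the source, while hadronic showers are broader and more irregular, so cuts on `width` and `length` are the classic first line of background rejection.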

However, the LS I +61 303 mystery pushes these legacy pipelines to their limit. Because the system is periodic, the signal-to-noise ratio (SNR) fluctuates wildly depending on the orbital phase. We are no longer just looking for a steady source; we are looking for a transient, modulated signal buried under a mountain of hadronic background noise (cosmic rays that look like gamma rays but aren’t).
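A minimal sketch of how a phase-dependent signal can be handled: events are folded on the orbital period, and the excess in each phase bin is tested against background. The ephemeris constants are commonly quoted radio values and are an assumption of this example, not something stated by the detection team. The significance estimator is the widely used Li and Ma on/off formula, chosen here for illustration.

```python
import math

# Assumed ephemeris: commonly quoted radio values, not taken from this article.
ORBITAL_PERIOD_DAYS = 26.4960
PHASE_ZERO_MJD = 43366.275  # equivalent to JD 2443366.775


def orbital_phase(mjd):
    """Map an event time (MJD) to an orbital phase between 0 and 1."""
    return ((mjd - PHASE_ZERO_MJD) / ORBITAL_PERIOD_DAYS) % 1.0


def fold_events(event_mjds, n_bins=10):
    """Count events per orbital phase bin."""
    counts = [0] * n_bins
    for mjd in event_mjds:
        index = min(int(orbital_phase(mjd) * n_bins), n_bins - 1)
        counts[index] += 1
    return counts


def li_ma_significance(n_on, n_off, alpha):
    """Li & Ma (1983) Eq. 17 significance of an on-source excess.

    n_on  : counts in the source region
    n_off : counts in the background control region
    alpha : ratio of on-source to off-source exposure
    """
    total = n_on + n_off
    if total == 0:
        return 0.0
    term_on = 0.0
    if n_on > 0:
        term_on = n_on * math.log((1 + alpha) / alpha * n_on / total)
    term_off = 0.0
    if n_off > 0:
        term_off = n_off * math.log((1 + alpha) * n_off / total)
    significance = math.sqrt(max(2.0 * (term_on + term_off), 0.0))
    # Report deficits with a negative sign.
    return significance if n_on >= alpha * n_off else -significance


if __name__ == "__main__":
    times = [PHASE_ZERO_MJD + f * ORBITAL_PERIOD_DAYS for f in (0.05, 0.55, 1.55)]
    print(fold_events(times))
    print(li_ma_significance(100, 50, 1.0))
```

Applying `li_ma_significance` to the on and off counts of each phase bin separately shows where in the orbit the source actually rises above the hadronic background, rather than averaging a modulated signal away over the full period.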

This is why the field is shifting toward deep learning for event reconstruction. By replacing manual parameterization with Convolutional Neural Networks (CNNs) running on dedicated GPU or NPU (Neural Processing Unit) clusters, researchers can better differentiate between a gamma-ray shower and a proton-induced shower. It is a transition from heuristic-based filtering to pattern recognition at scale.

The Data Throughput Gap: Current vs. Next-Gen

The current generation of telescopes (MAGIC, H.E.S.S., and VERITAS) provided the foundation, but the upcoming Cherenkov Telescope Array (CTA) is where the real architectural shift occurs. We are moving from a few telescopes to a global array, increasing the data volume by orders of magnitude.

| Metric | Current IACT Arrays | CTA (Expected) | Technical Impact |
|---|---|---|---|
| Data Rate | Gigabytes per hour | Terabytes per hour | Requires edge-computing triggers |
| Angular Resolution | ~0.1 degrees | ~0.02 degrees | Higher precision spatial mapping |
| Energy Range | ~50 GeV to 100 TeV | ~20 GeV to 300 TeV | Expanded spectral coverage |
| Processing Logic | Heuristic/Hillas | ML/Deep Learning | Shift to GPU-accelerated pipelines |

The Microquasar vs. Pulsar Wind Debate

The “mystery” of LS I +61 303 boils down to a fundamental disagreement over the system’s architecture. Is it a microquasar—a stellar-mass black hole accreting matter and launching relativistic jets—or is it a non-accreting pulsar whose wind clashes with the stellar wind of its companion?

From a systems engineering perspective, these are two entirely different “engines” for particle acceleration. A microquasar uses an accretion disk and magnetic collimation to accelerate particles (Fermi acceleration), whereas a pulsar wind nebula uses a relativistic shock front. The extremely energetic gamma rays detected recently suggest an acceleration mechanism that is far more efficient than previously modeled. If the pulsar model holds, it implies the pulsar’s wind is capable of pushing particles to TeV energies even within the dense environment of a Be star’s circumstellar disk.

“The challenge in these binary systems is that the ‘accelerator’ is moving. We aren’t dealing with a static source, but a dynamic environment where the geometry changes every few days. This requires a temporal resolution in our data pipelines that was unthinkable a decade ago.”

This isn’t just a physics debate; it’s a modeling challenge. Researchers are now utilizing Gammapy, an open-source Python package, to standardize the analysis of VHE gamma-ray data. By moving away from proprietary, closed-source analysis scripts, the community is accelerating the verification of these models through collaborative, reproducible code.

Ecosystem Bridging: From Deep Space to Local Compute

The pursuit of LS I +61 303 is effectively a stress test for the broader scientific computing ecosystem. The transition to open platforms, such as arXiv for preprint distribution and GitHub for analysis pipelines, mirrors the shift we’ve seen in AI development. The “black box” era of astrophysics is ending.

The hardware requirements for processing these gamma-ray events are also driving innovations in FPGA (Field Programmable Gate Array) design. To handle the nanosecond timing required for Cherenkov detection, the trigger logic must be implemented directly in hardware rather than in software. This is the same low-latency engineering used in High-Frequency Trading (HFT) on Wall Street, where every microsecond of latency equals lost value. In this case, latency equals lost photons.
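The logic such a trigger implements can be modeled in software. The sketch below is a simplified coincidence trigger: it fires when enough distinct pixels exceed a threshold within a few nanoseconds of each other. The function name and all parameter values (threshold, multiplicity, window) are hypothetical, and a real camera trigger would also require the pixels to be neighbors and would run as parallel hardware logic rather than as a sequential loop.

```python
def coincidence_trigger(hits, threshold_pe=4.0, multiplicity=3, window_ns=5.0):
    """Return the time of the first coincidence, or None if nothing fires.

    hits : iterable of (time_ns, pixel_id, amplitude_pe) tuples
    The default parameter values are illustrative only.
    """
    # Keep only hits above the discriminator threshold, ordered in time.
    passed = sorted((t, pix) for t, pix, amp in hits if amp >= threshold_pe)

    start = 0
    for end in range(len(passed)):
        # Slide the left edge forward until the window spans at most window_ns.
        while passed[end][0] - passed[start][0] > window_ns:
            start += 1
        distinct_pixels = {pix for _, pix in passed[start:end + 1]}
        if len(distinct_pixels) >= multiplicity:
            return passed[start][0]  # earliest hit in the firing window
    return None


if __name__ == "__main__":
    shower = [(0.0, 1, 5.0), (1.0, 2, 6.0), (2.5, 3, 7.0)]
    noise = [(0.0, 1, 5.0), (50.0, 2, 6.0), (100.0, 3, 7.0)]
    print(coincidence_trigger(shower))  # tightly clustered hits
    print(coincidence_trigger(noise))   # isolated night-sky background hits
```

The short window is what separates a Cherenkov flash from random night-sky background: uncorrelated photons rarely pile up in several pixels within a few nanoseconds, while a shower does so by construction.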

We are seeing a convergence of disciplines. The same IEEE standards for high-speed data transmission are being applied to telescope arrays on La Palma and in Namibia. The “tech war” here isn’t between companies, but between the limits of our current silicon and the sheer energy of the cosmos.

The 30-Second Verdict

- The Event: Detection of ultra-high-energy gamma rays from the LS I +61 303 binary system.

- The Tech: Shift from Hillas-based heuristics to NPU/GPU-accelerated deep learning for signal reconstruction.

- The Conflict: Microquasar (accretion) vs. Pulsar Wind (shock) acceleration models.

- The Takeaway: The discovery is as much a victory for signal processing and open-source data science as it is for astrophysics.

As we integrate more AI-driven analysis into the CTA pipeline, the mystery of LS I +61 303 will likely be solved not by a bigger telescope, but by a smarter algorithm. The universe is shouting in gamma rays; we are finally building a processor fast enough to listen.