Microsoft Threat Intelligence has confirmed that cybercriminals are now integrating artificial intelligence across every phase of the attack lifecycle. From automated reconnaissance and sophisticated phishing to the rapid development of polymorphic malware, AI is shifting the offensive landscape from manual execution to scalable, machine-driven exploitation globally.

Let’s be clear: we aren’t talking about a few script kiddies using ChatGPT to write a basic Python script. We are witnessing the industrialization of the exploit. The “barrier to entry” for high-level cybercrime has collapsed. By leveraging Large Language Models (LLMs) and specialized offensive AI architectures, attackers are compressing the time between vulnerability discovery and weaponization, a window that once spanned weeks and can now shrink to minutes.

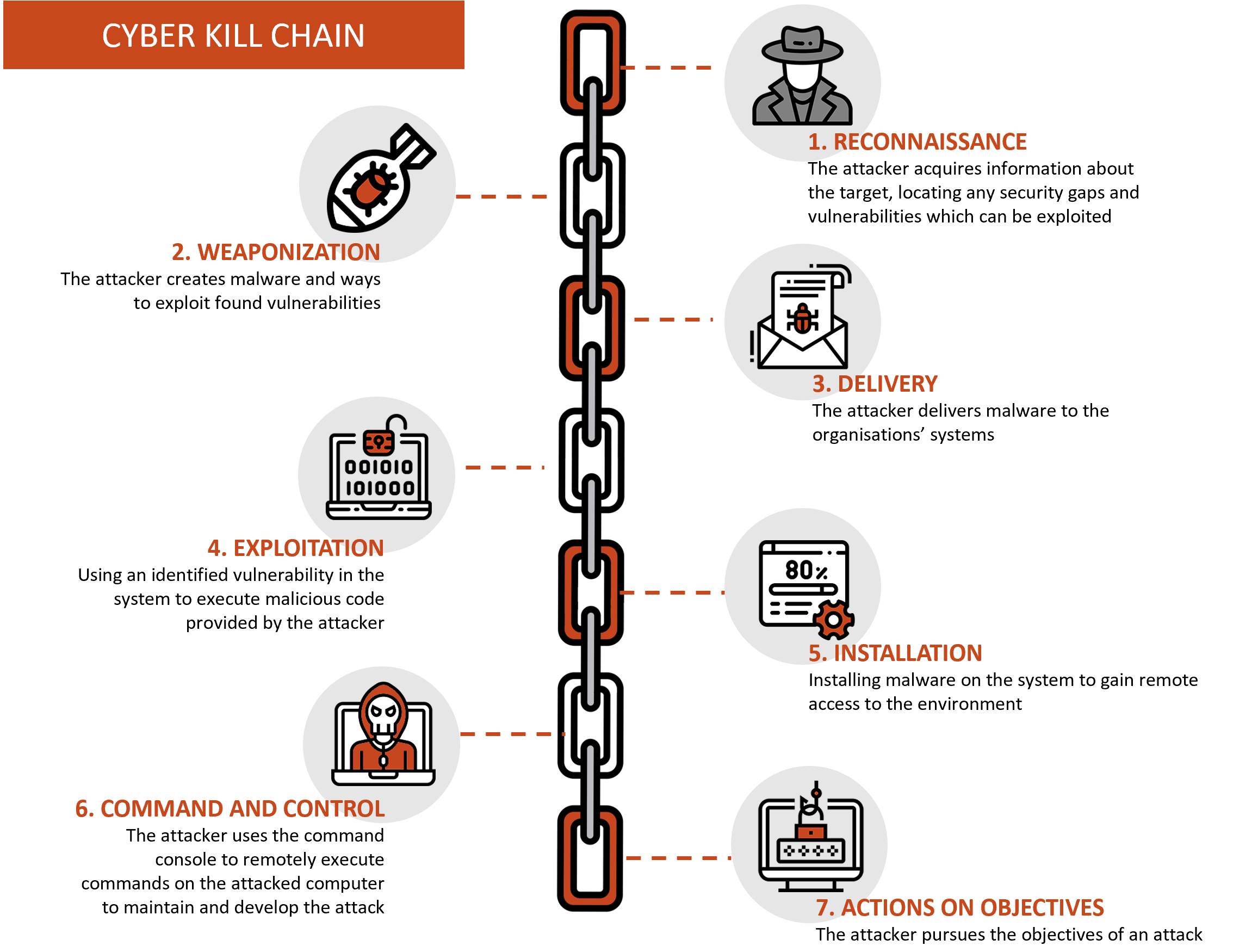

The Automation of the Kill Chain: From Recon to Exfiltration

The traditional “Cyber Kill Chain” is being rewritten. In the past, the reconnaissance phase required tedious manual scraping and social engineering. Now, attackers use LLMs to automate the collection of OSINT (Open Source Intelligence) from LinkedIn, GitHub, and corporate directories, then synthesize that data into hyper-personalized phishing lures that slip past traditional “spot the typo” training. This isn’t just spam; it’s precision-engineered psychological manipulation at scale.

More concerning is the shift toward polymorphic code generation. By using AI to rewrite a piece of malware on the fly, attackers change the bytes that a signature would match and so evade signature-based detection systems. If a security tool identifies a specific byte sequence in a payload, the AI simply regenerates the code to perform the same function with a different binary footprint. Antivirus software that relies on signatures alone becomes far less effective, which pushes detection toward behavior rather than bytes.
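To see why byte-level signatures are brittle, here is a deliberately harmless sketch. It assumes a hypothetical blocklist keyed on SHA-256 hashes and uses two trivial snippets (`VARIANT_A`, `VARIANT_B`) as stand-ins for payload variants; none of these names come from a real product. Both snippets compute the same result, but only the one whose exact bytes are on the blocklist is flagged.

```python
import hashlib

# Two harmless, functionally equivalent snippets standing in for payload variants.
# (Illustrative only: both just add up the numbers 0 through 4.)
VARIANT_A = b"total = 0\nfor n in range(5):\n    total += n\nresult = total\n"
VARIANT_B = b"result = sum(i for i in range(5))\n"

# Hypothetical signature database: it only knows the exact bytes of variant A.
KNOWN_BAD_HASHES = {hashlib.sha256(VARIANT_A).hexdigest()}


def signature_match(sample: bytes) -> bool:
    """Return True if the sample's hash appears in the blocklist."""
    return hashlib.sha256(sample).hexdigest() in KNOWN_BAD_HASHES


def behavior(sample: bytes) -> int:
    """Run the snippet in an isolated namespace and return what it computed."""
    scope: dict = {}
    exec(sample.decode(), scope)
    return scope["result"]


if __name__ == "__main__":
    for name, sample in (("A", VARIANT_A), ("B", VARIANT_B)):
        print(name, "flagged:", signature_match(sample), "result:", behavior(sample))
```

The rewritten variant produces the same result yet never matches the stored hash, which is the gap that behavior-based detection tries to close.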

The technical bridge here is the move toward automated vulnerability research. Attackers are employing AI to scan binaries for buffer overflows or memory corruption bugs, work that would otherwise occupy a senior security researcher for far longer. Combine this with the rapid growth in model capability, and you get an adversary that can iterate on an exploit faster than a human patch-management cycle can respond.

“The asymmetry of cyber warfare has reached a breaking point. We are no longer fighting humans with tools; we are fighting autonomous agents that can pivot through a network faster than a SOC analyst can open a ticket.”

The 30-Second Verdict: Why This Is a Paradigm Shift

- Speed: The “Time-to-Exploit” has plummeted.

- Scale: One attacker can now manage thousands of unique, AI-driven campaigns simultaneously.

- Stealth: AI-generated phishing and polymorphic code make detection a game of “whack-a-mole.”

The Rise of the “Attack Helix” and Offensive Architectures

We are seeing a transition from general-purpose AI to specialized “Offensive AI” architectures. Frameworks are beginning to emerge, similar to the conceptual “Attack Helix”, in which AI is not just a chatbot but a structural layer of the offensive operation. These systems integrate LLMs for social engineering, NPUs (Neural Processing Units) for rapid local decryption, and autonomous agents for lateral movement within a network.

This creates a dangerous feedback loop. As defenders deploy AI-powered security analytics, like those seen in the latest open-source security frameworks, attackers use those same defensive benchmarks to train their models to remain invisible. It is an adversarial arms race in which the attacker only needs to find one hole, while the defender must plug every single one.

Consider the impact on the ARM vs. x86 landscape. With the rise of AI-optimized hardware, attackers are targeting the specific memory architectures of these chips. If an AI can identify a zero-day in a specific NPU driver or a flaw in how a SoC (System on a Chip) handles secure enclaves, the hardware root of trust on affected devices is compromised.

| Attack Phase | Manual Method (Pre-AI) | AI-Enhanced Method (2026) | Impact |
|---|---|---|---|
| Reconnaissance | Manual OSINT / Scraping | Automated LLM Synthesis | Hyper-personalized lures |
| Weaponization | Hand-coded exploits | AI-generated polymorphic code | Bypasses signature detection |
| Delivery | Generic Phishing | Deepfake Audio/Video/Text | Extreme increase in trust/click rate |
| Lateral Movement | Manual pivoting / Scanning | Autonomous AI agents | Rapid network compromise |

The Ecosystem Fallout: Open Source and the “Security Debt”

This trend exposes a critical vulnerability in the global software supply chain. Most enterprise software relies on a sprawling web of open-source libraries. AI-driven attackers are now targeting these dependencies, inserting subtle, AI-generated vulnerabilities into popular repositories that look like legitimate bug fixes. This is “poisoning the well” on a global scale.

For developers, this means the “trust but verify” model is dead. We are entering an era of Zero Trust Code. Every single commit, regardless of the contributor’s reputation, must be scrutinized by AI-driven static analysis tools. Yet, if the tool used to verify the code is based on the same LLM architecture used to create the exploit, we have a systemic failure point.

The “chip wars” play into this as well. As companies scramble for H100s and next-gen NPUs to power their defensive AI, the scarcity of compute power creates a gap. Smaller firms cannot afford the compute required to run real-time, LLM-based threat hunting, leaving them vulnerable to attackers who are utilizing leaner, distilled models specifically tuned for exploitation.

Mitigation: Moving Beyond the Perimeter

To survive this, enterprises must move away from “perimeter defense” and toward behavioral telemetry. Since AI can mimic a legitimate user’s voice, writing style, and even their typical login patterns, the only way to detect an intrusion is to analyze the intent of the action. Why is a marketing manager suddenly querying a production database via an API call at 3 AM? That is a behavioral anomaly that an AI attacker cannot easily mask.
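As a rough illustration of that idea, the sketch below builds a per-role baseline of which resources are accessed and at which hours, then flags events that fall outside it. Everything here is an assumption for illustration: the `Event` and `RoleBaseline` names, the role and resource labels, and the simple set-membership rule, which a real deployment would replace with richer statistical or learned models.

```python
from collections import defaultdict
from dataclasses import dataclass
from datetime import datetime


@dataclass(frozen=True)
class Event:
    """One access record; the field names are hypothetical."""
    user: str
    role: str
    resource: str
    timestamp: datetime


class RoleBaseline:
    """Remembers which resources and hours of day are normal for each role."""

    def __init__(self) -> None:
        self._resources: dict[str, set[str]] = defaultdict(set)
        self._hours: dict[str, set[int]] = defaultdict(set)

    def learn(self, event: Event) -> None:
        """Add a known-good event to the baseline for its role."""
        self._resources[event.role].add(event.resource)
        self._hours[event.role].add(event.timestamp.hour)

    def anomalies(self, event: Event) -> list[str]:
        """Return the reasons this event deviates from the role's baseline."""
        reasons = []
        if event.resource not in self._resources.get(event.role, set()):
            reasons.append("unfamiliar resource for role")
        if event.timestamp.hour not in self._hours.get(event.role, set()):
            reasons.append("unusual hour for role")
        return reasons


# Baseline: marketing staff use the CRM API during office hours (09:00 to 17:00).
baseline = RoleBaseline()
for hour in range(9, 18):
    baseline.learn(Event("alice", "marketing", "crm-api", datetime(2026, 1, 5, hour)))

# The scenario from the text: a marketing account hits a production database at 3 AM.
suspicious = Event("alice", "marketing", "prod-db-api", datetime(2026, 1, 6, 3))
print(baseline.anomalies(suspicious))
# -> ['unfamiliar resource for role', 'unusual hour for role']
```

The point of keying the baseline on role rather than on credentials is that a perfectly forged login still has to do something out of character to be useful to the attacker.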

Implementing end-to-end encryption (E2EE) and hardware-backed identity verification (like FIDO2) is no longer optional—it is the baseline. We must shift from “detecting the malware” to “assuming the breach” and focusing on blast-radius containment.

The bottom line? The “Elite Hacker” is no longer a lone wolf in a hoodie; they are an operator of an AI swarm. If your security strategy is still based on 2023’s playbook, you aren’t just behind—you’re already compromised.