Nvidia has integrated Auto Shader Compilation into the latest Nvidia app update, effectively eliminating the “Optimizing Shaders” loading screens that plague modern PC gaming. By leveraging driver-level background processing and advanced caching, Nvidia reduces game launch latency and prevents mid-game stuttering, particularly following GPU driver updates.

For the uninitiated, shader compilation is the silent killer of the “smooth” gaming experience. When you launch a title built on modern APIs like DirectX 12 or Vulkan, the game doesn’t just run; it has to translate high-level shader code into machine code that your specific GPU architecture understands. This process is computationally expensive. Traditionally, developers forced this into a loading screen, or worse, let it happen in real-time, resulting in “shader stutter”—those jarring micro-freezes when you enter a new area or see a new effect for the first time.

Nvidia’s Auto Shader Compilation isn’t magic; it’s a sophisticated exercise in cache management and asynchronous execution. By moving the compilation process to the background via the Nvidia app, the system preemptively handles the translation of Pipeline State Objects (PSOs) before the game engine even requests them. It essentially turns a synchronous bottleneck into an asynchronous background task.
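To make the synchronous-to-asynchronous shift concrete, here is a minimal Python sketch of the general idea. It is not Nvidia's driver code: `compile_pso`, `BackgroundPsoCache`, and the string-based pipeline descriptions are hypothetical stand-ins, and a real driver compiles GPU binaries rather than hashing strings.

```python
import hashlib
import time
from concurrent.futures import ThreadPoolExecutor


def compile_pso(description: str) -> str:
    """Hypothetical stand-in for an expensive shader/PSO compile step."""
    time.sleep(0.01)  # simulate compile cost
    return "bin:" + hashlib.sha256(description.encode()).hexdigest()[:12]


class BackgroundPsoCache:
    """Starts compiles on worker threads before the 'engine' asks for them."""

    def __init__(self, workers: int = 2):
        self._pool = ThreadPoolExecutor(max_workers=workers)
        self._futures = {}  # description -> Future holding the compiled binary

    def prewarm(self, descriptions):
        # Queue background compiles; returns immediately.
        for description in descriptions:
            if description not in self._futures:
                self._futures[description] = self._pool.submit(compile_pso, description)

    def get(self, description: str) -> str:
        # Fast if the background compile already finished; otherwise the
        # caller waits, which is the classic "shader stutter" case.
        if description not in self._futures:
            self._futures[description] = self._pool.submit(compile_pso, description)
        return self._futures[description].result()

    def shutdown(self):
        self._pool.shutdown(wait=True)


cache = BackgroundPsoCache()
cache.prewarm(["opaque_pass", "shadow_pass"])  # happens before the game needs them
print(cache.get("opaque_pass"))
cache.shutdown()
```

The design point is that `prewarm` never blocks, so the cost of compilation is paid off the critical path; only a pipeline that was never predicted falls back to a blocking compile.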

The End of the “Optimizing Shaders” Purgatory

The technical brilliance here lies in how Nvidia handles cache invalidation. Historically, every time you updated your GPU driver, your existing shader cache became obsolete. The driver version changed, the compiler changed, and suddenly, you were back to that 10-minute loading screen in *Call of Duty* or *The Last of Us*.

Auto Shader Compilation mitigates this by utilizing a more intelligent versioning system. Instead of a scorched-earth wipe of the cache, the app can identify which shaders remain compatible and prioritize the re-compilation of critical assets in the background. This is a massive win for the user experience, but it also signals a shift in where the “intelligence” of the gaming pipeline resides. We are moving away from game-engine-led optimization and toward driver-led orchestration.
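Nvidia has not published how its versioning works, so the following Python sketch only illustrates one way selective invalidation could be structured. Every name in it (`CachedShader`, `compiler_modules`, `priority`, `plan_after_driver_update`) is a hypothetical assumption: each cached shader records which compiler components it depends on, and a driver update invalidates only the entries touching a changed component.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CachedShader:
    shader_id: str
    compiler_modules: frozenset  # hypothetical: compiler components this binary depends on
    priority: int                # hypothetical: lower number = needed sooner


def plan_after_driver_update(cache, changed_modules):
    """Split the cache into entries to keep and an ordered recompile queue."""
    changed = frozenset(changed_modules)
    keep, stale = [], []
    for shader in cache:
        if shader.compiler_modules & changed:
            stale.append(shader)   # depends on something the update changed
        else:
            keep.append(shader)    # still compatible, no recompile needed
    stale.sort(key=lambda s: s.priority)  # most critical shaders first
    return [s.shader_id for s in keep], [s.shader_id for s in stale]


cache = [
    CachedShader("ui_text", frozenset({"raster"}), priority=2),
    CachedShader("rt_reflections", frozenset({"raster", "raytracing"}), priority=1),
    CachedShader("rt_shadows", frozenset({"raytracing"}), priority=0),
]
still_valid, recompile_queue = plan_after_driver_update(cache, {"raytracing"})
print(still_valid)
print(recompile_queue)
```

Compared with keying the whole cache on a single driver version string, this kind of per-entry dependency tracking is what would let most of the cache survive an update.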

It is an aggressive move.

By taking control of the shader pipeline, Nvidia is reducing the burden on game developers, who have struggled for years to implement efficient PSO caching. At the same time, this further entrenches the “Nvidia Tax”, paid not in dollars but in ecosystem dependency. When the driver handles the optimization better than the engine, developers may stop optimizing for non-Nvidia hardware, widening the gap between GeForce users and the rest of the market.

“The industry has been fighting shader stutter since the transition to low-overhead APIs. By shifting the compilation burden to the driver level through background automation, we are essentially removing the last major friction point between the user and the game engine.”

The 30-Second Verdict

- The Win: No more staring at progress bars for 5 minutes after a driver update.

- The Tech: Asynchronous PSO compilation and intelligent cache versioning.

- The Catch: Increased reliance on proprietary Nvidia software rather than open API standards.

DLSS 4.5: Moving Beyond Linear Frame Generation

While the shader update is the “quality of life” hero, the real architectural heavy lifting is happening with DLSS 4.5’s Dynamic Multi-frame Generation. To understand this, we have to look at the evolution of the DLSS architecture. DLSS 3 generated a single intermediate frame between rendered frames to double the perceived framerate, and DLSS 4 extended that to a fixed, user-selected multiple. DLSS 4.5 moves toward a “dynamic” model, in which the number of generated frames varies at runtime.

Dynamic Multi-frame Generation doesn’t just insert one frame; it analyzes the scene’s temporal complexity and the GPU’s current thermal headroom to determine the optimal number of generated frames. In a slow-paced cinematic sequence, it might lean heavily on generation to push 240Hz. In a high-twitch competitive shooter, it scales back to minimize the input latency penalty associated with frame interpolation.
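The decision logic described here can be sketched as a simple heuristic. This is an illustration only, not Nvidia's algorithm: the function name, the `motion_score` input, the 0.7 threshold, and the caps are all assumptions chosen to show the shape of the trade-off.

```python
import math


def choose_generated_frames(base_fps, target_hz, motion_score,
                            latency_sensitive, max_generated=3):
    """Pick how many frames to generate per rendered frame (hypothetical heuristic).

    base_fps          -- frames the GPU actually renders per second
    target_hz         -- display refresh rate to aim for
    motion_score      -- assumed 0..1 estimate of how fast the scene is changing
    latency_sensitive -- True for competitive titles where input lag matters most
    """
    if base_fps <= 0:
        raise ValueError("base_fps must be positive")

    # Generated frames needed per rendered frame to reach the target rate.
    needed = max(0, math.ceil(target_hz / base_fps) - 1)

    cap = max_generated
    if latency_sensitive:
        cap = min(cap, 1)   # scale back to limit the interpolation latency penalty
    if motion_score > 0.7:
        cap = min(cap, 2)   # assumed: fast motion makes generated frames riskier
    return min(needed, cap)


# Slow cinematic scene on a 240 Hz display: lean on generation.
print(choose_generated_frames(60, 240, motion_score=0.2, latency_sensitive=False))
# Competitive shooter already rendering 120 fps: generate as little as possible.
print(choose_generated_frames(120, 240, motion_score=0.9, latency_sensitive=True))
```

A production system would presumably also weigh the thermal headroom mentioned above; it is left out here to keep the sketch short.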

This requires a tight feedback loop between the GPU’s Tensor cores and the NPU (Neural Processing Unit). The system is essentially predicting motion vectors with higher precision, reducing the “ghosting” artifacts that plagued early versions of frame generation. We are seeing a convergence where the AI isn’t just upscaling pixels, but is actively managing the temporal flow of the render pipeline.

The Latency Floor: A Technical Comparison

The critical metric for any frame-generation tech is the “latency floor”—the point where the visual smoothness no longer compensates for the input lag. Below is a breakdown of how the new pipeline compares to the previous standard.

| Feature | DLSS 3.0 (Standard) | DLSS 4.5 (Dynamic) | Impact on User |
|---|---|---|---|
| Frame Generation | Static 1:1 Interpolation | Adaptive Multi-frame | Smoother motion in complex scenes |
| Shader Handling | Game-Engine Dependent | Driver-Level Auto-Compile | Elimination of launch-time stutters |
| Input Latency | Fixed Reflex Offset | Dynamic Latency Scaling | Lower lag in high-action sequences |
| Cache Logic | Full Wipe on Update | Intelligent Versioning | Instant play after driver updates |
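Some back-of-the-envelope arithmetic shows why a latency floor exists at all. The Python sketch below rests on a simplifying assumption that is not taken from Nvidia's documentation: an interpolated frame cannot be shown until the next rendered frame exists, so the penalty is modeled as roughly one rendered-frame interval plus an optional generation cost. The function names and the `generation_cost_ms` parameter are hypothetical.

```python
def frame_time_ms(fps: float) -> float:
    """Time between consecutive frames at a given frame rate."""
    return 1000.0 / fps


def displayed_fps(base_fps: float, generated_per_rendered: int) -> float:
    """Frame rate the display sees once generated frames are inserted."""
    return base_fps * (1 + generated_per_rendered)


def interpolation_penalty_ms(base_fps: float, generation_cost_ms: float = 0.0) -> float:
    """Assumed upper-bound model: wait one rendered frame, plus generation cost."""
    return frame_time_ms(base_fps) + generation_cost_ms


for base in (30, 60, 120):
    shown = displayed_fps(base, generated_per_rendered=3)
    penalty = interpolation_penalty_ms(base)
    print(f"{base} fps rendered -> {shown:.0f} fps shown, ~{penalty:.1f} ms added")
```

Under this model the added delay depends on the rendered frame rate, not the displayed one, which is why generating more frames from a low base rate looks smooth but can still feel sluggish.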

The Driver-Level Power Grab and Ecosystem Lock-in

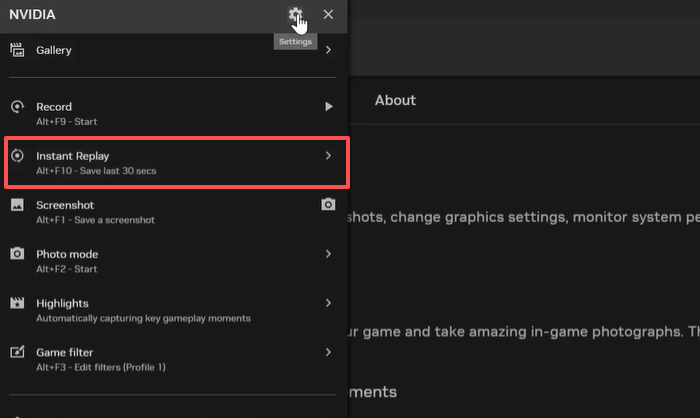

From a macro-market perspective, this is a strategic masterstroke. Nvidia is no longer just selling silicon; it is selling a software layer that makes the silicon feel faster than the raw specs suggest. By integrating these features into a single, streamlined “Nvidia App” (replacing the archaic Control Panel), the company is creating a cohesive OS-within-an-OS.

This creates a significant hurdle for open-source initiatives and competitors. When the “industry standard” for a smooth experience is a proprietary driver feature, the incentive for developers to adhere to strict Khronos Group standards diminishes. We are seeing the emergence of a “walled garden” in the GPU space, where the hardware is the key, but the software is the lock.

Is this a problem? For the average gamer, no. The result is a faster, smoother experience. But for the architecture of PC gaming, it’s a pivot toward a consolidated power structure where Nvidia dictates the terms of performance optimization.

The Bottom Line

Auto Shader Compilation is a surgical strike against one of the most annoying aspects of modern PC gaming. Combined with the adaptive nature of DLSS 4.5, Nvidia is effectively insulating the user from the inefficiencies of game engines. You aren’t just buying a GPU anymore; you’re buying sophisticated, AI-driven middleware that cleans up the mess left by developers. Just don’t be surprised when the “smoothness” of your experience becomes entirely dependent on a proprietary app update.