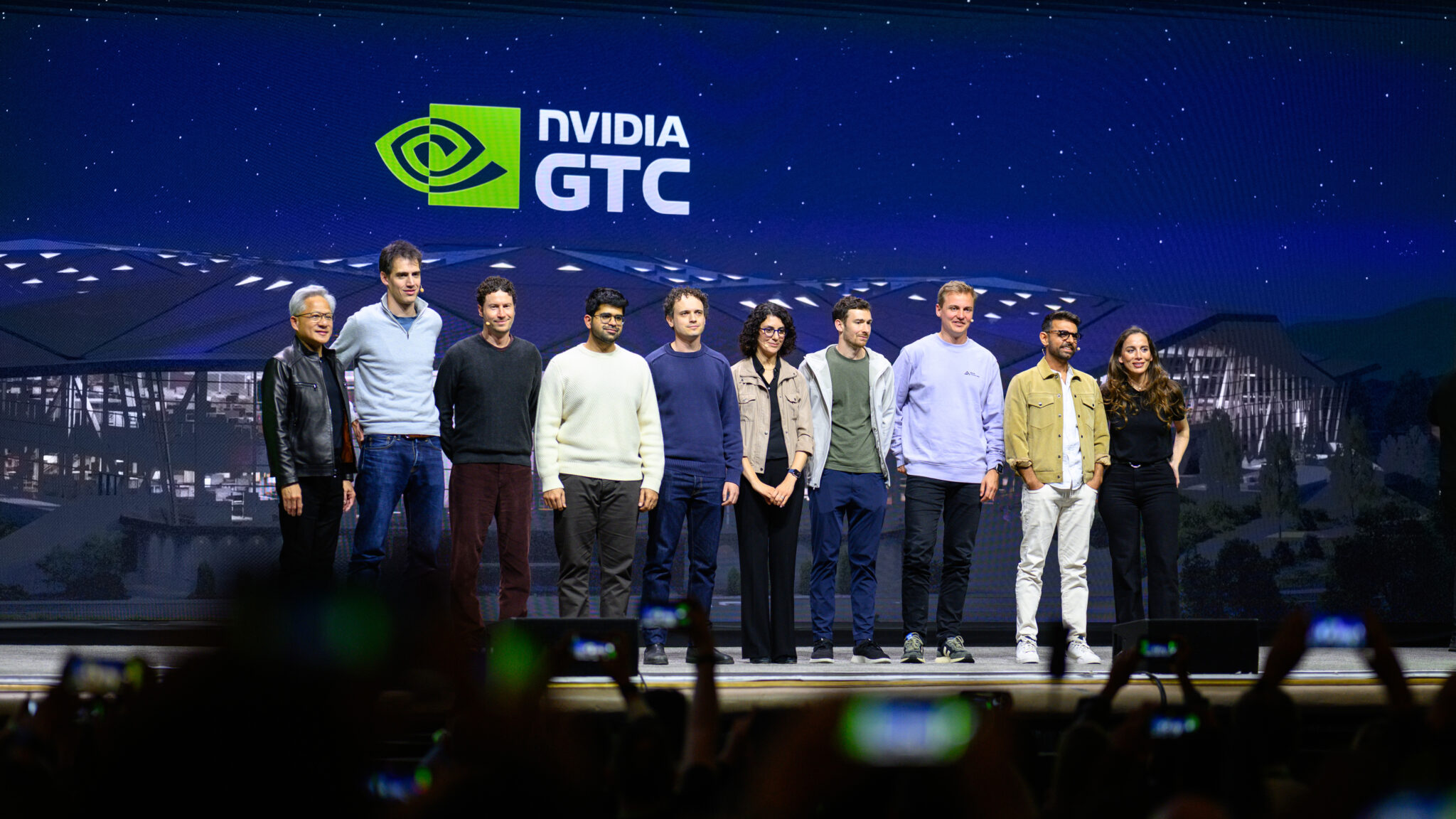

The AI landscape is fracturing – and that’s a good thing. NVIDIA’s GTC conference this week underscored a critical shift: the future isn’t about choosing between open and proprietary AI models, but embracing both. This convergence, driven by the need for specialized AI across diverse industries, is fostering a collaborative ecosystem where organizations can leverage foundational models and tailor them with proprietary data for unique competitive advantages. The rise of the NVIDIA Nemotron Coalition exemplifies this trend, signaling a move towards orchestrated systems of models rather than monolithic, general-purpose AI.

The Demise of the Monolith: Why Orchestration is the New Paradigm

For years, the narrative centered on the race to build the largest, most capable Large Language Model (LLM). The assumption was that scale equated to intelligence. While LLM parameter scaling continues to yield improvements, the reality is far more nuanced. Every sector – healthcare, finance, manufacturing, even creative arts – operates with unique data structures, workflows, and regulatory constraints. A single, massive model simply cannot address this heterogeneity effectively. Instead, we’re witnessing the emergence of “systems of models,” a concept championed by NVIDIA CEO Jensen Huang. This isn’t about stacking LLMs; it’s about intelligently routing tasks to the *right* model for the job. Think of it as a distributed cognitive architecture, where specialized agents handle specific functions. This requires robust APIs and orchestration layers – a space where companies like LangChain are rapidly innovating. The key is composability: the ability to seamlessly integrate models from different sources, whether open-source or commercially licensed.
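
To make the routing idea concrete, here is a minimal sketch of an orchestration layer, assuming a simple keyword-overlap rule. The model names, keyword lists, and stub handlers are all hypothetical placeholders. A production router would more likely use a classifier or an LLM to pick the route, and the handlers would call real model endpoints.

```python
from dataclasses import dataclass
from typing import Callable, List, Tuple


@dataclass
class Route:
    name: str                      # hypothetical model identifier
    keywords: Tuple[str, ...]      # words that suggest this model fits the task
    handler: Callable[[str], str]  # stand-in for a real model call


def make_stub(name: str) -> Callable[[str], str]:
    """Build a fake handler that only tags the prompt with the model name."""
    def handler(prompt: str) -> str:
        return f"[{name}] {prompt}"
    return handler


# Illustrative registry: one specialist per domain plus a general fallback.
ROUTES: List[Route] = [
    Route("code-model", ("python", "bug", "function"), make_stub("code-model")),
    Route("finance-model", ("invoice", "ledger", "revenue"), make_stub("finance-model")),
]
DEFAULT = Route("general-model", (), make_stub("general-model"))


def pick_route(prompt: str) -> Route:
    """Choose the route whose keywords overlap most with the prompt's words."""
    words = set(prompt.lower().split())
    best, best_score = DEFAULT, 0
    for route in ROUTES:
        score = len(words & set(route.keywords))
        if score > best_score:
            best, best_score = route, score
    return best


def dispatch(prompt: str) -> str:
    """Send the prompt to whichever model the router selected."""
    return pick_route(prompt).handler(prompt)


if __name__ == "__main__":
    for text in ("Fix this Python bug", "Summarize the quarterly revenue", "Write a haiku"):
        print(pick_route(text).name, "<-", text)
```

Keeping the registry separate from the dispatch logic is what makes the system composable: adding an open-weight or commercial model is a new `Route` entry, not a change to the router.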

What This Means for Enterprise IT

Forget rip-and-replace AI strategies. The future is hybrid. Enterprises will increasingly adopt a “bring your own model” approach, fine-tuning open-source foundations with their internal data to create bespoke AI solutions. This reduces vendor lock-in and allows for greater control over data privacy and security.

NVIDIA’s Nemotron Coalition: A Strategic Play in the Open-Source Arena

NVIDIA’s commitment to open-source AI isn’t merely altruistic; it’s a shrewd strategic move. Becoming the largest organization on Hugging Face, with nearly 4,000 team members contributing to the platform, solidifies NVIDIA’s position at the center of the AI ecosystem. The Nemotron Coalition, a collaboration with Mistral AI and other leading labs, is designed to accelerate the development of open, frontier-level foundation models. The initial project, a base model co-developed with Mistral, will be shared with the open ecosystem and underpin the next generation of NVIDIA Nemotron models. This isn’t just about code; it’s about data. Access to high-quality, diverse datasets is the critical bottleneck in AI development. The Coalition aims to pool resources and expertise to overcome this challenge. The architecture of these models is leaning heavily into Mixture of Experts (MoE) designs, allowing for increased capacity without a proportional increase in computational cost. The original MoE paper details the benefits of this approach, and we’re seeing it implemented across the board, from Google’s Switch Transformers to open-source alternatives.
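
The capacity-versus-compute trade-off in MoE can be illustrated with a toy top-k router. This is a sketch under simplifying assumptions, not the Nemotron or Switch Transformer implementation. The experts are scalar functions rather than feed-forward networks, the gate logits are supplied by hand rather than learned, and renormalizing the top-k weights is only one common variant.

```python
import math
from typing import Callable, List, Sequence, Tuple


def softmax(xs: Sequence[float]) -> List[float]:
    """Numerically stable softmax over a list of gate logits."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def top_k_route(gate_logits: Sequence[float], k: int) -> List[Tuple[int, float]]:
    """Return (expert_index, weight) for the k highest-scoring experts.

    Weights are renormalized so the selected experts' weights sum to 1.
    """
    probs = softmax(gate_logits)
    top = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)[:k]
    mass = sum(probs[i] for i in top)
    return [(i, probs[i] / mass) for i in top]


def moe_forward(x: float,
                experts: Sequence[Callable[[float], float]],
                gate_logits: Sequence[float],
                k: int = 2) -> float:
    """Evaluate only the k selected experts and mix their outputs."""
    return sum(w * experts[i](x) for i, w in top_k_route(gate_logits, k))


def active_fraction(num_experts: int, k: int) -> float:
    """Share of expert parameters touched per token, assuming equal-sized experts."""
    return k / num_experts


if __name__ == "__main__":
    # Four toy experts: expert i multiplies its input by (i + 1).
    experts = [lambda x, s=i + 1: s * x for i in range(4)]
    logits = [0.1, 2.0, -1.0, 1.5]  # hand-picked gate scores for one token
    print(top_k_route(logits, k=2))
    print(moe_forward(1.0, experts, logits, k=2))
    print(active_fraction(num_experts=8, k=2))
```

Only `k` experts run per token, so adding experts grows total parameters while per-token compute stays roughly tied to `k`.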

The Trust Factor: Openness and the Rise of AI Agents

One of the most compelling arguments for open AI isn’t just innovation speed, but trust. As AI agents become increasingly capable “coworkers” that handle complex tasks autonomously, the need for transparency and auditability becomes paramount. “At the end of the day, you’re delegating trust…and it’s much easier to trust an open system,” notes Anjney Midha, founder of AMP PBC. This sentiment is echoed by developers building long-running AI agents. The ability to inspect the code, understand the data provenance, and verify the model’s behavior is crucial for building confidence and mitigating risks. This is particularly vital in regulated industries like finance and healthcare, where compliance is non-negotiable. The trend towards open-weight models, where the model parameters are publicly available, is a direct response to this demand for transparency. Yet it’s important to note that open-weight doesn’t necessarily equate to open-source; licensing terms still apply.

The 30-Second Verdict

Open and proprietary AI aren’t competitors; they’re complementary forces. Expect a future dominated by orchestrated systems of models, tailored to specific industry needs, and built on a foundation of open collaboration and transparent governance.

Beyond the Hype: Technical Considerations and API Realities

While the vision of intelligent AI orchestration is compelling, the technical challenges are significant. Interoperability between models from different sources requires standardized APIs and data formats. Currently, the landscape is fragmented, with each vendor offering its own proprietary solutions. ONNX (Open Neural Network Exchange) is an attempt to address this issue, providing a common format for representing machine learning models. However, adoption remains uneven. Latency is another critical concern. Orchestrating multiple models introduces overhead, potentially slowing down response times. Optimizing the routing and execution of tasks is crucial for delivering a seamless user experience. The cost of running these complex systems can be substantial. API pricing models vary widely, and enterprises need to carefully evaluate the total cost of ownership. For example, OpenAI’s GPT-4 API charges per token, while other providers offer subscription-based pricing. The choice depends on the specific use case and the volume of requests.
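
The cost and latency trade-offs above come down to simple arithmetic. The sketch below assumes made-up prices, token counts, and latencies. None of these figures reflect any vendor's actual rates, and the function names are illustrative only.

```python
import math
from typing import Sequence


def per_token_cost(requests: int,
                   tokens_in: int,
                   tokens_out: int,
                   price_in_per_1k: float,
                   price_out_per_1k: float) -> float:
    """Total spend under per-token pricing for a batch of similar requests."""
    per_request = (tokens_in / 1000) * price_in_per_1k \
        + (tokens_out / 1000) * price_out_per_1k
    return requests * per_request


def break_even_requests(flat_fee: float,
                        tokens_in: int,
                        tokens_out: int,
                        price_in_per_1k: float,
                        price_out_per_1k: float) -> int:
    """Smallest request count at which per-token spend reaches the flat fee."""
    per_request = per_token_cost(1, tokens_in, tokens_out,
                                 price_in_per_1k, price_out_per_1k)
    return math.ceil(flat_fee / per_request)


def pipeline_latency_ms(router_ms: float,
                        model_ms: Sequence[float],
                        parallel: bool) -> float:
    """Routing overhead plus model time: max if fanned out, sum if chained."""
    return router_ms + (max(model_ms) if parallel else sum(model_ms))


if __name__ == "__main__":
    # Hypothetical workload: 500 input and 200 output tokens per request.
    monthly = per_token_cost(1000, 500, 200,
                             price_in_per_1k=0.01, price_out_per_1k=0.03)
    print(f"1,000 requests at per-token pricing: ${monthly:.2f}")
    print("Break-even vs. a $500 flat plan:",
          break_even_requests(500.0, 500, 200, 0.01, 0.03), "requests")
    print("Chained latency (ms):", pipeline_latency_ms(20, [300, 450], parallel=False))
    print("Fan-out latency (ms):", pipeline_latency_ms(20, [300, 450], parallel=True))
```

Below the break-even volume, per-token pricing is cheaper. Above it, a flat plan wins, assuming the plan's rate limits can absorb the load.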

“The biggest challenge isn’t building the models themselves, it’s building the infrastructure to deploy and manage them at scale. We need better tools for model monitoring, version control, and automated scaling.” – Dr. Emily Carter, CTO of AI-driven drug discovery startup, BioSynth.

The Geopolitical Dimension: Chip Wars and AI Sovereignty

The shift towards a more distributed AI ecosystem has significant geopolitical implications. The “chip wars” between the US and China are intensifying, with both countries vying for dominance in the AI hardware market. The US government’s restrictions on the export of advanced semiconductors to China are aimed at slowing down China’s AI development, but they are also driving China to invest heavily in its own domestic chip industry. The rise of open-source AI could mitigate the impact of these restrictions, allowing China to develop its own AI capabilities without relying on US technology. Even so, access to high-quality data remains a critical challenge for China. The concept of “AI sovereignty” – the ability of a nation to control its own AI infrastructure and data – is gaining traction. Countries are increasingly concerned about the potential for foreign governments to access sensitive data or manipulate AI systems. This is driving demand for localized AI solutions and increased investment in data privacy and security technologies.

The future of AI isn’t a monolithic vision of a single, all-powerful model. It’s a complex, dynamic ecosystem of open and proprietary technologies, orchestrated to solve specific problems and driven by the need for trust, transparency, and control. The NVIDIA Nemotron Coalition is a significant step in this direction, but the journey is just beginning.