Patricia Cornwell’s Social Media Encounter: A Canary in the Algorithmic Coal Mine

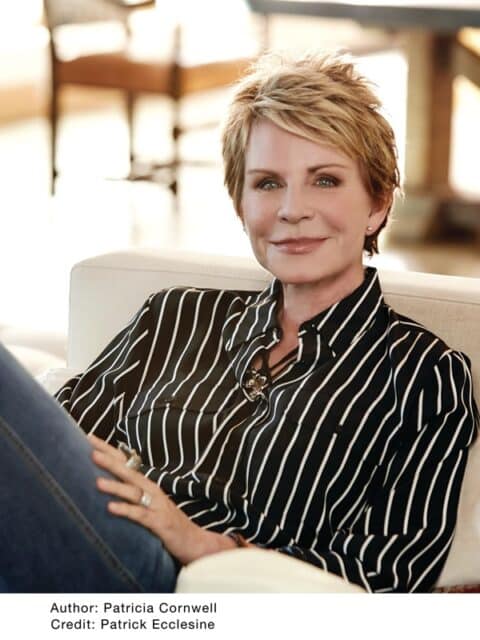

Best-selling author Patricia Cornwell, known for her Kay Scarpetta crime novels now adapted for Prime Video, recently shared a personal anecdote on Facebook detailing a meeting with singer Anne Murray sparked by an interaction on X (formerly Twitter). While seemingly innocuous, this event highlights a growing trend: the increasing influence of social media algorithms in shaping real-world interactions and, crucially, the potential for manipulation and targeted influence operations. This isn’t about celebrity encounters; it’s about the architecture of attention and how it’s being exploited.

The story itself, a fan connection blossoming into a lunch date, is a familiar narrative in the age of social media. However, framing it solely as a heartwarming tale overlooks the underlying mechanics at play. X’s recommendation system, a pipeline of machine learning models, decided how widely Cornwell’s posts and Murray’s response were shown. These systems aren’t neutral: they are tuned to maximize engagement, which often means favoring sensational or emotionally charged content. The question isn’t *whether* algorithms influence our lives, but *how*, and to what extent.
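To make “tuned to maximize engagement” concrete, here is a minimal Python sketch of engagement-based ranking. It is not X’s actual algorithm. The `Post` fields, the predicted-action probabilities, and the `WEIGHTS` values are all hypothetical stand-ins for the outputs of a platform’s prediction models. The point it illustrates is that a feed sorted by a weighted sum of predicted likes, replies, and reposts rewards whatever provokes reactions, not whatever is most accurate or valuable.

```python
from dataclasses import dataclass


@dataclass
class Post:
    text: str
    p_like: float    # hypothetical predicted probability the viewer likes the post
    p_reply: float   # hypothetical predicted probability the viewer replies
    p_repost: float  # hypothetical predicted probability the viewer reposts


# Hypothetical weights: replies and reposts count for more than likes because
# they generate further activity. Real platforms tune such weights privately.
WEIGHTS = {"like": 1.0, "reply": 10.0, "repost": 2.0}


def engagement_score(post: Post) -> float:
    """Expected engagement as a weighted sum of predicted actions."""
    return (WEIGHTS["like"] * post.p_like
            + WEIGHTS["reply"] * post.p_reply
            + WEIGHTS["repost"] * post.p_repost)


def rank_feed(posts: list[Post]) -> list[Post]:
    """Order posts by predicted engagement, highest first."""
    return sorted(posts, key=engagement_score, reverse=True)


if __name__ == "__main__":
    feed = [
        Post("Calm, well-sourced explainer", p_like=0.30, p_reply=0.01, p_repost=0.05),
        Post("Outrage-bait hot take", p_like=0.10, p_reply=0.08, p_repost=0.06),
        Post("Friendly reply from a famous singer", p_like=0.40, p_reply=0.03, p_repost=0.04),
    ]
    for post in rank_feed(feed):
        print(f"{engagement_score(post):.2f}  {post.text}")
```

With these illustrative weights, the outrage-bait post ranks first even though it is the least likely to be liked, because replies are weighted so heavily. Changing a single weight can reorder the whole feed, which is why the choice of weights matters and why it is hard to audit from outside.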

The LLM-Powered Echo Chamber Effect

The core issue lies in the increasing sophistication of the machine learning models, including large language models (LLMs), that power these platforms. X, like Facebook and TikTok, relies on such models for content recommendation and moderation, and increasingly for automated content generation. LLMs, while impressive in their ability to mimic human language, are susceptible to biases present in their training data, as are the ranking models that work alongside them. The relentless pursuit of “engagement” can lead to the creation of echo chambers: a system rewarded for engagement learns to show each user more of what they already respond to, so users are primarily exposed to information confirming their existing beliefs. This isn’t a novel phenomenon, but the scale and speed at which it’s happening are unprecedented. The interaction between Cornwell and Murray could have been amplified, or suppressed, based on factors entirely unrelated to the inherent value of their exchange.
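The feedback loop behind an echo chamber can be illustrated with a toy model. This minimal Python sketch is an illustration under stated assumptions, not a description of any real platform. The topic names, the `sharpen` exponent (how strongly the recommender over-serves topics a user already favors), and the `drift` rate (how far the user’s interests move toward what they were shown) are all hypothetical parameters.

```python
def normalize(weights: dict[str, float]) -> dict[str, float]:
    """Scale non-negative weights so they sum to 1."""
    total = sum(weights.values())
    return {topic: w / total for topic, w in weights.items()}


def simulate_feedback(interest: dict[str, float], rounds: int,
                      sharpen: float = 2.0, drift: float = 0.5) -> dict[str, float]:
    """Toy recommender/user feedback loop (hypothetical model).

    sharpen > 1: the recommender over-serves topics the user already favors.
    drift: fraction of the user's interest that moves toward what was shown.
    """
    interest = normalize(interest)
    for _ in range(rounds):
        # Exposure share is interest raised to `sharpen`, renormalized.
        exposure = normalize({t: w ** sharpen for t, w in interest.items()})
        # The user's interests shift partway toward what the feed showed.
        interest = {t: (1 - drift) * interest[t] + drift * exposure[t]
                    for t in interest}
    return interest


if __name__ == "__main__":
    start = {"topic_a": 0.40, "topic_b": 0.35, "topic_c": 0.25}
    for n in (0, 5, 20):
        shares = simulate_feedback(start, rounds=n)
        print(n, {t: round(s, 3) for t, s in shares.items()})
```

Starting from a 40/35/25 split, the leading topic gradually crowds out the others until it accounts for nearly all of the modelled interest. With `sharpen=1.0`, where exposure simply mirrors existing interest, the split stays unchanged. In this model it is the over-serving of already popular topics, not personalization alone, that narrows the feed.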

Consider the implications for political discourse. If an algorithm prioritizes emotionally charged content, it can easily be exploited to spread misinformation or polarize public opinion. The 2016 US presidential election, in which Russian influence operations used fake accounts and targeted content to reach millions of users, served as a stark warning, and the situation has only become more complex with the advent of more powerful generative models. The ability to generate realistic-sounding text and images at scale makes it increasingly difficult to distinguish genuine content from synthetic propaganda. Brookings Institution research details how LLMs could be used to generate hyper-targeted disinformation campaigns.

Beyond Likes and Retweets: The API Economy and Data Harvesting

The story also touches on the broader ecosystem of social media APIs. X, like other platforms, provides APIs that allow third-party developers to access platform data and build applications on top of it. While these APIs can enable innovation, they also create opportunities for data harvesting and surveillance. The data generated by interactions like Cornwell’s and Murray’s, including timestamps, engagement metrics, and user profiles, is highly valuable to advertisers, political campaigns, and even intelligence agencies.
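Even metadata that looks harmless can be revealing. The following minimal Python sketch uses hypothetical helper names and assumes a list of timezone-aware post timestamps of the kind a third-party app might collect. It counts posts per UTC hour to estimate when an account is usually active.

```python
from collections import Counter
from datetime import datetime, timezone


def activity_by_hour(timestamps: list[datetime]) -> Counter:
    """Count posts per UTC hour of day. Timestamps must be timezone-aware."""
    if any(ts.tzinfo is None for ts in timestamps):
        raise ValueError("timestamps must be timezone-aware")
    return Counter(ts.astimezone(timezone.utc).hour for ts in timestamps)


def peak_hours(timestamps: list[datetime], top_n: int = 3) -> list[int]:
    """Return the top_n busiest UTC hours, in clock order."""
    counts = activity_by_hour(timestamps)
    return sorted(hour for hour, _ in counts.most_common(top_n))


if __name__ == "__main__":
    # Hypothetical data: a week of posts at roughly the same times each day.
    week = [datetime(2024, 5, day, hour, 15, tzinfo=timezone.utc)
            for day in range(1, 8) for hour in (7, 12, 22)]
    print(peak_hours(week))
```

Applied to a few weeks of posts, a profile like this can hint at a person’s daily routine and approximate time zone, which is one reason timestamp data is worth protecting.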

X’s recent changes to its API, which ended free access in 2023 and priced many academic researchers out of the data they relied on, are particularly concerning. The move effectively creates a “walled garden,” making it harder to study the platform’s impact on society. It also raises questions about transparency and accountability: without independent oversight, outsiders cannot verify how X uses its data or whether it adequately protects user privacy.

What This Means for Enterprise IT

The implications extend beyond individual users and social media platforms. Enterprises are increasingly reliant on social media data for market research, brand monitoring, and customer engagement. However, they must be aware of the risks associated with relying on data from platforms with opaque algorithms and questionable data practices.

- Data Integrity: Verify the authenticity of social media data before using it for critical business decisions.

- API Dependence: Diversify data sources to reduce reliance on any single platform.

- Reputation Management: Monitor social media for misinformation and proactively address negative sentiment.
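The Data Integrity point can be made concrete with a simple screening step before mentions feed into brand-monitoring dashboards. This is a minimal Python sketch under stated assumptions: the `Mention` fields and all three thresholds are hypothetical and would need calibrating against labelled data. Heuristics like these catch only crude automated accounts, not sophisticated LLM-driven ones.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class Mention:
    """A brand mention collected from a social platform (hypothetical schema)."""
    author: str
    account_created: date
    posts_per_day: float
    followers: int
    following: int
    text: str


# Hypothetical thresholds; calibrate against your own labelled data.
MIN_ACCOUNT_AGE_DAYS = 30
MAX_POSTS_PER_DAY = 100.0
MIN_FOLLOWER_RATIO = 0.01


def suspicion_reasons(m: Mention, today: date) -> list[str]:
    """Return the heuristic red flags raised by a mention's author."""
    reasons = []
    if (today - m.account_created).days < MIN_ACCOUNT_AGE_DAYS:
        reasons.append("new account")
    if m.posts_per_day > MAX_POSTS_PER_DAY:
        reasons.append("high posting rate")
    # Accounts that follow thousands but have almost no followers are one
    # commonly cited signal of automated amplification.
    if m.following > 0 and m.followers / m.following < MIN_FOLLOWER_RATIO:
        reasons.append("low follower ratio")
    return reasons


def split_mentions(mentions: list[Mention],
                   today: date) -> tuple[list[Mention], list[Mention]]:
    """Separate mentions into (trusted, flagged) lists."""
    trusted, flagged = [], []
    for m in mentions:
        if suspicion_reasons(m, today):
            flagged.append(m)
        else:
            trusted.append(m)
    return trusted, flagged
```

Keeping the flagged mentions, along with the reasons they were flagged, rather than silently discarding them makes it possible to review false positives and adjust the thresholds over time.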

In addition, the rise of LLM-powered social engineering attacks poses a significant threat to enterprise security. Attackers can use LLMs to craft highly personalized phishing emails or social media messages, making it more difficult for employees to identify and avoid scams.

The Cybersecurity Angle: Deepfakes and Synthetic Influence

The potential for synthetic media – deepfakes and AI-generated content – to be used for malicious purposes is a growing concern. While the Cornwell-Murray interaction appears genuine, it’s not difficult to imagine a scenario where a similar encounter is fabricated using AI. A deepfake video of a celebrity endorsing a product or a politician making a controversial statement could have devastating consequences.

“The speed at which deepfake technology is evolving is alarming. We’re moving beyond simple face swaps to fully synthetic videos that are virtually indistinguishable from reality. The challenge isn’t just detecting these fakes, but also mitigating the damage they can cause.”

– Dr. Emily Carter, Chief Technology Officer, Cygnus Security

Detecting deepfakes requires sophisticated techniques, including analyzing facial expressions, lip movements, and audio cues. However, steady improvements in generative models keep eroding the artifacts these techniques rely on. Efforts such as the Deepfake Detection Challenge, launched in 2019 by Facebook, Microsoft, and academic partners, and NIST’s media forensics evaluations highlight the ongoing work to develop robust detection methods.

The Future of Algorithmic Transparency

The Cornwell-Murray anecdote serves as a microcosm of the larger challenges posed by algorithmic governance. We need greater transparency from social media platforms about how their algorithms work and how they affect our lives. We also need stronger regulations to protect user privacy and prevent the spread of misinformation. The European Union’s Digital Services Act (DSA), which requires the largest platforms to assess systemic risks, explain how their recommender systems work, and give vetted researchers access to data, is a step in the right direction, but more needs to be done.

The current trajectory is concerning. The relentless pursuit of engagement, coupled with the lack of transparency and accountability, is creating a digital environment that is increasingly susceptible to manipulation and control. The seemingly harmless exchange between a novelist and a singer is a reminder that even the most innocuous interactions are shaped by the invisible hand of the algorithm.

The 30-Second Verdict

Social media isn’t just a platform for connection; it’s a complex ecosystem governed by algorithms with potentially far-reaching consequences. Patricia Cornwell’s story isn’t about celebrity encounters; it’s a warning about the erosion of agency in the digital age.

The ongoing “chip wars,” the geopolitical competition for dominance in semiconductor manufacturing, add another layer: training and running the models behind these platforms depends on advanced chips, so the companies controlling that hardware and software infrastructure wield immense power. The Semiconductor Industry Association provides detailed analysis of these trends. The concentration of power in the hands of a few tech giants raises concerns about monopolies and the suppression of innovation.

Ultimately, the future of social media depends on our ability to demand greater transparency, accountability, and ethical design. The alternative is a world where our thoughts, beliefs, and behaviors are increasingly shaped by algorithms we don’t understand and can’t control.