Pushing the Boundaries of Computation: A RAM-less PC Experiment and the Future of CPU Caching

Researchers at the University of Kaiserslautern have demonstrated a functional PC operating without traditional RAM, relying entirely on CPU cache for memory. This experiment, reported by Golem.de, highlights the increasing sophistication of CPU cache hierarchies and their potential to mitigate, though not eliminate, the limitations of traditional DRAM.

The implications extend far beyond a mere technical curiosity. This isn’t about replacing RAM tomorrow. It’s about understanding the fundamental bottlenecks in modern computing and exploring architectural innovations that can squeeze more performance out of existing silicon. The experiment forces a re-evaluation of how we define “memory” in the context of increasingly complex processors.

The Cache Hierarchy as a Last-Mile Solution

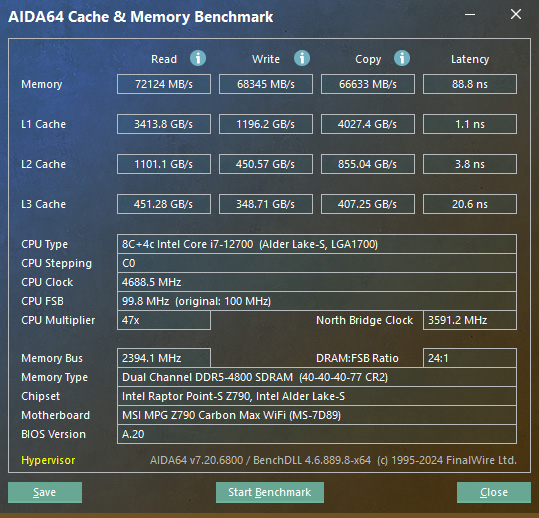

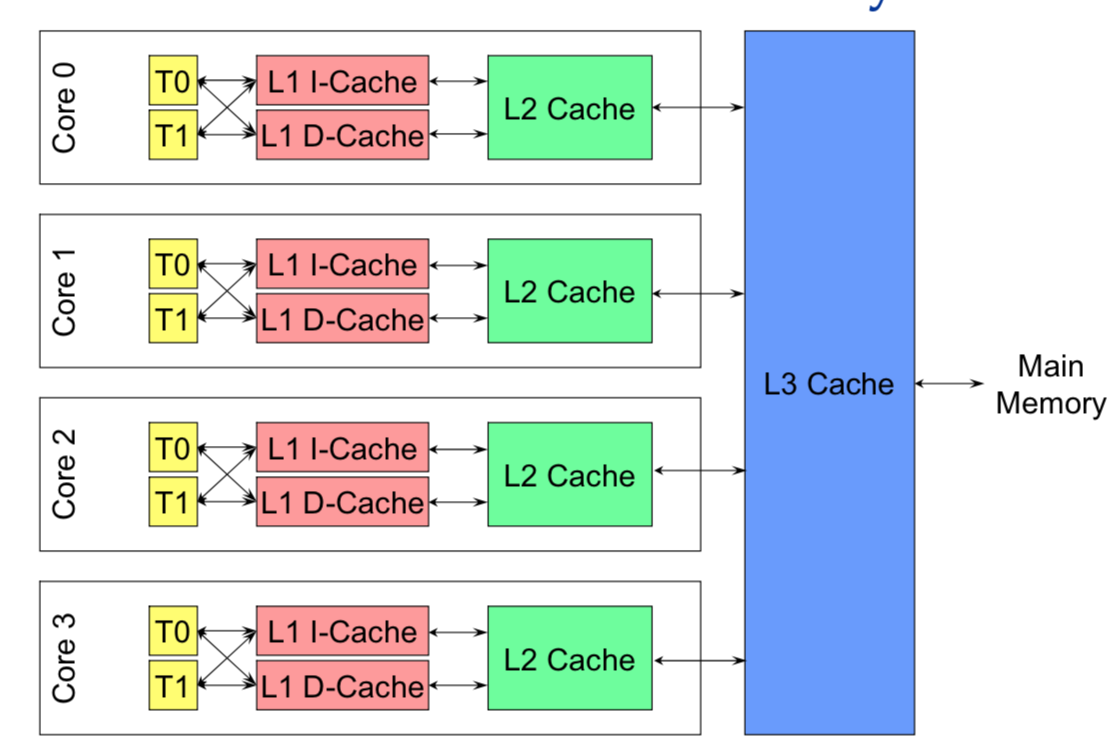

Modern CPUs aren’t just processors; they’re complex systems-on-a-chip (SoCs) incorporating multiple levels of cache (L1, L2 and L3), each progressively larger and slower. These caches act as temporary storage for frequently accessed data, reducing the latency of accessing main memory (RAM). The Kaiserslautern team essentially bypassed the RAM stage entirely, forcing the CPU to operate solely from its cache. This is feasible because of the sheer size of modern CPU caches. Consider the AMD Ryzen 9 7950X3D, for example, which carries 128MB of L3 cache. That is less than one percent of even a modest 16GB RAM kit, but it’s sufficient to run extremely lightweight operating systems and applications.
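To put those capacities side by side, here is a minimal Python sketch that converts size strings to bytes and, on Linux, reads the cache sizes the kernel exposes under sysfs. The helper names `parse_size` and `read_cache_sizes` are illustrative and not part of the experiment; the sysfs directory is the usual Linux location but may be missing on other systems or inside containers, in which case the function returns an empty dictionary.

```python
from pathlib import Path

# Binary multipliers for the suffixes the kernel uses in sysfs (e.g. "32K").
_UNITS = {"K": 1024, "M": 1024**2, "G": 1024**3}


def parse_size(text: str) -> int:
    """Convert a size string such as '32K', '128M' or '16G' to bytes."""
    text = text.strip().upper()
    if text and text[-1] in _UNITS:
        return int(text[:-1]) * _UNITS[text[-1]]
    return int(text)


def read_cache_sizes(cpu: int = 0) -> dict:
    """Return {'L<level> <type>': bytes} for one CPU from Linux sysfs.

    Returns an empty dict when the sysfs cache directory is unavailable.
    """
    base = Path(f"/sys/devices/system/cpu/cpu{cpu}/cache")
    sizes = {}
    if not base.is_dir():
        return sizes
    for index in sorted(base.glob("index*")):
        try:
            level = (index / "level").read_text().strip()
            kind = (index / "type").read_text().strip()
            size = parse_size((index / "size").read_text())
        except (OSError, ValueError):
            continue  # skip entries that are unreadable or oddly formatted
        sizes[f"L{level} {kind}"] = size
    return sizes


# The article's example: 128MB of L3 cache against a 16GB RAM kit.
l3 = parse_size("128M")
ram = parse_size("16G")
ratio = ram // l3  # how many times larger the RAM kit is

if __name__ == "__main__":
    print(f"RAM is {ratio}x the L3 capacity")
    print(read_cache_sizes())
```

With the figures from the text, the RAM kit comes out 128 times larger than the L3 cache, which is why only very small operating systems and applications fit.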

However, the limitations are stark. The experiment utilized a heavily modified Linux distribution and extremely optimized software. The performance was, predictably, significantly slower than a comparable system with RAM. The key constraint isn’t just capacity, but also what happens on *writes*. In a conventional system, a modified cache line that gets evicted is written back to DRAM; with no DRAM behind the cache, evicted data has nowhere to go, so the entire working set has to stay resident. Whatever does not fit has to be flushed and reloaded, which creates a significant bottleneck.
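How sharply things degrade once the working set outgrows the cache can be illustrated with a toy simulation. The sketch below models a fully associative cache with least-recently-used (LRU) replacement and sweeps repeatedly over a working set. This is a simplification: real CPUs use set-associative caches with other replacement policies, and the function name and parameters are hypothetical, chosen only for this illustration.

```python
from collections import OrderedDict


def lru_hit_rate(capacity_lines: int, working_set_lines: int, passes: int = 4) -> float:
    """Hit rate of a fully associative LRU cache under repeated sequential sweeps."""
    cache = OrderedDict()  # keys are cache-line numbers, ordered by recency
    hits = 0
    accesses = 0
    for _ in range(passes):
        for line in range(working_set_lines):
            accesses += 1
            if line in cache:
                hits += 1
                cache.move_to_end(line)  # mark as most recently used
            else:
                if len(cache) >= capacity_lines:
                    cache.popitem(last=False)  # evict the least recently used line
                cache[line] = True
    return hits / accesses


if __name__ == "__main__":
    # Working set fits exactly: only the first pass misses.
    print(lru_hit_rate(1024, 1024))  # 0.75
    # One line too many: every access evicts the line needed next.
    print(lru_hit_rate(1024, 1025))  # 0.0
```

In this model a working set just one line larger than the cache drops the hit rate from 75% to zero. A normal PC absorbs such misses by going to DRAM; a cache-only system has no such fallback, so the software has to be sized to fit.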

Beyond DRAM: Exploring Persistent Memory and CXL

This experiment isn’t happening in a vacuum. It’s part of a broader trend towards exploring alternatives to DRAM. Persistent memory technologies, like Intel’s Optane (now discontinued, but the technology lives on in other forms) and emerging NVDIMMs, offer a middle ground between the speed of DRAM and the persistence of storage. These technologies utilize 3D XPoint or similar memory types to provide non-volatile storage with significantly lower latency than traditional SSDs.

Crucially, the Compute Express Link (CXL) standard is poised to revolutionize memory architecture. CXL allows for coherent memory access between the CPU, accelerators (like GPUs), and memory devices. This means that persistent memory can be treated as an extension of the CPU’s memory space and accessed with ordinary loads and stores, rather than through the block I/O path used for conventional PCIe storage. CXL 3.0, rolling out in this week’s beta SDKs from Intel and AMD, builds on the memory pooling introduced in CXL 2.0 and adds memory sharing and fabric capabilities, further blurring the lines between RAM and persistent storage.

The Software Stack: A Critical Piece of the Puzzle

Running a PC without RAM isn’t just a hardware challenge; it’s a software one. The operating system and applications need to be meticulously optimized to minimize memory usage and maximize cache utilization. This requires a deep understanding of memory management techniques, such as memory compaction, caching algorithms, and data locality optimization. The team’s modified Linux distribution likely employed aggressive memory compression and prioritized frequently used code and data in the cache.
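Data locality is the easiest of these techniques to show in miniature. The following sketch counts misses in a toy direct-mapped cache for two ways of walking the same two-dimensional array. The 64-byte line size, 8-byte element size, 64-line cache and all function names are assumptions made for illustration; nothing here reflects the team's actual kernel changes.

```python
LINE_BYTES = 64  # assumed cache-line size
ELEM_BYTES = 8   # assumed element size (e.g. a 64-bit integer)


def count_misses(addresses, num_lines: int = 64) -> int:
    """Count misses for a stream of byte addresses in a direct-mapped cache."""
    tags = [None] * num_lines  # which memory line each cache slot currently holds
    misses = 0
    for addr in addresses:
        line = addr // LINE_BYTES
        slot = line % num_lines
        if tags[slot] != line:
            misses += 1
            tags[slot] = line
    return misses


def row_major(rows: int, cols: int):
    """Addresses visited when walking a row-major array row by row."""
    for r in range(rows):
        for c in range(cols):
            yield (r * cols + c) * ELEM_BYTES


def column_major(rows: int, cols: int):
    """Addresses visited when walking the same array column by column."""
    for c in range(cols):
        for r in range(rows):
            yield (r * cols + c) * ELEM_BYTES


rows, cols = 256, 256
row_misses = count_misses(row_major(rows, cols))
col_misses = count_misses(column_major(rows, cols))

if __name__ == "__main__":
    print(row_misses, col_misses)  # 8192 65536
```

Walking the array in the order it is laid out misses once per cache line (8,192 times), while the column-wise walk misses on every one of the 65,536 accesses. When cache is the only memory available, that eightfold difference comes from access order alone.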

“The biggest challenge isn’t the cache size itself, but the software’s ability to adapt to the constraints. Traditional operating systems are built around the assumption of abundant RAM. Rewriting core OS components to operate efficiently in a RAM-less environment is a monumental task.”

– Dr. Anya Sharma, CTO of MemVerge, a data infrastructure company specializing in memory technologies.

What This Means for Enterprise IT

While a consumer PC running solely on CPU cache isn’t practical, the underlying principles have significant implications for enterprise IT. In-memory databases and analytics applications are already heavily reliant on RAM for performance. Technologies like CXL and persistent memory offer the potential to scale these applications beyond the limitations of DRAM. Imagine a database that can store terabytes of data in persistent memory, accessible with near-RAM speeds. This could unlock new possibilities for real-time analytics and data-intensive applications.

However, security considerations are paramount. The experiment raises questions about data security in a RAM-less environment. Cold boot attacks classically exploit data remanence in DRAM modules, so data that resides only in on-die cache is exposed differently, and the threat model for physical memory attacks has to be re-examined rather than assumed. Robust encryption and secure boot mechanisms remain essential to mitigate these risks.

The 30-Second Verdict

The University of Kaiserslautern’s experiment is a fascinating demonstration of the potential of CPU caching. It’s not a replacement for RAM, but it’s a valuable research exercise that pushes the boundaries of what’s possible. The real impact will be felt in the development of new memory technologies and software architectures that can leverage the power of CXL and persistent memory.

Architectural Considerations: ARM vs. x86

The experiment’s success is also tied to the underlying CPU architecture. Modern ARM SoCs, commonly found in mobile devices and increasingly in laptops, often feature highly integrated and efficient cache hierarchies. Apple’s M-series chips, for instance, are renowned for their unified memory architecture, where the CPU, GPU, and Neural Processing Unit (NPU) share a single pool of memory. This architecture simplifies memory management and reduces latency. The M5 architecture, expected to debut in late 2026, is rumored to significantly increase L3 cache size and further optimize memory access patterns, potentially making similar experiments even more viable.

x86 processors, while traditionally paired with off-package DRAM modules, are also evolving. AMD’s Ryzen processors with 3D V-Cache demonstrate the benefits of stacking additional cache directly on top of the CPU die. Intel is also exploring similar technologies. The competition between ARM and x86 is driving innovation in memory architecture, ultimately benefiting consumers and enterprises alike.

The future of computing isn’t just about faster processors; it’s about smarter memory architectures. The Kaiserslautern experiment is a reminder that there’s still plenty of room for innovation in this critical area.

Further research into the specifics of the modified Linux distribution’s kernel patches and memory management algorithms is crucial to fully understand the implications of this experiment. The source code, if released, would provide valuable insights for developers and researchers interested in exploring similar concepts. GitHub remains a central hub for open-source memory management projects and research.