Quadratic Gravity’s Ripple Effect: A New Quantum Baseline for Big Bang Cosmology

Researchers at the Institute for Advanced Study have unveiled a refined model of quadratic gravity, challenging conventional understandings of the universe’s earliest moments. This isn’t merely a tweak to existing cosmological models; it’s a fundamental shift in how we approach quantum gravity, potentially resolving long-standing inconsistencies between general relativity and quantum mechanics. The implications extend beyond theoretical physics, influencing computational cosmology and even the development of advanced simulation techniques.

For decades, the Big Bang has been described using the framework of general relativity, which excels at describing gravity on large scales. However, at the singularity, the infinitely dense point at the beginning of the universe, general relativity breaks down. Quantum mechanics, governing the realm of the very small, offers a potential solution, but attempts to reconcile the two have consistently hit roadblocks. Quadratic gravity, a modification of Einstein’s theory, offers a pathway around these issues by introducing higher-order curvature terms, effectively smoothing out the singularity and providing a potentially consistent quantum description of the universe’s birth.
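As a rough illustration of what "higher-order curvature terms" means, a commonly written general form of the quadratic gravity action in four dimensions is sketched below. The coupling names \alpha and \beta are placeholders chosen here, sign and normalization conventions vary between authors, and the specific model in the research may use a different parametrization.

```latex
\begin{equation}
S = \int d^4x \, \sqrt{-g} \left[ \frac{M_{\mathrm{Pl}}^2}{2} R
    + \alpha R^2
    + \beta R_{\mu\nu} R^{\mu\nu} \right]
\end{equation}
% M_Pl: reduced Planck mass; R: Ricci scalar; R_{\mu\nu}: Ricci tensor.
% \alpha, \beta: dimensionless couplings (placeholder names).
% The first term alone is the Einstein-Hilbert action of general relativity.
```

A separate Riemann-squared term is not needed in four dimensions, because the Gauss-Bonnet combination of the three quadratic invariants is a total derivative and does not affect the field equations.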

The Information Gap: Beyond the Equations

The Phys.org report, while accurate, glosses over the computational challenges inherent in implementing quadratic gravity models. The core issue lies in the increased complexity of the field equations. Unlike general relativity, which can be solved analytically in certain simplified scenarios, quadratic gravity typically requires sophisticated numerical methods. This translates to a massive demand for computational resources – specifically, high-performance computing (HPC) clusters equipped with specialized hardware. We’re talking about simulations that push the boundaries of even the most advanced supercomputers.

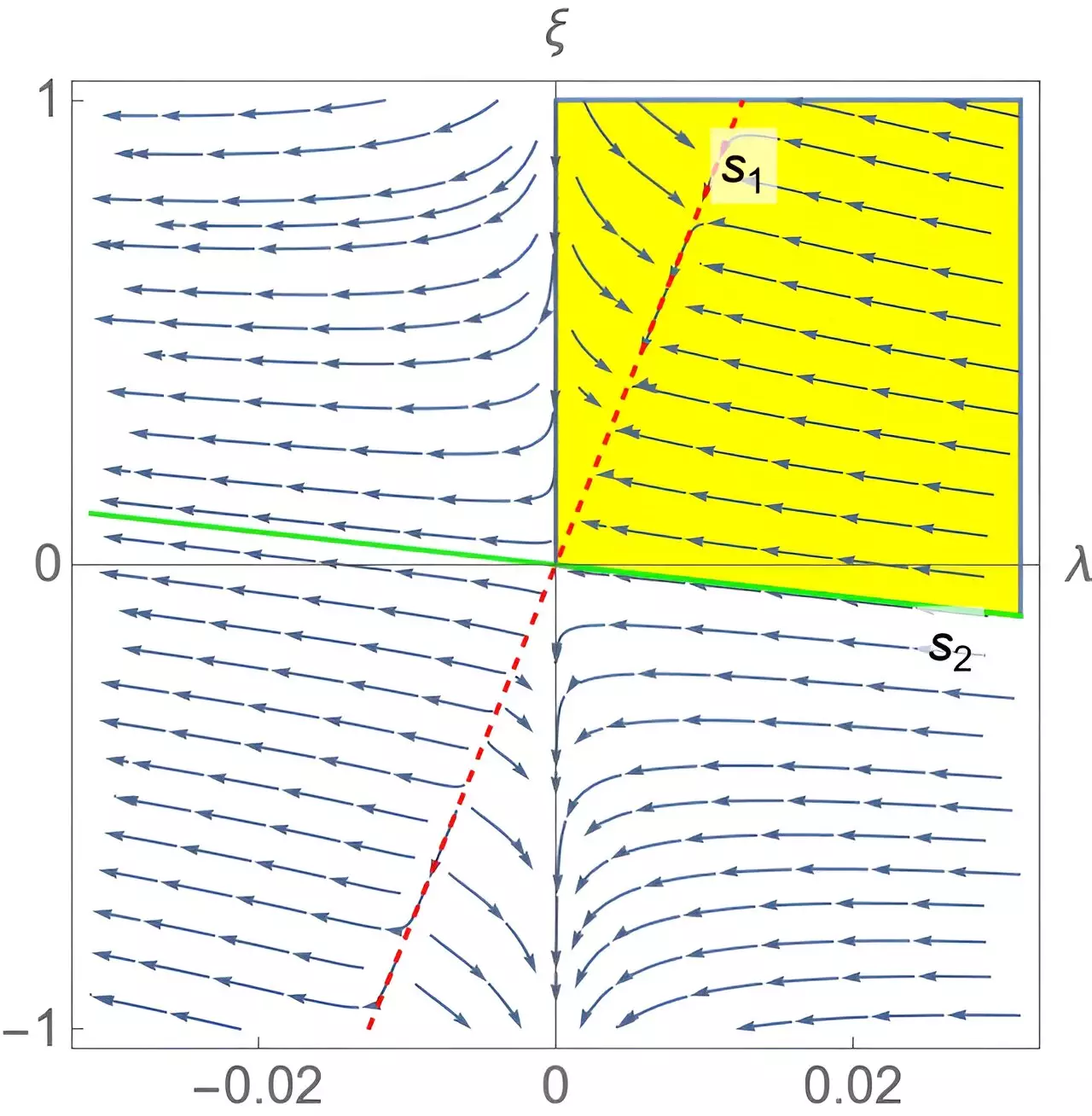

The original research, published in Physical Review Letters, details how the quadratic gravity model avoids the singularity by introducing a “bounce” – a transition from a contracting universe to an expanding one – rather than a true beginning from nothing. This bounce is not a simple reversal of time; it’s a complex quantum phenomenon governed by the modified field equations. Crucially, the model predicts specific signatures in the cosmic microwave background (CMB) – subtle patterns of temperature fluctuations that could, in principle, be detected by future CMB experiments like CMB-S4.
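To make the notion of a bounce concrete, the conditions can be written in terms of a scale factor a(t). This is a minimal sketch that assumes a spatially flat, homogeneous and isotropic background; the setup used in the paper may differ.

```latex
\begin{align}
H(t) &\equiv \frac{\dot a(t)}{a(t)}, &
H(t_b) &= 0, &
\dot H(t_b) &> 0 , \\
% For comparison, spatially flat general relativity gives:
\dot H &= -4\pi G \,(\rho + p) \le 0
  && \text{whenever } \rho + p \ge 0 .
\end{align}
% t_b: time of the bounce; \rho, p: energy density and pressure of the matter content.
```

The second line shows why a bounce is hard to obtain in this simplified general-relativistic setting: matter with \rho + p \ge 0 can never make \dot H positive, so something beyond the Einstein equations, such as the extra curvature terms, has to supply it.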

The HPC Bottleneck and the Rise of Specialized Accelerators

Simulating these scenarios demands more than just raw processing power. The algorithms used to solve the quadratic gravity field equations are inherently parallelizable, making them ideally suited for execution on Graphics Processing Units (GPUs) and, increasingly, on specialized accelerators like Field-Programmable Gate Arrays (FPGAs). However, even with these advancements, the computational cost remains substantial. A single high-resolution simulation can take weeks or even months to complete on a state-of-the-art HPC cluster.
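The kind of data parallelism described above can be sketched with a toy problem. The example below is not the quadratic gravity field equations: it advances a simple wave equation on a periodic 2D grid, and the function names `laplacian_periodic` and `leapfrog_step` are hypothetical. What it illustrates is that every grid point is updated from its neighbors by the same rule, which is the pattern that maps well onto GPUs.

```python
import numpy as np


def laplacian_periodic(field, dx):
    """Second-order finite-difference Laplacian on a periodic 2D grid."""
    return (
        np.roll(field, 1, axis=0) + np.roll(field, -1, axis=0)
        + np.roll(field, 1, axis=1) + np.roll(field, -1, axis=1)
        - 4.0 * field
    ) / dx**2


def leapfrog_step(phi, phi_prev, dx, dt):
    """Advance the toy wave equation d2(phi)/dt2 = laplacian(phi) by one time step."""
    return 2.0 * phi - phi_prev + dt**2 * laplacian_periodic(phi, dx)


# Toy setup: a single sine mode on a 64 x 64 periodic grid.
n = 64
dx = 1.0 / n
dt = 0.5 * dx  # below the 2D stability limit dx / sqrt(2)
x = np.arange(n) * dx
X, Y = np.meshgrid(x, x, indexing="ij")

phi = np.sin(2.0 * np.pi * X)
phi_prev = phi.copy()  # crude start: field initially at rest

for _ in range(100):
    # Each step touches every grid point independently of the others.
    phi, phi_prev = leapfrog_step(phi, phi_prev, dx, dt), phi
```

Because the update is expressed as whole-array operations, the same structure could in principle be moved to a GPU array library with few changes. Real field equations with higher-derivative terms would need wider stencils and far more care with stability.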

The shift towards quadratic gravity models is indirectly fueling demand for next-generation HPC infrastructure. The need for faster, more efficient simulations is driving innovation in processor architecture, memory technology, and interconnectivity. This is where the “chip wars” become relevant. The US, China, and Europe are all vying for dominance in the HPC market, recognizing its strategic importance for scientific research, national security, and economic competitiveness. The development of advanced AI models also benefits from these advancements, creating a synergistic relationship between cosmology and artificial intelligence.

What This Means for Enterprise IT

While seemingly abstract, the advancements in computational cosmology spurred by quadratic gravity have direct implications for enterprise IT. The algorithms and techniques developed for simulating the early universe are finding applications in areas like materials science, fluid dynamics, and financial modeling. The demand for high-performance computing is driving the adoption of cloud-based HPC services, offered by providers like Amazon Web Services (AWS), Microsoft Azure, and Google Cloud Platform (GCP). These services provide access to cutting-edge hardware and software without the need for significant upfront investment.

Furthermore, the need for efficient data analysis and visualization is driving innovation in data science tools and techniques. The massive datasets generated by cosmological simulations require sophisticated algorithms for data compression, filtering, and analysis. These algorithms are also applicable to other domains, such as fraud detection, medical imaging, and social media analytics.

Expert Insight: The Role of Quantum Computing

The current limitations of classical computing may eventually be overcome by the advent of quantum computing. Quantum computers, leveraging the principles of quantum mechanics, have the potential to solve certain types of problems that are intractable for classical computers. Simulating quantum gravity is one such problem.

“The computational complexity of quadratic gravity simulations is truly staggering. While classical HPC can get us only so far, a fully quantum approach – using qubits to directly represent the quantum states of the early universe – could unlock entirely new insights. We’re still years away from having the necessary hardware, but the theoretical groundwork is being laid now.”

Dr. Anya Sharma, CTO, QuantumSimulations Inc.

However, building a fault-tolerant quantum computer remains a significant challenge. Current quantum computers are prone to errors, and scaling them up to the size needed for complex simulations is a major engineering hurdle. Nevertheless, the potential benefits are so great that research in quantum computing is receiving substantial investment from both governments and private companies.

The 30-Second Verdict

Quadratic gravity isn’t just a theoretical exercise. It’s a catalyst for innovation in HPC, AI, and quantum computing. Expect to see increased investment in these areas as researchers strive to unlock the secrets of the universe’s origins.

Architectural Implications: From x86 to ARM and Beyond

The shift towards specialized accelerators is also influencing processor architecture. While x86 processors have traditionally dominated the HPC market, ARM-based processors are gaining traction, particularly in energy-efficient HPC systems. The lower power consumption of ARM processors makes them attractive for large-scale deployments, where energy costs can be significant. The ARM architecture is proprietary rather than open source, but its licensing model lets vendors design custom cores and systems-on-chip, which allows for greater customization and optimization.

However, x86 processors still hold an advantage in terms of raw performance. Intel and AMD are continuously improving their x86 processors, incorporating features like advanced vector extensions (AVX) and high-bandwidth memory (HBM) to accelerate scientific computing workloads. The competition between x86 and ARM is driving innovation across the entire processor ecosystem.

The rise of FPGAs is another architectural trend. FPGAs are reconfigurable hardware devices that can be programmed to perform specific tasks. This allows for highly customized acceleration of quadratic gravity simulations. Vendors such as AMD (which acquired Xilinx) and Intel offer FPGAs targeted at HPC applications.

Bridging the Ecosystem: Open Source and Collaboration

The complexity of quadratic gravity simulations necessitates a collaborative approach. Researchers are increasingly sharing their code and data through open-source platforms like GitHub. This allows for greater transparency, reproducibility, and innovation. Open-source libraries and tools are being developed to simplify the process of solving the quadratic gravity field equations and analyzing the resulting data.

“The open-source community is crucial for accelerating progress in this field. By sharing our code and data, we can avoid duplication of effort and build upon each other’s work. This collaborative spirit is essential for tackling the complex challenges of quantum gravity.”

Dr. Kenji Tanaka, Lead Developer, CosmoForge Project

The development of standardized data formats and APIs is also important for facilitating collaboration. This allows researchers to easily exchange data and integrate different software tools. The adoption of open standards will accelerate the pace of discovery and enable new insights into the universe’s origins.

The implications of quadratic gravity extend far beyond the realm of theoretical physics. It’s a driving force for innovation in HPC, AI, and quantum computing, with potential benefits for a wide range of industries. As we continue to push the boundaries of computational cosmology, we can expect to see even more surprising and transformative discoveries.