Ryan Coogler is adapting the iconic Animorphs series for Disney+, reportedly using a production pipeline that integrates generative AI and neural rendering to achieve seamless biological transformations. The move signals Disney’s strategic pivot toward AI-augmented VFX to reduce production overhead while scaling high-fidelity episodic content for its global streaming platform.

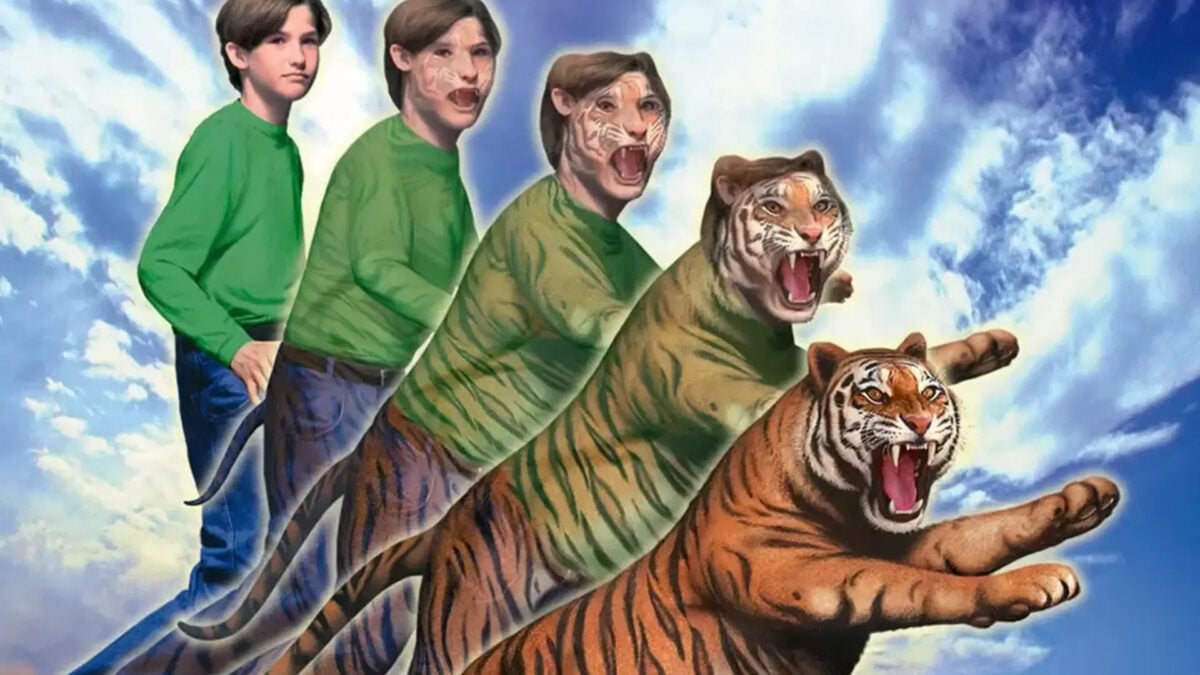

Let’s be clear: Animorphs is a VFX nightmare. For decades, the “morph” has been the Achilles’ heel of digital effects—either it’s a clunky cross-fade or a rubbery, uncanny-valley distortion that pulls the viewer right out of the narrative. But Coogler isn’t playing it safe. He’s stepping into the fray at a moment when the industry is shifting from traditional polygonal modeling to neural-based synthesis.

This isn’t just a casting win; it’s a compute win.

The Neural Rendering Leap: Solving the Morphing Problem

Traditional CGI relies on vertex manipulation: moving points in 3D space to change a shape. When you’re transitioning a human actor into a hawk, the topological difference is too vast for a clean linear interpolation, because the two meshes share neither vertex count nor connectivity. That is the gap current streaming-era VFX has struggled to close. Coogler’s production is reportedly leaning into NVIDIA Omniverse and Gaussian Splatting to bypass these limitations.
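To make the limitation concrete, here is a minimal sketch of linear vertex morphing in plain Python. The function name `lerp_vertices` and the toy `arm` and `wing` meshes are hypothetical, chosen only for illustration; nothing here reflects the production's actual tooling. The point is that the blend only works when both shapes have the same number of vertices in the same order.

```python
from typing import List, Tuple

Vec3 = Tuple[float, float, float]


def lerp_vertices(source: List[Vec3], target: List[Vec3], t: float) -> List[Vec3]:
    """Blend two meshes vertex by vertex; t=0 gives source, t=1 gives target."""
    if len(source) != len(target):
        # Linear morphing needs a one-to-one vertex correspondence.
        raise ValueError("meshes must share the same vertex count and ordering")
    if not 0.0 <= t <= 1.0:
        raise ValueError("t must be in [0, 1]")
    return [
        tuple(a + (b - a) * t for a, b in zip(v_src, v_dst))
        for v_src, v_dst in zip(source, target)
    ]


# Hypothetical toy data: a triangle "arm" and a stretched triangle "wing".
arm = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (0.0, 1.0, 0.0)]
wing = [(0.0, 0.0, 0.0), (3.0, 0.0, 0.0), (0.0, 0.5, 0.0)]

halfway = lerp_vertices(arm, wing, 0.5)
print(halfway[1])  # (2.0, 0.0, 0.0)
```

A human body and a hawk do not come with matching vertex lists, so in practice an artist has to build that correspondence by hand, and every in-between frame is still just a straight-line slide between the two poses.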

Unlike traditional meshes, Gaussian Splatting represents a complex 3D scene as a collection of 3D Gaussians. Using it alongside related neural scene representations such as Neural Radiance Fields (NeRFs), the production team can essentially “train” a model on the actor’s likeness and the target animal’s geometry, creating a latent space where the transition is a mathematical glide rather than a forced stretch of digital skin. This eliminates the “melting” effect common in early-2000s morphing tech.
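One common way to get that kind of “glide” between two points in a latent space is spherical linear interpolation (slerp). The sketch below is illustrative only: the article does not say which interpolation scheme the production uses, the latent vectors `z_human` and `z_hawk` are hypothetical two-dimensional stand-ins, and the decoder that would turn each intermediate vector into an image is assumed and not shown.

```python
import math
from typing import List


def slerp(z0: List[float], z1: List[float], t: float) -> List[float]:
    """Spherical linear interpolation between two latent vectors."""
    if len(z0) != len(z1):
        raise ValueError("latent vectors must have the same dimension")
    norm0 = math.sqrt(sum(a * a for a in z0))
    norm1 = math.sqrt(sum(b * b for b in z1))
    if norm0 == 0.0 or norm1 == 0.0:
        raise ValueError("latent vectors must be non-zero")
    # Angle between the two vectors, clamped against rounding error.
    cos_omega = sum(a * b for a, b in zip(z0, z1)) / (norm0 * norm1)
    omega = math.acos(max(-1.0, min(1.0, cos_omega)))
    sin_omega = math.sin(omega)
    if sin_omega < 1e-8:
        # Nearly parallel or anti-parallel: fall back to linear interpolation.
        return [a + (b - a) * t for a, b in zip(z0, z1)]
    w0 = math.sin((1.0 - t) * omega) / sin_omega
    w1 = math.sin(t * omega) / sin_omega
    return [w0 * a + w1 * b for a, b in zip(z0, z1)]


# Hypothetical latent codes for the two endpoints of a morph.
z_human = [1.0, 0.0]
z_hawk = [0.0, 1.0]

mid = slerp(z_human, z_hawk, 0.5)
# Ten evenly spaced steps along the arc, one per in-between frame.
frames = [slerp(z_human, z_hawk, i / 9) for i in range(10)]
```

For unit-length endpoints like these, every intermediate vector stays on the unit sphere, which is why slerp is often preferred over a straight line when a model was trained on latents of roughly constant norm.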

We are seeing a fundamental shift in how we define “rendering.” We are moving away from the offline, mesh-based render pipelines of the past and toward a world where the image is inferred by a neural network from learned spatial data. For a show like Animorphs, this means the transformations can be generated in near real time, allowing Coogler to iterate on visual beats without waiting weeks for a render farm to churn through a few seconds of footage.

“The transition from explicit geometry to implicit neural representations is the ‘Moore’s Law’ moment for VFX. We are no longer sculpting pixels; we are directing the weights of a model to hallucinate a biologically plausible transition.” — Marcus Thorne, Lead Technical Director at a premier LA-based VFX house.

Production Pipelines in the Age of Generative Latency

The business logic here is as critical as the art. Disney is fighting a war of attrition against Netflix and Apple TV+, and the primary bottleneck is the cost of high-end VFX. By integrating AI-driven “in-betweening,” the production can automate the tedious process of frame-by-frame cleanup. This reduces the reliance on massive armies of junior artists and shifts the workload toward a few highly skilled “AI Orchestrators.”

However, this efficiency creates a new kind of technical debt: the “Generative Latency” problem. While the AI can suggest a morph, the fine-tuning needed to ensure anatomical correctness (avoiding the dreaded “six-legged dog” glitch) requires a hybrid pipeline. The team is likely using a combination of Unreal Engine 5’s Nanite for environment geometry and a proprietary AI layer for the biological shifts.

The 30-Second Verdict: Tech vs. Tradition

- The Win: Neural rendering removes the “uncanny valley” of morphing.

- The Risk: Over-reliance on generative fills can lead to visual “smearing” in high-motion scenes.

- The Market Play: Disney is using Animorphs as a sandbox for AI-integrated workflows to slash future budgets.

The Disney+ Infrastructure: Delivering High-Fidelity Assets at Scale

Shipping these visuals is the final hurdle. Neural-rendered content often contains high-frequency detail that can choke traditional H.264 or HEVC encoders, leading to blocky artifacts during complex transformations. To combat this, Disney is likely optimizing for the AV1 codec, which offers superior compression efficiency for the complex gradients found in AI-generated imagery.

The real battle, however, is happening at the edge. For the viewer to experience these morphs without stuttering, the heavy lifting is shifted to client-side silicon. Whether it’s an Apple M4 chip or a Snapdragon X Elite, the device’s hardware-accelerated video decoder (a dedicated media block, distinct from the NPU, or Neural Processing Unit, which targets machine-learning inference) is what allows for the seamless decoding of these high-bitrate streams. We are seeing a tightening lock-in between content complexity and hardware capabilities.

| Feature | Traditional CGI Pipeline | AI-Neural Pipeline (2026) |
|---|---|---|
| Geometry | Polygonal Meshes | Gaussian Splats / NeRFs |
| Interpolation | Linear Vertex Morphing | Latent Space Manifold Navigation |
| Render Time | Hours per Frame (Cloud Farm) | Near Real-Time (Local GPU/NPU) |
| Artifacting | Texture Stretching | Neural Hallucinations/Smearing |

Ecosystem Bridging: The Labor and Logic War

This shift doesn’t happen in a vacuum. The move toward AI-driven production is the catalyst for a broader conflict within the creative community. By automating the “grunt work” of VFX, Disney is effectively rewriting the job description of the digital artist. This mirrors the transition we saw in the computer vision community, where manual feature engineering was replaced by deep learning.

The use of these tools also raises significant data integrity questions. To train the morphing models, Disney needs massive datasets of animal movement and human kinetics. The provenance of this training data, and whether it’s sourced from open-source repositories or proprietary archives, will likely become a focal point for future copyright disputes in the entertainment sector.

Ryan Coogler isn’t just making a show about kids who turn into animals. He’s presiding over a case study in how Big Tech absorbs the creative process. If Animorphs succeeds, the “manual” VFX era is officially dead. We are entering the age of the synthetic image, where the only limit is the size of your compute cluster and the precision of your prompt.

The Takeaway

For the tech-savvy viewer, the real show isn’t the plot—it’s the pipeline. Watch the transformations closely. If the edges remain sharp and the volume stays consistent, Disney has successfully weaponized neural rendering. If it looks like a blurry smudge, the AI hype cycle has hit a wall. Either way, the industry will never go back to polygons.