Concept artists Kris Anka and Jesús Alonso Iglesias have unveiled the early design phases for Rocky in the Project Hail Mary film, leveraging advanced procedural modeling and biological simulation to translate Andy Weir’s non-humanoid alien into a believable, digitally rendered entity for global audiences.

For the uninitiated, bringing a character like Rocky to the screen isn’t a simple matter of “drawing a rock with arms.” It is a high-stakes exercise in biological plausibility and computational geometry. In the source material, Rocky is an Eridian, a creature that evolved in a high-pressure, ammonia-based atmosphere without sight, relying entirely on sonar. Translating that into a visual medium requires more than just concept art; it requires a fundamental rethink of how we handle character topology and motion synthesis in a 3D pipeline.

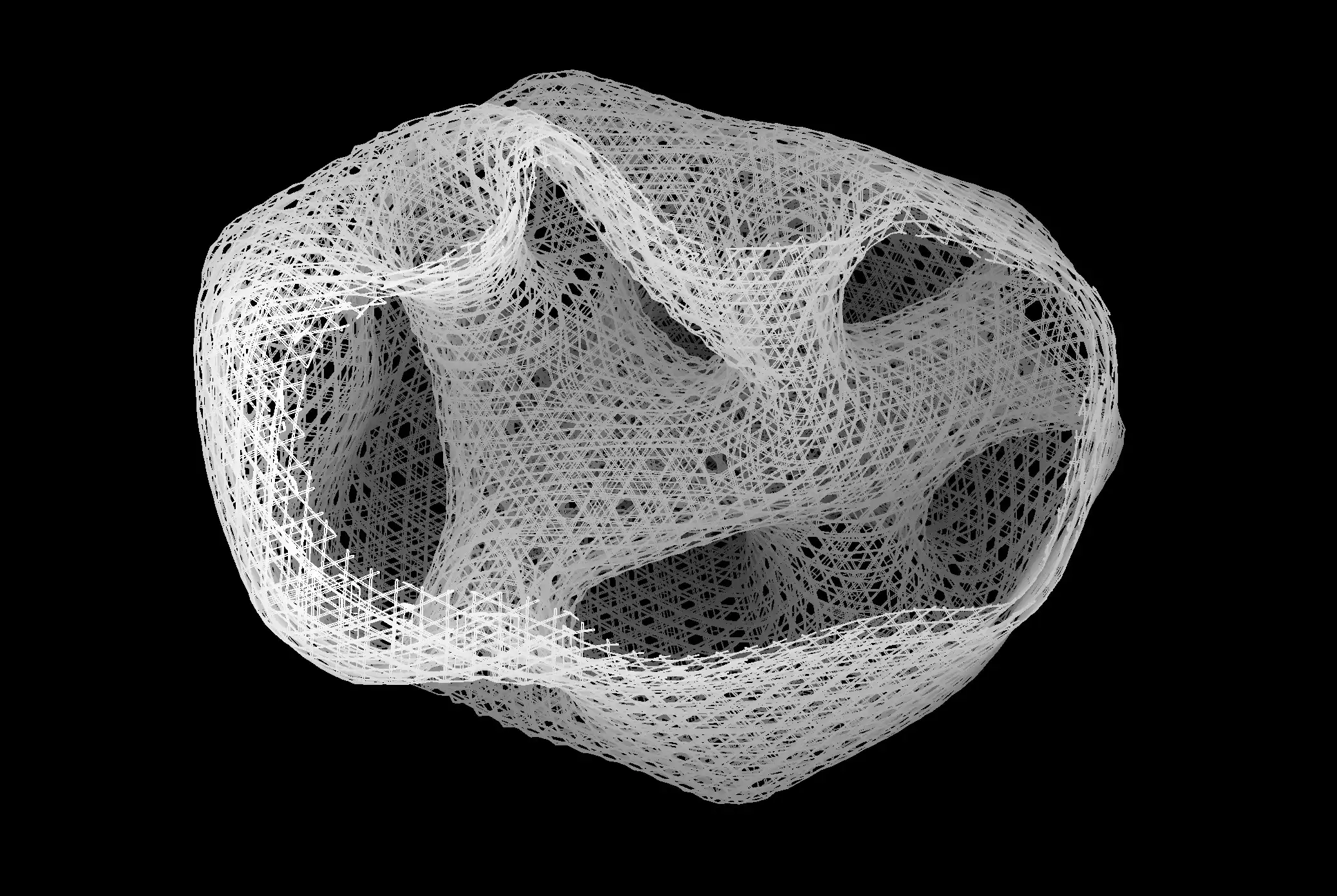

The reveal of the early stages shows a rigorous iterative process. We aren’t seeing a finished asset, but the skeletal logic of a creature that must feel heavy and alien, yet emotionally resonant. This is where the “geek” meets the “chic.” The design isn’t just aesthetic; it’s an architectural blueprint for the VFX team to determine how the character’s “skin” (which is more like a mineral crust) interacts with light and physics.

The Computational Challenge of Non-Humanoid Kinematics

Most CGI characters are built on a “humanoid” rig—a digital skeleton that mimics human joints. Rocky throws that playbook out the window. To make Rocky move convincingly, the production likely employs complex Inverse Kinematics (IK) systems that don’t rely on human proportions. Instead of standard skeletal animation, we are likely seeing the implementation of procedural animation, where the character’s movement is dictated by physics rules rather than pre-set keyframes.
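To make the IK idea concrete, here is a minimal sketch of FABRIK (Forward And Backward Reaching Inverse Kinematics), a well-known solver that works on a chain of any length and proportion. Nothing here is confirmed as part of the film's pipeline; the function names, the 2D simplification, and the default tolerance are illustrative assumptions.

```python
import math

def _step(anchor, toward, length):
    """Return the point `length` away from `anchor`, heading toward `toward`."""
    d = math.dist(anchor, toward)
    if d == 0.0:  # degenerate case: fall back to an arbitrary direction
        return (anchor[0] + length, anchor[1])
    return (anchor[0] + (toward[0] - anchor[0]) * length / d,
            anchor[1] + (toward[1] - anchor[1]) * length / d)

def fabrik(joints, target, tolerance=1e-4, max_iterations=50):
    """Solve a 2D joint chain (root first) so its tip reaches `target`.

    Segment lengths are taken from the input pose and preserved.
    Returns a new list of (x, y) joint positions.
    """
    pts = [tuple(p) for p in joints]
    target = tuple(target)
    root = pts[0]
    lengths = [math.dist(pts[i], pts[i + 1]) for i in range(len(pts) - 1)]

    # Target out of reach: stretch the limb in a straight line toward it.
    if math.dist(root, target) >= sum(lengths):
        for i, length in enumerate(lengths):
            pts[i + 1] = _step(pts[i], target, length)
        return pts

    for _ in range(max_iterations):
        # Backward pass: pin the tip to the target, then walk toward the root.
        pts[-1] = target
        for i in range(len(pts) - 2, -1, -1):
            pts[i] = _step(pts[i + 1], pts[i], lengths[i])
        # Forward pass: pin the root back in place, then walk toward the tip.
        pts[0] = root
        for i in range(len(pts) - 1):
            pts[i + 1] = _step(pts[i], pts[i + 1], lengths[i])
        if math.dist(pts[-1], target) < tolerance:
            break
    return pts

# Hypothetical three-segment limb reaching for a foothold.
limb = [(0.0, 0.0), (1.0, 0.0), (2.0, 0.0), (3.0, 0.0)]
solved = fabrik(limb, target=(1.5, 1.5))
```

The appeal for a creature like Rocky is that the solver never assumes a shoulder, elbow, or wrist: it only needs joint positions and segment lengths, so the same routine could in principle drive each limb of a radially symmetric body.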

When you have a creature with a non-standard center of gravity, every step is a physics calculation. If the timing of a footfall is off by even a few milliseconds, the audience perceives “floatiness,” breaking the immersion. This is where real-time physics solvers, such as those in recent versions of Unreal Engine, would come into play, allowing artists to simulate weight and friction in real time rather than waiting for overnight render cycles.
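One simple version of that per-step calculation is a static stability check: project the center of mass onto the ground and ask whether it falls inside the polygon formed by the feet currently planted. The sketch below assumes flat ground and 2D foot positions; the function names and the five-foot layout are hypothetical, and a production solver would also account for momentum and friction.

```python
def _cross(o, a, b):
    """Z component of (a - o) x (b - o); positive means a left turn."""
    return (a[0] - o[0]) * (b[1] - o[1]) - (a[1] - o[1]) * (b[0] - o[0])

def convex_hull(points):
    """Andrew's monotone chain; returns hull vertices in counter-clockwise order."""
    pts = sorted(set(points))
    if len(pts) <= 2:
        return pts
    lower, upper = [], []
    for p in pts:
        while len(lower) >= 2 and _cross(lower[-2], lower[-1], p) <= 0:
            lower.pop()
        lower.append(p)
    for p in reversed(pts):
        while len(upper) >= 2 and _cross(upper[-2], upper[-1], p) <= 0:
            upper.pop()
        upper.append(p)
    return lower[:-1] + upper[:-1]

def is_statically_stable(center_of_mass_xy, planted_feet_xy):
    """True if the projected center of mass lies inside the support polygon."""
    hull = convex_hull(planted_feet_xy)
    if len(hull) < 3:
        return False  # one or two contact points cannot form a support polygon
    return all(
        _cross(hull[i], hull[(i + 1) % len(hull)], center_of_mass_xy) >= 0
        for i in range(len(hull))
    )

# Hypothetical five-footed stance arranged roughly as a pentagon.
feet = [(1.0, 0.0), (0.31, 0.95), (-0.81, 0.59), (-0.81, -0.59), (0.31, -0.95)]
all_planted = is_statically_stable((0.0, 0.0), feet)
# Lifting the two rear feet shifts the support polygon forward of the body.
rear_lifted = is_statically_stable((0.0, 0.0), [feet[0], feet[1], feet[4]])
```

A procedural gait system could run a test like this before lifting each foot and either shift the body or delay the step when the answer is no, which is one way to keep the creature reading as heavy rather than floaty.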

It’s a brutal compute budget. Every fold of Rocky’s mineral-like skin requires high-fidelity vertex displacement to avoid the “plastic” look that plagued early 2000s CGI.

The 30-Second Technical Verdict

- The Rig: Transition from standard humanoid skeletons to procedural, physics-based IK systems.

- The Texture: Use of PBR (Physically Based Rendering) to simulate non-carbon-based biological surfaces.

- The Goal: Avoiding the “Uncanny Valley” by leaning into biological alienness rather than mimicking human emotion through facial muscles.

Simulating an Ammonia-Based Biology via PBR

The concept art by Anka and Iglesias hints at a surface that is neither purely organic nor purely inorganic. In technical terms, this is a nightmare for shading. To achieve this, the VFX pipeline must utilize advanced PBR workflows. PBR ensures that materials react to light consistently regardless of the lighting environment, which is critical for a film that moves between the stark vacuum of space and the hazy atmosphere of an alien ship.
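For readers who want to see what "reacting to light consistently" means in practice, here is a minimal sketch of a Cook-Torrance specular term with a GGX distribution, Schlick Fresnel, and Smith geometry, which is one common formulation of a PBR surface response. It is a single-channel simplification for illustration, not the film's shader; the parameter names and the roughness clamp are assumptions.

```python
import math

def _dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def _normalize(v):
    n = math.sqrt(_dot(v, v))
    return tuple(x / n for x in v)

def cook_torrance(normal, view, light, albedo, roughness, metallic):
    """Single-channel reflected radiance for unit incoming light.

    `albedo`, `roughness`, and `metallic` are scalars in [0, 1].
    Vectors point away from the surface and need not be normalized.
    """
    n, v, l = _normalize(normal), _normalize(view), _normalize(light)
    n_dot_l, n_dot_v = _dot(n, l), _dot(n, v)
    if n_dot_l <= 0.0 or n_dot_v <= 0.0:
        return 0.0  # light or viewer is behind the surface

    roughness = max(roughness, 0.03)  # assumed clamp to avoid division by zero
    h = _normalize(tuple(a + b for a, b in zip(v, l)))  # half vector
    n_dot_h, v_dot_h = _dot(n, h), _dot(v, h)

    # GGX normal distribution: how many microfacets face the half vector.
    alpha2 = (roughness * roughness) ** 2
    d = alpha2 / (math.pi * (n_dot_h * n_dot_h * (alpha2 - 1.0) + 1.0) ** 2)

    # Schlick Fresnel: reflectance rises toward grazing angles.
    f0 = 0.04 * (1.0 - metallic) + albedo * metallic
    f = f0 + (1.0 - f0) * (1.0 - v_dot_h) ** 5

    # Smith geometry term (Schlick-GGX form): microfacet self-shadowing.
    k = (roughness + 1.0) ** 2 / 8.0
    g = (n_dot_l / (n_dot_l * (1.0 - k) + k)) * (n_dot_v / (n_dot_v * (1.0 - k) + k))

    specular = d * f * g / (4.0 * n_dot_l * n_dot_v)
    diffuse = (1.0 - f) * (1.0 - metallic) * albedo / math.pi
    return (diffuse + specular) * n_dot_l

# A polished mineral facet versus a rough, crusty one, lit head-on.
up = (0.0, 0.0, 1.0)
polished = cook_torrance(up, up, up, albedo=0.6, roughness=0.2, metallic=0.0)
crusty = cook_torrance(up, up, up, albedo=0.6, roughness=0.8, metallic=0.0)
```

Because the inputs are physical quantities rather than hand-painted highlights, the same material description holds up whether the surface is lit by a hard key light in vacuum or a diffuse glow inside a ship.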

To get the “rocky” texture right, the team is likely utilizing NVIDIA Omniverse or similar USD-based (Universal Scene Description) workflows. This allows the concept artists’ vision to be translated directly into 3D assets without losing the nuance of the original sketches. They aren’t just mapping a texture onto a sphere; they are simulating subsurface scattering (how light penetrates the surface of a material before bouncing back out) to give Rocky a sense of depth and “life.”
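Full subsurface scattering is expensive, but the core intuition can be sketched with the Beer-Lambert law, which says light passing through a material is attenuated exponentially with the distance travelled. The snippet below is a deliberately crude stand-in for a real scattering model; the absorption coefficients and thickness values are hypothetical numbers chosen only to show the behavior.

```python
import math

def transmittance(thickness, absorption_rgb):
    """Fraction of light surviving a straight path through the material,
    per color channel, using Beer-Lambert: T = exp(-sigma * thickness)."""
    return tuple(math.exp(-sigma * thickness) for sigma in absorption_rgb)

def backlit_color(light_rgb, thickness, absorption_rgb):
    """Tint of light that has passed through `thickness` units of material."""
    t = transmittance(thickness, absorption_rgb)
    return tuple(c * k for c, k in zip(light_rgb, t))

# Assumed material: absorbs blue fastest, so thin edges glow warm.
mineral_absorption = (0.5, 1.5, 4.0)  # hypothetical per-channel coefficients
thin_ridge = backlit_color((1.0, 1.0, 1.0), 0.2, mineral_absorption)
thick_plate = backlit_color((1.0, 1.0, 1.0), 2.0, mineral_absorption)
```

Thin ridges let noticeably more light through than thick plates, and the surviving light shifts in color, which is the visual cue that separates a translucent living crust from painted plastic.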

“The shift toward non-humanoid character design is forcing a revolution in how we approach motion capture. We can no longer rely on a human actor in a suit; we are moving toward ‘AI-driven motion synthesis’ where the character’s anatomy defines its own movement possibilities.” — Dr. Aris Thorne, Lead Technical Director at SynthVFX

This is a pivot from the “Andy Serkis model” of performance capture. While a human actor may provide the emotional core, the physical translation is now being handled by ML models trained on non-human movement patterns found in nature—think cephalopods or crustaceans—to ensure Rocky doesn’t just look like a man in a rock suit.

Bridging the Sensory Gap: Visualizing the Invisible

The most fascinating technical hurdle isn’t how Rocky looks, but how he “sees.” Since Rocky uses sonar, the film has a unique opportunity to integrate volumetric rendering to visualize sound waves. This isn’t just a stylistic choice; it’s a data visualization problem.

By using volumetric fog and particle systems, the filmmakers can create a visual representation of Rocky’s echolocation. This requires massive GPU overhead, likely leveraging H100 or B200 clusters to calculate the bounce-back of “sound particles” in a 3D environment in real time. It’s essentially a cinematic version of LiDAR scanning, which we see in everything from autonomous vehicles to Apple’s ARKit framework.
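Stripped of the GPU clusters, the underlying calculation is a time-of-flight problem: cast a ray, find the nearest surface, and convert the round-trip distance into an echo delay. The sketch below does this against spheres only. The function names are illustrative, and the default speed of sound (343 m/s, roughly dry air at room temperature) is a placeholder assumption, since the value inside an Eridian atmosphere is not something the source specifies.

```python
import math

def ray_sphere_distance(origin, direction, center, radius):
    """Distance along a unit-length ray to the nearest sphere hit, or None."""
    oc = [o - c for o, c in zip(origin, center)]
    b = sum(d * e for d, e in zip(direction, oc))
    c = sum(e * e for e in oc) - radius * radius
    disc = b * b - c
    if disc < 0:
        return None  # ray misses the sphere entirely
    t = -b - math.sqrt(disc)
    return t if t > 0 else None  # ignore hits behind or around the emitter

def sonar_scan(origin, directions, spheres, speed_of_sound=343.0):
    """Return the echo delay in seconds for each ray, or None if nothing is hit.

    `spheres` is a list of (center, radius) pairs; `directions` are unit vectors.
    """
    delays = []
    for direction in directions:
        hits = [ray_sphere_distance(origin, direction, c, r) for c, r in spheres]
        hits = [t for t in hits if t is not None]
        # Sound travels to the nearest surface and back, hence the factor of 2.
        delays.append(2.0 * min(hits) / speed_of_sound if hits else None)
    return delays

# Hypothetical scene: one obstacle 10 m ahead, nothing overhead.
obstacles = [((10.0, 0.0, 0.0), 1.0)]
pings = sonar_scan((0.0, 0.0, 0.0), [(1.0, 0.0, 0.0), (0.0, 1.0, 0.0)], obstacles)
```

A renderer could map each delay to brightness or color, so nearby surfaces light up first and distant ones fade in later, giving the audience a visual analogue of a sonar pulse sweeping the room.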

| Feature | Traditional CGI Approach | The ‘Rocky’ Pipeline (2026) |
|---|---|---|
| Animation | Keyframe / MoCap | Procedural / ML Motion Synthesis |
| Surface Rendering | Diffuse/Specular Maps | Advanced PBR with Subsurface Scattering |
| Movement Logic | Humanoid Rigging | Procedural, Physics-Based Inverse Kinematics |
| Environment Interaction | Static Colliders | Dynamic Volumetric Physics |

The Macro-Market Shift: Beyond the Movie

This isn’t just about a movie. The tools being developed to bring Rocky to life—specifically the non-humanoid motion synthesis and volumetric sensory visualization—have immediate applications in robotics and XR (Extended Reality). When we figure out how to make a five-legged, rock-skinned alien move naturally, we are simultaneously solving the problem of how to animate multi-legged industrial robots in a simulation before they are built in the real world.

We are seeing a convergence where the entertainment industry’s need for “believable aliens” is driving the R&D for the next generation of IEEE-standardized robotics controllers. The “Project Hail Mary” pipeline is, in effect, a stress test for the future of digital biology.

The early stages of Rocky’s development suggest that we are moving past the era of “digital puppets.” We are entering the era of digital organisms: entities defined by their own internal physics and biological constraints, rendered with a level of precision that makes the impossible feel inevitable.