Sony Pictures is developing a live-action Metal Gear Solid film directed by Zach Lipovsky and Adam B. Stein. This strategic move leverages Sony’s vertical integration of gaming IP and cinematic production to capitalize on the “transmedia” trend, utilizing high-fidelity virtual production pipelines to translate tactical stealth gameplay into cinema.

Let’s be clear: the “video game movie” is no longer a cursed genre. We have moved past the era of clumsy adaptations and entered the age of the ecosystem play. For Sony, Metal Gear Solid isn’t just a movie project; it is a high-stakes exercise in brand synchronization. By bridging the gap between PlayStation Productions and Sony Pictures, the company is building a closed-loop content flywheel. When the film hits screens, the goal isn’t just box office revenue. It is also an immediate spike in digital downloads of the Metal Gear catalog and the reinforcement of the PlayStation hardware moat.

It is a ruthless, efficient piece of corporate architecture.

The Virtual Production Pipeline: Beyond the Green Screen

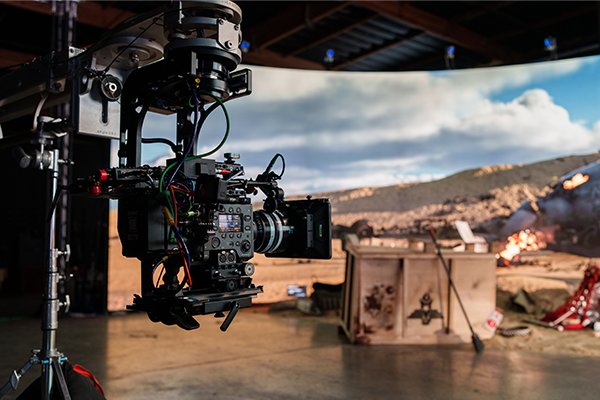

From a technical standpoint, the real story here isn’t the casting or the plot; it’s the pipeline. Modern Sony productions have shifted away from the traditional habit of “fixing it in post” toward Virtual Production (VP). This approach relies on massive LED volumes driven by Unreal Engine 5 (UE5), which let directors see near-final-pixel environments in real time during the shoot.
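To make the LED volume idea more concrete, here is a minimal sketch of the geometry involved: how much of a flat wall the camera actually sees at a given distance. It assumes an idealized pinhole camera pointed squarely at a flat wall. The function name `wall_footprint_m` and the example numbers are illustrative assumptions, not taken from any Sony or Unreal Engine tooling.

```python
def wall_footprint_m(sensor_width_mm, sensor_height_mm, focal_length_mm, wall_distance_m):
    """Return (width, height) in meters of the wall area visible to the camera.

    Pinhole model with the camera facing the wall head-on: by similar
    triangles, footprint = distance * sensor_size / focal_length.
    """
    if focal_length_mm <= 0 or wall_distance_m <= 0:
        raise ValueError("focal length and wall distance must be positive")
    width = wall_distance_m * sensor_width_mm / focal_length_mm
    height = wall_distance_m * sensor_height_mm / focal_length_mm
    return width, height


if __name__ == "__main__":
    # Hypothetical setup: full-frame sensor (36 x 24 mm), 36 mm lens, wall 4 m away.
    width, height = wall_footprint_m(36.0, 24.0, 36.0, 4.0)
    print(f"Camera sees roughly {width:.2f} m x {height:.2f} m of the wall")
```

In a typical LED-volume setup, only this camera-visible region has to hold up at full quality from the camera’s point of view; the rest of the wall mostly contributes lighting and reflections. A real stage would also have to account for curved walls, lens distortion, and off-axis camera angles, which this sketch ignores.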

For a franchise like Metal Gear, which relies heavily on atmospheric tension and claustrophobic environments, UE5’s Lumen (real-time global illumination) and Nanite (virtualized micro-polygon geometry) are critical. Instead of actors staring at a neon-green wall and guessing where a guard is standing, they are immersed in a photorealistic recreation of Shadow Moses or Outer Haven. This eliminates the “uncanny valley” of lighting that plagued earlier game adaptations.

The technical shift is summarized here:

| Feature | Traditional CGI Pipeline | Virtual Production (UE5) |
|---|---|---|
| Lighting | Rendered offline in post-production | Real-time global illumination (Lumen) |
| Geometry | Limited by polygon budgets/LODs | Very high geometric detail via Nanite |
| Actor Feedback | Imaginary/Green Screen | Immersive LED Volume |
| Iteration | Weeks for a final render | Near-instant “What You See Is What You Get” |

This isn’t just about aesthetics. It’s about data efficiency. By using the same engine that powers modern AAA gaming, Sony can theoretically port assets directly from the game’s development environment into the film’s pre-visualization phase, slashing the time between concept art and final frame.

The Transmedia Moat and the Content War

We are currently witnessing a brutal war for “Attention Equity.” Microsoft has been aggressively acquiring publishers like Activision Blizzard to secure IP, but Sony is playing a different game. Sony is focusing on Vertical Integration. They don’t just want to own the game; they want to own the movie, the series, and the merchandise, all while keeping the user locked into the PlayStation ecosystem.

By turning Metal Gear into a cinematic universe, Sony creates a recursive loop. The movie attracts a non-gaming audience, who then buy a console to play the games, who then subscribe to PlayStation Plus to access the legacy titles. This is the “Apple approach” applied to entertainment: creating a walled garden where the IP is the primary incentive for entry.

“The convergence of real-time rendering and cinematic storytelling is fundamentally changing the unit economics of film production. We are no longer talking about ‘game-like’ movies, but rather the total unification of the digital asset pipeline.”

The technical hurdle, however, remains the “Translation Gap.” How do you translate Metal Gear’s core loop (stealth, cardboard boxes, and long-winded philosophical monologues about nuclear deterrence) into a narrative that doesn’t feel like a series of cutscenes? Lipovsky and Stein will need to lean into the “Tactical Espionage Action” aesthetic without letting the plot succumb to the exposition-heavy nature of Hideo Kojima’s original scripts.

The 30-Second Verdict: Why This Matters for the Industry

- Tech Synergy: The use of UE5 for film production proves that game engines are becoming the universal OS for all visual media.

- Market Dominance: Sony is leveraging “transmedia” to protect its hardware market share against the cloud-gaming push from Microsoft and Google.

- Production Efficiency: Moving toward Virtual Production reduces the reliance on costly, time-consuming post-production cycles.

The Latency of Adaptation: Risks and Realities

Despite the technical prowess, there is a significant risk: Over-production. When the tools (like UE5) become too powerful, there is a tendency to prioritize visual fidelity over narrative coherence. We’ve seen this in the “CGI sludge” of recent blockbusters where the scale of the environment dwarfs the human element of the story.

The Metal Gear IP is also notoriously complex. Translating the “meta” elements of the series, where the game often breaks the fourth wall to speak directly to the player, into a film requires more than a high-end NPU or a massive render farm. It requires a fundamental understanding of ludonarrative harmony. If the film ignores the “game-ness” of the source material, it loses the core fanbase; if it leans too hard into it, it alienates the general public.

For those interested in the underlying tech, the shift toward IEEE standards in real-time data exchange and the evolution of OpenXR are what make these hybrid productions possible. The ability to sync a camera’s physical position in a room with a virtual camera’s position in a digital world (Camera Tracking) is the unsung hero of this production.
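As a rough illustration of what camera tracking has to compute, the sketch below maps a tracked camera pose from stage coordinates into virtual-world coordinates. It is a simplified assumption, not a description of any real tracking system: it handles only position plus yaw (a production rig would use full six-degree-of-freedom transforms and lens data), it assumes a counter-clockwise rotation about the vertical axis, and the names `Pose`, `StageCalibration`, and `stage_to_virtual` are hypothetical.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class Pose:
    x: float
    y: float
    z: float        # vertical axis
    yaw_deg: float  # heading around the vertical axis


@dataclass(frozen=True)
class StageCalibration:
    """Hypothetical calibration: where the stage origin sits in the virtual set."""
    origin_x: float
    origin_y: float
    origin_z: float
    yaw_offset_deg: float    # rotation of the stage relative to the virtual world
    units_per_meter: float   # e.g. 100.0 if the virtual world is in centimeters


def stage_to_virtual(tracked: Pose, cal: StageCalibration) -> Pose:
    """Rotate, scale, then translate a tracked stage pose into virtual space."""
    theta = math.radians(cal.yaw_offset_deg)
    cos_t, sin_t = math.cos(theta), math.sin(theta)
    # Rotate the horizontal position about the vertical axis.
    rx = tracked.x * cos_t - tracked.y * sin_t
    ry = tracked.x * sin_t + tracked.y * cos_t
    s = cal.units_per_meter
    return Pose(
        x=cal.origin_x + s * rx,
        y=cal.origin_y + s * ry,
        z=cal.origin_z + s * tracked.z,
        yaw_deg=(tracked.yaw_deg + cal.yaw_offset_deg) % 360.0,
    )


if __name__ == "__main__":
    # Made-up numbers: camera 1 m from the stage origin at 1.5 m height.
    cal = StageCalibration(1000.0, 2000.0, 0.0, 90.0, 100.0)
    print(stage_to_virtual(Pose(1.0, 0.0, 1.5, 0.0), cal))
```

The virtual camera is driven by this converted pose on every frame, so the perspective shown on the wall shifts in step with the physical camera. Any error in the calibration shows up on screen as the background sliding against the foreground.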

As we move further into 2026, the line between “playing a game” and “watching a movie” is blurring. Sony isn’t just making a movie; they are prototyping the future of interactive entertainment. Whether Metal Gear Solid succeeds as a film is almost secondary to the fact that Sony has perfected the machine that makes it.

Keep an eye on the technical breakdowns of Virtual Production as this movie progresses. The real bombshell isn’t the announcement; it’s the architecture behind the curtain.