The “Mangofruitloops” phenomenon on X (formerly Twitter) represents a sophisticated evolution in synthetic content generation, where AI-driven personas utilize polyglot linguistic blending—mixing French and Spanish—to bypass automated spam filters and manipulate engagement algorithms. This tactical shift in social engineering targets fragmented demographic clusters to maximize reach while avoiding the pattern-recognition triggers of standard moderation LLMs.

To the casual scroller, a profile like Mangofruitloops looks like a glitchy, multi-lingual bot posting a disjointed mix of aesthetic allure and random health tips. To a technical analyst, it is a textbook example of “algorithmic camouflage.” By weaving together high-engagement keywords (seduction, beauty) with low-risk, utility-based content (sunburn prevention), these accounts produce a high noise-to-signal ratio that confuses the classifier models used by platform moderators.

This isn’t just a few rogue bots. This is a systemic exploit of how modern Large Language Models (LLMs) handle tokenization across different languages. When a bot switches from French to Spanish mid-stream, it disrupts the semantic continuity that most spam detectors rely on to flag “repetitive” or “templated” content. It is a deliberate attempt to raise the perplexity of the content, that is, to make it less predictable to a language model, so that it appears more “human” and erratic to the machine while remaining just coherent enough to trigger a click from a human user.
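Perplexity measures how surprised a language model is by a sequence of tokens. As a minimal sketch, assuming a toy unigram model with add-one smoothing (real detectors use neural language models, and the tiny corpus and sample sentences here are invented for illustration), a mid-sentence switch from French to Spanish scores as less predictable than a monolingual sentence:

```python
import math
from collections import Counter


def train_unigram(corpus_tokens):
    """Return a smoothed unigram probability function for a token list."""
    counts = Counter(corpus_tokens)
    total = sum(counts.values())
    vocab_size = len(counts) + 1  # one extra slot reserves mass for unseen tokens

    def prob(token):
        # Add-one smoothing: unseen tokens get a small non-zero probability.
        return (counts[token] + 1) / (total + vocab_size)

    return prob


def perplexity(prob, tokens):
    """Exponential of the average negative log-probability per token."""
    log_sum = sum(math.log(prob(t)) for t in tokens)
    return math.exp(-log_sum / len(tokens))


# Hypothetical single-language training text (illustration only).
corpus = "la séduction est un art la beauté est un art".split()
prob = train_unigram(corpus)

mono = "la beauté est un art".split()
mixed = "la beauté est quemaduras de verano".split()

print(perplexity(prob, mono))
print(perplexity(prob, mixed))
```

The mixed sentence scores higher because its Spanish tokens never appear in the French training text. A detector that treats low perplexity as a sign of templated or machine-written content would therefore see the blended post as less suspicious.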

The Mechanics of Polyglot Camouflage and Token Manipulation

At the core of this strategy is the exploitation of the latent space within multi-lingual LLMs. Most moderation tools are trained on massive datasets where “spam” follows a predictable linguistic pattern—usually single-language, high-urgency, and link-heavy. Mangofruitloops breaks this pattern by employing a “semantic pivot.”

The transition from discussing “seduction” in French to “summer burns” in Spanish is not a mistake; it is a feature. By shifting the linguistic context, the bot forces the moderation API to re-evaluate the content’s intent multiple times within a single post. This may add processing overhead for the filter, and it can produce a “false negative” in which the system fails to categorize the post as spam because it doesn’t fit into a single, predefined bucket of prohibited content.
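One defensive response is to treat the pivot itself as a signal. The sketch below is a rough illustration, not a production language identifier: it assumes small hand-picked stopword sets (`FRENCH_HINTS` and `SPANISH_HINTS` are hypothetical) and labels each sentence by which set it overlaps more, then reports whether a single post contains more than one language.

```python
import re

# Hypothetical hint sets; a real system would use a trained language identifier.
FRENCH_HINTS = {"le", "les", "est", "et", "dans", "pour", "des", "une", "avec"}
SPANISH_HINTS = {"el", "los", "las", "es", "y", "para", "del", "una", "con"}


def guess_language(sentence):
    """Label a sentence 'fr', 'es', or 'unknown' by stopword overlap."""
    words = set(re.findall(r"[^\W\d_]+", sentence.lower()))
    fr_hits = len(words & FRENCH_HINTS)
    es_hits = len(words & SPANISH_HINTS)
    if fr_hits == es_hits:
        return "unknown"
    return "fr" if fr_hits > es_hits else "es"


def languages_in_post(text):
    """Split a post on sentence punctuation and label each sentence."""
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    return [guess_language(s) for s in sentences]


def has_language_switch(text):
    """True when at least two different known languages appear in one post."""
    known = {lang for lang in languages_in_post(text) if lang != "unknown"}
    return len(known) > 1


blended = ("La séduction est un art pour les audacieux. "
           "Medidas preventivas para el sol y las quemaduras.")
monolingual = "La séduction est un art. Elle est pour les audacieux."

print(languages_in_post(blended))
print(has_language_switch(blended), has_language_switch(monolingual))
```

A language switch alone proves nothing, since genuinely bilingual users do this constantly. It is more useful as one feature among several than as a verdict.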

The Technical Breakdown of the Exploit

- Linguistic Drift: Using cross-lingual embeddings to maintain a vague sense of “lifestyle” content while switching languages to evade keyword-based blacklists.

- Engagement Baiting: Leveraging high-weight tokens (e.g., “séduction,” “preventivas”) that trigger the recommendation engine’s “interest” graph.

- Pattern Fragmentation: Avoiding the use of identical strings across multiple accounts, instead using LLM-driven paraphrasing to ensure each post has a unique hash.
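The pattern-fragmentation point can be made concrete with a short sketch. It assumes a moderation layer that blocks on an exact SHA-256 digest of the post text, which is an illustrative assumption rather than a description of any platform's actual pipeline, and contrasts that with a simple word-overlap (Jaccard) score. The two sample posts are invented.

```python
import hashlib


def exact_hash(text):
    """Digest of the raw post text; any single-character change alters it."""
    return hashlib.sha256(text.encode("utf-8")).hexdigest()


def jaccard(text_a, text_b):
    """Share of distinct lowercased words the two posts have in common."""
    words_a = set(text_a.lower().split())
    words_b = set(text_b.lower().split())
    if not words_a and not words_b:
        return 1.0
    return len(words_a & words_b) / len(words_a | words_b)


post_a = "Five tips to prevent summer sunburn this weekend"
post_b = "Five tips for preventing summer sunburn this weekend"

print(exact_hash(post_a) == exact_hash(post_b))
print(jaccard(post_a, post_b))
```

The light paraphrase produces a completely different digest while still sharing most of its words. Heavier LLM paraphrasing would lower the overlap score too, which is why word overlap is only a first step beyond hash-blocking rather than a fix.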

This is a sophisticated game of cat-and-mouse. As X updates its moderation layers, the bot operators raise the sampling temperature of their generative models to introduce more randomness, further distancing the output from known spam signatures.

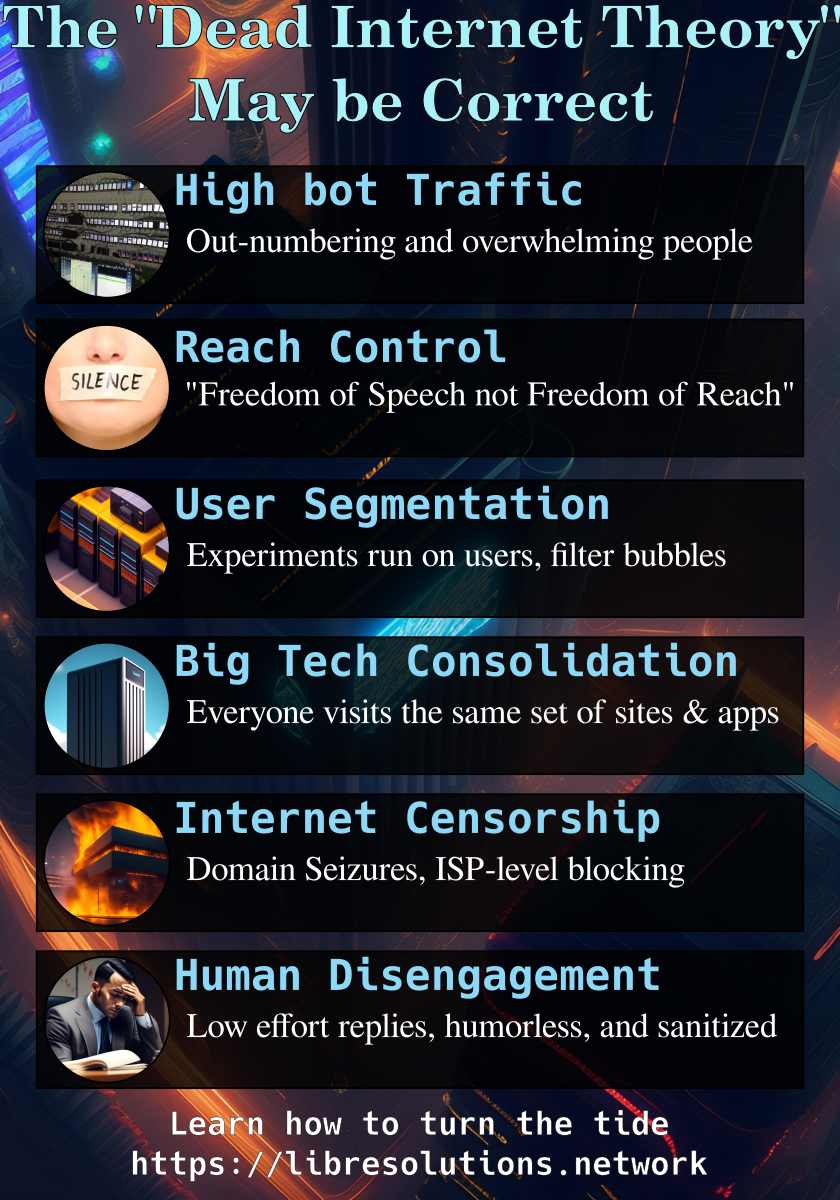

The “Dead Internet Theory” Becomes a Production Reality

We are witnessing the transition of the “Dead Internet Theory” from a fringe conspiracy to a measurable architectural reality. When synthetic personas like Mangofruitloops can successfully mimic human erraticism—including the tendency to post unrelated thoughts in different languages—the cost of trust on social platforms skyrockets.

This affects the broader tech war by accelerating the move toward “Proof of Personhood” (PoP) protocols. We are seeing a push toward integrating decentralized identity (DID) and biometric verification to ensure that the entity behind the keyboard is not a GPU cluster in a server farm. The battle is no longer about blocking “bots”; it is about defining what “human” looks like in a world of perfect generative mimicry.

“The current generation of botnets has moved beyond simple scripting. They are now utilizing RLHF (Reinforcement Learning from Human Feedback) loops to analyze which specific linguistic pivots lead to higher conversion rates, essentially A/B testing human psychology at scale.”

The implications for third-party developers and API users are severe. Data scraped from platforms like X is becoming increasingly polluted with this synthetic noise, leading to “model collapse” where future AI models are trained on the output of current bots, creating a feedback loop of linguistic degradation. If you are training a sentiment analysis model on 2026 Twitter data without rigorous filtering, you are essentially training your AI on the hallucinations of a botnet.

Comparing Synthetic Engagement Tactics

To understand why the Mangofruitloops approach is more effective than traditional botting, we have to look at the evolution of the “engagement stack.”

| Feature | Legacy Botnets (2020-2023) | Synthetic Personas (2024-2026) | Impact on Detection |
|---|---|---|---|
| Content Source | Hard-coded templates | Real-time LLM generation | Impossible to use static hash-blocking |
| Language | Single language/Auto-translate | Dynamic polyglot blending | Bypasses single-language classifiers |
| Behavior | High-frequency bursting | Human-mimetic pacing | Evades rate-limit triggers |
| Goal | Direct link clicks | Algorithmic “warming” & Trust building | Increases account longevity |

The Cybersecurity Risk: Beyond the Annoyance

While a post about sunburns might seem harmless, these accounts serve as “sleeper cells” for more malicious activity. The goal of the polyglot phase is to “warm up” the account—building a history of engagement and a level of perceived legitimacy. Once the account is trusted by the algorithm, the payload changes.

The shift is usually subtle. A profile that spent three months posting aesthetic French quotes and Spanish health tips will suddenly pivot to promoting a “revolutionary” DeFi project or a phishing link disguised as a security update. Because the account has a high “trust score” from the platform’s internal metrics, the malicious link is less likely to be flagged immediately.
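A pivot like this can be approximated as a sudden drop in vocabulary overlap between an account's history and its latest posts. The following is a minimal sketch under stated assumptions: it uses bag-of-words cosine similarity, the `threshold` of 0.2 is an arbitrary illustrative value, and the sample posts are invented. A real system would more plausibly compare embeddings and inspect outbound links.

```python
import math
from collections import Counter


def term_counts(posts):
    """Aggregate lowercased word counts across a list of posts."""
    counts = Counter()
    for post in posts:
        counts.update(post.lower().split())
    return counts


def cosine_similarity(a, b):
    """Cosine of the angle between two term-count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def looks_like_pivot(history, recent, threshold=0.2):
    """Flag when recent posts share little vocabulary with the history."""
    similarity = cosine_similarity(term_counts(history), term_counts(recent))
    return similarity < threshold


history = [
    "la beauté est un art",
    "consejos para evitar quemaduras de sol",
    "la séduction est un art",
]
on_topic = ["la beauté est un art de vivre"]
payload = [
    "claim your free defi airdrop now",
    "urgent security update verify your wallet",
]

print(looks_like_pivot(history, on_topic))
print(looks_like_pivot(history, payload))
```

Because the check compares an account against its own past rather than against a global spam signature, it does not depend on the account's age or its accumulated engagement.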

For enterprise security teams, this highlights a critical vulnerability in social-media-based OSINT (Open Source Intelligence). Relying on account age or engagement metrics is no longer a viable way to verify a source. The “Mangofruitloops” method proves that authenticity can be synthesized through calculated randomness.

The 30-Second Verdict

The Mangofruitloops phenomenon is a canary in the coal mine for the era of synthetic sociality. It utilizes multi-lingual LLM capabilities to exploit the gaps in platform moderation, turning “noise” into a strategic asset. For the user, it is a reminder that on the modern web, erraticism is often a sign of a very well-tuned machine.

To stay ahead, developers should look toward advanced bot detection frameworks and research into adversarial machine learning to understand how these models are being gamed. As we move further into 2026, the only defense against the synthetic surge is a move toward cryptographically verified identity and a ruthless skepticism of “viral” content that lacks a verifiable human origin. For more on the systemic risks of AI-generated noise, Ars Technica continues to lead the reporting on platform decay.